Is Apple M1 Good Enough for AI Development?

Introduction

The world of artificial intelligence is on fire! Large Language Models (LLMs) are rapidly changing how we interact with technology, from writing emails to generating code. These AI powerhouses need processing muscle to function, and the question on everyone's lips is: Can your device handle the workload? Today we're diving deep into the Apple M1 chip and its potential to power local LLM development.

Imagine a world where you can run cutting-edge AI models right on your own computer, without relying on cloud services. With the rise of smaller LLMs and the increasing power of local hardware, this dream is becoming a reality.

This article will explore the capabilities of the Apple M1 chip, comparing its performance on various LLM models, and providing insights into whether it can be a reliable tool for your AI development journey. Buckle up, it's going to be a wild ride!

Apple M1 Token Speed Generation: How Fast is It?

The Apple M1 chip boasts impressive performance for its size and energy efficiency. We'll be looking at how it handles LLMs of different sizes and quantization formats. The metric we'll use is tokens per second (tokens/s), which measures how fast the model processes and generates text.
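As a rough illustration of the metric, tokens per second is simply a token count divided by the elapsed time. The helper name and the sample numbers below are hypothetical, chosen only to show the arithmetic:

```python
def tokens_per_second(token_count: int, elapsed_seconds: float) -> float:
    """Return throughput in tokens/s for a run that handled token_count tokens."""
    if elapsed_seconds <= 0:
        raise ValueError("elapsed_seconds must be positive")
    return token_count / elapsed_seconds


# Hypothetical run: 142 tokens produced in 10 seconds.
rate = tokens_per_second(142, 10.0)
print(f"{rate:.2f} tokens/s")  # prints "14.20 tokens/s"
```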

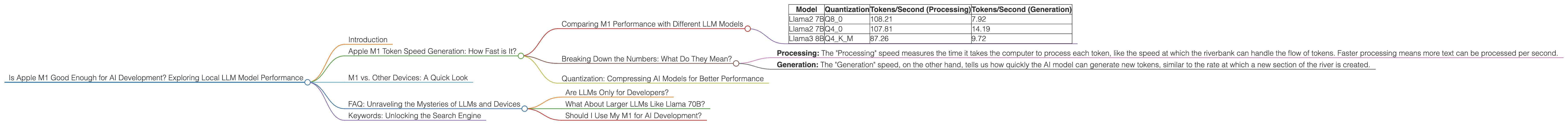

Comparing M1 Performance with Different LLM Models

Let's look at the numbers! We'll be focusing on the performance of the M1 chip for the "Llama2 7B" and "Llama3 8B" models in various quantization formats:

| Model | Quantization | Tokens/Second (Processing) | Tokens/Second (Generation) |
|---|---|---|---|
| Llama2 7B | Q8_0 | 108.21 | 7.92 |
| Llama2 7B | Q4_0 | 107.81 | 14.19 |
| Llama3 8B | Q4_K_M | 87.26 | 9.72 |

Important Note: Data for Llama2 7B and Llama3 8B models was not available in F16 format.

It's clear that the M1 chip is a capable performer for smaller LLMs like Llama2 7B and Llama3 8B. Processing runs at roughly 87 to 108 tokens/s, while generation is much slower at roughly 8 to 14 tokens/s. Generation speed is the number you feel most in interactive use, since it sets how quickly a response appears on screen.
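To put the two Llama2 7B rows side by side, here is a small sketch that computes how much faster Q4_0 is than Q8_0. The figures are copied from the table; the dictionary layout and the `speedup` helper are just illustrative names:

```python
# Llama2 7B figures from the table above (tokens/s on the M1).
LLAMA2_7B = {
    "Q8_0": {"processing": 108.21, "generation": 7.92},
    "Q4_0": {"processing": 107.81, "generation": 14.19},
}


def speedup(faster: float, slower: float) -> float:
    """Return how many times larger the first throughput is than the second."""
    return faster / slower


generation_speedup = speedup(
    LLAMA2_7B["Q4_0"]["generation"], LLAMA2_7B["Q8_0"]["generation"]
)
processing_speedup = speedup(
    LLAMA2_7B["Q4_0"]["processing"], LLAMA2_7B["Q8_0"]["processing"]
)

print(f"Generation, Q4_0 vs Q8_0: {generation_speedup:.2f}x")  # 1.79x
print(f"Processing, Q4_0 vs Q8_0: {processing_speedup:.2f}x")  # 1.00x
```

In other words, moving from Q8_0 to Q4_0 leaves processing speed essentially unchanged but makes generation close to twice as fast.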

Breaking Down the Numbers: What Do They Mean?

Imagine a text stream as a river, flowing along with individual words being the tokens. Our CPU is the riverbank, diligently processing each token as it passes by.

- Processing: The "Processing" speed measures how many tokens of existing text (such as your prompt) the model can read per second, like the rate at which the riverbank can handle the incoming flow. Faster processing means less waiting before the response starts.

- Generation: The "Generation" speed, on the other hand, tells us how quickly the AI model can generate new tokens, similar to the rate at which a new section of the river is created.
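The two speeds can be combined into a back-of-the-envelope response time. The sketch below assumes "Processing" applies to the prompt and "Generation" to the output, and it ignores model load time and other overhead; the function name and the 500/200 token counts are made up for illustration:

```python
def estimate_response_seconds(
    prompt_tokens: int,
    output_tokens: int,
    processing_tps: float,
    generation_tps: float,
) -> float:
    """Estimate seconds to read a prompt and then generate a reply."""
    prompt_time = prompt_tokens / processing_tps    # time to process the input
    generation_time = output_tokens / generation_tps  # time to produce the output
    return prompt_time + generation_time


# Llama2 7B Q4_0 on the M1, using the speeds from the table:
# 107.81 tokens/s processing, 14.19 tokens/s generation.
seconds = estimate_response_seconds(500, 200, 107.81, 14.19)
print(f"Estimated response time: {seconds:.1f} s")
```

With these assumed token counts the estimate comes out a little under 19 seconds, and most of that time is spent on generation rather than on reading the prompt.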

Quantization: Compressing AI Models for Better Performance

Quantization is like compressing an image file: it reduces the size of the AI model without losing too much accuracy, which lets us squeeze more out of our hardware. The Apple M1 chip performs well with quantized models, and the smaller the format, the faster the generation. Llama2 7B generates 14.19 tokens/s in Q4_0 versus 7.92 tokens/s in Q8_0, while processing speed is nearly identical in both formats.

Think of it as a train carrying a load. A smaller train (quantized model) can carry a similar amount of cargo (information) yet needs less track (processing power) to travel.
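To make the idea concrete, here is a minimal sketch of block quantization: a group of weights is stored as small integers plus one shared scale factor. This is a simplified illustration written for this article, not the exact scheme behind formats like Q8_0 or Q4_0, and the function names and sample weights are hypothetical:

```python
def quantize_block(weights: list[float], bits: int = 8) -> tuple[float, list[int]]:
    """Map floats to signed integers that share a single scale factor."""
    qmax = 2 ** (bits - 1) - 1  # 127 for 8 bits, 7 for 4 bits
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    quantized = [round(w / scale) for w in weights]
    return scale, quantized


def dequantize_block(scale: float, quantized: list[int]) -> list[float]:
    """Recover approximate floats from the integers and the shared scale."""
    return [scale * q for q in quantized]


# Hypothetical weights, just to show the round trip.
weights = [0.12, -0.5, 0.33, 0.0]
scale, quantized = quantize_block(weights, bits=8)
restored = dequantize_block(scale, quantized)

print(quantized)
print([round(r, 4) for r in restored])
```

The restored values are close to the originals but not identical, which is the accuracy trade-off described above. Using fewer bits shrinks the stored integers further at the cost of a larger rounding error.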

M1 vs. Other Devices: A Quick Look

While this article focuses on the Apple M1, it's worth noting that other devices offer competitive AI capabilities. The Nvidia GeForce RTX 3090, for example, often delivers superior performance for larger LLM models.

However, the M1 chip shines in its power efficiency and accessibility. Its smaller footprint and versatility make it a compelling choice for developers who want to explore AI development on their personal computers.

FAQ: Unraveling the Mysteries of LLMs and Devices

Are LLMs Only for Developers?

Not at all! LLMs are opening up a whole new world of possibilities for both developers and everyday users. Imagine using AI to write a letter, craft creative content, or even translate languages.

What About Larger LLMs Like Llama 70B?

Massive LLMs are beyond what the M1 can comfortably handle today. Larger models require more processing power and, above all, more memory than the M1 offers: the base M1 tops out at 16 GB of unified memory, which a 70B model exceeds even when quantized. However, as hardware technology advances, we can expect to see even more impressive AI performance on smaller devices.
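A quick estimate shows why memory is the limiting factor. The sketch below assumes about 4.5 bits per weight for Q4_0 and 8.5 bits per weight for Q8_0 (each block of 32 weights carries a 16-bit scale), round parameter counts of 7 and 70 billion, and a 16 GB ceiling for the base M1. It counts weights only, so real usage is higher once context and runtime overhead are added:

```python
M1_MAX_MEMORY_GB = 16  # assumption: largest unified memory option on the base M1

# Assumed approximate bits per weight for each format.
BITS_PER_WEIGHT = {"Q4_0": 4.5, "Q8_0": 8.5}


def model_size_gb(parameters: float, bits_per_weight: float) -> float:
    """Estimate the size of the weights alone, in gigabytes (10^9 bytes)."""
    return parameters * bits_per_weight / 8 / 1e9


for name, parameters in [("7B", 7e9), ("70B", 70e9)]:
    for quant, bits in BITS_PER_WEIGHT.items():
        size = model_size_gb(parameters, bits)
        verdict = "fits" if size < M1_MAX_MEMORY_GB else "does not fit"
        print(f"{name} {quant}: ~{size:.1f} GB ({verdict} in {M1_MAX_MEMORY_GB} GB)")
```

Under these assumptions a 7B model needs roughly 4 GB in Q4_0 and about 7.4 GB in Q8_0, while a 70B model needs around 39 GB even in Q4_0, well beyond 16 GB.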

Should I Use My M1 for AI Development?

The answer depends on your needs. If you're experimenting with smaller LLMs and prioritize accessibility and energy efficiency, the M1 chip is a fantastic choice. However, for massive LLMs demanding extreme processing power, consider more powerful hardware options.
