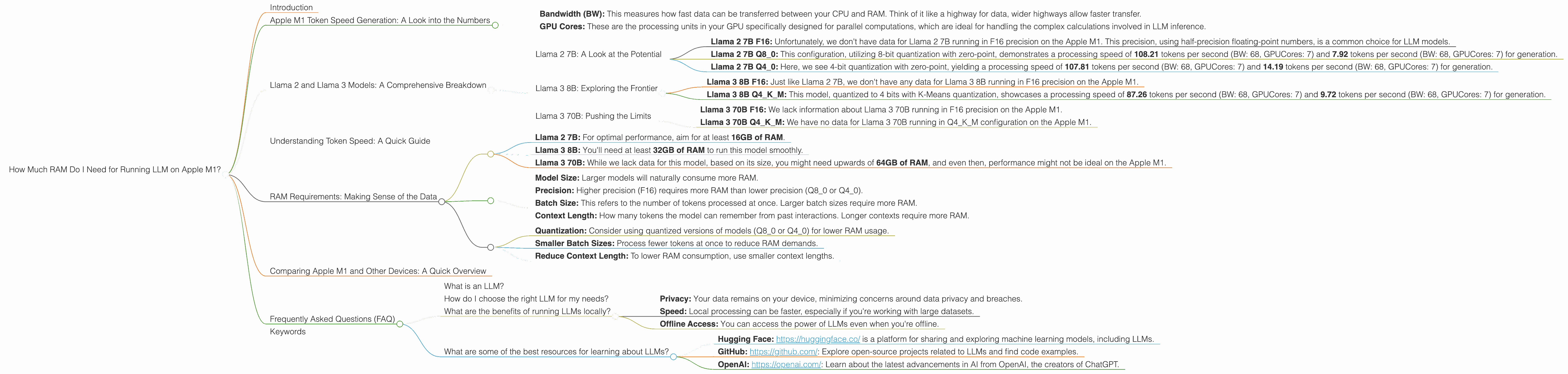

How Much RAM Do I Need to Run LLMs on an Apple M1?

Introduction

You've probably heard buzzwords like "LLMs" and "AI" flying around, but have you ever considered running these models on your own machine? Running LLMs locally opens up a world of possibilities, from generating creative text and translating languages to summarizing documents and even writing code. But before you dive in, you need to understand one crucial constraint: RAM.

RAM, or Random Access Memory, is the short-term working space for your computer. Think of it as the desk where your computer keeps its thoughts and calculations while working. LLMs such as Llama 2 and Llama 3 are huge models, and their weights have to sit in RAM while the model runs. On the Apple M1 this memory is unified, meaning the CPU and GPU share the same pool.

This guide will explore how much RAM you need to run various LLMs on your Apple M1 chip, providing insights and recommendations based on specific models and their configurations. Whether you're a developer or a curious tech enthusiast, this information will empower you to make informed decisions about your LLM setup.

Apple M1 Token Generation Speed: A Look into the Numbers

We'll dive into the fascinating world of token speeds and how they relate to RAM usage when running LLMs on your Apple M1. For this exploration, we'll focus on two key factors:

- Bandwidth (BW): This measures how fast data can be moved between memory and the processor, in GB/s. Think of it like a highway for data: wider highways allow faster transfer.

- GPU Cores: These are the processing units in your GPU specifically designed for parallel computations, which are ideal for handling the complex calculations involved in LLM inference.

Our dataset, sourced from "Performance of llama.cpp on various devices" by ggerganov and "GPU Benchmarks on LLM Inference" by XiongjieDai, showcases the performance of different LLM models on Apple M1 devices. We'll break down the numbers and explain their implications for your RAM requirements.

Llama 2 and Llama 3 Models: A Comprehensive Breakdown

Llama 2 7B: A Look at the Potential

- Llama 2 7B F16: Unfortunately, we don't have data for Llama 2 7B running in F16 precision on the Apple M1. This precision stores each weight as a 16-bit (half-precision) floating-point number and is a common baseline for LLMs.

- Llama 2 7B Q8_0: This configuration uses llama.cpp's 8-bit block quantization (the _0 suffix denotes a scale-only format with no offset). It reaches 108.21 tokens per second for prompt processing and 7.92 tokens per second for generation (BW: 68, GPUCores: 7).

- Llama 2 7B Q4_0: Here, the same scale-only block scheme is applied at 4 bits. It reaches 107.81 tokens per second for prompt processing and 14.19 tokens per second for generation (BW: 68, GPUCores: 7).
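
The gap between the Q8_0 and Q4_0 generation speeds above is roughly what memory bandwidth alone would predict. Generating one token requires reading approximately all of the weights once, so tokens per second cannot exceed bandwidth divided by model size. The Python sketch below estimates that ceiling. It assumes about 6.74 billion parameters for Llama 2 7B and llama.cpp block layouts of 8.5 and 4.5 bits per weight for Q8_0 and Q4_0. None of these figures come from the benchmark data, and real model files mix a few tensor types, so treat the results as approximate.

```python
# Rough upper bound on generation speed from memory bandwidth alone.
# Assumptions (not from the benchmark data):
#   - Llama 2 7B has ~6.74e9 parameters
#   - Q8_0 ~ 8.5 bits/weight, Q4_0 ~ 4.5 bits/weight
#     (blocks of 32 weights plus one 16-bit scale per block)

BANDWIDTH_GB_S = 68   # "BW: 68" from the dataset, read as GB/s
PARAMS = 6.74e9       # assumed parameter count


def weight_bytes(params: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in bytes."""
    return params * bits_per_weight / 8


def bandwidth_ceiling(params: float, bits_per_weight: float, bandwidth_gb_s: float) -> float:
    """Tokens/s if each generated token reads every weight exactly once."""
    return bandwidth_gb_s * 1e9 / weight_bytes(params, bits_per_weight)


# format name -> (assumed bits per weight, measured generation tokens/s)
measured = {"Q8_0": (8.5, 7.92), "Q4_0": (4.5, 14.19)}

for name, (bpw, observed) in measured.items():
    ceiling = bandwidth_ceiling(PARAMS, bpw, BANDWIDTH_GB_S)
    print(f"{name}: ceiling ~{ceiling:.1f} tok/s, measured {observed} tok/s")
```

Under these assumptions the ceilings come out near 9.5 and 17.9 tokens per second, so both measured generation speeds sit a little below their bandwidth limit.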

Llama 3 8B: Exploring the Frontier

- Llama 3 8B F16: Just like Llama 2 7B, we don't have any data for Llama 3 8B running in F16 precision on the Apple M1.

- Llama 3 8B Q4_K_M: This model uses llama.cpp's 4-bit "k-quant" scheme in its medium (M) variant. It reaches 87.26 tokens per second for prompt processing and 9.72 tokens per second for generation (BW: 68, GPUCores: 7).

Llama 3 70B: Pushing the Limits

- Llama 3 70B F16: We lack information about Llama 3 70B running in F16 precision on the Apple M1.

- Llama 3 70B Q4_K_M: We have no data for Llama 3 70B running in the Q4_K_M configuration on the Apple M1.

Understanding Token Speed: A Quick Guide

A language model does not read text letter by letter. It splits text into tokens, which are whole words or pieces of words, and token speed measures how many tokens it handles per second. The benchmarks report two such speeds: processing (how fast the model reads your prompt) and generation (how fast it writes its reply). Higher token speeds mean faster responses and smoother interactions with your LLM.
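
To make these speeds concrete, the sketch below turns them into an approximate response time. It uses the Llama 2 7B Q4_0 figures from the dataset. The prompt and reply lengths (512 and 256 tokens) are hypothetical values chosen for illustration, and the function name is our own.

```python
# Approximate wall-clock time for one request, from the two token speeds.
# The speeds are the Llama 2 7B Q4_0 figures from the dataset;
# the token counts are hypothetical example values.

def response_time_s(prompt_tokens: int, output_tokens: int,
                    processing_tps: float, generation_tps: float) -> float:
    """Seconds to read the prompt plus seconds to generate the reply."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


PROCESSING_TPS = 107.81   # tokens/s while reading the prompt
GENERATION_TPS = 14.19    # tokens/s while writing the reply

total = response_time_s(512, 256, PROCESSING_TPS, GENERATION_TPS)
print(f"Estimated time for a 512-token prompt and 256-token reply: {total:.1f} s")
```

With these example lengths the estimate is a little under 23 seconds, and most of it is spent on generation rather than on reading the prompt.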

RAM Requirements: Making Sense of the Data

Understanding the Trade-Off: Quantization, which stores model weights at lower precision, shrinks the model and speeds up generation. For Llama 2 7B, generation rises from 7.92 tokens per second with Q8_0 to 14.19 with Q4_0, while the processing speed is almost unchanged (108.21 versus 107.81). We have no F16 figures to compare against. Even a quantized model such as Llama 3 8B Q4_K_M must still fit entirely in RAM alongside the operating system and your other applications.
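
A back-of-the-envelope way to see the effect of quantization on size is to multiply the parameter count by the bits stored per weight. The sketch below does this for the models in this guide. The parameter counts and bits-per-weight values are assumptions rather than benchmark data. In particular, the Q4_K_M figure is an approximate average, because that format mixes several tensor types.

```python
# Approximate weight size = parameters * bits per weight / 8.
# All values below are assumptions for illustration, not measured data.

GIB = 1024 ** 3

# Assumed parameter counts.
MODELS = {"Llama 2 7B": 6.74e9, "Llama 3 8B": 8.03e9, "Llama 3 70B": 70.6e9}

# Assumed bits per weight; Q4_K_M is an approximate average.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_K_M": 4.85, "Q4_0": 4.5}


def weight_gib(params: float, bits_per_weight: float) -> float:
    """Approximate size of the weights in GiB."""
    return params * bits_per_weight / 8 / GIB


for model, params in MODELS.items():
    sizes = ", ".join(
        f"{fmt} ~{weight_gib(params, bpw):.1f} GiB"
        for fmt, bpw in BITS_PER_WEIGHT.items()
    )
    print(f"{model}: {sizes}")
```

Under these assumptions the 7B and 8B models shrink from well over 12 GiB at F16 to under 5 GiB at 4 bits, while Llama 3 70B stays at roughly 40 GiB even at Q4_K_M. These totals cover the weights only. Context and runtime buffers come on top.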

Important Note: We don't have data for Llama 3 70B models on the Apple M1, making it difficult to estimate RAM requirements. However, given its size, it's safe to assume that running this model would require considerably more resources than the smaller ones.

General RAM Recommendations:

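- Llama 2 7B: For optimal performance, aim for 16GB of RAM. The Q4_0 weights alone are roughly 4GB and the Q8_0 weights roughly 7GB, before the context and the operating system are counted.

- Llama 3 8B: The base M1 in this dataset is sold with 8GB or 16GB, and 16GB is the configuration to aim for. The Q4_K_M weights are roughly 5GB, which leaves little headroom on an 8GB machine.

- Llama 3 70B: While we lack data for this model, its weights are roughly 40GB even at 4 bits, which exceeds the 16GB maximum of the base M1. You would need a machine with 64GB or more, and performance might still not be ideal.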

Factors Affecting RAM Usage:

- Model Size: Larger models will naturally consume more RAM.

- Precision: Higher precision (F16) requires more RAM than lower precision (Q8_0 or Q4_0).

- Batch Size: This refers to the number of tokens processed at once. Larger batch sizes require more RAM.

- Context Length: This is how many tokens of prompt and conversation the model can attend to at once. The model keeps a cache entry for every token in the context, so longer contexts require more RAM.
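
The context length factor can be estimated directly, because the cache of keys and values (the KV cache) grows linearly with the number of tokens. The sketch below is a minimal estimate assuming 16-bit cache entries. The layer and head counts are taken from the published model configurations and are assumptions here, not part of the benchmark data.

```python
# KV cache size = 2 (keys and values) * layers * KV heads * head dim
#                 * context length * bytes per value.
# Architecture numbers are assumptions taken from published model configs.

GIB = 1024 ** 3


def kv_cache_bytes(n_layers: int, n_kv_heads: int, head_dim: int,
                   n_ctx: int, bytes_per_value: int = 2) -> int:
    """Bytes needed to cache keys and values for n_ctx tokens (16-bit by default)."""
    return 2 * n_layers * n_kv_heads * head_dim * n_ctx * bytes_per_value


# model name -> (layers, KV heads, head dimension), all assumed
ARCH = {
    "Llama 2 7B": (32, 32, 128),
    "Llama 3 8B": (32, 8, 128),   # grouped-query attention: fewer KV heads
}

for model, (layers, kv_heads, head_dim) in ARCH.items():
    for n_ctx in (2048, 4096, 8192):
        size = kv_cache_bytes(layers, kv_heads, head_dim, n_ctx) / GIB
        print(f"{model}, context {n_ctx}: ~{size:.2f} GiB")
```

Doubling the context doubles the cache. Under these assumptions Llama 2 7B needs about 2 GiB of cache at a 4096-token context, while Llama 3 8B needs a quarter of that because it stores fewer key and value heads.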

Tips for RAM Optimization:

- Quantization: Consider using quantized versions of models (Q8_0 or Q4_0) for lower RAM usage.

- Smaller Batch Sizes: Process fewer tokens at once to reduce RAM demands.

- Reduce Context Length: To lower RAM consumption, use smaller context lengths.
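
The tips above interact: a lower-precision format or a shorter context can each be what makes a model fit. The sketch below picks the highest-precision format of Llama 2 7B that fits a given amount of RAM. Everything in it is a simplifying assumption: the parameter count, the bits per weight, the 4 GiB reserved for the operating system and other applications, and the choice to ignore runtime buffers that depend on batch size. The function name `first_fit` is hypothetical.

```python
# Pick the highest-precision format whose weights plus KV cache fit in RAM.
# All constants are illustrative assumptions, not measured values.

GIB = 1024 ** 3

PARAMS = 6.74e9                              # assumed Llama 2 7B parameter count
KV_BYTES_PER_TOKEN = 2 * 32 * 32 * 128 * 2   # keys+values, 32 layers, 32 heads, dim 128, 16-bit

# Candidate formats, highest precision first: (name, assumed bits per weight).
FORMATS = [("F16", 16.0), ("Q8_0", 8.5), ("Q4_0", 4.5)]


def first_fit(ram_gib: float, n_ctx: int, reserve_gib: float = 4.0):
    """Return the first format that fits, or None if none does."""
    budget = (ram_gib - reserve_gib) * GIB   # RAM left after the assumed reserve
    for name, bits_per_weight in FORMATS:
        weights = PARAMS * bits_per_weight / 8
        kv_cache = n_ctx * KV_BYTES_PER_TOKEN
        if weights + kv_cache <= budget:
            return name
    return None


for ram_gib, n_ctx in [(16, 2048), (8, 2048), (8, 512)]:
    print(f"{ram_gib} GiB RAM, context {n_ctx}: {first_fit(ram_gib, n_ctx)}")
```

With these assumptions a 16 GiB machine can hold Q8_0 at a 2048-token context, while an 8 GiB machine fits nothing at that context and only fits Q4_0 once the context is cut to 512 tokens. A different reserve value would shift these thresholds.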

Comparing Apple M1 and Other Devices: A Quick Overview

While this article focuses on the Apple M1, other M-series chips (such as the M1 Pro, M1 Max, and M2) differ in memory bandwidth, GPU core count, and maximum RAM, so their performance and RAM requirements will vary. It's always a good idea to check the specifications of your specific device and the data sources mentioned previously for the most up-to-date information.

Frequently Asked Questions (FAQ)

What is an LLM?

An LLM, or Large Language Model, is a type of artificial intelligence that excels at understanding and generating human-like text. Think of it as a super-powered language translator and writer, capable of creating compelling stories, translating languages, summarizing documents, and more.

How do I choose the right LLM for my needs?

Consider factors like the size and complexity of the task, the desired level of detail, and the availability of resources. Smaller LLMs, like Llama 2 7B, can be ideal for basic tasks, while larger models like Llama 3 70B might be better suited for more complex challenges, but require significantly more RAM.

What are the benefits of running LLMs locally?

Running LLMs locally provides a number of benefits, including:

- Privacy: Your data remains on your device, minimizing concerns around data privacy and breaches.

- Speed: Local processing avoids network round trips and rate limits, although generation speed is bounded by your own hardware.

- Offline Access: You can access the power of LLMs even when you're offline.

What are some of the best resources for learning about LLMs?

- Hugging Face (https://huggingface.co/): A platform for sharing and exploring machine learning models, including LLMs.

- GitHub (https://github.com/): Explore open-source projects related to LLMs and find code examples.

- OpenAI (https://openai.com/): Learn about the latest advancements in AI from OpenAI, the creators of ChatGPT.

Keywords

LLM, Apple M1, RAM, Llama 2 7B, Llama 3 8B, Llama 3 70B, Token Speed, GPU Cores, Quantization, F16, Q8_0, Q4_0, Q4_K_M, Bandwidth, Inference, Generation, Processing, Local Models, Device Comparison, Performance Analysis