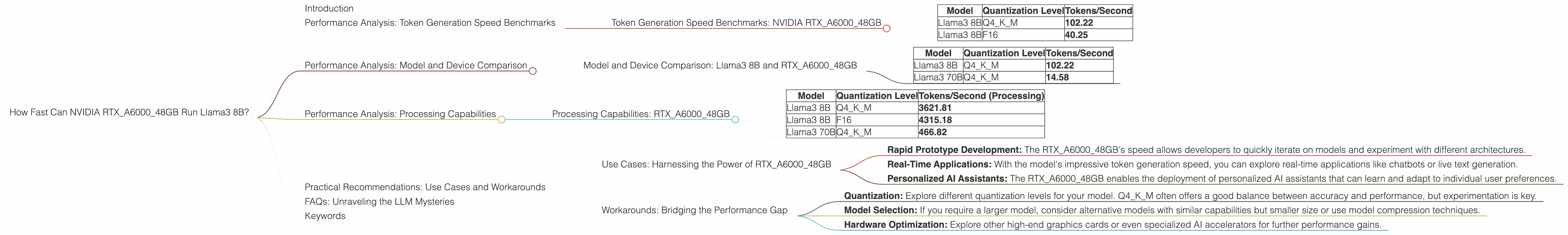

How Fast Can NVIDIA RTX A6000 48GB Run Llama3 8B?

Introduction

Large Language Models (LLMs) are evolving rapidly and have diverse applications, from generating creative content to answering questions. Running these models locally, however, requires powerful hardware.

This article takes a deep dive into the performance of the NVIDIA RTX A6000 48GB graphics card when running the Llama3 8B model. We'll explore its token generation speed and processing capability, and discuss the impact of quantization levels, providing insights for developers looking to optimize their local LLM setup.

Performance Analysis: Token Generation Speed Benchmarks

Token generation speed is a crucial metric for LLM performance. It essentially measures how quickly your device can generate text, which directly impacts user experience.
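To make the metric concrete, here is a minimal sketch of how tokens per second could be measured for any streaming generator. The `fake_generate` function is a hypothetical stand-in for a real model, and the injectable `clock` parameter is an assumption added for testability; neither comes from the benchmarks in this article.

```python
import time
from typing import Callable, Iterable


def tokens_per_second(
    generate: Callable[[], Iterable[str]],
    clock: Callable[[], float] = time.perf_counter,
) -> float:
    """Return tokens produced per second of elapsed time for one run."""
    start = clock()
    count = sum(1 for _ in generate())  # consume the stream, counting tokens
    elapsed = clock() - start
    if elapsed <= 0:
        raise ValueError("elapsed time must be positive")
    return count / elapsed


def fake_generate() -> Iterable[str]:
    """Hypothetical stand-in for a real model's streaming output."""
    for i in range(50):
        time.sleep(0.001)  # pretend each token takes about 1 ms
        yield f"tok{i}"


if __name__ == "__main__":
    print(f"{tokens_per_second(fake_generate):.1f} tokens/second")
```

In practice you would pass a function that streams tokens from your own runtime in place of `fake_generate`, and average over several runs.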

Token Generation Speed Benchmarks: NVIDIA RTX A6000 48GB

The following table highlights the token generation speed of the NVIDIA RTX A6000 48GB for different configurations of the Llama3 8B model:

| Model | Quantization Level | Tokens/Second |
|---|---|---|
| Llama3 8B | Q4_K_M | 102.22 |
| Llama3 8B | F16 | 40.25 |

Key Observations:

- The RTX A6000 48GB generates over 100 tokens per second with Llama3 8B at Q4_K_M, and about 40 tokens per second at F16.

- Q4_K_M generates tokens roughly 2.5x faster than F16 (102.22 vs. 40.25 tokens/second).
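The arithmetic behind these observations can be sketched in a few lines. The throughput values are copied from the table above; the 500-token reply length is an arbitrary assumption chosen only for illustration.

```python
# Generation throughput from the table above (tokens/second).
GENERATION_TPS = {"Q4_K_M": 102.22, "F16": 40.25}


def speedup(fast_tps: float, slow_tps: float) -> float:
    """How many times faster the first configuration generates tokens."""
    return fast_tps / slow_tps


def seconds_to_generate(n_tokens: int, tps: float) -> float:
    """Time to generate n_tokens at a steady throughput."""
    return n_tokens / tps


if __name__ == "__main__":
    ratio = speedup(GENERATION_TPS["Q4_K_M"], GENERATION_TPS["F16"])
    print(f"Q4_K_M vs F16 speedup: {ratio:.2f}x")
    for name, tps in GENERATION_TPS.items():
        # 500 tokens is an arbitrary example reply length.
        print(f"{name}: {seconds_to_generate(500, tps):.1f} s for 500 tokens")
```

For a reply of that length, the difference is roughly 5 seconds versus roughly 12 seconds, which is easy to notice in an interactive setting.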

Performance Analysis: Model and Device Comparison

Model and Device Comparison: Llama3 8B and RTX A6000 48GB

To put the Llama3 8B numbers in context, let's compare them with the larger Llama3 70B model running on the same RTX A6000 48GB.

| Model | Quantization Level | Tokens/Second |
|---|---|---|
| Llama3 8B | Q4_K_M | 102.22 |
| Llama3 70B | Q4_K_M | 14.58 |

Key Observations:

- The RTX A6000 48GB shows a large gap between the two models: at Q4_K_M, Llama3 8B generates about 7x more tokens per second than Llama3 70B (102.22 vs. 14.58).

- This highlights a crucial consideration: the trade-off between model size and performance. While larger models offer greater capabilities, they come at the cost of reduced speed.
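As a rough illustration of this trade-off, the sketch below compares how much larger the 70B model is with how much slower it runs. The parameter counts are the nominal figures from the model names (an approximation, not exact counts), and the throughput values come from the table above.

```python
# Nominal parameter counts in billions, taken from the model names (approximate).
PARAMS_B = {"Llama3 8B": 8.0, "Llama3 70B": 70.0}
# Q4_K_M generation throughput from the table above (tokens/second).
TPS = {"Llama3 8B": 102.22, "Llama3 70B": 14.58}


def ms_per_token(tps: float) -> float:
    """Average time spent on each generated token, in milliseconds."""
    return 1000.0 / tps


size_ratio = PARAMS_B["Llama3 70B"] / PARAMS_B["Llama3 8B"]
slowdown = TPS["Llama3 8B"] / TPS["Llama3 70B"]

if __name__ == "__main__":
    print(f"70B has {size_ratio:.2f}x the parameters of 8B")
    print(f"70B generates tokens {slowdown:.2f}x slower than 8B")
    for name, tps in TPS.items():
        print(f"{name}: {ms_per_token(tps):.1f} ms per token")
```

On these numbers the slowdown is somewhat smaller than the growth in parameter count, so generation speed does not fall strictly in proportion to model size.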

Performance Analysis: Processing Capabilities

Processing Capabilities: RTX A6000 48GB

Beyond token generation speed, it's essential to consider the processing capability of the device. In benchmarks like these, it is typically reported as prompt processing speed: how quickly the device works through the input tokens before it starts generating a response.

| Model | Quantization Level | Tokens/Second (Processing) |
|---|---|---|
| Llama3 8B | Q4_K_M | 3621.81 |
| Llama3 8B | F16 | 4315.18 |
| Llama3 70B | Q4_K_M | 466.82 |

Key Observations:

- The RTX A6000 48GB demonstrates excellent processing capability, reaching thousands of tokens per second with Llama3 8B.

- F16 processes tokens about 19% faster than Q4_K_M (4315.18 vs. 3621.81 tokens/second), the reverse of the generation results. This suggests that lower precision does not speed up processing the way it speeds up generation, possibly because quantized weights must be converted back to higher precision before computation.

- Llama3 70B processes tokens roughly 7.8x slower than Llama3 8B at Q4_K_M (466.82 vs. 3621.81 tokens/second), again showing the impact of model size.
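To see how the two metrics combine, here is a minimal sketch that estimates end-to-end response time from the tables in this article. It assumes that "processing" means prompt processing, that the two phases simply add up, and that there is no other overhead; the 1000-token prompt and 200-token reply are arbitrary example sizes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Throughput:
    prompt_tps: float      # processing speed, tokens/second
    generation_tps: float  # generation speed, tokens/second


# Values copied from the tables in this article.
BENCHMARKS = {
    ("Llama3 8B", "Q4_K_M"): Throughput(3621.81, 102.22),
    ("Llama3 8B", "F16"): Throughput(4315.18, 40.25),
    ("Llama3 70B", "Q4_K_M"): Throughput(466.82, 14.58),
}


def response_seconds(t: Throughput, prompt_tokens: int, output_tokens: int) -> float:
    """Estimated time to read the prompt and then generate the reply."""
    return prompt_tokens / t.prompt_tps + output_tokens / t.generation_tps


if __name__ == "__main__":
    for (model, quant), t in BENCHMARKS.items():
        total = response_seconds(t, prompt_tokens=1000, output_tokens=200)
        print(f"{model} {quant}: {total:.2f} s")
```

Under these assumptions generation dominates the total for all three configurations, so the faster processing of F16 does not make up for its slower generation.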

Practical Recommendations: Use Cases and Workarounds

Use Cases: Harnessing the Power of RTX A6000 48GB

The RTX A6000 48GB provides a compelling setup for local LLM development and deployment. Here are some potential use cases:

- Rapid Prototype Development: The RTX A6000 48GB's speed allows developers to quickly iterate on models and experiment with different architectures.

- Real-Time Applications: With the model's impressive token generation speed, you can explore real-time applications like chatbots or live text generation.

- Personalized AI Assistants: The RTX A6000 48GB enables the deployment of personalized AI assistants that can learn and adapt to individual user preferences.

Workarounds: Bridging the Performance Gap

While the RTX A6000 48GB delivers impressive performance, it's important to acknowledge that larger models can still push the limits. Here are some workarounds to consider:

- Quantization: Explore different quantization levels for your model. Q4_K_M often offers a good balance between accuracy and performance, but experimentation is key.

- Model Selection: If you require a larger model, consider alternative models with similar capabilities but smaller size or use model compression techniques.

- Hardware Optimization: Explore other high-end graphics cards or even specialized AI accelerators for further performance gains.
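When weighing these options, a quick back-of-the-envelope check is whether a model's weights fit in the card's 48 GB at a given quantization level. The sketch below makes several assumptions that are not from the benchmarks: F16 uses 16 bits per weight, Q4_K_M averages roughly 4.85 bits per weight (an approximate figure), parameter counts are the nominal 8 and 70 billion, and `headroom_gb` is a hypothetical allowance for the context cache and runtime overhead.

```python
VRAM_GB = 48.0  # memory capacity of the RTX A6000 48GB

# Approximate bits stored per weight; the Q4_K_M figure is an assumption.
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.85}


def weight_size_gb(params_billions: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in GB (10^9 bytes)."""
    return params_billions * bits_per_weight / 8


def fits_in_vram(
    params_billions: float,
    quant: str,
    vram_gb: float = VRAM_GB,
    headroom_gb: float = 2.0,  # hypothetical allowance, not a measured value
) -> bool:
    """True if the weights plus headroom fit in GPU memory."""
    size = weight_size_gb(params_billions, BITS_PER_WEIGHT[quant])
    return size + headroom_gb <= vram_gb


if __name__ == "__main__":
    for params in (8.0, 70.0):
        for quant in BITS_PER_WEIGHT:
            size = weight_size_gb(params, BITS_PER_WEIGHT[quant])
            verdict = "fits" if fits_in_vram(params, quant) else "does not fit"
            print(f"{params:.0f}B {quant}: ~{size:.1f} GB, {verdict}")
```

Treat the result as a first filter only: real memory use depends on context length, batch size, and the runtime you use.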

FAQs: Unraveling the LLM Mysteries

Q1: What exactly is quantization?

A: Quantization is like downsizing an image for a smaller file size. In the world of LLMs, it reduces the precision of numbers used to represent the model's weights. This can make the model smaller and faster, but also slightly less accurate.
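To illustrate the idea, here is a deliberately simplified sketch of symmetric 4-bit quantization with a single shared scale. It is not the scheme used by real formats such as Q4_K_M, which are more elaborate; the weights are made-up example values.

```python
from typing import List, Tuple


def quantize_4bit(weights: List[float]) -> Tuple[List[int], float]:
    """Map floats to integer codes in [-7, 7] using one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    codes = [max(-7, min(7, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes: List[int], scale: float) -> List[float]:
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]


if __name__ == "__main__":
    weights = [0.12, -0.53, 0.98, -0.10, 0.31]  # made-up example values
    codes, scale = quantize_4bit(weights)
    restored = dequantize(codes, scale)
    print("codes:   ", codes)
    print("restored:", [round(r, 2) for r in restored])
    print("max error:", round(max(abs(w - r) for w, r in zip(weights, restored)), 3))
```

Each weight is stored as a small integer instead of a 16-bit float, and the rounding error in the restored values is the accuracy you give up in exchange.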

Q2: How does model size affect performance?

A: Think of a model as a recipe. Larger models are like complex recipes with many ingredients and instructions. They can make more sophisticated dishes, but they take longer to prepare. Smaller models are like simpler recipes, quicker to make but might not have the same gourmet appeal.

Q3: Why are some LLM configurations missing data?

A: The data we used is based on publicly available benchmarks conducted by different researchers. Not all model/device combinations have been tested or released.

Keywords

NVIDIA RTX A6000 48GB, Llama3 8B, LLM, large language model, token generation speed, processing capability, quantization, model size, performance, use cases, workarounds, AI, deep learning, GPU, graphics card, development, deployment, real-time, chatbots, AI assistants, optimization, hardware, benchmark, comparison, FAQs.