How Fast Can NVIDIA RTX A6000 48GB Run Llama3 70B?

Introduction

The world of large language models (LLMs) is buzzing with excitement, and for good reason. These AI marvels can generate human-quality text, translate languages, write different kinds of creative content, and answer your questions in an informative way. But with increasing model sizes (we are talking billions of parameters here!), having the right hardware is crucial for running these models locally.

Today, we're going to take a deep dive into the NVIDIA RTX A6000 48GB graphics card, a popular choice for AI development, and see how it performs while running the Llama3 70B model. We'll analyze the token generation speed, compare different quantization methods, and explore practical recommendations for optimizing your setup.

So grab your coffee (or your favorite energy drink), and let's get started!

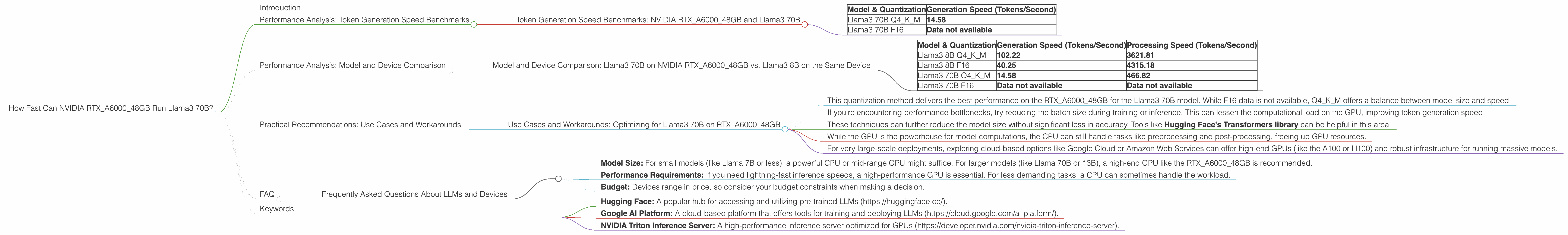

Performance Analysis: Token Generation Speed Benchmarks

Token Generation Speed Benchmarks: NVIDIA RTX A6000 48GB and Llama3 70B

Token generation speed is a critical metric for evaluating LLM performance. It measures how many tokens a model can produce per second, which directly determines how quickly text appears. Let's delve into the numbers:

| Model & Quantization | Generation Speed (Tokens/Second) |
|---|---|
| Llama3 70B Q4_K_M | 14.58 |
| Llama3 70B F16 | Data not available |

What does this mean?

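The NVIDIA RTX A6000 48GB generates 14.58 tokens per second when running the Llama3 70B model with Q4_K_M quantization. This is a fairly decent speed for a model of this size on a single GPU. Note that F16 is unquantized half precision rather than a quantization method, and no F16 result is available for Llama3 70B on this GPU. That is most likely because 70 billion parameters at 2 bytes each need about 140 GB for the weights alone, far more than the card's 48 GB.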
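
A quick back-of-the-envelope check shows why an F16 run is unlikely on this card. The sketch below estimates the memory needed for the weights alone. The nominal 70 billion parameter count and the average of about 4.8 bits per weight for Q4_K_M are assumptions made for illustration, and the KV cache and activations are ignored.

```python
def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate memory for the model weights alone, in GB (10^9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


VRAM_GB = 48        # RTX A6000 48GB
N_PARAMS = 70e9     # nominal parameter count (assumption)

# 16 bits per weight for F16; ~4.8 bits per weight for Q4_K_M is an assumed average.
for label, bits in [("F16", 16.0), ("Q4_K_M", 4.8)]:
    size = weight_memory_gb(N_PARAMS, bits)
    verdict = "fits" if size < VRAM_GB else "does not fit"
    print(f"Llama3 70B {label}: ~{size:.0f} GB of weights, {verdict} in {VRAM_GB} GB")
```

Under these assumptions the quantized weights leave only a few gigabytes of headroom, so long contexts may still run into memory limits.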

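Let's put this in perspective: Imagine a human typing 60 words per minute, or 1 word per second. A token is usually a bit shorter than a word, so 14.58 tokens per second works out to somewhat fewer than 14 words per second. Even so, the RTX A6000 48GB running Llama3 70B outpaces a human typist by roughly an order of magnitude!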
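
The comparison can be made a little more concrete with a few lines of arithmetic. The figure of 0.75 words per token is a common rule of thumb for English text and is an assumption here; the real ratio depends on the tokenizer and the text.

```python
TOKENS_PER_SECOND = 14.58   # Llama3 70B Q4_K_M, from the table above
WORDS_PER_TOKEN = 0.75      # assumption: rough rule of thumb for English text
TYPIST_WPM = 60             # the human typist from the comparison


def words_per_minute(tokens_per_second: float, words_per_token: float) -> float:
    """Convert a token rate into an approximate words-per-minute rate."""
    return tokens_per_second * words_per_token * 60


model_wpm = words_per_minute(TOKENS_PER_SECOND, WORDS_PER_TOKEN)
speedup = model_wpm / TYPIST_WPM
print(f"Model: ~{model_wpm:.0f} words/minute, ~{speedup:.1f}x the typist")
```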

Performance Analysis: Model and Device Comparison

Model and Device Comparison: Llama3 70B on NVIDIA RTX A6000 48GB vs. Llama3 8B on the Same Device

Let's compare the Llama3 70B performance with the Llama3 8B performance on the RTX A6000 48GB.

| Model & Quantization | Generation Speed (Tokens/Second) | Prompt Processing Speed (Tokens/Second) |
|---|---|---|
| Llama3 8B Q4_K_M | 102.22 | 3621.81 |
| Llama3 8B F16 | 40.25 | 4315.18 |
| Llama3 70B Q4_K_M | 14.58 | 466.82 |
| Llama3 70B F16 | Data not available | Data not available |

What do these numbers tell us?

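As expected, the smaller Llama3 8B model runs significantly faster than the Llama3 70B model on the RTX A6000 48GB: about 7 times faster at generation with Q4_K_M. This is due to the far larger number of parameters in the 70B model. Every generated token requires a pass over all of the model's weights, so a model with roughly nine times as many parameters has far more data to read and compute on for each token. It's like comparing a small car to a fully loaded truck: the smaller vehicle is nimbler and faster!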

It's also interesting to note that the gap is similar on both metrics: with Q4_K_M, the 8B model is about 7 times faster at generation and nearly 8 times faster at processing. For both models, processing is far faster than generation. This is likely because the GPU can work through the tokens of a prompt in parallel, whereas generation produces one token at a time.
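
The ratios behind this comparison are easy to reproduce from the table. The snippet below is a small sketch that uses only the Q4_K_M rows; the dictionary layout and function name are illustrative.

```python
# Tokens/second from the table above: (generation, processing)
speeds = {
    "Llama3 8B Q4_K_M": (102.22, 3621.81),
    "Llama3 70B Q4_K_M": (14.58, 466.82),
}


def ratio(a: float, b: float) -> float:
    """How many times larger a is than b."""
    return a / b


gen_8b, proc_8b = speeds["Llama3 8B Q4_K_M"]
gen_70b, proc_70b = speeds["Llama3 70B Q4_K_M"]

gen_gap = ratio(gen_8b, gen_70b)        # 8B vs 70B, generation
proc_gap = ratio(proc_8b, proc_70b)     # 8B vs 70B, processing
proc_vs_gen_70b = ratio(proc_70b, gen_70b)

print(f"8B vs 70B generation:  {gen_gap:.2f}x")
print(f"8B vs 70B processing:  {proc_gap:.2f}x")
print(f"70B processing vs generation: {proc_vs_gen_70b:.1f}x")
```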

Practical Recommendations: Use Cases and Workarounds

Use Cases and Workarounds: Optimizing for Llama3 70B on RTX A6000 48GB

Now that we've analyzed the performance data, let's discuss how to make the most of the RTX A6000 48GB when running Llama3 70B:

1. Prioritize Q4_K_M Quantization: This is the only configuration with measured results for Llama3 70B on the RTX A6000 48GB, and 4-bit weights are what make a 70B model small enough to fit in 48 GB of VRAM in the first place. While F16 data is not available, Q4_K_M offers a balance between model size and speed.

2. Consider Smaller Batch Sizes: If you're running into memory limits or performance bottlenecks, try reducing the batch size during inference. This lessens the memory and computational load on the GPU, which can help when the card is operating close to its limits.

3. Explore Model Pruning and Quantization: These techniques can further reduce the model size without significant loss in accuracy. Tools like Hugging Face's Transformers library can be helpful in this area.

4. Leverage CPU Resources: While the GPU is the powerhouse for model computations, the CPU can still handle tasks like preprocessing and post-processing, freeing up GPU resources.

5. Consider Cloud-Based Solutions: For very large-scale deployments, cloud platforms like Google Cloud or Amazon Web Services offer high-end GPUs (like the A100 or H100) and robust infrastructure for running massive models.
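
To judge whether the measured speeds suit a given use case, it can help to turn them into a rough response time. The sketch below adds the time to process the prompt to the time to generate the answer. The 1,000-token prompt and 300-token answer are a hypothetical workload, and the estimate ignores model loading and any slowdown at long context lengths.

```python
def response_time_seconds(prompt_tokens: int, output_tokens: int,
                          processing_tps: float, generation_tps: float) -> float:
    """Rough end-to-end time: read the prompt, then generate the answer."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


# Hypothetical workload (assumption, not from the benchmarks)
PROMPT_TOKENS = 1000
OUTPUT_TOKENS = 300

# Speeds taken from the Q4_K_M rows of the comparison table
t_70b = response_time_seconds(PROMPT_TOKENS, OUTPUT_TOKENS, 466.82, 14.58)
t_8b = response_time_seconds(PROMPT_TOKENS, OUTPUT_TOKENS, 3621.81, 102.22)

print(f"Llama3 70B Q4_K_M: ~{t_70b:.1f} s")
print(f"Llama3 8B Q4_K_M:  ~{t_8b:.1f} s")
```

In this scenario generation time dominates for both models, so the output length matters far more than the prompt length.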

FAQ

Frequently Asked Questions About LLMs and Devices

Q: What is quantization?

A: Quantization is a technique used to reduce the size of LLM models by representing their weights with fewer bits (e.g., 4-bit quantization). This allows for faster inference and smaller memory footprint, especially on devices with limited memory, like mobile phones or embedded systems. Think of it like compressing a file – you reduce the size without losing too much quality.
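
As a minimal illustration of the idea, the sketch below rounds a handful of made-up weights to 4-bit integers with a single shared scale and then restores them. This is a deliberately simplified scheme and not how Q4_K_M works internally; real formats are more elaborate, for example storing separate scales for small blocks of weights.

```python
def quantize_4bit(weights):
    """Symmetric 4-bit quantization: integers in [-7, 7] plus one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    quantized = [round(w / scale) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Map the stored integers back to approximate float weights."""
    return [v * scale for v in quantized]


weights = [0.12, -0.53, 0.98, -0.05, 0.31]   # made-up example values
quantized, scale = quantize_4bit(weights)
restored = dequantize(quantized, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("quantized:", quantized)
print("restored: ", [round(r, 2) for r in restored])
print("max error:", round(max_error, 3))
```

Each weight now needs 4 bits instead of 16, at the cost of a small rounding error that is bounded by half the scale.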

Q: How do I choose the right device for my LLM application?

A: The best device depends on your specific requirements:

- Model Size: For small models (like Llama 7B or smaller), a powerful CPU or mid-range GPU might suffice. For larger models (like Llama 13B or 70B), a high-end GPU like the RTX A6000 48GB is recommended.

- Performance Requirements: If you need lightning-fast inference speeds, a high-performance GPU is essential. For less demanding tasks, a CPU can sometimes handle the workload.

- Budget: Devices range in price, so consider your budget constraints when making a decision.

Q: What are the differences between Llama 7B and Llama 70B?

A: The main difference is the number of parameters. Llama 7B has 7 billion parameters, while Llama 70B has 70 billion parameters. This means Llama 70B is significantly more complex and capable but also requires more computational resources to run. Think of it like comparing a basic smartphone to a powerful laptop – the laptop can do more but demands more resources.

Q: Where can I learn more about using LLMs locally?

A: There are plenty of resources available:

- Hugging Face: A popular hub for accessing and utilizing pre-trained LLMs (https://huggingface.co/).

- Google AI Platform: A cloud-based platform that offers tools for training and deploying LLMs (https://cloud.google.com/ai-platform/).

- NVIDIA Triton Inference Server: A high-performance inference server optimized for GPUs (https://developer.nvidia.com/nvidia-triton-inference-server).

Keywords

NVIDIA RTX A6000 48GB, Llama3 70B, Llama3 8B, Token Generation Speed, Quantization, Q4_K_M, F16, Performance Benchmarks, Local LLM, GPU, Inference, Deep Dive, Practical Recommendations, Use Cases, Workarounds, LLM Training, Hugging Face, Google AI Platform, NVIDIA Triton Inference Server