How Fast Can NVIDIA RTX 6000 Ada 48GB Run Llama 3 8B?

Introduction

The realm of large language models (LLMs) is buzzing with excitement. These powerful AI systems, trained on massive datasets, can generate text, translate languages, write different kinds of creative content, and answer your questions informatively, often in a human-like way. But harnessing this power locally, on your own machine, requires capable hardware.

Enter the NVIDIA RTX 6000 Ada 48GB, a beast of a graphics card. In this article, we'll dive deep into how it handles the Llama 3 8B model: its token generation speed, how that speed changes across quantization formats and model sizes, and the use cases where this setup fits best. Let's see whether this GPU can unleash the potential of this versatile LLM.

Performance Analysis: Token Generation Speed

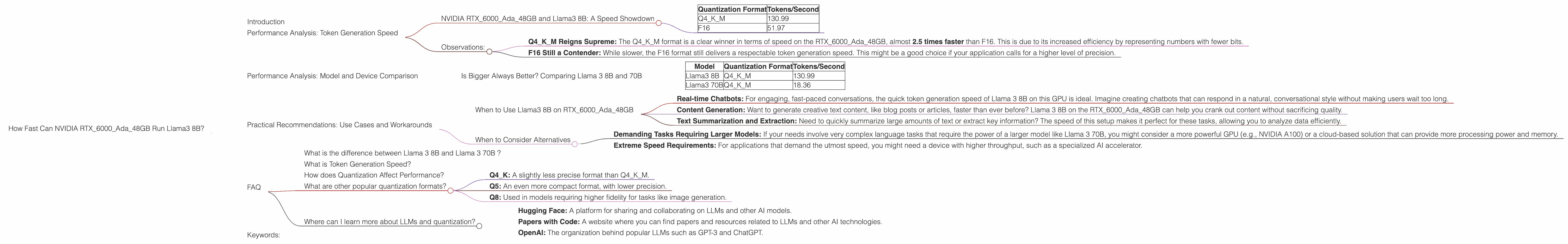

NVIDIA RTX 6000 Ada 48GB and Llama 3 8B: A Speed Showdown

A key measure of a device's prowess with an LLM is how fast it can generate tokens. Tokens are the building blocks of text: whole words, pieces of words, and punctuation marks. The faster the token generation speed, the sooner responses appear, making your interactions with the model smoother and more responsive. We'll analyze the performance of the RTX 6000 Ada 48GB on the Llama 3 8B model in two popular formats: Q4_K_M, a 4-bit quantization, and F16, the unquantized 16-bit floating-point weights.

Quantization reduces the size of a model by storing its numbers (the weights) with less precision, leading to smaller files and often faster generation. Think of it as a way to compress the model without degrading its output quality too much.
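To make the idea concrete, here is a minimal Python sketch of block-wise 4-bit quantization. It assumes simple symmetric "absmax" scaling: weights are split into blocks of 32, each block gets one scale stored as a 16-bit float, and each weight becomes a small integer. This is roughly the idea behind llama.cpp's simpler Q4_0 format; Q4_K_M itself uses a more elaborate layout, and the random numbers below are stand-ins rather than real Llama 3 weights.

```python
import random

BLOCK_SIZE = 32  # weights per block (assumed, Q4_0-style)

def quantize_block(block):
    """Map a block of floats to signed 4-bit integers plus one per-block scale."""
    amax = max(abs(x) for x in block)
    # Scale so the largest magnitude maps to 7; values land in [-7, 7].
    scale = amax / 7 if amax > 0 else 1.0
    # Clamp to the signed 4-bit range [-8, 7] for safety.
    q = [max(-8, min(7, round(x / scale))) for x in block]
    return scale, q

def dequantize_block(scale, q):
    """Recover approximate floats from the integers and the block scale."""
    return [scale * v for v in q]

def quantize(weights):
    return [quantize_block(weights[i:i + BLOCK_SIZE])
            for i in range(0, len(weights), BLOCK_SIZE)]

def dequantize(blocks):
    out = []
    for scale, q in blocks:
        out.extend(dequantize_block(scale, q))
    return out

random.seed(0)
weights = [random.gauss(0.0, 0.02) for _ in range(4096)]  # stand-in weights
blocks = quantize(weights)
restored = dequantize(blocks)

# Storage: 4 bits per weight plus one 16-bit scale shared by each block.
bits_per_weight = 4 + 16 / BLOCK_SIZE
compression = 16 / bits_per_weight  # relative to F16
max_err = max(abs(a - b) for a, b in zip(weights, restored))
print(f"{bits_per_weight} bits/weight, {compression:.2f}x smaller than F16, "
      f"max error {max_err:.5f}")
```

With one 16-bit scale per 32 weights, storage works out to 4.5 bits per weight, about 3.6 times smaller than F16, while each weight's rounding error stays within half of its block's scale.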

Here's what we found:

| Quantization Format | Generation Speed (tokens/s) |
|---|---|
| Q4_K_M | 130.99 |
| F16 | 51.97 |

Remember: These numbers are tokens generated per second. The higher the number, the better!

Observations:

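- Q4_K_M Reigns Supreme: Q4_K_M is the clear winner in speed on the RTX 6000 Ada 48GB, about 2.5 times faster than F16 (130.99 vs. 51.97 tokens/second). Generating each token requires reading essentially all of the model's weights from GPU memory, so storing them in roughly 4 to 5 bits instead of 16 means far less data to move per token.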

- F16 Still a Contender: While slower, the F16 format still delivers a respectable token generation speed. This might be a good choice if your application calls for a higher level of precision.
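Why do fewer bits translate into more tokens per second? When a single user generates text, producing each token requires reading essentially every weight from GPU memory, so memory bandwidth sets a ceiling on speed. The back-of-the-envelope Python sketch below divides bandwidth by the size of the weights. The inputs are assumptions, not part of the benchmark: roughly 8.03 billion parameters for Llama 3 8B, the RTX 6000 Ada's published 960 GB/s of memory bandwidth, and an approximate 4.9-bit average for Q4_K_M. It ignores KV-cache reads and compute, so treat the ceilings as rough upper bounds.

```python
# Assumed inputs (not from the benchmark above):
PARAMS = 8.03e9          # approximate Llama 3 8B parameter count
BANDWIDTH_GB_S = 960.0   # RTX 6000 Ada memory bandwidth, GB/s (spec figure)
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.9}  # Q4_K_M value is an approximate average

# Measured generation speeds from the table above, in tokens/s.
MEASURED = {"Q4_K_M": 130.99, "F16": 51.97}

def weight_bytes(params, bits):
    """Total size of the weights in bytes."""
    return params * bits / 8

def bandwidth_ceiling(params, bits, bandwidth_gb_s):
    """Upper bound on tokens/s if every weight is read once per generated token."""
    return bandwidth_gb_s * 1e9 / weight_bytes(params, bits)

for fmt, bits in BITS_PER_WEIGHT.items():
    ceiling = bandwidth_ceiling(PARAMS, bits, BANDWIDTH_GB_S)
    size_gb = weight_bytes(PARAMS, bits) / 1e9
    print(f"{fmt}: {size_gb:.1f} GB of weights, ceiling ~{ceiling:.0f} tok/s, "
          f"measured {MEASURED[fmt]} tok/s ({MEASURED[fmt] / ceiling:.0%} of ceiling)")

print(f"measured speedup: {MEASURED['Q4_K_M'] / MEASURED['F16']:.2f}x")
```

Under these assumptions, both measured speeds sit below their ceilings, and the F16 result lands much closer to its limit, which is consistent with generation being bandwidth-bound.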

Performance Analysis: Model Comparison

Is Bigger Always Better? Comparing Llama 3 8B and 70B

You might be tempted to think that a larger model like Llama 3 70B would be the ultimate choice. After all, bigger models have more parameters (the learned weights that encode what the model picked up during training) and are generally more capable on complex tasks. But size isn't the only factor.

Let's compare the performance of Llama 3 8B and 70B on the RTX 6000 Ada 48GB to see what we find:

| Model | Quantization Format | Generation Speed (tokens/s) |
|---|---|---|
| Llama 3 8B | Q4_K_M | 130.99 |
| Llama 3 70B | Q4_K_M | 18.36 |

Observations:

- Size Matters, but Speed Matters More: While Llama 3 70B can handle more sophisticated tasks, on the RTX 6000 Ada 48GB Llama 3 8B generates tokens about 7 times faster (130.99 vs. 18.36 tokens/second).

- The Q4_K_M Advantage: Both results use Q4_K_M. There is no F16 figure for the 70B model: its F16 weights alone would take roughly 140 GB, far more than the card's 48 GB, so aggressive quantization such as Q4_K_M is what lets it run on this single GPU at all.

Think of it this way: Imagine you're a chef preparing a meal. A smaller, more efficient recipe might get you a delicious dish faster than a gargantuan recipe that requires a lot of time and effort.
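Per-token latency makes the gap easier to feel. This Python sketch converts the measured speeds from the table into milliseconds per token and the time to stream a reply; the 300-token reply length is a hypothetical example, and the estimate ignores the time spent processing the prompt.

```python
# Measured generation speeds from the table above, in tokens/s.
MEASURED_TOK_S = {"Llama 3 8B (Q4_K_M)": 130.99, "Llama 3 70B (Q4_K_M)": 18.36}
RESPONSE_TOKENS = 300  # hypothetical reply length

def generation_time(tokens, tok_per_s):
    """Seconds to generate `tokens` at a steady decode speed (prompt processing ignored)."""
    return tokens / tok_per_s

for name, speed in MEASURED_TOK_S.items():
    ms_per_token = 1000 / speed
    seconds = generation_time(RESPONSE_TOKENS, speed)
    print(f"{name}: {ms_per_token:.1f} ms/token, {seconds:.1f} s for {RESPONSE_TOKENS} tokens")

ratio = MEASURED_TOK_S["Llama 3 8B (Q4_K_M)"] / MEASURED_TOK_S["Llama 3 70B (Q4_K_M)"]
print(f"8B generates {ratio:.1f}x faster")
```

At these rates, a 300-token reply takes a little over 2 seconds with the 8B model and around 16 seconds with the 70B model.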

Practical Recommendations: Use Cases and Workarounds

When to Use Llama 3 8B on the RTX 6000 Ada 48GB

With its speed and efficiency, the RTX 6000 Ada 48GB is a great match for the Llama 3 8B model in several situations:

- Real-time Chatbots: For engaging, fast-paced conversations, the quick token generation speed of Llama 3 8B on this GPU is ideal. Imagine creating chatbots that can respond in a natural, conversational style without making users wait too long.

- Content Generation: Want to draft creative text content, like blog posts or articles, quickly? Llama 3 8B on the RTX 6000 Ada 48GB can produce drafts fast, though output quality depends on the model and your prompts, not on the GPU.

- Text Summarization and Extraction: Need to summarize large amounts of text or extract key information? The fast generation speed suits these tasks, although with long inputs the time spent processing the prompt, which these benchmarks do not measure, also matters.

When to Consider Alternatives

While the RTX 6000 Ada 48GB is a powerful choice for Llama 3 8B, there are situations where you might want to explore other options:

- Demanding Tasks Requiring Larger Models: If your work needs a larger model like Llama 3 70B running faster than the 18.36 tokens/second measured above, or at higher precision than 48 GB allows, consider a GPU with more memory and bandwidth (e.g., NVIDIA A100 80GB), multiple GPUs, or a cloud-based solution.

- Extreme Speed Requirements: For applications that demand the utmost speed, you might need a device with higher throughput, such as a specialized AI accelerator.

FAQ

What is the difference between Llama 3 8B and Llama 3 70B?

The key difference lies in the size and complexity of these models. Llama 3 8B has 8 billion parameters, while Llama 3 70B has a whopping 70 billion parameters. Larger models generally have more capacity to learn complex patterns and perform more sophisticated tasks. However, they are also more computationally demanding and require more powerful hardware.
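Parameter count also decides whether a model fits in the card's 48 GB at all. This rough Python sketch estimates weight memory as parameters × bits per weight ÷ 8. The parameter counts (about 8.03 and 70.6 billion) and the average bits per weight for each format are approximations, and real deployments need extra headroom for the KV cache and runtime buffers.

```python
VRAM_GB = 48.0
# Approximate parameter counts (assumed).
PARAMS = {"Llama 3 8B": 8.03e9, "Llama 3 70B": 70.6e9}
# Approximate average bits per weight (assumed; Q4_K_M varies slightly by model).
BITS = {"F16": 16.0, "Q8_0": 8.5, "Q4_K_M": 4.9}

def weights_gb(params, bits):
    """Weight memory in GB, excluding KV cache and runtime buffers."""
    return params * bits / 8 / 1e9

for model, n in PARAMS.items():
    for fmt, bits in BITS.items():
        size = weights_gb(n, bits)
        verdict = "fits" if size < VRAM_GB else "does not fit"
        print(f"{model} {fmt}: ~{size:.0f} GB of weights, {verdict} in {VRAM_GB:.0f} GB")
```

Under these assumptions, Llama 3 8B fits comfortably in every listed format, while Llama 3 70B fits only at around 4 bits, with just a few gigabytes to spare.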

What is Token Generation Speed?

Token generation speed refers to how quickly a device can generate tokens, which are the building blocks of text. Think of it as how fast the model can "type" words and punctuation marks.
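Measuring it is simple in principle: count the tokens a model emits and divide by the elapsed time. The Python sketch below does exactly that; `fake_generate` is a hypothetical stand-in that yields one token every 2 ms, so you would swap in your own runtime's streaming call. Dedicated benchmark tools usually report prompt processing and token generation separately, which this simple timer does not.

```python
import time
from typing import Callable, Iterable

def measure_tokens_per_second(generate: Callable[[], Iterable[str]]) -> float:
    """Count the tokens yielded by `generate` and divide by wall-clock time."""
    start = time.perf_counter()
    count = sum(1 for _ in generate())
    elapsed = time.perf_counter() - start
    return count / elapsed

def fake_generate(n_tokens: int = 50, delay_s: float = 0.002):
    """Hypothetical stand-in for a model's streaming output: one token per `delay_s` seconds."""
    for i in range(n_tokens):
        time.sleep(delay_s)
        yield f"tok{i}"

speed = measure_tokens_per_second(fake_generate)
print(f"{speed:.0f} tokens/s")
```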

How does Quantization Affect Performance?

Quantization is a technique used to make models smaller and faster by representing numbers with less precision. Think of it as using fewer shades of gray to represent an image, making the file smaller. Lower precision usually brings a smaller model and faster generation at the cost of some accuracy; the loss is often slight, but it grows as precision drops.
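The grayscale analogy can be made literal. This Python sketch snaps every 8-bit gray level (0 to 255) to the nearest of 2^bits evenly spaced shades and reports the worst-case error; it illustrates uniform quantization in general, not how any particular LLM format stores weights.

```python
def quantize_gray(value: int, bits: int) -> int:
    """Snap an 8-bit intensity (0-255) to the nearest of 2**bits evenly spaced levels."""
    levels = 2 ** bits - 1      # number of steps between black and white
    step = 255 / levels         # distance between adjacent shades
    return round(round(value / step) * step)

gradient = list(range(256))  # every 8-bit shade of gray
for bits in (8, 4, 2, 1):
    snapped = [quantize_gray(v, bits) for v in gradient]
    shades = len(set(snapped))
    worst = max(abs(a - b) for a, b in zip(gradient, snapped))
    print(f"{bits} bits: {shades} shades, worst error {worst}")
```

Dropping from 8 bits to 4 keeps 16 shades with a worst-case error of 8 levels out of 255; at 1 bit only black and white remain.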

What are other popular quantization formats?

Other common formats include:

- Q4_K_S: A slightly smaller, slightly less precise variant of Q4_K_M.

- Q5 formats (e.g., Q5_K_M): 5-bit formats that are larger than Q4_K_M but more precise.

- Q8 formats (e.g., Q8_0): 8-bit formats whose output is very close to F16 at roughly half the size.

Where can I learn more about LLMs and quantization?

- Hugging Face: A platform for sharing and collaborating on LLMs and other AI models.

- Papers with Code: A website where you can find papers and resources related to LLMs and other AI technologies.

- OpenAI: The organization behind popular LLMs such as GPT-3 and ChatGPT.
