How Fast Can NVIDIA RTX 6000 Ada 48GB Run Llama3 70B?

Introduction

The world of large language models (LLMs) is buzzing with excitement, and for good reason. These powerful AI systems can generate text, translate languages, write different kinds of creative content, and answer your questions in an informative way. But running these massive models locally on a single machine can be challenging: the bigger the model, the more memory and computational power it requires.

This article dives deep into the performance of the NVIDIA RTX6000Ada_48GB GPU with the Llama3 70B model. We'll analyze how fast it generates tokens, compare it with the smaller Llama3 8B model on the same GPU, and explore practical use cases. Buckle up, this is going to be a geeky journey!

Performance Analysis: Token Generation Speed Benchmarks

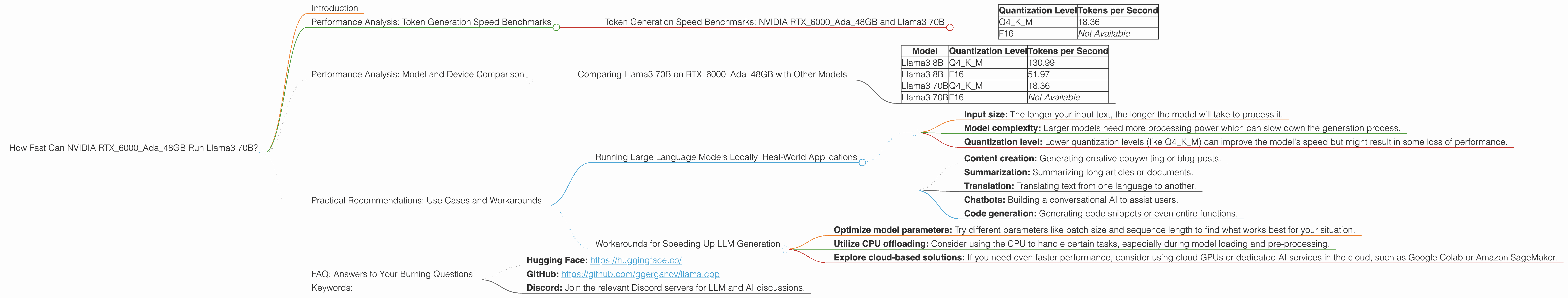

Token Generation Speed Benchmarks: NVIDIA RTX6000Ada_48GB and Llama3 70B

Let's kick things off with the main event: how fast the Llama3 70B model generates tokens on an RTX6000Ada_48GB GPU. The table below shows the token generation speed for each quantization level:

| Quantization Level | Tokens per Second |
|---|---|
| Q4_K_M | 18.36 |
| F16 | Not available |

As you can see, the Llama3 70B model generates 18.36 tokens per second when quantized to Q4_K_M, llama.cpp's 4-bit "k-quant" format in its medium variant (which keeps a few sensitive tensors at higher precision). No benchmark result is available for F16 (unquantized 16-bit floating point), which is unsurprising: at 2 bytes per parameter, the 70B weights alone take roughly 140 GB, far more than the card's 48 GB of VRAM.
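
A quick way to see why F16 is missing is to estimate the size of the weights as parameters times bits per weight. The sketch below uses approximate figures that are assumptions, not benchmark data: about 70.6 billion parameters for Llama3 70B and about 4.8 bits per weight for Q4_K_M once block scales are included. It ignores the KV cache and runtime buffers, which also need some of the remaining VRAM.

```python
# Approximate weight footprint = parameters x bits per weight / 8.
# Assumptions (approximations, not from the benchmark):
#   - Llama3 70B has ~70.6e9 parameters
#   - F16 stores 16 bits per weight; Q4_K_M averages ~4.8 bits per weight
PARAMS_70B = 70.6e9
VRAM_GB = 48

BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.8}


def weights_gb(params: float, bits_per_weight: float) -> float:
    """Size of the model weights in gigabytes (10**9 bytes)."""
    return params * bits_per_weight / 8 / 1e9


for fmt, bpw in BITS_PER_WEIGHT.items():
    size = weights_gb(PARAMS_70B, bpw)
    # Only the weights are compared; KV cache and buffers are ignored here.
    verdict = "fits" if size < VRAM_GB else "does not fit"
    print(f"{fmt}: ~{size:.0f} GB of weights, {verdict} in {VRAM_GB} GB of VRAM")
```

The F16 weights come out at roughly three times the card's memory, while the Q4_K_M weights fit with a few gigabytes to spare.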

What does this mean?

This means that the RTX6000Ada_48GB GPU generates about 18 tokens every second for the Llama3 70B model quantized to Q4_K_M. That is faster than most people read, so interactive chat feels responsive, although long outputs still take a noticeable amount of time.

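Think of it this way: a 1,000-word blog post comes to roughly 1,300 tokens (about 1.3 tokens per word is a common rule of thumb for English text). At 18.36 tokens per second, generating it would take about 71 seconds (1,300 tokens / 18.36 tokens per second), not counting the time spent processing the prompt.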
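
The same back-of-the-envelope estimate as a small Python function. The 1.3 tokens-per-word ratio is an assumed rule of thumb rather than a property of the Llama3 tokenizer, and prompt processing time is deliberately left out.

```python
def generation_time_seconds(words: int, tokens_per_second: float,
                            tokens_per_word: float = 1.3) -> float:
    """Estimate how long it takes to generate `words` words of output.

    tokens_per_word=1.3 is an assumed rule of thumb for English text;
    time spent processing the prompt is not included.
    """
    tokens = words * tokens_per_word
    return tokens / tokens_per_second


# Measured speeds on the RTX6000Ada_48GB from the benchmarks in this article.
for label, tps in [("Llama3 70B Q4_K_M", 18.36), ("Llama3 8B Q4_K_M", 130.99)]:
    print(f"{label}: ~{generation_time_seconds(1000, tps):.0f} s for 1,000 words")
```

Swapping in a different `tokens_per_word` value lets you check how sensitive the estimate is to the text you actually generate (code, for example, usually tokenizes differently from prose).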

Performance Analysis: Model and Device Comparison

Comparing Llama3 70B on RTX6000Ada_48GB with Other Models

Let's compare the performance of Llama3 70B on the RTX6000Ada_48GB with other Llama3 models and quantization levels:

| Model | Quantization Level | Tokens per Second |
|---|---|---|
| Llama3 8B | Q4_K_M | 130.99 |
| Llama3 8B | F16 | 51.97 |
| Llama3 70B | Q4_K_M | 18.36 |
| Llama3 70B | F16 | Not available |

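As you can see, the smaller Llama3 8B model is much faster on the same GPU: at Q4_K_M it generates 130.99 tokens per second, about 7 times the rate of the 70B model. This is expected. Single-stream token generation is largely limited by memory bandwidth, because producing each token requires reading every weight from VRAM, so a model with roughly one-ninth of the parameters runs several times faster. The same effect explains why the 8B model is about 2.5 times faster at Q4_K_M than at F16.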
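
The memory-bandwidth argument can be turned into a rough upper bound: tokens per second cannot exceed memory bandwidth divided by the bytes of weights read per token. The sketch below assumes the RTX 6000 Ada's 960 GB/s memory bandwidth and approximate parameter counts and bits per weight; it ignores KV-cache reads and kernel overheads, so measured speeds should land below the bound.

```python
# Rough roofline for decode speed: each generated token streams all weights
# from VRAM once, so tokens/s <= bandwidth / weight bytes.
# Assumptions: 960 GB/s bandwidth; parameter counts and bits per weight are
# approximations; KV-cache traffic and overheads are ignored.
BANDWIDTH_GBPS = 960.0


def max_tokens_per_second(params: float, bits_per_weight: float,
                          bandwidth_gbps: float = BANDWIDTH_GBPS) -> float:
    """Upper bound on single-stream tokens/s for a bandwidth-bound decoder."""
    weight_gb = params * bits_per_weight / 8 / 1e9
    return bandwidth_gbps / weight_gb


# (label, approx. parameters, approx. bits per weight, measured tokens/s)
CASES = [
    ("Llama3 8B Q4_K_M", 8.0e9, 4.8, 130.99),
    ("Llama3 8B F16", 8.0e9, 16.0, 51.97),
    ("Llama3 70B Q4_K_M", 70.6e9, 4.8, 18.36),
]

for name, params, bpw, measured in CASES:
    bound = max_tokens_per_second(params, bpw)
    print(f"{name}: bound ~{bound:.0f} tok/s, measured {measured} tok/s "
          f"({measured / bound:.0%} of bound)")
```

All three measured results sit below their estimated bounds, which is consistent with generation being bandwidth-limited rather than compute-limited on this card.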

Practical Recommendations: Use Cases and Workarounds

Running Large Language Models Locally: Real-World Applications

While the RTX6000Ada_48GB GPU can handle the Llama3 70B model, keep in mind that the performance can be impacted by factors like:

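- Input size: The longer your input text, the longer the model takes to process it before producing the first token, and every token in the context adds to the key-value (KV) cache stored in VRAM.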

- Model size: Larger models have more weights to read and compute with for every token, so they generate text more slowly.

- Quantization level: Lower-precision formats (like Q4_K_M) shrink the model and speed up generation, but can cost some output quality compared with F16.
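
Input size affects memory as well as speed: every token in the context is kept in the key-value (KV) cache on the GPU, and that cache grows linearly with context length. A rough sketch, assuming an F16 cache and the Llama3 70B shape from its published configuration (80 layers, 8 key-value heads with grouped-query attention, head dimension 128):

```python
# KV-cache size = 2 (keys and values) x layers x KV heads x head dim
#                 x bytes per element x tokens in the context.
# Defaults assume the Llama3 70B configuration and an F16 (2-byte) cache.
def kv_cache_bytes(tokens: int, layers: int = 80, kv_heads: int = 8,
                   head_dim: int = 128, bytes_per_elem: int = 2) -> int:
    return 2 * layers * kv_heads * head_dim * bytes_per_elem * tokens


for ctx in (2048, 8192):
    print(f"{ctx:>5} tokens: {kv_cache_bytes(ctx) / 2**30:.2f} GiB")
```

That is 320 KiB per token, or 2.5 GiB for an 8,192-token context, which has to fit in VRAM alongside the quantized weights.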

The RTX6000Ada_48GB GPU is a powerful piece of hardware, but it's still important to choose the right LLM and quantization level based on your specific needs.

Use Cases:

- Content creation: Generating creative copywriting or blog posts.

- Summarization: Summarizing long articles or documents.

- Translation: Translating text from one language to another.

- Chatbots: Building a conversational AI to assist users.

- Code generation: Generating code snippets or even entire functions.

Workarounds for Speeding Up LLM Generation

If you find the generation speed of the Llama3 70B model on the RTX6000Ada_48GB to be too slow for your needs, here are a few workarounds:

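- Tune runtime settings: Try different values for settings like context length and batch size to find what works best for your situation. A shorter context reduces the memory used by the KV cache, while batch size mainly affects how quickly the prompt is processed.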

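- Use CPU offloading with care: Keeping some of the model's layers in system RAM lets you run models or quantization levels that exceed 48 GB of VRAM, but it slows generation rather than speeding it up, because the offloaded layers are limited by the much lower bandwidth of system memory.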

- Explore cloud-based solutions: If you need even faster performance, consider using cloud GPUs or dedicated AI services in the cloud, such as Google Colab or Amazon SageMaker.
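
Partial offloading in tools like llama.cpp works at the level of transformer layers: some layers stay in VRAM and the rest run from system RAM. The helper below, `gpu_layers_that_fit`, is hypothetical (not part of any library) and gives only a rough estimate. It assumes weights are spread evenly across layers, ignores the embedding and output layers, and uses illustrative numbers: a 70B model at about 8.5 bits per weight (roughly the density of llama.cpp's Q8_0 format), 80 layers, and 6 GB of VRAM held back for the KV cache and buffers.

```python
import math


def gpu_layers_that_fit(params: float, bits_per_weight: float, n_layers: int,
                        vram_gb: float, reserve_gb: float) -> int:
    """Rough count of layers whose weights fit in VRAM after a fixed reserve.

    Hypothetical helper: treats weights as split evenly across layers and
    ignores embeddings and the output head.
    """
    total_gb = params * bits_per_weight / 8 / 1e9
    per_layer_gb = total_gb / n_layers
    usable_gb = max(vram_gb - reserve_gb, 0.0)
    return min(n_layers, math.floor(usable_gb / per_layer_gb))


# Illustrative example: ~70.6e9 parameters at ~8.5 bits per weight,
# 80 layers, 48 GB of VRAM with 6 GB reserved.
layers = gpu_layers_that_fit(70.6e9, 8.5, 80, vram_gb=48, reserve_gb=6)
print(f"~{layers} of 80 layers on the GPU; the rest run from system RAM")
```

In llama.cpp, a count like this is the kind of value you would pass to the `-ngl` (`--n-gpu-layers`) option; in practice you would adjust it based on what actually loads without running out of memory.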

FAQ: Answers to Your Burning Questions

Q: What is quantization?

A: Quantization is a technique for shrinking a large language model by storing its weights at lower precision: instead of 16-bit floating-point values, each weight becomes a small integer (for example, 4 bits) plus a scale factor shared by a block of weights. Because generating each token means reading every weight from GPU memory, smaller weights reduce memory traffic, which speeds up inference, and they also let bigger models fit into VRAM. Think of it like compressing a photo to reduce its file size, but in this case, it's a giant AI model.
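
To make the idea concrete, here is a simplified symmetric 4-bit block quantizer. It is not llama.cpp's actual Q4_K_M scheme (which uses super-blocks and mixes precisions across tensors); it only illustrates the core trick of storing small integers plus one scale per block of 32 weights.

```python
import random

BLOCK_SIZE = 32  # weights that share one scale factor


def quantize_block(weights: list[float]) -> tuple[float, list[int]]:
    """Map a block of floats to 4-bit integers in [-8, 7] with one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    q = [max(-8, min(7, round(w / scale))) for w in weights]
    return scale, q


def dequantize_block(scale: float, q: list[int]) -> list[float]:
    """Recover approximate floats from the integers and the block scale."""
    return [scale * v for v in q]


def quantize(weights: list[float]) -> list[tuple[float, list[int]]]:
    """Split the weights into blocks and quantize each one independently."""
    return [quantize_block(weights[i:i + BLOCK_SIZE])
            for i in range(0, len(weights), BLOCK_SIZE)]


if __name__ == "__main__":
    random.seed(0)
    w = [random.gauss(0.0, 0.02) for _ in range(64)]
    blocks = quantize(w)
    restored = [x for s, q in blocks for x in dequantize_block(s, q)]
    max_err = max(abs(a - b) for a, b in zip(w, restored))
    print(f"{len(blocks)} blocks, max reconstruction error {max_err:.5f}")
```

With a 16-bit scale stored for every 32 four-bit values, this layout costs 4.5 bits per weight, which is why practical "4-bit" formats end up a little above 4 bits per weight.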

Q: Is the RTX6000Ada_48GB suitable for running other LLMs?

A: Yes, the RTX6000Ada_48GB can handle a variety of LLMs, depending on their size and complexity. For smaller models like Llama2 7B, you can expect much faster performance.

Q: What about other GPUs?

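A: Data-center GPUs like the A100 and H100 offer considerably more memory bandwidth (and, in their 80GB versions, more VRAM), which generally means faster token generation for large language models. However, keep in mind that these GPUs are much more expensive and are built for servers, so most people access them through cloud services.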

Q: Where can I learn more about LLM performance and benchmarks?

A: There are great online resources for deep dives into LLM performance and benchmarking. Check out these communities:

- Hugging Face: https://huggingface.co/

- GitHub: https://github.com/ggerganov/llama.cpp

- Discord: Join the relevant Discord servers for LLM and AI discussions.
