How Fast Can NVIDIA RTX 5000 Ada 32GB Run Llama3 8B?

Introduction: Unleashing the Power of Local LLMs

The world of Large Language Models (LLMs) is exploding, and with it, the need for powerful hardware to run these models locally is growing rapidly. We're not just talking about chatbots anymore. LLMs are being used to power creative writing assistants, translate languages, and even generate code. Imagine having the power of these advanced AI models right on your desktop, ready to unleash their capabilities at your command.

In this deep dive, we'll explore the performance of the NVIDIA RTX 5000 Ada 32GB, a powerhouse workstation GPU, when running the Llama3 8B model. We'll look at two ideas that drive LLM performance, token generation speed and quantization, and explain them in terms that non-technical readers can follow.

Performance Analysis: Token Generation Speed Benchmarks

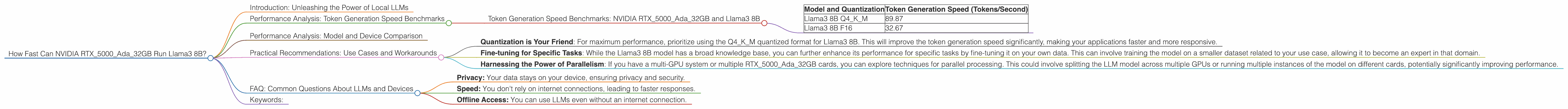

Token Generation Speed Benchmarks: NVIDIA RTX 5000 Ada 32GB and Llama3 8B

The speed at which an LLM can generate tokens (the building blocks of text) is critical for a smooth and responsive user experience. Let's look at the data we have and see how the RTX 5000 Ada 32GB performs with Llama3 8B:

| Model and Quantization | Token Generation Speed (Tokens/Second) |
|---|---|
| Llama3 8B Q4_K_M | 89.87 |
| Llama3 8B F16 | 32.67 |

Breaking Down the Results:

- Q4_K_M: This refers to quantization, a technique that significantly reduces the memory footprint of an LLM without sacrificing too much accuracy. Imagine compressing a large image file; quantization is a bit like that for LLMs. Q4_K_M is one of the "k-quant" formats used by llama.cpp and GGUF model files: most weights are stored in roughly 4 bits each, grouped into small blocks that share scaling factors, and the "M" marks the medium-sized variant of the 4-bit family.

- F16: This represents a half-precision floating-point format, which uses 16 bits to represent each number. It's a common way to store numbers efficiently, but it sacrifices some precision compared to full precision (32 bits).
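To make the idea of 4-bit quantization concrete, here is a minimal sketch in Python. It is an illustration only and not the actual Q4_K_M layout, which is more elaborate; the function names, the block of sample weights, and the simple symmetric scheme with one scale per block are all assumptions made for this example.

```python
def quantize_block(weights, bits=4):
    """Quantize one block of floats to signed integer codes with a shared scale.

    Illustrative symmetric scheme (an assumption for this sketch),
    not the real Q4_K_M storage format.
    """
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit signed codes
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Round each weight to the nearest code and clamp to the 4-bit range.
    codes = [max(-qmax - 1, min(qmax, round(w / scale))) for w in weights]
    return scale, codes


def dequantize_block(scale, codes):
    """Recover approximate float weights from the codes and the shared scale."""
    return [scale * c for c in codes]


# Hypothetical sample weights, chosen only for demonstration.
block = [0.12, -0.53, 0.98, -0.07, 0.31, -0.88, 0.45, 0.02]

scale, codes = quantize_block(block)
restored = dequantize_block(scale, codes)
max_error = max(abs(a - b) for a, b in zip(block, restored))

print("codes:", codes)
print("largest rounding error:", max_error)
```

Each weight now needs only 4 bits plus a share of one scale value per block, instead of 16 bits in F16. The price is the small rounding error printed at the end.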

Key Takeaways:

- Faster with Quantization: The RTX 5000 Ada 32GB generates tokens about 2.75 times faster with the Q4_K_M quantized format than with F16 (89.87 vs. 32.67 tokens/second). This is likely because token generation is usually limited by how quickly the GPU can read the model's weights from memory: each new token requires a pass over the weights, and the quantized model has far fewer bytes to read. Think of it like a highway with fewer cars; the cars can move faster.

- Llama3 8B: A Powerful Choice: Even at the slower F16 precision, the RTX 5000 Ada 32GB still delivers a respectable 32.67 tokens per second. This makes the Llama3 8B model a strong contender for a wide range of local LLM applications.
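The arithmetic behind these takeaways can be sketched in a few lines of Python. The two speeds come from the benchmark table; the parameter count of roughly 8 billion and the "one full read of the weights per token" model are simplifying assumptions for this example, not measurements.

```python
# Measured token generation speeds from the benchmark table (tokens/second).
Q4_K_M_TPS = 89.87
F16_TPS = 32.67

# Assumption: Llama3 8B has roughly 8 billion weights.
APPROX_PARAMS = 8.0e9

# How much faster the quantized model generates tokens.
speedup = Q4_K_M_TPS / F16_TPS

# Average time per generated token, in milliseconds.
ms_per_token_q4 = 1000 / Q4_K_M_TPS
ms_per_token_f16 = 1000 / F16_TPS

# F16 stores each weight in 2 bytes.
f16_weights_gb = APPROX_PARAMS * 2 / 1e9

# Simplification: every token requires one full read of the weights,
# so this is the weight traffic per second implied by the F16 result.
f16_implied_read_gbps = f16_weights_gb * F16_TPS

print(f"Speedup of Q4_K_M over F16: {speedup:.2f}x")
print(f"Time per token: {ms_per_token_q4:.1f} ms (Q4_K_M), "
      f"{ms_per_token_f16:.1f} ms (F16)")
print(f"Approximate F16 weight size: {f16_weights_gb:.0f} GB")
print(f"Implied F16 weight reads: about {f16_implied_read_gbps:.0f} GB/s")
```

Under these assumptions the F16 run would be reading several hundred gigabytes of weights per second, which is consistent with generation speed being limited by memory bandwidth rather than by raw compute.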

Performance Analysis: Model and Device Comparison

While the RTX 5000 Ada 32GB is an incredibly capable GPU, the landscape of LLM models and hardware is constantly evolving. To put these numbers in context, we would need to compare this GPU and model pairing with other devices and other models.

Unfortunately, we only have data for the RTX 5000 Ada 32GB and Llama3 8B. We don't have any information on other devices or larger models like Llama3 70B.

Practical Recommendations: Use Cases and Workarounds

The data shows that the RTX 5000 Ada 32GB paired with the Llama3 8B model can provide a powerful and efficient local LLM setup. Here are some practical recommendations and workarounds to get the most out of this combination:

- Quantization is Your Friend: For maximum speed, prioritize the Q4_K_M quantized format for Llama3 8B. In our data it generates tokens about 2.75 times faster than F16 (89.87 vs. 32.67 tokens/second), making your applications faster and more responsive.

- Fine-tuning for Specific Tasks: While the Llama3 8B model has a broad knowledge base, you can further enhance its performance for specific tasks by fine-tuning it on your own data. This can involve training the model on a smaller dataset related to your use case, allowing it to become an expert in that domain.

- Harnessing the Power of Parallelism: If you have a multi-GPU system or multiple RTX 5000 Ada 32GB cards, you can explore techniques for parallel processing. Splitting a model across multiple GPUs mainly helps with models that are too large for one card, while running a separate instance of the model on each card can raise the total number of requests you serve at once. We have no multi-GPU measurements, so treat any expected gains as something to benchmark on your own setup.
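When choosing a format for an application, it can help to translate tokens per second into waiting time. The sketch below does that for the two measured speeds; the helper name `generation_seconds` and the 500-token reply length are hypothetical choices for this example, and the estimate ignores the time needed to process the prompt.

```python
def generation_seconds(num_tokens: int, tokens_per_second: float) -> float:
    """Estimate how long it takes to generate num_tokens at a steady speed."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return num_tokens / tokens_per_second


# Measured speeds for Llama3 8B on the RTX 5000 Ada 32GB (tokens/second).
SPEEDS = {"Q4_K_M": 89.87, "F16": 32.67}

# Hypothetical reply length, chosen only for illustration.
REPLY_TOKENS = 500

estimates = {
    name: generation_seconds(REPLY_TOKENS, tps) for name, tps in SPEEDS.items()
}

for name, seconds in estimates.items():
    print(f"{name}: about {seconds:.1f} s for a {REPLY_TOKENS}-token reply")
```

For a reply of this length, the quantized model finishes in under 6 seconds while F16 takes around 15 seconds, which is the kind of difference users notice in an interactive tool.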

Stay Tuned for Future Developments:

The world of LLMs and their hardware requirements is rapidly evolving, and new models and devices are emerging all the time. Keep an eye out for further updates on the performance of the RTX 5000 Ada 32GB with different LLM models, as well as other powerful GPUs and hardware configurations that can unleash the full potential of local LLMs.

FAQ: Common Questions About LLMs and Devices

Q: What are Large Language Models (LLMs)?

A: Imagine a super-smart computer program that can understand and generate human-like language. These programs are called LLMs, and they are trained on massive datasets of text and code. This allows them to perform tasks such as writing different kinds of creative content, translating languages, and answering your questions in an informative way.

Q: What is Quantization?

A: Think of quantization as a way to compress an LLM, making it smaller and faster. It's like shrinking a big picture so it fits on your phone: you lose a little detail, but it takes far less space. By storing the model's weights in fewer bits, we reduce the memory it needs and the amount of data the GPU has to read for each token, at the cost of a small loss in accuracy.

Q: What are the benefits of running LLMs locally?

A: Running LLMs locally offers several advantages:

- Privacy: Your data stays on your device, ensuring privacy and security.

- Speed: Requests never leave your machine, so there is no network latency; how fast responses arrive depends on your own hardware.

- Offline Access: You can use LLMs even without an internet connection.

Keywords:

NVIDIA RTX 5000 Ada 32GB, Llama3 8B, LLM, Token Generation Speed, Quantization, Q4_K_M, F16, Performance Benchmarks, GPU, Local LLM, AI, Machine Learning, Deep Learning, Natural Language Processing, NLP, Use Cases, Workarounds, Practical Recommendations, Fine-tuning, Parallel Processing