How Fast Can NVIDIA RTX 5000 Ada 32GB Run Llama3 70B?

Introduction

The world of large language models (LLMs) is buzzing with excitement. These AI systems, trained on massive datasets, can generate human-quality text, translate languages, write creative content, and answer questions informatively. But this power comes with a significant resource requirement: processing power and memory. This article looks at the performance of the NVIDIA RTX 5000 Ada 32GB GPU with the Llama3 70B model, exploring how it handles the demands of running large language models locally.

Performance Analysis: Token Generation Speed Benchmarks

The ability of a GPU to generate tokens quickly is a critical factor in LLM performance. It directly affects how fast your model can process text and generate responses. Let's dive into the numbers!

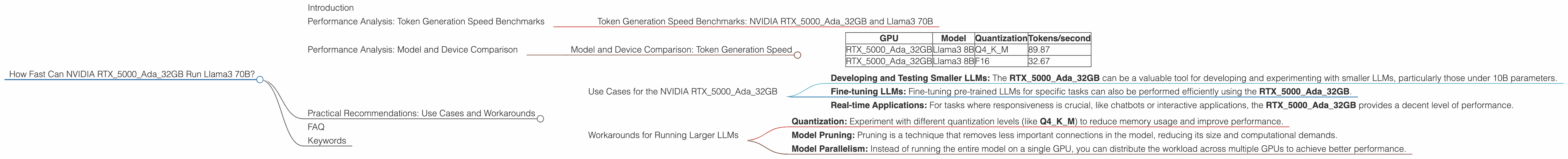

Token Generation Speed Benchmarks: NVIDIA RTX 5000 Ada 32GB and Llama3 70B

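Unfortunately, there's no data available for the NVIDIA RTX 5000 Ada 32GB running the Llama3 70B model. It's plausible that this combination hasn't been thoroughly benchmarked yet, or that such data is not publicly accessible. Memory is a likely factor: 70 billion parameters need about 140 GB for the weights alone at 16 bits each, and about 35 GB even at 4 bits each, which is more than the card's 32 GB of VRAM.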

However, we can look at the performance of the same GPU with the Llama3 8B model to get a sense of its capabilities. The RTX 5000 Ada 32GB achieved a token generation speed of 89.87 tokens/second when running Llama3 8B with Q4_K_M quantization (a technique that reduces memory usage but might slightly impact accuracy). This dropped to 32.67 tokens/second with F16, the unquantized 16-bit floating-point format.
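To make these rates more tangible, here is a minimal sketch that converts tokens/second into the time needed for a reply. The 500-token reply length and the function name `seconds_to_generate` are illustrative assumptions, and the calculation ignores prompt processing time.

```python
# Token generation rates reported above for Llama3 8B on this GPU.
RATES = {"Q4_K_M": 89.87, "F16": 32.67}


def seconds_to_generate(num_tokens: int, tokens_per_second: float) -> float:
    """Time to generate num_tokens at a steady rate (prompt processing ignored)."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return num_tokens / tokens_per_second


if __name__ == "__main__":
    reply_length = 500  # hypothetical reply length, in tokens
    for fmt, rate in RATES.items():
        seconds = seconds_to_generate(reply_length, rate)
        print(f"{fmt}: {seconds:.1f} s for a {reply_length}-token reply")
    speedup = RATES["Q4_K_M"] / RATES["F16"]
    print(f"Q4_K_M is about {speedup:.2f}x faster than F16")
```

By this estimate, the quantized model produces a 500-token reply in under 6 seconds, while F16 takes around 15 seconds.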

Performance Analysis: Model and Device Comparison

To better understand the NVIDIA RTX 5000 Ada 32GB's performance, let's compare the available results across models and quantization levels.

Model and Device Comparison: Token Generation Speed

| GPU | Model | Quantization | Tokens/second |
|---|---|---|---|
| RTX 5000 Ada 32GB | Llama3 8B | Q4_K_M | 89.87 |
| RTX 5000 Ada 32GB | Llama3 8B | F16 | 32.67 |

Important Note: This table reflects only a specific subset of available data. The performance can vary significantly depending on the specific LLM, quantization level, and other factors like batch size and model optimization techniques.

Practical Recommendations: Use Cases and Workarounds

Use Cases for the NVIDIA RTX 5000 Ada 32GB

While we lack data for the Llama3 70B on the RTX 5000 Ada 32GB, the performance with Llama3 8B suggests that this GPU is well-suited for tasks that require moderate computational resources. Here are some potential use cases:

- Developing and Testing Smaller LLMs: The RTX 5000 Ada 32GB can be a valuable tool for developing and experimenting with smaller LLMs, particularly those under 10B parameters.

- Fine-tuning LLMs: Fine-tuning pre-trained LLMs for specific tasks can also be performed efficiently using the RTX 5000 Ada 32GB.

- Real-time Applications: For tasks where responsiveness is crucial, like chatbots or interactive applications, the RTX 5000 Ada 32GB provides a decent level of performance.

Workarounds for Running Larger LLMs

If you need to run larger models like Llama3 70B on an RTX 5000 Ada 32GB, consider the following workarounds:

- Quantization: Experiment with different quantization levels (like Q4_K_M) to reduce memory usage and improve performance.

- Model Pruning: Pruning is a technique that removes less important connections in the model, reducing its size and computational demands.

- Model Parallelism: Instead of running the entire model on a single GPU, you can distribute the workload across multiple GPUs to achieve better performance.

These techniques reduce the memory or compute that a model needs on each GPU, which is what makes running larger models with an RTX 5000 Ada 32GB feasible. How much they help depends on the model and the method.
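Whether a workaround is enough comes down largely to memory. The sketch below is a rough estimate of weight memory only, not a benchmark: it multiplies the parameter count by the bits per weight. The parameter counts are the nominal ones from the model names, and the 4.85 bits per weight for Q4_K_M is an assumed approximate average, since that format mixes block types. KV cache and activation memory are ignored, so real requirements are higher.

```python
VRAM_GB = 32.0  # memory on the RTX 5000 Ada discussed in this article

# Bits per weight. 16 for F16 is exact; 4.85 for Q4_K_M is an assumed
# approximate average and will vary somewhat between models.
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.85}

# Nominal parameter counts taken from the model names.
MODELS = {"Llama3 8B": 8e9, "Llama3 70B": 70e9}


def weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Approximate memory for the weights alone, in GB (10^9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


def fits_in_vram(num_params: float, bits_per_weight: float,
                 vram_gb: float = VRAM_GB) -> bool:
    """True if the weights alone are smaller than the available VRAM."""
    return weight_memory_gb(num_params, bits_per_weight) < vram_gb


if __name__ == "__main__":
    for model, params in MODELS.items():
        for fmt, bits in BITS_PER_WEIGHT.items():
            gb = weight_memory_gb(params, bits)
            verdict = "fits" if fits_in_vram(params, bits) else "does not fit"
            print(f"{model} {fmt}: ~{gb:.1f} GB of weights, {verdict} in {VRAM_GB:.0f} GB")
```

Under these assumptions, Llama3 70B needs roughly 140 GB at F16 and roughly 42 GB at Q4_K_M, so neither fits in 32 GB on a single card. Llama3 8B fits in both formats, at about 16 GB and 5 GB respectively.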

FAQ

Q: What is quantization?

A: Quantization is a technique used to reduce the memory footprint of LLMs by representing values with fewer bits. This can significantly improve performance, particularly on GPUs with limited memory.
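As a toy illustration of "fewer bits", the sketch below maps a few floating-point values to 4-bit signed integer codes using a single shared scale, then maps them back. This is a deliberately simplified scheme for intuition only. Formats such as Q4_K_M are more elaborate, and the function names and sample values here are made up for the example.

```python
def quantize(values, bits=4):
    """Symmetric quantization: map floats to signed integer codes plus one scale."""
    qmax = 2 ** (bits - 1) - 1  # largest code, e.g. 7 for 4 bits
    max_abs = max(abs(v) for v in values)
    scale = max_abs / qmax if max_abs else 1.0
    # Round to the nearest code and clamp into [-qmax, qmax].
    codes = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return codes, scale


def dequantize(codes, scale):
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]


if __name__ == "__main__":
    weights = [0.7, -0.28, 0.12, 0.0]  # made-up sample values
    codes, scale = quantize(weights)
    restored = dequantize(codes, scale)
    for w, c, r in zip(weights, codes, restored):
        print(f"original {w:+.2f} -> code {c:+d} -> restored {r:+.2f}")
```

Each value now needs only 4 bits instead of 16 or 32, at the cost of a small rounding error that is at most half the scale.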

Q: What is model parallelism?

A: Model parallelism is a strategy where different parts of the LLM are run on different GPUs. This distributes the workload, improving overall performance.
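One simple form of this is splitting a model's layers into contiguous groups, one group per GPU. The sketch below only computes such an assignment and does not run a model. The helper `split_layers` is hypothetical, and the 80-layer figure is the commonly cited count for Llama3 70B, treated here as an assumption.

```python
def split_layers(num_layers: int, num_devices: int) -> list[range]:
    """Assign contiguous, near-equal groups of layers to each device."""
    if num_devices <= 0:
        raise ValueError("num_devices must be positive")
    base, extra = divmod(num_layers, num_devices)
    assignment, start = [], 0
    for device in range(num_devices):
        # The first `extra` devices take one additional layer each.
        size = base + (1 if device < extra else 0)
        assignment.append(range(start, start + size))
        start += size
    return assignment


if __name__ == "__main__":
    num_layers = 80  # assumed layer count for Llama3 70B
    for device, layers in enumerate(split_layers(num_layers, 2)):
        print(f"GPU {device}: layers {layers.start} to {layers.stop - 1}")
```

With two GPUs, each card holds half of the layers, so each needs roughly half of the weight memory.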

Q: What is a token?

A: A token is the basic unit of text in an LLM. It represents a word, punctuation mark, or even a sub-word unit.

Q: Can I run any LLM on any GPU?

A: Not all GPUs are created equal! The performance of a GPU with an LLM depends on factors like the GPU's memory capacity, processing power, and the complexity of the LLM.

Q: Where can I find more information about LLM performance benchmarks?

A: Several resources, including the GitHub repositories mentioned in this article, offer valuable insights into the performance of various LLMs on different devices.

Keywords

NVIDIA RTX 5000 Ada 32GB, Llama3 70B, Llama3 8B, Token Generation Speed, Quantization, Model Parallelism, LLM Performance, GPU Benchmarks, AI, Machine Learning, Deep Learning, Model Pruning, Token, Large Language Model