How Fast Can NVIDIA RTX 4000 Ada 20GB x4 Run Llama3 8B?

Introduction

The world of large language models (LLMs) is exploding, and with it, the demand for powerful hardware to run these models locally. You might be wondering, "Can I run Llama3 8B on my shiny new NVIDIA RTX 4000 Ada 20GB x4 setup?" Well, buckle up, geeks, because we're about to dive deep into the performance of this four-GPU configuration running Llama3 8B, and let's just say it's not all rainbows and unicorns.

Think of it like this: Imagine having your own AI assistant help write your next novel. But instead of waiting for an API to respond, you want it running locally, spitting out words faster than you can type. That's where a setup like the RTX 4000 Ada 20GB x4 comes in, but before you start churning out the next bestseller, let's see how well these GPUs handle Llama3 8B.

Performance Analysis: Token Generation Speed Benchmarks

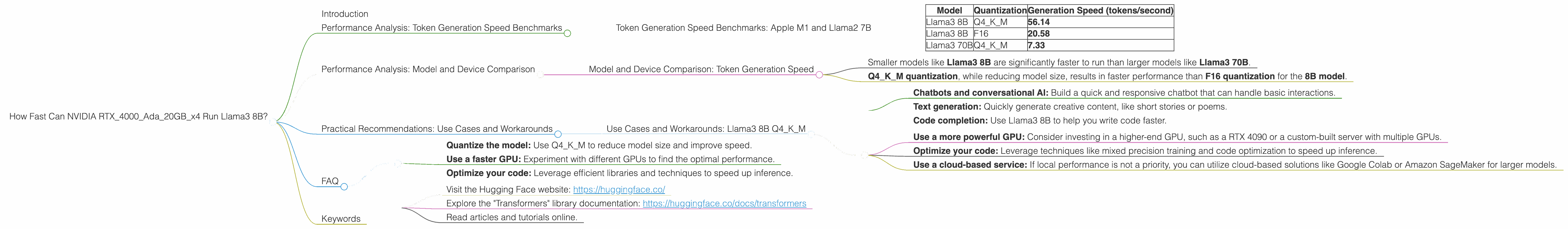

Token Generation Speed Benchmarks: NVIDIA RTX 4000 Ada 20GB x4 and Llama3 8B

Let's start by looking at the token generation speed, a metric that measures how many tokens per second your GPU setup can produce while the model is writing its response.

Table 1. Token Generation Speed Benchmarks

| Model | Quantization | Generation Speed (tokens/second) |
|---|---|---|
| Llama3 8B | Q4_K_M | 56.14 |
| Llama3 8B | F16 | 20.58 |
| Llama3 70B | Q4_K_M | 7.33 |

Whoa, hold on! What's this quantization business? Imagine you have a super detailed image. You can compress it by reducing the number of colors, and the image will still be recognizable, but less detailed. Quantization does the same with LLMs: it stores each model weight with fewer bits, shrinking the model while preserving most of the information.

Q4_K_M and F16 are two different weight formats. Strictly speaking, F16 is not quantization at all: it stores each weight as a 16-bit floating-point number and serves as the higher-precision baseline here. Q4_K_M is a 4-bit quantization scheme that results in a much smaller model, but may sacrifice some accuracy.
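To make the size difference concrete, here's a rough back-of-the-envelope sketch. The parameter count (a nominal 8 billion) and the figure of roughly 4.8 bits per weight for Q4_K_M are assumptions for illustration, not values from this benchmark, and real model files will differ somewhat.

```python
def weights_size_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in gigabytes (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


# Assumption: nominal parameter count for an "8B" model.
N_PARAMS_8B = 8e9
# F16 stores every weight in 16 bits.
BITS_F16 = 16
# Assumption: Q4_K_M averages a bit more than 4 bits per weight in practice.
BITS_Q4_K_M = 4.8

f16_gb = weights_size_gb(N_PARAMS_8B, BITS_F16)
q4_gb = weights_size_gb(N_PARAMS_8B, BITS_Q4_K_M)

print(f"F16:    ~{f16_gb:.1f} GB")
print(f"Q4_K_M: ~{q4_gb:.1f} GB")
print(f"F16 is ~{f16_gb / q4_gb:.1f}x larger")
```

This only counts the weights. Actual memory use is higher once you add the context cache and runtime overhead, so treat the numbers as a lower bound.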

What does this tell us?

- Llama3 8B Q4_K_M: This is a clear winner, generating 56.14 tokens per second. Remember, a token isn't necessarily a full word, but it's a building block of a sentence.

- Llama3 8B F16: At 20.58 tokens per second, this is faster than the 70B model, but less than half as fast as the Q4_K_M version of the 8B model.

- Llama3 70B Q4_K_M: This model is significantly slower, generating 7.33 tokens per second due to its larger size.

Think of it like this: It's like comparing a compact car to a truck and a giant, lumbering bus. The compact car (Llama3 8B Q4_K_M) is quick and nimble, the truck (Llama3 8B F16) is still speedy, but the bus (Llama3 70B Q4_K_M) is slow and cumbersome.
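If you'd rather see the gap as numbers than as vehicles, here's a small sketch that computes how much slower each configuration is than the fastest one. The speeds come straight from Table 1; the dictionary and variable names are just illustrative.

```python
# Generation speeds from Table 1, in tokens per second.
speeds = {
    "Llama3 8B Q4_K_M": 56.14,
    "Llama3 8B F16": 20.58,
    "Llama3 70B Q4_K_M": 7.33,
}

# Use the fastest configuration as the reference point.
fastest_name = max(speeds, key=speeds.get)
fastest_tps = speeds[fastest_name]

# Slowdown factor of each configuration relative to the fastest one.
slowdowns = {name: fastest_tps / tps for name, tps in speeds.items()}

for name, factor in slowdowns.items():
    print(f"{name}: {speeds[name]:.2f} tok/s ({factor:.2f}x slower than {fastest_name})")
```

The fastest entry shows up with a factor of 1.00, which makes a handy sanity check.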

Performance Analysis: Model and Device Comparison

Model and Device Comparison: Token Generation Speed

We can't compare the performance of this device with other devices directly using the data provided, as this data only covers performance on one specific device.

However, we can analyze the data and draw some general conclusions.

- Smaller models like Llama3 8B are significantly faster to run than larger models like Llama3 70B.

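- Q4_K_M quantization not only reduces model size, it also runs faster than F16 for the 8B model (56.14 vs. 20.58 tokens/second), most likely because less weight data has to be read from memory for each generated token.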

Practical Recommendations: Use Cases and Workarounds

Use Cases and Workarounds: Llama3 8B Q4_K_M

So, what are some practical use cases for this setup?

- Chatbots and conversational AI: Build a quick and responsive chatbot that can handle basic interactions.

- Text generation: Quickly generate creative content, like short stories or poems.

- Code completion: Use Llama3 8B to help you write code faster.
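To get a feel for what these speeds mean for the use cases above, here's a rough sketch that estimates how long a reply takes to generate. The 300-token reply length is an assumption for illustration, and the estimate ignores the time spent processing the prompt, which this benchmark data doesn't cover.

```python
def generation_time_seconds(n_tokens: int, tokens_per_second: float) -> float:
    """Time to generate n_tokens at a steady generation speed."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return n_tokens / tokens_per_second


# Assumption: a medium-length chatbot reply.
REPLY_TOKENS = 300

# Generation speeds from Table 1, in tokens per second.
table_speeds = {
    "Llama3 8B Q4_K_M": 56.14,
    "Llama3 8B F16": 20.58,
    "Llama3 70B Q4_K_M": 7.33,
}

for name, tps in table_speeds.items():
    seconds = generation_time_seconds(REPLY_TOKENS, tps)
    print(f"{name}: ~{seconds:.1f} s for {REPLY_TOKENS} tokens")
```

For interactive uses like chat or code completion, a few seconds per reply feels responsive, while waits of tens of seconds start to feel sluggish.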

What about workarounds if you need to handle larger models?

- Use a more powerful GPU: Consider investing in a higher-end GPU, such as an RTX 4090, or a custom-built server with more GPUs.

- Optimize your code: Leverage techniques like lower-precision inference and efficient inference libraries to speed up generation.

- Use a cloud-based service: If local performance is not a priority, you can utilize cloud-based solutions like Google Colab or Amazon SageMaker for larger models.

FAQ

1. Can I run Llama3 8B on my laptop?

Possibly, but it depends on the specs of your laptop. You'll need a powerful GPU and ample RAM to run Llama3 8B smoothly.

2. What's the best way to optimize the performance of Llama3 8B?

- Quantize the model: Use Q4_K_M to reduce model size and improve speed.

- Use a faster GPU: Experiment with different GPUs to find the optimal performance.

- Optimize your code: Leverage efficient libraries and techniques to speed up inference.
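Whichever of these you try, measure before and after. Here's a minimal timing sketch, assuming your inference library can hand you tokens one at a time as an iterable. The `fake_generate` function is a hypothetical stand-in, not a real library call; swap in your own streaming generation function.

```python
import time
from typing import Callable, Iterable


def measure_tokens_per_second(generate: Callable[[], Iterable[str]]) -> float:
    """Run generate() once, count the tokens it yields, and return tokens/second."""
    start = time.perf_counter()
    n_tokens = sum(1 for _ in generate())
    elapsed = time.perf_counter() - start
    return n_tokens / elapsed if elapsed > 0 else float("inf")


def fake_generate() -> Iterable[str]:
    """Hypothetical stand-in for a model: yields 50 tokens, about 1 ms apart."""
    for i in range(50):
        time.sleep(0.001)
        yield f"tok{i}"


tps = measure_tokens_per_second(fake_generate)
print(f"{tps:.1f} tokens/second")
```

Run it a few times and average the results, since a single short run can be noisy.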

3. How can I learn more about LLMs and their applications?

- Visit the Hugging Face website: https://huggingface.co/

- Explore the "Transformers" library documentation: https://huggingface.co/docs/transformers

- Read articles and tutorials online.

Keywords

LLM, Llama3, RTX 4000 Ada 20GB, GPU, local models, token generation speed, quantization, Q4_K_M, F16, performance, benchmarks, use cases, workarounds, chatbots, text generation, code completion, optimization, cloud computing, Hugging Face, Transformers.