How Fast Can NVIDIA RTX 4000 Ada 20GB x4 Run Llama3 70B?

Introduction

The world of Large Language Models (LLMs) is exploding with exciting new advancements. And with these advancements come new challenges for developers and companies looking to leverage the power of LLMs locally. One of these challenges is finding the right hardware to run these massive models efficiently.

In this article, we'll take a deep dive into the performance of Llama3 70B on a system with four NVIDIA RTX 4000 Ada 20GB GPUs (RTX 4000 Ada 20GB x4). We'll look at token generation speed, analyze the key factors that influence it, and provide practical recommendations for optimizing your setup.

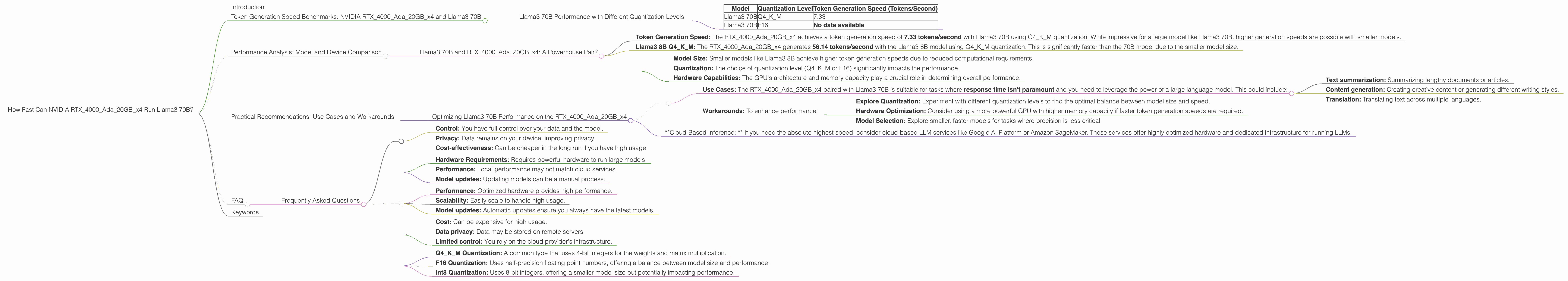

Token Generation Speed Benchmarks: NVIDIA RTX 4000 Ada 20GB x4 and Llama3 70B

Llama3 70B Performance with Different Quantization Levels:

- Q4_K_M quantization: Stores most weights in roughly 4 bits, which cuts the memory footprint substantially at the cost of a small loss in output quality.

- F16: Stores weights as 16-bit (half-precision) floating point numbers. Strictly speaking this is not quantization but the unquantized baseline; it preserves quality but needs more than three times as much memory as Q4_K_M.

| Model | Quantization Level | Token Generation Speed (tokens/second) |
|---|---|---|
| Llama3 70B | Q4_K_M | 7.33 |
| Llama3 70B | F16 | No data available |

Important Note: The dataset includes a token generation speed for Llama3 70B with Q4_K_M quantization, but no F16 result. The F16 benchmark on the RTX 4000 Ada 20GB x4 was most likely not performed, plausibly because F16 weights for a 70B model take about 2 bytes per parameter, roughly 140 GB, which exceeds the 80 GB of combined VRAM.
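Weight memory can be estimated as parameter count × bits per weight ÷ 8. The sketch below applies this to both formats in the table. It assumes a nominal 70 billion parameters and about 4.85 bits per weight on average for Q4_K_M (an approximation, since Q4_K_M mixes block formats), and it ignores the KV cache and runtime buffers, which need additional memory on top of the weights.

```python
# Rough weight-memory estimate: params * bits_per_weight / 8 bytes.
# Assumptions (approximate, not measured): nominal 70e9 parameters,
# ~4.85 bits/weight average for Q4_K_M, 16 bits/weight for F16.
# KV cache and runtime buffers are ignored, so real usage is higher.

PARAMS_70B = 70e9
TOTAL_VRAM_GB = 4 * 20  # four RTX 4000 Ada cards with 20 GB each

BITS_PER_WEIGHT = {
    "F16": 16.0,
    "Q4_K_M": 4.85,  # approximate average; an assumption
}


def weight_gb(params: float, bits_per_weight: float) -> float:
    """Return the approximate size of the weights in GB (1 GB = 1e9 bytes)."""
    return params * bits_per_weight / 8 / 1e9


for name, bits in BITS_PER_WEIGHT.items():
    size = weight_gb(PARAMS_70B, bits)
    verdict = "fits" if size < TOTAL_VRAM_GB else "does not fit"
    print(f"Llama3 70B {name}: ~{size:.1f} GB of weights, {verdict} in {TOTAL_VRAM_GB} GB")
```

Under these assumptions the Q4_K_M weights (about 42 GB) leave room for the KV cache across the four cards, while the F16 weights alone would need well over the available memory.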

Performance Analysis: Model and Device Comparison

Llama3 70B and RTX 4000 Ada 20GB x4: A Powerhouse Pair?

The RTX 4000 Ada 20GB x4 setup combines four Ada Lovelace GPUs with 20GB of memory each, 80GB in total, which is enough to hold a 4-bit quantized 70B model. But how does it stack up against other devices and models?

Since the dataset only covers the RTX 4000 Ada 20GB x4, we can't make direct comparisons with other GPUs. However, we can still draw useful conclusions from the data we have, including a comparison with the smaller Llama3 8B model on the same hardware.

For example:

- Token Generation Speed: The RTX 4000 Ada 20GB x4 achieves 7.33 tokens/second with Llama3 70B using Q4_K_M quantization. That is usable for a model of this size, but well below what smaller models reach on the same hardware.
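To put 7.33 tokens/second in perspective, the average time per token is its reciprocal, and the time to stream a full answer grows with the answer length. The sketch below uses the measured rate from the table; the response lengths are arbitrary examples, and prompt processing time is not modeled.

```python
# Convert a generation rate (tokens/second) into user-facing latency.
# Only token generation is modeled; prompt processing is ignored.

MEASURED_TPS = 7.33  # Llama3 70B Q4_K_M on RTX 4000 Ada 20GB x4 (from the table)


def ms_per_token(tokens_per_second: float) -> float:
    """Average time between generated tokens, in milliseconds."""
    return 1000.0 / tokens_per_second


def seconds_for(n_tokens: int, tokens_per_second: float) -> float:
    """Time to generate n_tokens at a constant rate, in seconds."""
    return n_tokens / tokens_per_second


print(f"{ms_per_token(MEASURED_TPS):.0f} ms per token")
for n in (100, 500, 1000):  # example response lengths, chosen arbitrarily
    print(f"{n:>5} tokens: ~{seconds_for(n, MEASURED_TPS):.0f} s")
```

At the measured rate this works out to roughly 136 ms per token, so a 500-token answer takes a little over a minute to generate.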

To understand the impact of model size:

- Llama3 8B Q4_K_M: The RTX 4000 Ada 20GB x4 generates 56.14 tokens/second with Llama3 8B using Q4_K_M quantization, about 7.7 times faster than the 70B model, because far fewer weights must be processed for each token.
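As a rough rule, generation speed for a dense model scales inversely with its size, since each new token touches every weight. A quick check compares the two measured rates with the nominal parameter ratio; the 8B and 70B figures are rounded names, so the actual counts differ slightly.

```python
# Compare the measured speed ratio with the nominal parameter-count ratio.
# Both models use Q4_K_M, so bytes per parameter are similar.

tps = {"Llama3 8B": 56.14, "Llama3 70B": 7.33}  # measured, from this article
params_billion = {"Llama3 8B": 8, "Llama3 70B": 70}  # nominal sizes

speed_ratio = tps["Llama3 8B"] / tps["Llama3 70B"]
size_ratio = params_billion["Llama3 70B"] / params_billion["Llama3 8B"]

print(f"8B is {speed_ratio:.2f}x faster; 70B has {size_ratio:.2f}x the parameters")
```

The speedup (about 7.66x) is somewhat smaller than the 8.75x size ratio, which suggests model size explains most, but not all, of the difference; fixed per-token overheads that do not shrink with the model are one plausible reason, though the benchmark does not isolate them.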

This highlights the key takeaways:

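- Model Size: Smaller models like Llama3 8B achieve higher token generation speeds because fewer weights must be read and processed for each token.

- Quantization: The quantization level (for example Q4_K_M versus F16) determines how much memory the model needs, and therefore whether it fits and how fast it runs, although this dataset has no F16 result to quantify the difference for Llama3 70B.

- Hardware Capabilities: The GPU's architecture, memory capacity, and memory bandwidth play a crucial role in overall performance.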
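One way to see why hardware matters is a bandwidth-bound estimate: when generating one token at a time, all weights are read from VRAM for each token, so tokens/second is at most bandwidth ÷ weight size. The sketch below assumes 360 GB/s per RTX 4000 Ada card (NVIDIA's spec sheet figure), about 42.5 GB of Q4_K_M weights (an approximate file size), and a layer split in which the four cards process their layers one after another. It ignores compute, KV-cache reads, and inter-GPU transfers, so it is an optimistic ceiling under stated assumptions, not a prediction.

```python
# Optimistic ceiling for single-stream generation speed, assuming decoding is
# memory-bandwidth bound (all weights read once per generated token).
# Assumptions: 360 GB/s per RTX 4000 Ada card; layers split across 4 cards
# that run sequentially, so per-token time = sum(share_i / bandwidth).

BANDWIDTH_GBPS = 360.0  # per card, assumed from NVIDIA's spec sheet
N_GPUS = 4


def ceiling_tps(weight_gb: float, bandwidth_gbps: float = BANDWIDTH_GBPS,
                n_gpus: int = N_GPUS) -> float:
    """Upper bound on tokens/second for a model layer-split across n_gpus."""
    share = weight_gb / n_gpus                               # weights held by each card
    seconds_per_token = n_gpus * (share / bandwidth_gbps)    # cards take turns
    return 1.0 / seconds_per_token


ceiling = ceiling_tps(42.5)  # ~42.5 GB of Q4_K_M weights (approximate)
measured = 7.33
print(f"ceiling ~{ceiling:.1f} tok/s, measured {measured} tok/s "
      f"({measured / ceiling:.0%} of ceiling)")
```

Because `n_gpus` cancels out, this simple model suggests that splitting layers across more cards adds memory capacity rather than single-stream speed; setups that split each layer across GPUs (tensor parallelism) behave differently. The measured 7.33 tokens/second sits at roughly 87% of the estimated ~8.5 tokens/second ceiling.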

Practical Recommendations: Use Cases and Workarounds

Optimizing Llama3 70B Performance on the RTX 4000 Ada 20GB x4

- Use Cases: The RTX 4000 Ada 20GB x4 paired with Llama3 70B suits tasks where response time isn't critical but you want the capabilities of a large language model, such as:

  - Text summarization: Summarizing lengthy documents or articles.

  - Content generation: Drafting creative content or writing in different styles.

  - Translation: Translating text across multiple languages.

- Workarounds: To improve performance:

  - Explore Quantization: Experiment with different quantization levels to find the best balance between memory use, speed, and output quality.

  - Hardware Optimization: Consider a more powerful GPU setup, with higher memory bandwidth and capacity, if you need faster token generation.

  - Model Selection: Use smaller, faster models for tasks that don't need the extra capability of a 70B model.

Alternative Options:

- Cloud-Based Inference: If you need the highest possible speed, consider cloud-based LLM services such as Google AI Platform or Amazon SageMaker, which offer optimized hardware and dedicated infrastructure for running LLMs.

FAQ


Q: What are the pros and cons of using local LLMs like Llama3 70B compared to cloud-based services?

A:

Local LLMs:

Pros:

- Control: You have full control over your data and the model.

- Privacy: Data remains on your device, improving privacy.

- Cost-effectiveness: Can be cheaper in the long run if you have high usage.

Cons:

- Hardware Requirements: Requires powerful hardware to run large models.

- Performance: Local performance may not match cloud services.

- Model updates: Updating models can be a manual process.

Cloud-Based Services:

Pros:

- Performance: Optimized hardware provides high performance.

- Scalability: Easily scale to handle high usage.

- Model updates: The provider handles model updates, so newer models are available without manual work.

Cons:

- Cost: Can be expensive for high usage.

- Data privacy: Data may be stored on remote servers.

- Limited control: You rely on the cloud provider's infrastructure.

Q: What is quantization?

A: Quantization is a technique for reducing the memory footprint of LLMs. It converts the model's weights, typically stored as 16- or 32-bit floating point numbers, into lower-precision formats such as 8-bit or 4-bit integers. This shrinks the model, and often speeds up generation, usually with only a modest loss in output quality.
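As a minimal illustration of the idea, the sketch below applies simple symmetric "absmax" quantization to one block of weights: it stores a single float scale per block plus small signed integers, then reconstructs approximate values. This is a simplified stand-in, not the actual Q4_K_M layout used by llama.cpp, and the weight values and function names are hypothetical.

```python
# Simplified symmetric (absmax) block quantization, for illustration only.
# Real formats such as llama.cpp's Q4_K_M use a different, more complex layout.

def quantize_block(values: list[float], bits: int) -> tuple[float, list[int]]:
    """Map floats to signed integers in [-(2**(bits-1) - 1), 2**(bits-1) - 1]."""
    qmax = 2 ** (bits - 1) - 1            # 7 for 4-bit, 127 for 8-bit
    absmax = max(abs(v) for v in values)
    scale = absmax / qmax if absmax > 0 else 1.0
    q = [round(v / scale) for v in values]
    return scale, q


def dequantize_block(scale: float, q: list[int]) -> list[float]:
    """Reconstruct approximate floats from the scale and integer codes."""
    return [scale * x for x in q]


weights = [0.12, -0.50, 0.33, 0.07, -0.21, 0.45, -0.02, 0.29]  # made-up block
for bits in (8, 4):
    scale, q = quantize_block(weights, bits)
    restored = dequantize_block(scale, q)
    max_err = max(abs(a - b) for a, b in zip(weights, restored))
    print(f"{bits}-bit codes {q}, max error {max_err:.4f}")
```

With these made-up values, the 8-bit codes reproduce the weights far more closely than the 4-bit codes, which mirrors the size-versus-quality trade-off of lower precision.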

Q: What are the different types of quantization?

A: There are different types of quantization, including:

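- Q4_K_M quantization: A llama.cpp (GGUF) k-quant format that stores most weights as 4-bit integers in small blocks with per-block scale factors; the M (medium) variant keeps some tensors at higher precision.

- F16: Uses 16-bit (half-precision) floating point numbers. This is usually the unquantized baseline rather than a quantization scheme; it preserves quality but needs the most memory of the three.

- Int8 quantization: Uses 8-bit integers, roughly halving memory compared with F16, with a typically small loss in output quality.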

Keywords

NVIDIA RTX 4000 Ada 20GB x4, Llama3 70B, Token Generation Speed, Quantization, Q4_K_M, F16, LLM Performance, Local LLM, GPU, Deep Learning, Tokenization, Hardware Optimization, Inference, Cloud-Based LLM, Model Size, Memory Capacity, Use Cases, Workarounds, Performance Analysis, GPU Benchmarks, Natural Language Processing, AI, Machine Learning, Text Generation, Text Summarization, Content Generation, Translation.