How Fast Can NVIDIA RTX 4000 Ada 20GB Run Llama3 8B?

Introduction

The world of large language models (LLMs) is buzzing with excitement! These powerful AI models are revolutionizing how we interact with computers. But running them locally can be a challenge, especially on older hardware. This article dives deep into the performance of the NVIDIA RTX 4000 Ada 20GB graphics card when running the Llama3 8B model. Whether you're a developer looking to build your own AI-powered applications or simply a tech enthusiast curious about what this GPU can do, buckle up for an exciting journey!

Performance Analysis: Token Generation Speed Benchmarks

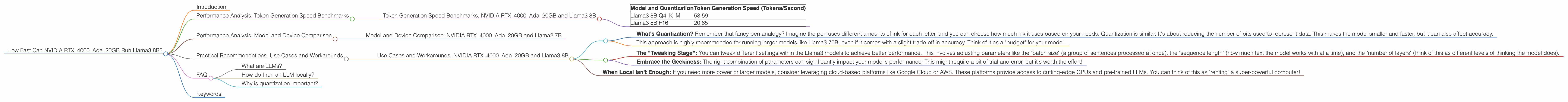

Token Generation Speed Benchmarks: NVIDIA RTX 4000 Ada 20GB and Llama3 8B

Let's get down to the nitty-gritty: how fast can the NVIDIA RTX 4000 Ada 20GB generate text with the Llama3 8B model? The table below presents token generation speeds for different quantization levels.

| Model and Quantization | Token Generation Speed (Tokens/Second) |
|---|---|
| Llama3 8B Q4_K_M | 58.59 |
| Llama3 8B F16 | 20.85 |

Token generation speed is how quickly the model produces new text, whether that's a chatbot reply or a poem. Text is generated one token (a word or piece of a word) at a time, so the more tokens per second, the faster the model responds.

The results show that the RTX 4000 Ada 20GB runs Llama3 8B comfortably at both precision levels, and that quantization makes a big difference. The Q4_K_M quantization (we'll explain what that means in a bit), which uses fewer bits to represent the model's weights, generates tokens about 2.8 times faster than F16 (58.59 vs. 20.85 tokens per second).

Think of it like this: Imagine you're trying to write a novel with a fancy, complicated pen that takes a long time to write each word. That's a bit like the full 16-bit F16 format. Now switch to a simple pen that lets you jot down words faster: that's Q4_K_M. The RTX 4000 Ada 20GB is like a magical keyboard that helps you write even faster with that simple pen.
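To make the numbers in the table more concrete, here is a minimal sketch that turns them into a speedup ratio and a rough waiting time. The 500-token reply length is an arbitrary assumption chosen for illustration, and the estimate ignores the time spent processing the prompt.

```python
# Throughput figures copied from the benchmark table (tokens per second).
SPEEDS = {"Q4_K_M": 58.59, "F16": 20.85}


def speedup(fast: float, slow: float) -> float:
    """Return how many times faster `fast` is than `slow`."""
    return fast / slow


def seconds_for(tokens: int, tokens_per_second: float) -> float:
    """Time to generate `tokens` tokens at a steady rate.

    Ignores prompt processing, so real responses take a little longer.
    """
    return tokens / tokens_per_second


if __name__ == "__main__":
    ratio = speedup(SPEEDS["Q4_K_M"], SPEEDS["F16"])
    print(f"Q4_K_M is about {ratio:.1f}x faster than F16")

    # 500 tokens is an assumed reply length, not a benchmark setting.
    for name, tps in SPEEDS.items():
        print(f"{name}: {seconds_for(500, tps):.1f} s for a 500-token reply")
```

At these rates, a 500-token reply takes roughly 8.5 seconds with Q4_K_M and about 24 seconds with F16.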

Performance Analysis: Model and Device Comparison

Model and Device Comparison: NVIDIA RTX 4000 Ada 20GB and Llama3 8B

While this article focuses on the NVIDIA RTX 4000 Ada 20GB and Llama3 8B, it would be interesting to compare its performance against other models and devices. However, we don't have data available to compare the RTX 4000 Ada 20GB's performance with Llama3 70B or with other devices.

Practical Recommendations: Use Cases and Workarounds

Use Cases and Workarounds: NVIDIA RTX 4000 Ada 20GB and Llama3 8B

The NVIDIA RTX 4000 Ada 20GB is a powerful GPU, but it has limitations. While it runs the Llama3 8B model remarkably well, its 20 GB of memory makes larger models like Llama3 70B a challenge. Here's what you can do:

1. Quantization:

- What's Quantization? Remember that fancy pen analogy? Imagine the pen uses different amounts of ink for each letter, and you can choose how much ink it uses based on your needs. Quantization is similar. It's about reducing the number of bits used to represent data. This makes the model smaller and faster, but it can also affect accuracy.

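- This approach is highly recommended for running larger models like Llama3 70B, even if it comes with a slight trade-off in accuracy. Think of it as spending from a memory "budget": fewer bits per weight leave more room on the card. Keep in mind that a 70B model is still large after quantization (at roughly 4 to 5 bits per weight its weights take around 40 GB), so on a 20 GB card part of it would have to run outside GPU memory.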

2. Optimize Model Parameters:

- The "Tweaking Stage": You can tweak different runtime settings when running the Llama3 models to achieve better performance. This involves adjusting parameters like the "batch size" (how many tokens are processed at once), the "sequence length" (how much text the model works with at a time), and the "number of layers" placed on the GPU (in tools like llama.cpp, layers that don't fit in GPU memory can run on the CPU instead, at a cost in speed).

- Embrace the Geekiness: The right combination of parameters can significantly impact your model's performance. This might require a bit of trial and error, but it's worth the effort!

3. Cloud-Based LLMs:

- When Local Isn't Enough: If you need more power or larger models, consider leveraging cloud-based platforms like Google Cloud or AWS. These platforms provide access to cutting-edge GPUs and pre-trained LLMs. You can think of this as "renting" a super-powerful computer!
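The quantization and cloud recommendations above come down to one question: do the model's weights fit in 20 GB? The sketch below is a back-of-the-envelope estimate, not a measurement. The bits-per-weight figure for Q4_K_M (about 4.85) is an approximation, the parameter counts are rounded, and the 2 GiB headroom for the context cache and working buffers is a hypothetical allowance that grows with sequence length.

```python
GIB = 1024 ** 3

# Approximate bits per weight. F16 is exactly 16; the Q4_K_M value is an
# assumed average, because that format mixes several block types.
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.85}

# Nominal, rounded parameter counts (assumption for illustration).
PARAMS = {"Llama3 8B": 8.0e9, "Llama3 70B": 70.0e9}


def weight_gib(n_params: float, bits_per_weight: float) -> float:
    """Estimated size of the model weights in GiB."""
    return n_params * bits_per_weight / 8 / GIB


def fits(n_params: float, bits_per_weight: float,
         vram_gib: float = 20.0, headroom_gib: float = 2.0) -> bool:
    """True if weights plus a fixed headroom fit in the given GPU memory.

    `headroom_gib` is a hypothetical allowance, not a measured value.
    """
    return weight_gib(n_params, bits_per_weight) + headroom_gib <= vram_gib


if __name__ == "__main__":
    for model, n_params in PARAMS.items():
        for quant, bpw in BITS_PER_WEIGHT.items():
            size = weight_gib(n_params, bpw)
            verdict = "fits" if fits(n_params, bpw) else "does not fit"
            print(f"{model} {quant}: ~{size:.1f} GiB, {verdict} in 20 GiB")
```

Under these assumptions both Llama3 8B variants fit on the card, while Llama3 70B does not fit even at Q4_K_M, which is where partial CPU offload or a cloud GPU comes in.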

FAQ

What are LLMs?

LLMs are AI models that can understand and generate human-like text. They are trained on massive datasets of text and learn to predict which words should come next in a sentence.

How do I run an LLM locally?

You can use tools like llama.cpp, which is a lightweight library that allows you to run LLMs directly on your computer.
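As a rough illustration, the sketch below assembles a llama.cpp command line that sets the context size, batch size, and number of GPU layers. It only builds and prints the command; it does not run anything. The binary name `llama-cli` (used by recent llama.cpp builds), the model file path, and the specific values are all assumptions for this example, so adjust them to your own setup.

```python
import shlex


def build_llama_cli_command(model_path: str, prompt: str,
                            n_predict: int = 128,
                            ctx_size: int = 4096,
                            batch_size: int = 512,
                            n_gpu_layers: int = 99,
                            binary: str = "llama-cli") -> list[str]:
    """Build an argument list for llama.cpp's command-line tool.

    -m   path to the GGUF model file
    -p   prompt text
    -n   number of tokens to generate
    -c   context size (sequence length) in tokens
    -b   batch size used when processing the prompt
    -ngl number of layers to place on the GPU; a value larger than the
         model's layer count is a common way to request all of them
    """
    return [
        binary,
        "-m", model_path,
        "-p", prompt,
        "-n", str(n_predict),
        "-c", str(ctx_size),
        "-b", str(batch_size),
        "-ngl", str(n_gpu_layers),
    ]


if __name__ == "__main__":
    # Hypothetical file name; use the path to your own downloaded model.
    cmd = build_llama_cli_command("models/llama3-8b-q4_k_m.gguf",
                                  "Write a haiku about GPUs.")
    # Print a shell-safe version you can copy into a terminal.
    print(shlex.join(cmd))
```

Keeping the settings as function arguments makes the trial-and-error part easier: change one value at a time and compare the tokens per second that llama.cpp reports at the end of each run.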

Why is quantization important?

Quantization reduces the size of the model's data, which makes it faster to load, process, and run on your device. It's like having a smaller, lighter version of your model!

Keywords

NVIDIA RTX 4000 Ada 20GB, Llama3 8B, Llama3 70B, Token Generation Speed, Quantization, F16, Q4_K_M, Performance Benchmarks, LLM, Large Language Model, AI, Machine Learning, Deep Learning, GPU, Cloud-based LLMs