How Fast Can NVIDIA RTX 4000 Ada 20GB Run Llama3 70B?

Introduction

Large language models (LLMs) are everywhere right now, and plenty of people want to run them on their own hardware. Before you can do that, you need a machine that can hold the model and generate text at a usable speed. Today, we're looking at the performance of the NVIDIA RTX 4000 Ada 20GB graphics card, a popular choice for running LLMs locally. We'll focus on how well it handles Llama3 70B, a model that is impressive in both size and capability, and use Llama3 8B as the baseline.

Think of it like this: you've got a super-talented chef who can whip up amazing dishes, but you need a top-notch kitchen with all the right tools to help them shine. The LLM is the chef, the RTX 4000 Ada 20GB is the kitchen, and we're here to see if they're a match made in AI heaven.

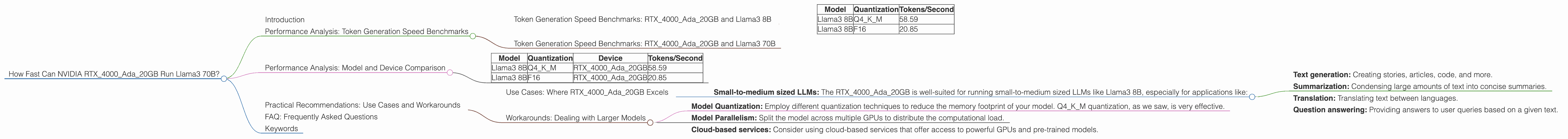

Performance Analysis: Token Generation Speed Benchmarks

Token Generation Speed Benchmarks: RTX 4000 Ada 20GB and Llama3 8B

Let's start with the basics: how fast can the RTX 4000 Ada 20GB generate text with the Llama3 8B model? The table below presents the token generation speeds for two formats: Q4_K_M (a 4-bit k-quant format used by llama.cpp, in its medium variant) and F16 (16-bit floating-point).

| Model | Quantization | Tokens/Second |
|---|---|---|
| Llama3 8B | Q4_K_M | 58.59 |
| Llama3 8B | F16 | 20.85 |

Key Observations:

- Q4_K_M takes the lead: With Q4_K_M quantization, Llama3 8B generates 58.59 tokens/second, about 2.8 times the 20.85 tokens/second measured with F16. This is expected: Q4_K_M shrinks the weights, so less data has to be read from memory for every generated token.

- F16 is still respectable: While slower than Q4_K_M, F16 still delivers usable performance. It is a good option if you prioritize accuracy over absolute speed.

Analogies for Understanding Quantization: Think of quantization as compressing a large image file. Q4_K_M is like a lossy format (such as JPEG), which makes the file much smaller but might introduce some slight quality loss. F16 is like a lossless format (such as PNG), which produces a larger file but preserves more detail.
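To make the gap concrete, the figures in the table above can be turned into a speedup ratio and a rough wait time. This is a minimal sketch, not part of the original benchmark: the helper names and the 500-token response length are assumptions chosen for illustration, and it assumes the generation rate stays constant.

```python
# Throughput figures copied from the benchmark table (tokens/second).
TOKENS_PER_SECOND = {"Q4_K_M": 58.59, "F16": 20.85}


def speedup(fast: float, slow: float) -> float:
    """Return how many times faster `fast` is than `slow`."""
    return fast / slow


def seconds_for(tokens: int, tokens_per_second: float) -> float:
    """Return the time needed to generate `tokens` at a steady rate."""
    return tokens / tokens_per_second


ratio = speedup(TOKENS_PER_SECOND["Q4_K_M"], TOKENS_PER_SECOND["F16"])
print(f"Q4_K_M is {ratio:.2f}x faster than F16")

# 500 tokens is a hypothetical response length, not a measured workload.
for name, tps in TOKENS_PER_SECOND.items():
    print(f"{name}: {seconds_for(500, tps):.1f} s for 500 tokens")
```

Under these assumptions, a 500-token answer takes under 10 seconds with Q4_K_M and about 24 seconds with F16.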

Token Generation Speed Benchmarks: RTX 4000 Ada 20GB and Llama3 70B

Unfortunately, there is no data available for the Llama3 70B model on the RTX 4000 Ada 20GB.

Why is this?

Running a model as large as Llama3 70B requires far more memory than this card has. At F16, 70 billion parameters take about 140 GB for the weights alone, and even a 4-bit quantization needs roughly 40 GB, well beyond the 20 GB of VRAM on the RTX 4000 Ada. It's like trying to fit a king-size bed into a small closet - it simply doesn't work.
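The memory argument can be checked with back-of-the-envelope arithmetic. The sketch below estimates the size of the weights alone and compares it with 20 GB of VRAM. It rests on stated assumptions: nominal parameter counts of 8 and 70 billion, and roughly 4.8 bits per weight as an average for Q4_K_M (the real figure varies by model). It ignores the KV cache and activations, so actual requirements are somewhat higher.

```python
def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate memory for the weights alone, in GB (10^9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


VRAM_GB = 20.0  # RTX 4000 Ada 20GB

# Assumption: nominal parameter counts, not exact ones.
MODELS = {"Llama3 8B": 8e9, "Llama3 70B": 70e9}
# Assumption: ~4.8 bits/weight is a rough average for Q4_K_M.
FORMATS = {"F16": 16.0, "Q4_K_M": 4.8}

for model, n_params in MODELS.items():
    for fmt, bits in FORMATS.items():
        size = weight_memory_gb(n_params, bits)
        verdict = "fits" if size < VRAM_GB else "does not fit"
        print(f"{model} {fmt}: ~{size:.1f} GB -> {verdict} in {VRAM_GB:.0f} GB")
```

With these numbers, both Llama3 8B variants fit on the card, while Llama3 70B does not fit in either format.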

Performance Analysis: Model and Device Comparison

Now that we have a clear picture of the RTX 4000 Ada 20GB's performance with Llama3 8B, let's put the numbers side by side.

Note: The table below lists token generation speeds by model, quantization, and device. Data is available only for the Llama3 8B model on this card.

| Model | Quantization | Device | Tokens/Second |
|---|---|---|---|
| Llama3 8B | Q4_K_M | RTX 4000 Ada 20GB | 58.59 |
| Llama3 8B | F16 | RTX 4000 Ada 20GB | 20.85 |

What can we learn from this comparison?

- The RTX 4000 Ada 20GB is a good choice for smaller models: It performs admirably with Llama3 8B, especially when using Q4_K_M quantization.

- Larger models require more memory: For larger models like Llama3 70B, you need a GPU with more VRAM, or a technique like model parallelism to split the model across multiple GPUs.

Practical Recommendations: Use Cases and Workarounds

Use Cases: Where the RTX 4000 Ada 20GB Excels

- Small-to-medium sized LLMs: The RTX 4000 Ada 20GB is well-suited for running small-to-medium sized LLMs like Llama3 8B, especially for applications like:

  - Text generation: Creating stories, articles, code, and more.

  - Summarization: Condensing large amounts of text into concise summaries.

  - Translation: Translating text between languages.

  - Question answering: Providing answers to user queries based on a given text.

Workarounds: Dealing with Larger Models

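- Model Quantization: Use a more aggressive quantization to reduce the memory footprint of your model. Q4_K_M, as we saw, is very effective for Llama3 8B. For Llama3 70B, however, even 4-bit weights exceed 20 GB, so quantization alone is not enough on this card.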

- Model Parallelism: Split the model across multiple GPUs to distribute the computational load.

- Cloud-based services: Consider using cloud-based services that offer access to powerful GPUs and pre-trained models.
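For the model parallelism option, a rough way to size a setup is to divide the weight footprint by the usable VRAM per card. The sketch below does that for Llama3 70B on 20 GB cards. It is an estimate under stated assumptions, not a measured result: the function name is hypothetical, 4.8 bits per weight is a rough average for Q4_K_M, and the 90% usable fraction is an arbitrary allowance for the KV cache and runtime overhead.

```python
import math


def gpus_needed(weights_gb: float, vram_gb: float, usable_fraction: float = 0.9) -> int:
    """Minimum number of identical GPUs whose usable VRAM can hold the weights.

    `usable_fraction` is an assumed allowance for the KV cache and overhead.
    """
    return math.ceil(weights_gb / (vram_gb * usable_fraction))


N_PARAMS = 70e9  # Assumption: nominal Llama3 70B parameter count.
VRAM_GB = 20.0   # RTX 4000 Ada 20GB

# Assumption: ~4.8 bits/weight is a rough average for Q4_K_M.
for fmt, bits in {"F16": 16.0, "Q4_K_M": 4.8}.items():
    weights_gb = N_PARAMS * bits / 8 / 1e9
    count = gpus_needed(weights_gb, VRAM_GB)
    print(f"Llama3 70B {fmt}: ~{weights_gb:.0f} GB of weights -> at least {count} GPUs")
```

This only counts memory. Splitting a model across cards also adds communication overhead, so throughput will not scale linearly with the number of GPUs.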

FAQ: Frequently Asked Questions

Q: What does "Q4_K_M quantization" mean?

A: Quantization is a way of reducing the size of a model while maintaining reasonable accuracy. Q4_K_M is one of the k-quant formats used by llama.cpp: "Q4" means roughly 4 bits per weight, "K" refers to the k-quant scheme, which stores weights in blocks with shared scale factors, and "M" is the medium variant, which keeps some tensors at higher precision. This significantly reduces the memory footprint, allowing you to fit larger models on hardware with less memory.

Q: What's the difference between F16 and Q4_K_M quantization?

A: F16 uses 16-bit floating-point numbers for the model weights, while Q4_K_M uses roughly 4-bit integers plus per-block scale factors. F16 is more precise but takes up more memory, while Q4_K_M sacrifices some precision for a smaller size and faster token generation.

Q: How do I choose the right LLM for my needs?

A: The choice of an LLM depends on your specific use case and available hardware resources. Consider factors like model size, accuracy, training data, and your computational budget.

Q: Why is running Llama3 70B on the RTX 4000 Ada 20GB challenging?

A: Llama3 70B requires a lot of memory. The card's 20 GB of VRAM is not enough to hold the model's weights, even at 4-bit precision, which leads to out-of-memory errors or forces part of the model into much slower system memory.

Q: What other graphics cards can run Llama3 70B efficiently?

A: GPUs with more VRAM, such as the NVIDIA RTX 4090 or AMD Radeon RX 7900 XTX (24 GB each), get closer, but a single 24 GB card still cannot hold a 4-bit Llama3 70B entirely in VRAM. Even with these powerful cards, you will likely need model parallelism across multiple GPUs to run the model efficiently.

Keywords

Large Language Model, LLM, Llama3, Llama3 70B, Llama3 8B, NVIDIA RTX 4000 Ada 20GB, GPU, Graphics Card, VRAM, Token Generation Speed, Quantization, Q4_K_M, F16, Model Parallelism, Cloud-based Services, Text Generation, Summarization, Translation, Question Answering, Performance Analysis, Benchmark, Inference, AI, Deep Learning, Natural Language Processing, NLP