How Fast Can NVIDIA L40S 48GB Run Llama3 8B?

Introduction

The world of Large Language Models (LLMs) is evolving rapidly, with new models and architectures emerging constantly. But for developers, one key question remains: how fast can these models run on different devices? This is especially crucial for local deployment, where you want to leverage the power of LLMs without relying on cloud services. In this article, we'll look at the performance of the NVIDIA L40S 48GB with the popular Llama3 8B model, comparing its token generation speed at two precision levels (Q4_K_M and F16) and offering insights into its practical applications.

Performance Analysis: Token Generation Speed Benchmarks

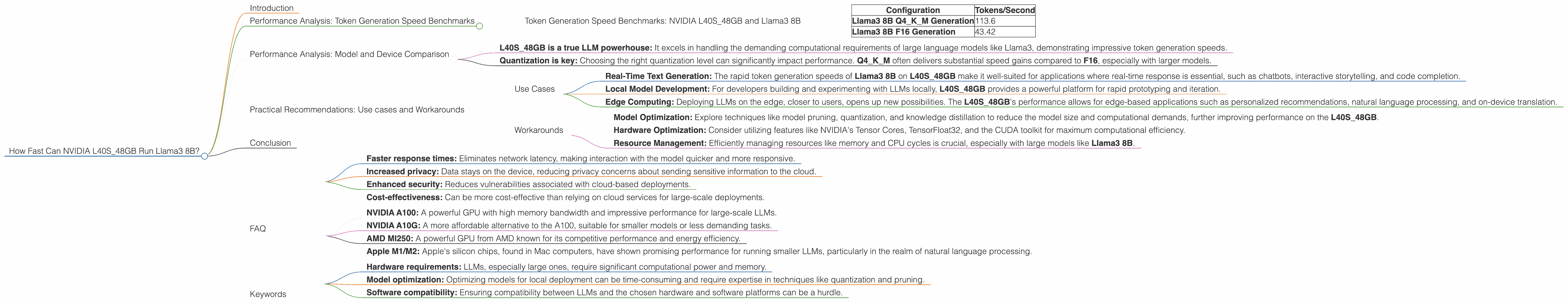

Token Generation Speed Benchmarks: NVIDIA L40S 48GB and Llama3 8B

The NVIDIA L40S is a data center GPU equipped with 48GB of GDDR6 memory. But how does it hold up when tasked with running the Llama3 8B model? Let's take a look at the token generation speeds, which determine how quickly the model can produce output text once the prompt has been processed.

| Configuration | Tokens/Second |
|---|---|
| Llama3 8B Q4_K_M Generation | 113.6 |
| Llama3 8B F16 Generation | 43.42 |

Key Observations:

- Quantization Level: The Llama3 8B model shows a significant performance difference depending on precision. Q4_K_M (a llama.cpp format that stores weights at roughly 4 to 5 bits each, while activations stay in higher precision) generates tokens about 2.6 times faster than F16 (unquantized half-precision floating point).

- Practical Implications: The results highlight the importance of choosing the right precision for your use case. F16 preserves the model's original accuracy, while Q4_K_M trades a small amount of accuracy for speed and a much smaller memory footprint, which makes it the better option for applications with real-time requirements.
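
To make the gap concrete, the short sketch below works out the speedup and the per-token latency from the two figures in the table. The 256-token reply length is an arbitrary illustrative value, not something measured here, and the calculation ignores prompt processing time.

```python
# Measured generation speeds from the table above (tokens per second).
SPEEDS = {"Q4_K_M": 113.6, "F16": 43.42}


def speedup(fast: float, slow: float) -> float:
    """Ratio between two throughput figures."""
    return fast / slow


def seconds_for(tokens: int, tokens_per_second: float) -> float:
    """Time to generate `tokens` tokens at a steady rate (prompt processing excluded)."""
    return tokens / tokens_per_second


if __name__ == "__main__":
    ratio = speedup(SPEEDS["Q4_K_M"], SPEEDS["F16"])
    print(f"Q4_K_M vs F16 speedup: {ratio:.2f}x")
    for name, tps in SPEEDS.items():
        # 256 tokens is an assumed reply length, chosen only for illustration.
        print(f"{name}: {1000 / tps:.1f} ms/token, "
              f"{seconds_for(256, tps):.1f} s for a 256-token reply")
```

At these rates a 256-token reply takes a little over two seconds with Q4_K_M and close to six seconds with F16, which is the difference a user would actually notice in a chat interface.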

Analogy: Imagine you're building a car. You can choose between a large, heavy engine that delivers every bit of rated power (F16) or a smaller, lighter engine that gives up a little power for efficiency (Q4_K_M). Both get you to your destination, but the lighter car has less weight to move and often gets there sooner on less fuel.

Performance Analysis: Model and Device Comparison

While we've focused on the L40S 48GB with Llama3 8B, it's helpful to understand how this performance stacks up against other device-model combinations.

Key Findings:

- The L40S 48GB handles Llama3 8B comfortably: At 113.6 tokens/second with Q4_K_M and 43.42 tokens/second with F16, generation is well above typical reading speed in both configurations.

- Quantization is key: Choosing the right quantization level can significantly impact performance. Here Q4_K_M is about 2.6 times faster than F16. Larger models would be expected to benefit as well, but no 70B F16 result is available to confirm it on this GPU.
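
One plausible explanation for the quantization gain is that token generation tends to be limited by memory bandwidth: each new token requires reading roughly all of the model's weights once. The sketch below estimates that ceiling. It rests on assumptions that are not in the benchmark data: a peak bandwidth of 864 GB/s (NVIDIA's published figure for the L40S) and approximate weight sizes of 16.1 GB for F16 and 4.9 GB for Q4_K_M.

```python
# Assumption: peak memory bandwidth of the L40S from NVIDIA's spec sheet (GB/s).
L40S_BANDWIDTH_GB_S = 864.0

# Assumption: approximate on-disk weight sizes for Llama3 8B (GB).
MODEL_SIZE_GB = {"F16": 16.1, "Q4_K_M": 4.9}

# Measured generation speeds from the benchmark table (tokens per second).
MEASURED_TPS = {"F16": 43.42, "Q4_K_M": 113.6}


def decode_ceiling(model_gb: float, bandwidth_gb_s: float = L40S_BANDWIDTH_GB_S) -> float:
    """Upper bound on tokens/s if every token reads all weights exactly once."""
    return bandwidth_gb_s / model_gb


def efficiency(measured_tps: float, model_gb: float) -> float:
    """Fraction of the bandwidth ceiling that the measured speed reaches."""
    return measured_tps / decode_ceiling(model_gb)


if __name__ == "__main__":
    for name, size_gb in MODEL_SIZE_GB.items():
        ceiling = decode_ceiling(size_gb)
        share = efficiency(MEASURED_TPS[name], size_gb)
        print(f"{name}: ceiling ~{ceiling:.0f} tok/s, "
              f"measured {MEASURED_TPS[name]} tok/s ({share:.0%} of ceiling)")
```

Under these assumptions both measured figures sit below their ceilings, with F16 closer to its limit than Q4_K_M. That is consistent with a smaller model moving fewer bytes per token while spending relatively more time on dequantization and other overheads, though the numbers here cannot confirm the cause.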

Note: There are no available benchmark results for the Llama3 70B F16 Generation, Llama3 70B F16 Processing, Llama3 8B F16 Processing, and Llama3 70B Q4_K_M Processing configurations.

Practical Recommendations: Use cases and Workarounds

Use Cases

- Real-Time Text Generation: The token generation speeds of Llama3 8B on the L40S 48GB make it well-suited for applications where real-time response is essential, such as chatbots, interactive storytelling, and code completion.

- Local Model Development: For developers building and experimenting with LLMs locally, the L40S 48GB provides a powerful platform for rapid prototyping and iteration.

- Edge Computing: Deploying LLMs on edge servers, closer to users, opens up new possibilities. The L40S is a data center card rather than an embedded device, so this means on-premises or edge-server deployments for tasks such as personalized recommendations, natural language processing, and low-latency translation.

Workarounds

- Model Optimization: Explore techniques like model pruning, quantization, and knowledge distillation to reduce the model size and computational demands, further improving performance on the L40S 48GB.

- Hardware Optimization: Make sure your inference stack uses the GPU's Tensor Cores and reduced-precision modes such as TensorFloat-32 (TF32) and FP16, and keep the CUDA toolkit and drivers up to date.

- Resource Management: Efficiently managing resources like memory and CPU cycles is crucial, especially with large models like Llama3 8B.
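
For memory planning, a rough estimate of the weight footprint is often enough to tell whether a configuration fits. The sketch below assumes about 8.03 billion parameters for Llama3 8B and an average of about 4.9 bits per weight for Q4_K_M; both are approximations, and the 80% headroom factor is a hypothetical rule of thumb that leaves room for the KV cache and activations, which this estimate does not count.

```python
GIB = 1024 ** 3

# Assumption: approximate parameter count of Llama3 8B.
LLAMA3_8B_PARAMS = 8.03e9

# Assumption: average bits per weight; Q4_K_M mixes block formats, so ~4.9 is approximate.
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.9}


def weight_footprint_gib(n_params: float, bits_per_weight: float) -> float:
    """Memory needed for the weights alone, in GiB (no KV cache, no activations)."""
    return n_params * bits_per_weight / 8 / GIB


def fits(footprint_gib: float, vram_gib: float = 48.0, headroom: float = 0.8) -> bool:
    """True if the weights use at most `headroom` of VRAM (hypothetical rule of thumb)."""
    return footprint_gib <= vram_gib * headroom


if __name__ == "__main__":
    for name, bits in BITS_PER_WEIGHT.items():
        size = weight_footprint_gib(LLAMA3_8B_PARAMS, bits)
        print(f"{name}: ~{size:.1f} GiB of weights, fits in 48 GiB: {fits(size)}")
```

Both configurations fit with plenty of room to spare under these assumptions, which is why the choice between them on this GPU is mainly about speed and accuracy rather than capacity.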

Conclusion

The NVIDIA L40S 48GB pairs well with the Llama3 8B model, reaching 113.6 tokens/second with Q4_K_M and 43.42 tokens/second with F16. Quantization plays a crucial role in optimizing performance, with Q4_K_M proving to be a winning strategy for many applications. The combination opens up practical options for developers looking to run LLMs on local hardware across a wide range of use cases. As the field of LLMs continues to evolve, the importance of understanding device performance will only increase. Matching the model's precision to the hardware and the application is the most direct way to get the best out of both.

FAQ

Q: What is quantization?

A: Quantization is a technique used to reduce the size and computational demands of LLMs. It converts a model's weights, and in some schemes its activations, from floating-point numbers to lower-precision representations such as 8-bit or 4-bit integers. The result is a smaller model and, because less data moves through memory for each token, usually faster inference, at the cost of a small loss in accuracy.
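
As a rough illustration of the idea, here is a minimal sketch of symmetric 4-bit quantization applied to one small block of weights. It is a deliberately simplified scheme with a single scale per block, not the actual Q4_K_M format, and the weight values are made up for the example.

```python
def quantize_block(weights: list[float], bits: int = 4) -> tuple[list[int], float]:
    """Map floats to signed integers in [-qmax, qmax] using one shared scale."""
    qmax = 2 ** (bits - 1) - 1  # 7 for signed 4-bit values
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Round to the nearest integer step and clamp to the representable range.
    quantized = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize_block(quantized: list[int], scale: float) -> list[float]:
    """Recover approximate float weights from the integers and the scale."""
    return [q * scale for q in quantized]


if __name__ == "__main__":
    # Made-up weights, for illustration only.
    block = [0.42, -1.30, 0.07, 0.88, -0.51, 0.0, 1.12, -0.94]
    q, scale = quantize_block(block)
    restored = dequantize_block(q, scale)
    worst = max(abs(a - b) for a, b in zip(block, restored))
    print("integers:", q)
    print(f"scale: {scale:.4f}, worst-case error: {worst:.4f}")
```

Each weight is stored as a small integer plus a shared scale, so the rounding error per weight is at most half a step. Production formats refine this with smaller blocks, extra offsets, and mixed bit widths to keep that error low.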

Q: What are the benefits of running LLMs locally?

A: Running LLMs locally offers several benefits, including:

- Faster response times: Eliminates network latency, making interaction with the model quicker and more responsive.

- Increased privacy: Data stays on the device, reducing privacy concerns about sending sensitive information to the cloud.

- Enhanced security: Reduces vulnerabilities associated with cloud-based deployments.

- Cost-effectiveness: Can be more cost-effective than relying on cloud services for large-scale deployments.

Q: What are some other devices suitable for running LLMs locally?

A: Other devices commonly used for local LLM deployment include:

- NVIDIA A100: A powerful GPU with high memory bandwidth and impressive performance for large-scale LLMs.

- NVIDIA A10G: A more affordable alternative to the A100, suitable for smaller models or less demanding tasks.

- AMD MI250: A powerful GPU from AMD known for its competitive performance and energy efficiency.

- Apple M1/M2: Apple's silicon chips, found in Mac computers, have shown promising performance for running smaller LLMs, particularly in the realm of natural language processing.

Q: What are the challenges of running LLMs locally?

A: There are several challenges associated with local LLM deployment, including:

- Hardware requirements: LLMs, especially large ones, require significant computational power and memory.

- Model optimization: Optimizing models for local deployment can be time-consuming and require expertise in techniques like quantization and pruning.

- Software compatibility: Ensuring compatibility between LLMs and the chosen hardware and software platforms can be a hurdle.

Keywords

Large Language Models, LLMs, NVIDIA L40S 48GB, Llama3 8B, Quantization, F16, Q4_K_M, Token Generation Speed, Inference, Local Deployment, Practical Recommendations, Use Cases, Workarounds, Performance, Speed, Memory, GPU, Edge Computing, Real-Time Text Generation, Model Optimization, Hardware Optimization, Resource Management.