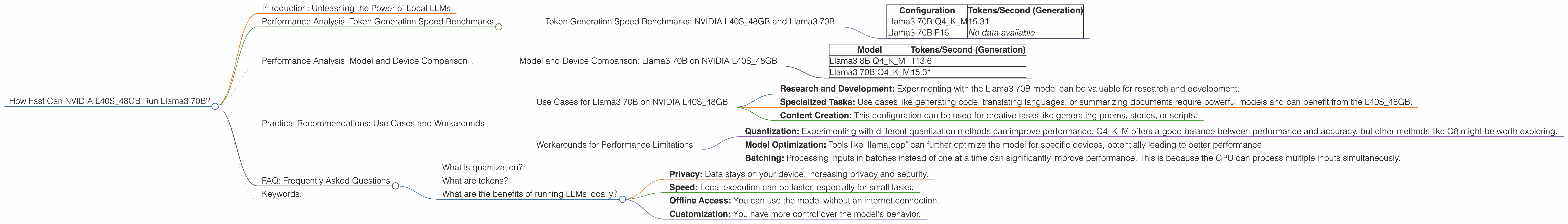

How Fast Can NVIDIA L40S 48GB Run Llama3 70B?

Introduction: Unleashing the Power of Local LLMs

The world of Large Language Models (LLMs) is rapidly evolving, with new models and advancements appearing almost daily. These LLMs promise to transform how we interact with technology, from writing emails and generating code to composing creative text formats like poems and screenplays. However, running these models locally can be a challenge, requiring powerful hardware and efficient software.

This article dives deep into the performance of the NVIDIA L40S_48GB GPU, a powerhouse in the world of high-performance computing, when running the impressive Llama3 70B model. We'll be focusing on its token generation speed, comparing different configurations and exploring practical use cases. Buckle up, geeks, it's going to be a fascinating journey!

Performance Analysis: Token Generation Speed Benchmarks

Token Generation Speed Benchmarks: NVIDIA L40S_48GB and Llama3 70B

Let's get down to brass tacks. Token generation speed is crucial for a smooth and efficient LLM experience. This is how fast a model can generate text, measured in tokens per second. The higher the number, the faster the model runs.

We'll analyze performance under two different quantization settings:

- Q4_K_M: A 4-bit quantization format from llama.cpp. It stores the model's weights at roughly 4 bits each (activations are not stored in this format), which makes the model much smaller and usually faster to run, at a small cost in accuracy.

- F16: Half-precision floating point (16-bit) weights. This is not really quantization but the unquantized baseline: it preserves the original accuracy and needs far more memory.
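To get a feel for what these two settings mean in memory terms, here is a rough back-of-the-envelope sketch. It assumes a nominal 70 billion parameters and about 4.85 bits per weight on average for Q4_K_M; both figures are approximations rather than measurements from this benchmark, and the estimate ignores the KV cache and runtime overhead.

```python
def model_size_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9

VRAM_GB = 48      # memory on the L40S_48GB
N_PARAMS = 70e9   # assumption: nominal parameter count for Llama3 70B

# Assumption: ~4.85 bits/weight is an approximate average for Q4_K_M.
for name, bits in [("Q4_K_M", 4.85), ("F16", 16.0)]:
    size = model_size_gb(N_PARAMS, bits)
    verdict = "fits" if size < VRAM_GB else "does not fit"
    print(f"{name}: ~{size:.1f} GB of weights, {verdict} in {VRAM_GB} GB")
```

Under these assumptions the 4-bit weights squeeze into a single card, while the 16-bit weights need roughly three times the available memory.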

| Configuration | Tokens/Second (Generation) |
|---|---|
| Llama3 70B Q4_K_M | 15.31 |
| Llama3 70B F16 | No data available |

Observations:

- The L40S_48GB GPU generates 15.31 tokens per second with the Llama3 70B model using Q4_K_M quantization. This is respectable performance for such a large model. No F16 result is available; at 16 bits per weight, a 70B model needs about 140 GB for its weights alone, far more than the card's 48 GB.
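What does 15.31 tokens per second feel like in practice? A quick sketch, using the measured figure from the table and some hypothetical response lengths chosen only for illustration:

```python
def generation_seconds(n_tokens: int, tokens_per_second: float) -> float:
    """Time to generate n_tokens at a steady generation rate."""
    return n_tokens / tokens_per_second

MEASURED_TPS = 15.31  # Llama3 70B Q4_K_M on the L40S_48GB (from the table)

# Hypothetical response lengths, picked only as examples.
for n_tokens in (100, 500, 2000):
    secs = generation_seconds(n_tokens, MEASURED_TPS)
    print(f"{n_tokens:>5} tokens: about {secs:.0f} s")
```

This counts generation time only; it does not include the time spent processing the prompt.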

Analogies:

Imagine you're trying to write a novel. Each token is roughly a word or a piece of a word, and the token generation speed is how fast you can write. Using Q4_K_M is like writing in compact shorthand: quicker, with a little detail lost. Using F16 would be like writing everything out in longhand: more faithful, but slower and bulkier.

Performance Analysis: Model and Device Comparison

Model and Device Comparison: Llama3 70B on NVIDIA L40S_48GB

While the L40S_48GB delivers reasonable performance for the Llama3 70B model, it's important to compare it against other models and devices to gain a better perspective.

Unfortunately, we don't have data for Llama3 70B in F16 on the L40S_48GB, but we can compare its Q4_K_M performance with that of the smaller Llama3 8B model.

| Model | Tokens/Second (Generation) |
|---|---|
| Llama3 8B Q4_K_M | 113.6 |
| Llama3 70B Q4_K_M | 15.31 |

Observations:

- The Llama3 8B model generates tokens about 7.4 times faster than the Llama3 70B model on the L40S_48GB (113.6 vs. 15.31 tokens per second). This is expected, as the smaller model has far fewer parameters to read and process for each token.
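To put numbers on that gap, the sketch below compares the speed ratio with the size ratio. The speeds come from the table; the parameter counts (8 and 70 billion) are the nominal sizes implied by the model names, used here as an assumption.

```python
# Measured generation speeds from the table (tokens per second).
speeds = {"Llama3 8B": 113.6, "Llama3 70B": 15.31}

# Assumption: nominal parameter counts in billions, taken from the model names.
params_billion = {"Llama3 8B": 8, "Llama3 70B": 70}

speed_ratio = speeds["Llama3 8B"] / speeds["Llama3 70B"]
size_ratio = params_billion["Llama3 70B"] / params_billion["Llama3 8B"]

print(f"8B is {speed_ratio:.1f}x faster; 70B has {size_ratio:.2f}x the parameters")
```

The slowdown is in the same ballpark as the growth in model size, though the two ratios are not identical.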

Key takeaway:

Generation speed falls as model size grows. Larger models generally produce better output, so "bigger is better" holds for quality, but that quality comes at the cost of speed.

Practical Recommendations: Use Cases and Workarounds

Use Cases for Llama3 70B on NVIDIA L40S_48GB

Despite the lower token generation speed compared to the Llama3 8B model, the L40S_48GB can be used effectively for various use cases with Llama3 70B:

- Research and Development: Experimenting with the Llama3 70B model can be valuable for research and development.

- Specialized Tasks: Use cases like generating code, translating languages, or summarizing documents require powerful models and can benefit from the L40S_48GB.

- Content Creation: This configuration can be used for creative tasks like generating poems, stories, or scripts.

Workarounds for Performance Limitations

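- Quantization: Experimenting with different quantization methods can shift the trade-off between speed and accuracy. Q4_K_M offers a good balance. Lower-bit variants are smaller and may run faster at some cost in quality. Higher-precision variants such as Q8 improve accuracy, but an 8-bit 70B model needs at least 70 GB for its weights, so it will not fit in 48 GB on a single card.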

- Model Optimization: Tools like "llama.cpp" can further optimize the model for specific devices, potentially leading to better performance.

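- Batching: Processing several inputs in a batch instead of one at a time can significantly improve total throughput, because the GPU reads the model weights once per step and applies them to every input in the batch. Note that this raises the combined tokens per second across requests; it does not make a single response arrive faster.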
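One way to see why these workarounds matter is a rough memory-bandwidth estimate. When generating one token at a time, the GPU has to read essentially all of the model's weights for every token, so bandwidth divided by model size gives a loose ceiling on speed. The sketch below assumes about 864 GB/s of memory bandwidth for the L40S and about 42.4 GB of Q4_K_M weights; both are approximations introduced here, not values from the benchmark.

```python
def decode_ceiling_tps(bandwidth_gb_s: float, weights_gb: float) -> float:
    """Loose upper bound on single-stream tokens/second when every
    generated token requires reading all weights from GPU memory once."""
    return bandwidth_gb_s / weights_gb

BANDWIDTH_GB_S = 864.0  # assumption: approximate L40S memory bandwidth
WEIGHTS_GB = 42.4       # assumption: approximate Llama3 70B Q4_K_M weight size
MEASURED_TPS = 15.31    # from the benchmark table

ceiling = decode_ceiling_tps(BANDWIDTH_GB_S, WEIGHTS_GB)
print(f"ceiling ~{ceiling:.1f} tok/s, measured {MEASURED_TPS} tok/s "
      f"({MEASURED_TPS / ceiling:.0%} of ceiling)")
```

If this simplified model is roughly right, the measured speed is already fairly close to what the memory system allows for a single stream. That is why shrinking the weights (quantization) or sharing each weight read across several requests (batching) are the levers most likely to help.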

FAQ: Frequently Asked Questions

What is quantization?

Quantization is a technique used to reduce the size of a model's weights and activations. It involves converting the original floating-point numbers to smaller representations. This helps improve the performance of models on devices with limited memory and resources.
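To make the idea concrete, here is a toy sketch of symmetric 4-bit quantization on a small block of made-up weights. It is a deliberately simplified illustration and is not the actual Q4_K_M scheme, which uses a more elaborate block layout; the function names and sample values are hypothetical.

```python
def quantize_block(values, bits=4):
    """Map floats to small signed integers that share one scale factor."""
    qmax = 2 ** (bits - 1) - 1                       # 7 for 4 bits
    scale = max(abs(v) for v in values) / qmax or 1.0  # avoid a zero scale
    q = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return q, scale

def dequantize_block(q, scale):
    """Recover approximate floats from the integers and the scale."""
    return [x * scale for x in q]

weights = [0.12, -0.53, 0.98, -0.31]   # made-up example values
q, scale = quantize_block(weights)
restored = dequantize_block(q, scale)

print("integers:", q)
print("restored:", [round(r, 3) for r in restored])
print("max error:", max(abs(a - b) for a, b in zip(weights, restored)))
```

Each weight now needs only 4 bits plus a shared scale per block, and the restored values are close to, but not exactly, the originals. That small error is the accuracy cost of quantization.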

What are tokens?

Tokens are the basic units of text in LLMs. They can be words, punctuation marks, or even parts of words. The model processes and generates text by working with these tokens.

What are the benefits of running LLMs locally?

Running LLMs locally offers several advantages:

- Privacy: Data stays on your device, increasing privacy and security.

- Speed: Local execution avoids network round trips, which can make it feel faster, especially for small tasks.

- Offline Access: You can use the model without an internet connection.

- Customization: You have more control over the model's behavior.

Keywords:

NVIDIA L40S_48GB, Llama3 70B, LLM, token generation speed, performance, quantization, Q4_K_M, F16, GPU, local models, use cases, workarounds, batching, model optimization.