How Fast Can NVIDIA A40 48GB Run Llama3 8B?

Introduction

The world of large language models (LLMs) is moving fast, and with the advent of powerful new models like Llama 3, the need for efficient and powerful hardware to run them locally is becoming increasingly important. If you're a developer or geek who wants to experiment with these models and explore their capabilities, you're likely wondering: How fast can I run these models on my hardware?

This article will dive deep into the performance of the NVIDIA A40_48GB GPU when running the Llama3 8B model. We'll explore token generation speed, compare performance with different quantization levels, and provide practical recommendations for use cases. Get ready for some geeky insights and numbers!

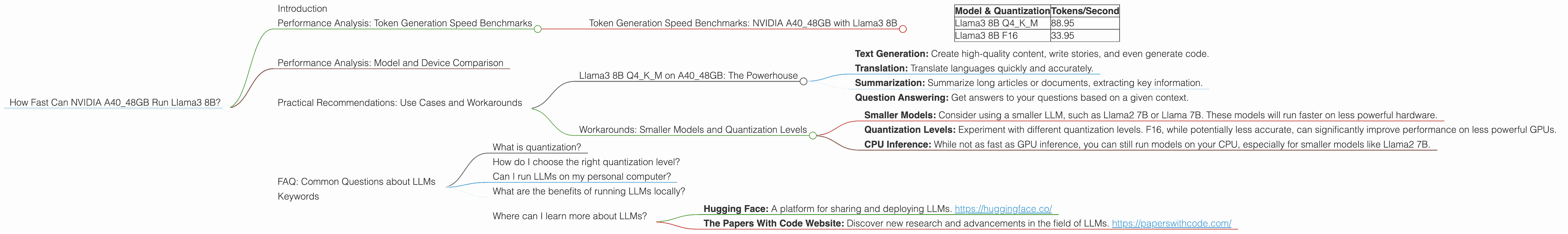

Performance Analysis: Token Generation Speed Benchmarks

Token Generation Speed Benchmarks: NVIDIA A40_48GB with Llama3 8B

Let's start with the most important metric: token generation speed. This tells us how many tokens (words or parts of words) the model can generate per second. The higher the number, the faster the model can write text, translate languages, or perform other tasks.
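To make the metric concrete, here is a minimal sketch of how tokens per second can be measured: count the tokens a generator yields and divide by the elapsed wall-clock time. The `fake_generate` function is a hypothetical stand-in for a real model's streaming output, and the `clock` parameter is only there so the timing can be swapped out in tests; neither comes from the benchmark behind this article.

```python
import time
from typing import Callable, Iterable


def tokens_per_second(
    generate: Callable[[], Iterable[str]],
    clock: Callable[[], float] = time.perf_counter,
) -> float:
    """Return the number of generated tokens divided by elapsed seconds."""
    start = clock()
    count = sum(1 for _ in generate())  # consume the stream, counting tokens
    elapsed = clock() - start
    if elapsed <= 0:
        raise ValueError("elapsed time must be positive")
    return count / elapsed


def fake_generate() -> Iterable[str]:
    # Hypothetical stand-in for a model: yields one token every 10 ms.
    for token in ["Hello", ",", " world", "!"]:
        time.sleep(0.01)
        yield token


if __name__ == "__main__":
    print(f"{tokens_per_second(fake_generate):.1f} tokens/s")
```

A real benchmark would usually also report prompt processing separately from generation and average over several runs.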

| Model & Quantization | Tokens/Second |
|---|---|
| Llama3 8B Q4_K_M | 88.95 |
| Llama3 8B F16 | 33.95 |

What does this mean?

- Q4_K_M: A 4-bit quantization level that balances accuracy and speed. It's a popular choice for developers, especially when dealing with larger models.

- F16: 16-bit half-precision weights. This format preserves more of the model's accuracy than Q4_K_M, but the weights take up far more memory and, in this benchmark, it generates tokens more slowly.

Key Takeaway: The NVIDIA A40_48GB GPU delivers impressive performance, generating almost 90 tokens per second with the Llama3 8B model using Q4_K_M quantization. That is about 2.6 times faster than running the same model in F16.
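The gap is easier to judge as arithmetic. The short sketch below takes the two throughput figures from the table and computes the speedup, plus how long a response would take at each rate. The 500-token response length is an arbitrary value chosen for illustration.

```python
# Throughput figures from the benchmark table, in tokens per second.
Q4_K_M_TPS = 88.95
F16_TPS = 33.95


def seconds_to_generate(n_tokens: int, tokens_per_second: float) -> float:
    """Time needed to generate n_tokens at a steady rate."""
    return n_tokens / tokens_per_second


speedup = Q4_K_M_TPS / F16_TPS
response_tokens = 500  # illustrative response length, not a measured value

print(f"Q4_K_M is {speedup:.2f}x faster than F16")
print(f"Q4_K_M: {seconds_to_generate(response_tokens, Q4_K_M_TPS):.1f} s")
print(f"F16:    {seconds_to_generate(response_tokens, F16_TPS):.1f} s")
```

For a 500-token answer this works out to roughly 5.6 seconds with Q4_K_M versus about 14.7 seconds with F16.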

Performance Analysis: Model and Device Comparison

Now let's put this performance in context by comparing it to other models and devices.

Unfortunately, we don't have data for other devices or for running the Llama3 8B model with other quantization levels. However, you can find comparisons for other models such as Llama2 7B and Llama 7B, and for other GPUs like the A100, through the resources linked at the end of this article.

A quick analogy: If you imagine a race, the only two runners we have timed are Llama3 8B Q4_K_M and Llama3 8B F16 on the A40_48GB, and Q4_K_M wins comfortably. How it would place against other GPUs and other models is something this data can't tell us.

Practical Recommendations: Use Cases and Workarounds

Now that we've explored the performance, let's discuss some practical recommendations and use cases.

Llama3 8B Q4_K_M on A40_48GB: The Powerhouse

The A40_48GB and Llama3 8B Q4_K_M combination is a powerhouse for a variety of use cases, including:

- Text Generation: Create high-quality content, write stories, and even generate code.

- Translation: Translate languages quickly and accurately.

- Summarization: Summarize long articles or documents, extracting key information.

- Question Answering: Get answers to your questions based on a given context.

Workarounds: Smaller Models and Quantization Levels

If you don't have access to a powerful GPU like the A40_48GB, you have some options:

- Smaller Models: Consider using a smaller LLM, such as Llama2 7B or Llama 7B. With fewer parameters, these models need somewhat less memory and compute, which helps on less powerful hardware.

- Quantization Levels: Experiment with different quantization levels. Lower-bit formats such as Q4_K_M, while potentially less accurate than F16, shrink the model and can significantly improve performance on less powerful GPUs.

- CPU Inference: While not as fast as GPU inference, you can still run models on your CPU, especially for smaller models like Llama2 7B.

Key Takeaway: The A40_48GB with Llama3 8B Q4_K_M provides excellent performance, but there are options available if you're working with limited hardware resources.

FAQ: Common Questions about LLMs

What is quantization?

Quantization is a technique used to reduce the size of LLMs. It involves converting the model's weights (the parameters that determine its behavior) from high-precision floating-point numbers to lower precision integer values. This reduces the amount of memory required to store the model, making it possible to run larger models on less powerful hardware.
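As a rough illustration of the idea, the sketch below quantizes a handful of made-up weights to 8-bit integers using a single shared scale, then converts them back to see how much precision was lost. This is a deliberately simple symmetric scheme chosen for clarity; formats such as Q4_K_M use more elaborate block-wise schemes, so treat this as a teaching sketch rather than what any real format does.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Map floats to integers in [-127, 127] using one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    return [round(w / scale) for w in weights], scale


def dequantize(quantized: List[int], scale: float) -> List[float]:
    """Recover approximate float weights from the integers."""
    return [q * scale for q in quantized]


# Made-up example weights, not taken from any real model.
weights = [0.12, -0.5, 0.33, 0.0, 0.49]
quantized, scale = quantize_int8(weights)
restored = dequantize(quantized, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("integers:", quantized)
print("max round-trip error:", max_error)
```

Each weight now fits in one byte instead of two or four, and the round-trip error stays within about half of one quantization step.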

How do I choose the right quantization level?

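The choice depends on the trade-off between accuracy, speed, and memory. Q4_K_M offers a balance between the three. F16 prioritizes accuracy but needs the most memory and was the slower option in our benchmark. An 8-bit format such as Q8_0 sits in between: it stays closer to F16 accuracy, at roughly twice the memory of a 4-bit format.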

Can I run LLMs on my personal computer?

It's possible to run smaller LLMs on a personal computer with a powerful CPU or GPU. But running larger models, especially in higher-precision formats such as F16, might require more powerful hardware with more memory.
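A quick way to check whether a model might fit on your hardware is to estimate the size of its weights: parameter count times bits per weight, divided by eight. The sketch below does this for an 8B model. The parameter count takes "8B" at face value, and the 4.5 bits per weight used for a 4-bit format is an assumed average that allows for per-block scaling data; real files may differ, and the estimate ignores the memory needed for context and activations.

```python
def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate weight storage in GB (10^9 bytes); excludes context memory."""
    return n_params * bits_per_weight / 8 / 1e9


N_PARAMS = 8e9        # "8B" taken at face value (assumption)
F16_BITS = 16.0       # half precision: 16 bits per weight
Q4_BITS = 4.5         # assumed average for a 4-bit format with scaling overhead

f16_gb = weight_memory_gb(N_PARAMS, F16_BITS)
q4_gb = weight_memory_gb(N_PARAMS, Q4_BITS)

print(f"F16 weights:   ~{f16_gb:.1f} GB")
print(f"4-bit weights: ~{q4_gb:.1f} GB")
```

Under these assumptions the F16 weights need about 16 GB and the 4-bit weights about 4.5 GB, which is why quantized models are the usual choice for consumer GPUs with limited memory.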

What are the benefits of running LLMs locally?

Running LLMs locally offers advantages like improved privacy, faster response times, and offline accessibility.

Where can I learn more about LLMs?

There are many excellent resources available online for learning about LLMs, including:

- Hugging Face: A platform for sharing and deploying LLMs. https://huggingface.co/

- The Papers With Code Website: Discover new research and advancements in the field of LLMs. https://paperswithcode.com/

Keywords

NVIDIA A40_48GB, Llama3 8B, Token Generation Speed, Quantization, Q4_K_M, F16, LLM, Large Language Model, Performance, Benchmarks, Practical Recommendations, Use Cases, Workarounds, GPU, CPU, Inference, Deep Dive, Developer, Geek, Local, Hardware, AI, Machine Learning, Text Generation, Translation, Summarization, Question Answering