How Fast Can NVIDIA A100 SXM 80GB Run Llama3 8B?

Introduction

The world of Large Language Models (LLMs) is exploding, with new models and applications emerging daily. Running these LLMs locally can be incredibly powerful: it avoids the network round trip to a hosted service, keeps your data on your own hardware, and lets you customize models for specific needs. However, it can also be challenging, with the computational demands of these models pushing even high-end hardware to its limits.

This article dives deep into the performance of the NVIDIA A100 SXM 80GB GPU, a popular choice for running LLMs locally, with a focus on the Llama3 8B model. We'll explore the token generation speeds achieved using various quantization methods and provide practical recommendations for developers who are keen to leverage this powerful combination.

Performance Analysis: Token Generation Speed Benchmarks

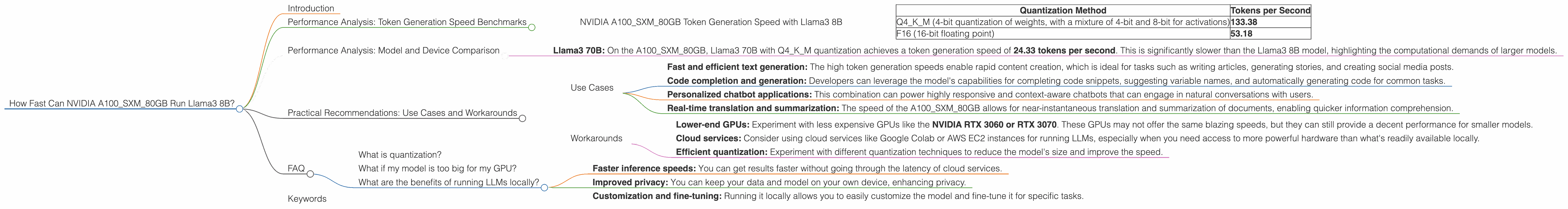

NVIDIA A100 SXM 80GB Token Generation Speed with Llama3 8B

Let's dive straight into the numbers! The NVIDIA A100 SXM 80GB GPU can generate tokens at a remarkable speed when running Llama3 8B. Here's a breakdown of the performance based on different quantization methods:

| Quantization Method | Tokens per Second |
|---|---|
| Q4_K_M (4-bit k-quant of the weights, with some tensors kept at higher precision) | 133.38 |
| F16 (16-bit floating point) | 53.18 |

Key takeaways:

- Q4_K_M reigns supreme: Q4_K_M generates tokens about 2.5 times faster than F16 (133.38 vs. 53.18 tokens per second). Quantized weights are smaller, so less data has to be read from GPU memory for each token, which is typically the bottleneck during generation. Note that this benchmark measures speed only, not how much accuracy is lost to quantization.

- Fast in absolute terms: The A100 SXM 80GB with Q4_K_M can generate over 133 tokens per second. To put this into perspective, a tweet-length text (up to 280 characters, generally under 100 tokens of English) takes less than a second to generate. That's blazing fast!
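The arithmetic behind these takeaways can be checked with a short sketch. It uses only the two throughput figures from the table; the function names are illustrative, and the calculation assumes a steady generation rate with no prompt-processing time.

```python
# Throughput figures (tokens per second) taken from the table above.
Q4_K_M_TPS = 133.38
F16_TPS = 53.18


def speedup(fast_tps: float, slow_tps: float) -> float:
    """How many times faster the first configuration generates tokens."""
    return fast_tps / slow_tps


def generation_time(num_tokens: int, tps: float) -> float:
    """Seconds needed to generate num_tokens at a steady rate of tps."""
    return num_tokens / tps


if __name__ == "__main__":
    print(f"4-bit vs F16 speedup: {speedup(Q4_K_M_TPS, F16_TPS):.2f}x")
    for name, tps in [("Q4_K_M", Q4_K_M_TPS), ("F16", F16_TPS)]:
        print(f"{name}: 100 tokens in {generation_time(100, tps):.2f} s")
```

With these numbers, 100 tokens take about 0.75 seconds with the 4-bit model and about 1.88 seconds with F16.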

Performance Analysis: Model and Device Comparison

While we're focusing on the A100 SXM 80GB and Llama3 8B, let's briefly touch upon how they compare to other devices and models.

Note: The following data is incomplete and only reflects performance data available for the specified configurations.

- Llama3 70B: On the A100 SXM 80GB, Llama3 70B with Q4_K_M quantization achieves a token generation speed of 24.33 tokens per second. This is roughly 5.5 times slower than Llama3 8B with the same quantization (133.38 tokens per second), highlighting the computational demands of larger models.

Why is this important? Understanding the performance differences between models and devices is crucial for making informed decisions when choosing the right combination for your specific use case. Do you need the speed and efficiency of a smaller model like Llama3 8B, or do you require the increased capabilities of a larger model even with slower speeds?
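One way to make this trade-off concrete is to check which configurations meet a latency budget. The sketch below uses the three throughput figures quoted in this article; the 300-token reply and 5-second budget are hypothetical requirements chosen for illustration, and prompt-processing time is ignored.

```python
# Throughput figures (tokens per second) quoted in this article
# for the A100 SXM 80GB.
THROUGHPUT_TPS = {
    "Llama3 8B Q4_K_M": 133.38,
    "Llama3 8B F16": 53.18,
    "Llama3 70B Q4_K_M": 24.33,
}


def configs_within_budget(response_tokens: int, budget_seconds: float) -> list[str]:
    """Return the configurations that can generate response_tokens in time."""
    return [
        name
        for name, tps in THROUGHPUT_TPS.items()
        if response_tokens / tps <= budget_seconds
    ]


if __name__ == "__main__":
    # Hypothetical requirement: a 300-token reply in at most 5 seconds.
    print(configs_within_budget(300, 5.0))
```

Under that hypothetical budget only the 4-bit 8B model qualifies; relaxing the budget to 15 seconds admits all three configurations.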

Practical Recommendations: Use Cases and Workarounds

Use Cases

The NVIDIA A100 SXM 80GB and Llama3 8B combination offers significant advantages for a variety of use cases, including:

- Fast and efficient text generation: The high token generation speeds enable rapid content creation, which is ideal for tasks such as writing articles, generating stories, and creating social media posts.

- Code completion and generation: Developers can leverage the model's capabilities for completing code snippets, suggesting variable names, and automatically generating code for common tasks.

- Personalized chatbot applications: This combination can power highly responsive and context-aware chatbots that can engage in natural conversations with users.

- Real-time translation and summarization: The speed of the A100 SXM 80GB allows for near-instantaneous translation and summarization of documents, enabling quicker information comprehension.

Workarounds

While the A100 SXM 80GB is a powerful GPU, it's not always accessible or affordable for everyone. Here are some workarounds for those looking to run LLMs locally without breaking the bank:

- Lower-end GPUs: Experiment with less expensive GPUs like the NVIDIA RTX 3060 or RTX 3070. These GPUs won't match the A100's speeds and have far less memory, but they can still provide decent performance for smaller, quantized models.

- Cloud services: Consider using cloud services like Google Colab or AWS EC2 instances for running LLMs, especially when you need access to more powerful hardware than what's readily available locally.

- Efficient quantization: Experiment with different quantization techniques to reduce the model's size and improve the speed.
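When trying these workarounds, it helps to measure throughput the same way on each setup. Below is a minimal timing sketch; `fake_generate` is a hypothetical stand-in that yields tokens with a fixed delay, to be replaced with whatever streaming call your inference library actually provides.

```python
import time
from typing import Callable, Iterable


def measure_tokens_per_second(generate: Callable[[], Iterable[str]]) -> float:
    """Time one generation run and return tokens produced per second."""
    start = time.perf_counter()
    count = sum(1 for _ in generate())
    elapsed = time.perf_counter() - start
    return count / elapsed if elapsed > 0 else float("inf")


def fake_generate(num_tokens: int = 50, delay: float = 0.001):
    """Hypothetical stand-in for a real model: yields tokens with a delay."""
    for i in range(num_tokens):
        time.sleep(delay)
        yield f"tok{i}"


if __name__ == "__main__":
    tps = measure_tokens_per_second(fake_generate)
    print(f"{tps:.1f} tokens/s")
```

For a fair comparison, keep the prompt and the number of generated tokens the same across runs, and repeat each measurement a few times.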

FAQ

What is quantization?

Quantization is a technique used to reduce the size of a model by representing its weights and activations using fewer bits. This results in smaller models that require less memory and can be run on less powerful hardware.
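To illustrate the idea, here is a minimal sketch of symmetric 4-bit quantization of one block of weights with a single shared scale. It is a simplified teaching example, not the scheme used by Q4_K_M or any particular library, and the sample weights are made up.

```python
def quantize_block(weights: list[float], bits: int = 4) -> tuple[list[int], float]:
    """Map floats to signed integers of the given width, plus a shared scale."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit signed values
    absmax = max(abs(w) for w in weights)
    scale = absmax / qmax if absmax > 0 else 1.0
    quantized = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize_block(quantized: list[int], scale: float) -> list[float]:
    """Recover approximate floats from the integers and the shared scale."""
    return [q * scale for q in quantized]


if __name__ == "__main__":
    # Made-up example weights.
    block = [0.12, -0.53, 0.98, -0.07, 0.31, -0.88, 0.02, 0.45]
    q, scale = quantize_block(block)
    restored = dequantize_block(q, scale)
    max_error = max(abs(a - b) for a, b in zip(block, restored))
    print("quantized:", q)
    print(f"scale: {scale:.4f}, max abs error: {max_error:.4f}")
```

Each weight is stored as a small integer instead of a 16-bit float, and the rounding error per weight is at most half the scale. That error is the accuracy cost paid for the smaller size.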

What if my model is too big for my GPU?

If your model is too big to fit in GPU memory, you can either reduce its size (e.g., through quantization) or use a GPU with more memory.
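A rough way to check whether a model fits is to estimate the memory taken by its weights. The sketch below rests on several assumptions: round parameter counts taken from the model names, about 4.8 bits per weight as an approximate figure for a 4-bit scheme that also stores scales, and a 10% headroom for everything other than weights. Treat the output as a ballpark, not a measurement.

```python
def weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Approximate memory for the weights alone, in GB (1 GB = 1e9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


def fits(num_params: float, bits_per_weight: float, gpu_memory_gb: float,
         headroom: float = 0.9) -> bool:
    """True if the weights fit in a fraction (headroom) of the GPU memory."""
    return weight_memory_gb(num_params, bits_per_weight) <= gpu_memory_gb * headroom


if __name__ == "__main__":
    GPU_GB = 80  # A100 SXM 80GB
    # Assumed values: round parameter counts and ~4.8 bits per weight
    # for a 4-bit scheme (an approximation, not a measured figure).
    for name, params, bits in [
        ("8B F16", 8e9, 16), ("8B 4-bit", 8e9, 4.8),
        ("70B F16", 70e9, 16), ("70B 4-bit", 70e9, 4.8),
    ]:
        gb = weight_memory_gb(params, bits)
        print(f"{name}: ~{gb:.0f} GB, fits: {fits(params, bits, GPU_GB)}")
```

Under these assumptions, a 70B model at 16 bits needs about 140 GB for weights alone and does not fit on a single 80 GB card, while a 4-bit version at roughly 42 GB does.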

What are the benefits of running LLMs locally?

Running LLMs locally offers benefits such as:

- Lower latency: Requests don't make a network round trip to a cloud service, so responses can start sooner.

- Improved privacy: You can keep your data and model on your own device, enhancing privacy.

- Customization and fine-tuning: Running the model locally gives you full control over it, so you can customize it and fine-tune it for specific tasks.
