How Fast Can NVIDIA A100 PCIe 80GB Run Llama3 8B?

Introduction: Diving Deep into Local LLM Performance

The world of large language models (LLMs) is buzzing, and for good reason. These powerful AI systems can generate text, translate languages, write different kinds of creative content, and answer your questions in an informative way. But running these models can be computationally demanding, requiring specialized hardware and optimization strategies.

This article takes a deep dive into the performance of Llama3 8B, a popular and efficient LLM, running on the NVIDIA A100 PCIe 80GB GPU. We'll analyze benchmarks, compare different quantization levels, and explore practical use cases, all with a focus on making LLM development accessible and exciting for developers.

Performance Analysis: Token Generation Speed Benchmarks

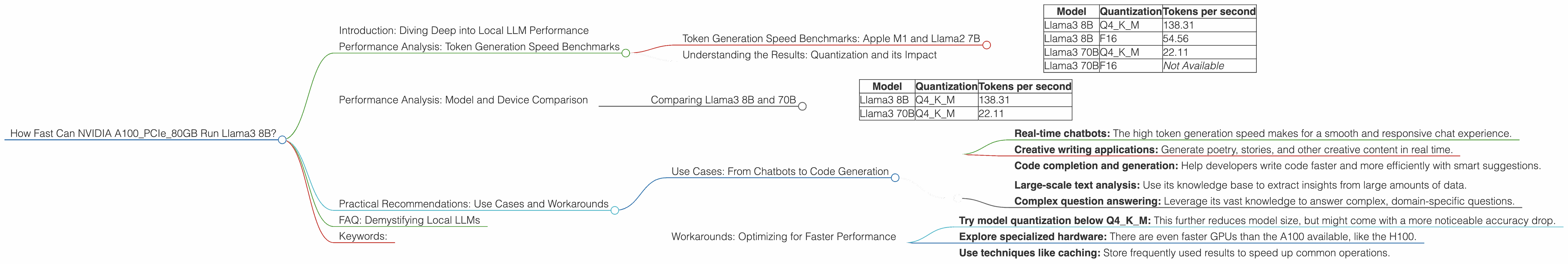

Token Generation Speed Benchmarks: NVIDIA A100 PCIe 80GB and Llama3

Let's start with a fundamental measurement: token generation speed. This tells us how quickly the model can generate new pieces of text, which is crucial for real-time applications like chatbots or interactive writing tools.

| Model | Quantization | Tokens per second |
|---|---|---|
| Llama3 8B | Q4_K_M | 138.31 |
| Llama3 8B | F16 | 54.56 |
| Llama3 70B | Q4_K_M | 22.11 |
| Llama3 70B | F16 | Not Available |

Important Note: The numbers in this article are drawn from two sources: the llama.cpp project and the GPU Benchmarks on LLM Inference repository. Remember, these are reference benchmarks; actual performance will vary with your software version, settings, and workload.
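Why is the Llama3 70B F16 entry in the table marked "Not Available"? A back-of-the-envelope estimate of weight memory explains it. The sketch below is an approximation under stated assumptions: nominal parameter counts of 8 and 70 billion, and roughly 4.85 bits per weight for Q4_K_M (an approximate average, since Q4_K_M keeps some tensors at higher precision). It counts weights only and ignores the KV cache and other runtime buffers, so real memory use is higher.

```python
# Rough weight-memory estimate: billions of parameters x bits per weight / 8 bits per byte = GB.
# Assumptions (not from the benchmark sources): nominal parameter counts,
# ~4.85 bits/weight average for Q4_K_M, and weights only (no KV cache or buffers).

GPU_MEMORY_GB = 80  # NVIDIA A100 PCIe 80GB

BITS_PER_WEIGHT = {
    "F16": 16.0,
    "Q4_K_M": 4.85,  # approximate average; the real value varies by model
}


def weight_memory_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in gigabytes (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_weight / 8


if __name__ == "__main__":
    for model, params in [("Llama3 8B", 8), ("Llama3 70B", 70)]:
        for quant, bits in BITS_PER_WEIGHT.items():
            size = weight_memory_gb(params, bits)
            verdict = "fits" if size < GPU_MEMORY_GB else "does NOT fit"
            print(f"{model} {quant}: ~{size:.1f} GB of weights, {verdict} in {GPU_MEMORY_GB} GB")
```

Under these assumptions, Llama3 70B at F16 needs about 140 GB for its weights alone, well beyond a single 80 GB card, while the Q4_K_M version, at roughly 42 GB, fits.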

Understanding the Results: Quantization and its Impact

Looking at the table above, you'll notice that Llama3 8B generates tokens about 2.5 times faster when quantized with Q4_K_M (138.31 tokens per second) than at F16 (54.56 tokens per second). To understand why, we need to talk about quantization.

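Think of it like this: LLMs are big, like really, really big. Their internal parameters (the numbers that make them do their magic) take up a lot of memory; Llama3 8B alone has about 8 billion of them. Quantization is a technique that shrinks these parameters by storing each one with fewer bits. It's like using a smaller file format for an image: it takes up less space but still retains most of the quality. F16 stores every parameter as a 16-bit floating-point number, while Q4_K_M packs most parameters into about 4 bits, making it by far the more aggressive of the two. Smaller parameters translate directly into speed because generating each token requires reading essentially all of the model's weights from GPU memory; the less data the GPU has to move per token, the more tokens it can produce per second.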

Q4_K_M achieves higher performance by trading some precision for speed. The model still works well for many tasks, but its output may be less accurate than the F16 version's. F16, on the other hand, keeps more information, making it more suitable for tasks where accuracy is critical.
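To make the precision trade-off concrete, here is a minimal sketch of 4-bit block quantization. It is a simplified scheme in the spirit of llama.cpp's basic Q4_0 format (one shared scale per block of 32 weights), not the actual Q4_K_M algorithm, which uses more elaborate per-block scales and keeps some tensors at higher precision. The block size, the symmetric rounding, and the randomly generated "weights" are illustrative assumptions.

```python
import random

BLOCK_SIZE = 32  # illustrative; one scale is shared by each block of weights


def quantize_block(values, bits=4):
    """Symmetric quantization: map each float to a signed integer in [-qmax, qmax]."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit
    amax = max(abs(v) for v in values)
    scale = amax / qmax if amax > 0 else 1.0
    # Each value becomes a small integer; only these integers and the scale are stored.
    q = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return scale, q


def dequantize_block(scale, q):
    """Recover approximate floats from the stored integers."""
    return [scale * x for x in q]


if __name__ == "__main__":
    random.seed(0)
    weights = [random.gauss(0.0, 0.02) for _ in range(BLOCK_SIZE)]
    scale, q = quantize_block(weights)
    restored = dequantize_block(scale, q)
    max_err = max(abs(w - r) for w, r in zip(weights, restored))
    # Storage: 32 x 4-bit integers + one 16-bit scale = 144 bits, i.e. 4.5 bits per weight.
    bits_per_weight = (BLOCK_SIZE * 4 + 16) / BLOCK_SIZE
    print(f"scale={scale:.6f} max error={max_err:.6f} bits/weight={bits_per_weight}")
```

Each weight now costs 4.5 bits instead of 16, and each restored value is off by at most half a scale step. That rounding error is the precision being traded for speed.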

Tip: Choose the right quantization level based on your application's needs. If you need blazing-fast speeds and can tolerate some loss in accuracy, Q4_K_M is a great choice. If you need maximum accuracy, F16 is the way to go.

Performance Analysis: Model Comparison

Comparing Llama3 8B and 70B

Let's compare the token generation speeds for Llama3 8B and Llama3 70B on the NVIDIA A100 PCIe 80GB.

| Model | Quantization | Tokens per second |
|---|---|---|
| Llama3 8B | Q4_K_M | 138.31 |
| Llama3 70B | Q4_K_M | 22.11 |

Llama3 8B is roughly six times faster than Llama3 70B (138.31 vs. 22.11 tokens per second), which makes sense: the 70B model has nearly nine times as many parameters, and all of them have to be read from GPU memory for every generated token. Think of it like driving a compact car versus a semi-truck; the smaller car is going to be quicker off the line.
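A simple model accounts for these speedups: if generating a token means reading every weight once, tokens per second can't exceed memory bandwidth divided by weight size. The sketch below assumes about 1,935 GB/s of memory bandwidth (NVIDIA's published figure for the A100 80GB PCIe; verify it for your card) and approximate weight sizes of 16 GB, 4.85 GB, and 42.4 GB, which are estimates rather than measured file sizes. The measured speeds are the benchmark numbers quoted above.

```python
# Roofline-style ceiling for token generation: each new token reads all weights once,
# so tokens/s <= memory bandwidth / weight size. Ignores KV-cache reads and compute limits.

BANDWIDTH_GB_S = 1935.0  # assumption: published A100 80GB PCIe spec; check your hardware

# (configuration, approx. weight size in GB [estimate], measured tokens/s from the benchmarks)
CONFIGS = [
    ("Llama3 8B F16", 16.0, 54.56),
    ("Llama3 8B Q4_K_M", 4.85, 138.31),
    ("Llama3 70B Q4_K_M", 42.4, 22.11),
]


def ceiling_tokens_per_s(weight_gb: float, bandwidth_gb_s: float = BANDWIDTH_GB_S) -> float:
    """Upper bound on tokens/s if generation were purely memory-bandwidth bound."""
    return bandwidth_gb_s / weight_gb


if __name__ == "__main__":
    for name, size_gb, measured in CONFIGS:
        ceiling = ceiling_tokens_per_s(size_gb)
        print(f"{name}: ceiling ~{ceiling:.0f} tok/s, measured {measured} tok/s "
              f"({measured / ceiling:.0%} of ceiling)")
    # Ratio of measured speeds for 8B vs 70B at Q4_K_M.
    print(f"8B vs 70B (Q4_K_M): {138.31 / 22.11:.1f}x")
```

The measured speeds land at roughly a third to a half of these ceilings, which is expected for a simplified model, but the ordering holds: the less data each token has to pull from memory, the faster the model generates.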

Practical Recommendations: Use Cases and Workarounds

Use Cases: From Chatbots to Code Generation

With these numbers in mind, let's think about what kind of projects you can tackle with Llama3 on the NVIDIA A100 PCIe 80GB.

Llama3 8B Q4_K_M is ideal for:

- Real-time chatbots: The high token generation speed makes for a smooth and responsive chat experience.

- Creative writing applications: Generate poetry, stories, and other creative content in real time.

- Code completion and generation: Help developers write code faster and more efficiently with smart suggestions.

Llama3 70B Q4_K_M is best for:

- Large-scale text analysis: Use its knowledge base to extract insights from large amounts of data.

- Complex question answering: Leverage its vast knowledge to answer complex, domain-specific questions.
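To turn tokens per second into something users actually feel, divide the length of a response by the generation speed. The 300-token reply length below is an arbitrary example, and the estimate ignores prompt processing, which adds to the wait before the first token appears.

```python
# Approximate time to generate a reply: tokens / (tokens per second).
# Speeds are the measured A100 PCIe 80GB benchmark numbers;
# REPLY_TOKENS is an arbitrary example length. Prompt processing time is ignored.

REPLY_TOKENS = 300

SPEEDS_TOK_S = {
    "Llama3 8B Q4_K_M": 138.31,
    "Llama3 8B F16": 54.56,
    "Llama3 70B Q4_K_M": 22.11,
}


def generation_seconds(n_tokens: int, tokens_per_s: float) -> float:
    """Seconds needed to generate n_tokens at a steady tokens_per_s."""
    return n_tokens / tokens_per_s


if __name__ == "__main__":
    for config, speed in SPEEDS_TOK_S.items():
        print(f"{config}: ~{generation_seconds(REPLY_TOKENS, speed):.1f} s for {REPLY_TOKENS} tokens")
```

That works out to about 2 seconds for Llama3 8B Q4_K_M versus about 14 seconds for Llama3 70B Q4_K_M, which is why the smaller model suits interactive chat while the larger one fits jobs where users can afford to wait.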

Workarounds: Optimizing for Faster Performance

If you need even more speed, here are some ideas:

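- Try quantization levels below Q4_K_M: llama.cpp offers smaller formats such as Q3_K_M and Q2_K that further reduce model size, but they usually come with a more noticeable accuracy drop.

- Use faster hardware: newer GPUs such as the NVIDIA H100 offer more memory bandwidth and compute than the A100.

- Use caching: store results for frequently repeated requests so the model doesn't regenerate them, and let your inference framework reuse work for shared prompt prefixes (llama.cpp supports prompt caching).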

FAQ: Demystifying Local LLMs

Q: What is an LLM?

A: An LLM is a large language model, a type of artificial intelligence trained on a massive dataset of text and code. It learns patterns and relationships in language, allowing it to perform tasks like generating text, translating languages, and answering questions.

Q: What is quantization?

A: Quantization is like compressing a large file, making it smaller and faster to work with. It reduces the precision of the numbers inside the LLM but can significantly speed up performance.

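Q: What is "Q4_K_M"?

A: Q4_K_M is one of llama.cpp's "k-quant" formats. Weights are stored in blocks of mostly 4-bit integers with shared scale factors, and the "M" (medium) variant keeps some tensors at higher precision, so the average comes out to a little under 5 bits per weight instead of the 16 bits used by F16. Think of it like using a smaller file format for an image; it takes up less space but may have less detail.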

Q: What is "F16"?

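A: The 16-bit half-precision floating-point format (IEEE 754 binary16), which uses half the space of a standard 32-bit float. In llama.cpp, F16 serves as the unquantized baseline that quantized formats like Q4_K_M are compared against.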
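Python's standard `struct` module can encode IEEE 754 half-precision values (format code `e`), which makes the F16 trade-off easy to see: half the bytes of a 32-bit float, at the cost of fewer significant digits. The value 0.1 is just an illustrative example.

```python
import struct

value = 0.1

f32_bytes = struct.pack("<f", value)  # 32-bit float: 4 bytes
f16_bytes = struct.pack("<e", value)  # 16-bit half precision (F16): 2 bytes

f32_roundtrip = struct.unpack("<f", f32_bytes)[0]
f16_roundtrip = struct.unpack("<e", f16_bytes)[0]

print(len(f32_bytes), f32_roundtrip)  # 4 0.10000000149011612
print(len(f16_bytes), f16_roundtrip)  # 2 0.0999755859375
```

F16 keeps roughly three significant decimal digits, which is the "less information" that 16 bits can hold compared with 32.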

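Q: How do I use Llama3 with the NVIDIA A100 PCIe 80GB?

A: You'll need an inference framework such as llama.cpp, which runs LLMs locally on GPUs. Build llama.cpp with CUDA support, get the model in GGUF format (either download a pre-quantized file or convert and quantize the original weights with llama.cpp's tools), and offload the model's layers to the GPU when you run it. The project's documentation covers each step for your hardware.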
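As a rough guide, recent llama.cpp versions are built with CUDA support via `cmake -B build -DGGML_CUDA=ON` followed by `cmake --build build --config Release`, and ship a `llama-bench` tool for measuring speed. Build options and binary names have changed across releases, so check the README for your version. The Python sketch below only assembles a benchmark command and runs it if the binary is on your PATH. The model filename is hypothetical, and `-ngl 99` asks llama.cpp to offload up to 99 layers to the GPU, which covers every layer of both Llama3 8B and 70B.

```python
from __future__ import annotations

import shlex
import shutil
import subprocess


def llama_bench_command(model_path: str, n_gpu_layers: int = 99,
                        n_prompt: int = 512, n_gen: int = 128,
                        binary: str = "llama-bench") -> list[str]:
    """Build a llama-bench call: -m model, -ngl GPU layers, -p prompt tokens, -n generated tokens."""
    return [binary, "-m", model_path, "-ngl", str(n_gpu_layers),
            "-p", str(n_prompt), "-n", str(n_gen)]


def run_benchmark(cmd: list[str]) -> str | None:
    """Run the command if its binary is on PATH and return stdout; otherwise return None."""
    if shutil.which(cmd[0]) is None:
        return None
    return subprocess.run(cmd, check=True, capture_output=True, text=True).stdout


if __name__ == "__main__":
    # Hypothetical file name: use the GGUF file you actually downloaded or converted.
    cmd = llama_bench_command("models/Meta-Llama-3-8B-Instruct-Q4_K_M.gguf")
    print(shlex.join(cmd))
```

Keeping command construction separate from execution makes it easy to script a sweep over several GGUF files (for example, F16 and Q4_K_M builds of the same model) and compare their tokens per second on your own card.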

Q: What are tokens?

A: Tokens are the individual units of text that LLMs process. A token can be a whole word, part of a word, a punctuation mark, or a special character; common words are often a single token, while rarer words are split into several.

Q: Is it possible to run LLMs on a CPU?

A: Yes, llama.cpp can run models on the CPU, but token generation is much slower than on a GPU, mainly because system RAM delivers far less memory bandwidth than the high-bandwidth memory on a card like the A100.

Keywords:

Llama3, NVIDIA A100 PCIe 80GB, LLM, Large Language Model, Token Generation Speed, Quantization, Q4_K_M, F16, GPU, Performance, Benchmark, Chatbot, Code Generation, Text Analysis, Question Answering