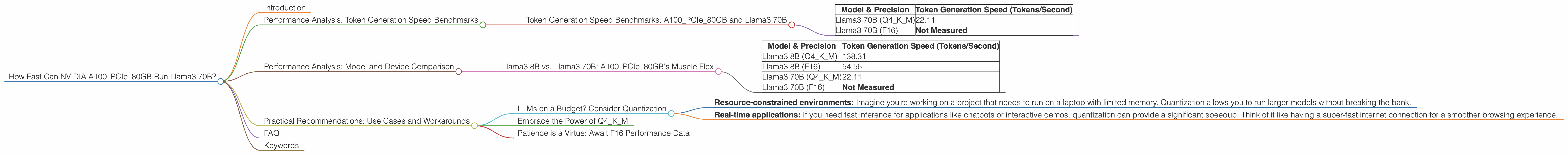

How Fast Can NVIDIA A100 PCIe 80GB Run Llama3 70B?

Introduction

Large Language Models (LLMs) are all the rage these days, promising to revolutionize the way we interact with computers. But these models are computationally demanding and need powerful hardware to run efficiently. This article looks at how the NVIDIA A100 PCIe 80GB, a workhorse data center GPU, performs when running the Llama3 70B model. The numbers matter for developers and researchers who want to deploy LLMs locally, whether for research, building applications, or simply experimenting with these models.

Performance Analysis: Token Generation Speed Benchmarks

Token Generation Speed Benchmarks: A100 PCIe 80GB and Llama3 70B

Let's get down to business and see how fast the A100 PCIe 80GB can churn out tokens for the hefty Llama3 70B model. Remember, tokens are the units of text an LLM reads and writes. Think of them as the building blocks of language.

| Model & Precision | Token Generation Speed (Tokens/Second) |
|---|---|
| Llama3 70B (Q4_K_M) | 22.11 |
| Llama3 70B (F16) | Not Measured |

Key Takeaways:

- Llama3 70B runs well with Q4_K_M quantization, reaching a token generation speed of 22.11 tokens per second.

What's the deal with quantization? Think of it like compressing an image. Quantization stores each weight in fewer bits, which shrinks the model and, because the GPU has fewer bytes to read for every generated token, usually speeds it up as well. Q4_K_M is a 4-bit quantization format that squeezes the model down efficiently.

- F16 performance was not measured for Llama3 70B on this GPU, so no F16 figure is available. One likely reason: at 2 bytes per parameter, 70 billion parameters need about 140 GB for the weights alone, well over the card's 80 GB.

Analogy: Imagine a typist who types out tokens instead of letters. The Llama3 70B model is the brain deciding what to type next, and the A100 PCIe 80GB is the pair of hands doing the typing. The faster the hands (GPU), the more tokens reach the page per second.
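To make the 22.11 tokens per second figure more tangible, here is a minimal sketch that converts a generation speed into an approximate wait time for replies of different lengths. It assumes a steady generation rate and ignores the time spent processing the prompt, so treat the results as rough estimates; the function name and the reply lengths are illustrative choices, not part of the benchmark.

```python
def generation_time_seconds(num_tokens: int, tokens_per_second: float) -> float:
    """Estimate how long it takes to generate num_tokens at a steady rate."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return num_tokens / tokens_per_second


# Measured speed for Llama3 70B (Q4_K_M) from the table above.
LLAMA3_70B_Q4_K_M_TPS = 22.11

# Illustrative reply lengths (assumed, not taken from the benchmark).
for n in (64, 256, 1024):
    seconds = generation_time_seconds(n, LLAMA3_70B_Q4_K_M_TPS)
    print(f"{n:>5} tokens: about {seconds:.1f} s")
```

At this rate a 256-token reply takes roughly 11.6 seconds, which is workable for a chat interface but noticeable for longer outputs.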

Performance Analysis: Model and Device Comparison

Llama3 8B vs. Llama3 70B: A100 PCIe 80GB's Muscle Flex

Let's compare the A100 PCIe 80GB's performance across different Llama3 models. Think of this like seeing how much weight a weightlifter can lift with different barbells.

| Model & Precision | Token Generation Speed (Tokens/Second) |
|---|---|
| Llama3 8B (Q4_K_M) | 138.31 |
| Llama3 8B (F16) | 54.56 |
| Llama3 70B (Q4_K_M) | 22.11 |
| Llama3 70B (F16) | Not Measured |

Key Observations:

- Smaller model, bigger speed: With Q4_K_M quantization, Llama3 8B generates 138.31 tokens per second versus 22.11 for Llama3 70B, roughly 6.3 times faster. This is expected, as a smaller model has fewer weights to read and compute on for every generated token.

- F16's potential: Llama3 8B at F16 precision runs at 54.56 tokens per second, about 2.5 times slower than the Q4_K_M version but still a respectable speed. This suggests that F16 is a viable option for smaller Llama3 models on the A100 PCIe 80GB, but there is no data on the larger model to confirm the same holds there.

It's like comparing a marathon runner to a sprinter. The Llama3 8B is the sprinter, quick and nimble. The Llama3 70B is the marathoner, powerful and enduring but slower over short distances.
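The comparison can be reduced to two ratios: how much faster the small model is than the large one, and how much quantization speeds up the same model. A short sketch using only the measured values from the table (the dictionary layout and helper name are just one way to organize them):

```python
# Token generation speeds in tokens/second, copied from the table above.
speeds = {
    ("Llama3 8B", "Q4_K_M"): 138.31,
    ("Llama3 8B", "F16"): 54.56,
    ("Llama3 70B", "Q4_K_M"): 22.11,
}


def speedup(fast: tuple, slow: tuple) -> float:
    """Return how many times faster the `fast` configuration is than `slow`."""
    return speeds[fast] / speeds[slow]


# Effect of model size at the same precision.
size_ratio = speedup(("Llama3 8B", "Q4_K_M"), ("Llama3 70B", "Q4_K_M"))
# Effect of quantization on the same model.
quant_ratio = speedup(("Llama3 8B", "Q4_K_M"), ("Llama3 8B", "F16"))

print(f"8B vs 70B at Q4_K_M: {size_ratio:.2f}x")
print(f"Q4_K_M vs F16 on 8B: {quant_ratio:.2f}x")
```

Note that the 8B model has roughly 9 times fewer parameters but is only about 6.3 times faster, so speed does not scale perfectly with model size on this card.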

Practical Recommendations: Use Cases and Workarounds

LLMs on a Budget? Consider Quantization

The data clearly shows that quantization is a key factor in achieving decent performance with large models like Llama3 70B. If you're working with limited resources, quantization is your best friend! It's like packing light for a trip - squeezing more into your backpack by compressing your clothes.

Here's when quantization shines:

- Resource-constrained environments: Imagine you're working on a project that needs to run on a laptop with limited memory. Quantization allows you to run larger models without breaking the bank.

- Real-time applications: If you need fast inference for applications like chatbots or interactive demos, quantization can provide a significant speedup. Think of it like having a super-fast internet connection for a smoother browsing experience.
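A back-of-the-envelope memory estimate shows why quantization matters so much for the 70B model. The sketch below multiplies parameter count by bits per weight. It assumes nominal parameter counts of 8 and 70 billion and an average of about 4.85 bits per weight for Q4_K_M, which is an approximation rather than a figure from this benchmark. It counts weights only, so real usage will be higher once the KV cache and activations are included.

```python
GB = 1e9  # decimal gigabytes, matching how GPU memory is usually advertised


def weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Approximate memory needed for the model weights alone."""
    return num_params * bits_per_weight / 8 / GB


# Nominal parameter counts (assumed round numbers).
NOMINAL_PARAMS = {"Llama3 8B": 8e9, "Llama3 70B": 70e9}
# F16 is exactly 16 bits; the Q4_K_M average is an assumed approximation.
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.85}
GPU_MEMORY_GB = 80  # A100 PCIe 80GB

for model, params in NOMINAL_PARAMS.items():
    for precision, bits in BITS_PER_WEIGHT.items():
        gb = weight_memory_gb(params, bits)
        verdict = "fits" if gb < GPU_MEMORY_GB else "does not fit"
        print(f"{model} ({precision}): ~{gb:.0f} GB of weights, {verdict} in {GPU_MEMORY_GB} GB")
```

Under these assumptions Llama3 70B needs about 140 GB at F16 but only around 42 GB at Q4_K_M, which is the difference between not fitting on the card at all and fitting with room to spare.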

Embrace the Power of Q4_K_M

Of the two precisions we measured, Q4_K_M is the clear winner for Llama3 on the A100 PCIe 80GB. It delivers the higher token generation speed on the 8B model and is the only configuration with a result for the 70B model, making it the go-to choice for performance-hungry applications.

Patience is a Virtue: Await F16 Performance Data

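While the F16 performance data for Llama3 70B isn't available yet, it's worth keeping an eye out for updates. F16 avoids the accuracy loss of quantization at the cost of speed and memory, so it's a promising avenue for future testing. Note, though, that 70B parameters at 2 bytes each come to about 140 GB of weights, so an F16 result on this 80 GB card may require offloading part of the model or using more than one GPU.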

Think of it like waiting for a new game to be released. The initial release might not have all the bells and whistles, but future updates will likely bring performance enhancements.

FAQ

Q: What is quantization and how does it affect LLM performance?

A: Think of quantization as a way to "compress" a model. It involves representing the numbers in the model with fewer bits, which reduces the model's size and memory footprint. This makes it faster to load and run on devices with limited resources.
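To show what "fewer bits" means in practice, here is a toy sketch of symmetric 4-bit quantization applied to one small block of weights. It is a simplified illustration of the general idea only; the actual Q4_K_M format is more elaborate, and the function names and sample weights are made up for this example.

```python
def quantize_block(values, bits=4):
    """Map floats to small signed integers that share one scale factor."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit signed codes
    max_abs = max(abs(v) for v in values)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Round to the nearest code and clamp to the representable range.
    codes = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return scale, codes


def dequantize_block(scale, codes):
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]


# Made-up sample weights for illustration.
weights = [0.12, -0.53, 0.98, -0.07, 0.33, -0.81, 0.05, 0.44]

scale, codes = quantize_block(weights)
restored = dequantize_block(scale, codes)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("codes:    ", codes)
print("restored: ", [round(r, 2) for r in restored])
print("max error:", round(max_error, 3))
```

Each weight is now stored as a 4-bit code plus one shared scale per block instead of a 16-bit float, and the rounding error per weight is bounded by half the scale. That bounded error is the source of the small accuracy loss quantized models can show.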

Q: What are the trade-offs involved in using different quantization methods?

A: Different quantization methods offer varying levels of compression, and more aggressive compression generally costs more accuracy. For example, Q4_K_M provides a significant reduction in model size but may lead to a slight decrease in accuracy compared with F16.

Q: What are the limitations of using the A100 PCIe 80GB for running LLMs?

A: The A100 PCIe 80GB is a powerful GPU, but even it has limitations. The main one is memory: very large models at high precision may not fit in 80 GB. Llama3 70B at F16, for instance, needs about 140 GB for its weights alone.

Keywords

NVIDIA A100 PCIe 80GB, Llama3 70B, Llama3 8B, Token Generation Speed, Quantization, Q4_K_M, F16, Performance Benchmarks, LLM, Large Language Model, Inference, GPU, Token, Deep Dive