How Fast Can NVIDIA 4090 24GB x2 Run Llama3 8B?

Introduction

The world of Large Language Models (LLMs) is evolving rapidly, with new models like Llama 3 pushing the boundaries of what's possible. But the real question is, how do these powerful models perform on real-world hardware?

This deep dive explores the speed of the NVIDIA 4090 24GB x2 setup (two RTX 4090 cards with 24 GB of memory each) running Llama3 8B, focusing on the impact of quantization on model performance.

Think of it like this: Imagine you have a massive encyclopedia, but it's written in a very complex language. You need a powerful computer to translate it into a language you can understand, but also a way to make the encyclopedia smaller and faster to access. LLMs are like the encyclopedia, and quantization is our tool to make it more usable!

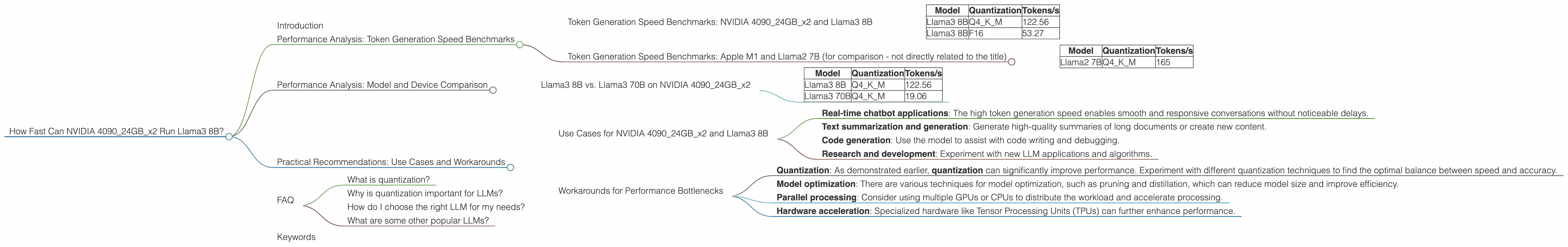

Performance Analysis: Token Generation Speed Benchmarks

Token Generation Speed Benchmarks: NVIDIA 4090 24GB x2 and Llama3 8B

Let's dive into the raw numbers! The following table shows the tokens per second (tokens/s) generated by the NVIDIA 4090 24GB x2 system for Llama3 8B at different quantization levels:

| Model | Quantization | Tokens/s |
|---|---|---|
| Llama3 8B | Q4_K_M | 122.56 |
| Llama3 8B | F16 | 53.27 |

Key takeaways:

Q4_K_M quantization (a 4-bit format from llama.cpp's "k-quant" family, where the M stands for the medium variant) delivers about 2.3 times the tokens/s of F16 (16-bit floating point). Quantized weights take up less memory, so fewer bytes have to be read for every generated token, which is where most of the speedup comes from.

These numbers show what the NVIDIA 4090 24GB x2 system is capable of. At more than 120 tokens/s with Q4_K_M, it generates text far faster than a person can read it, making it a powerful platform for developing and deploying LLM-based applications.
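To make the gap between the two rows concrete, here is a minimal sketch that computes the speedup from the figures in the table. The dictionary and function names are illustrative only and not part of any benchmark tool.

```python
# Tokens/s figures copied from the benchmark table above.
tokens_per_s = {"Q4_K_M": 122.56, "F16": 53.27}


def speedup(fast: float, slow: float) -> float:
    """Return how many times faster `fast` is than `slow`."""
    return fast / slow


ratio = speedup(tokens_per_s["Q4_K_M"], tokens_per_s["F16"])
print(f"Q4_K_M is about {ratio:.2f}x faster than F16")  # about 2.30x
```

In other words, the 4-bit model produces a little more than twice as many tokens in the same amount of time.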

Token Generation Speed Benchmarks: Apple M1 and Llama2 7B (for comparison - not directly related to the title)

Just for comparison, here are some benchmarks for the Apple M1 with the smaller Llama2 7B:

| Model | Quantization | Tokens/s |
|---|---|---|
| Llama2 7B | Q4_K_M | 165 |

Key takeaways:

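- This shows that even a laptop-class chip like the Apple M1 can achieve impressive speeds with the Llama2 7B model, which makes LLMs accessible on less powerful hardware. The figure comes from a different model and setup, so treat it as a rough reference rather than a direct comparison with the 4090 results above.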

Performance Analysis: Model and Device Comparison

Llama3 8B vs. Llama3 70B on NVIDIA 4090 24GB x2

The table below presents the token generation speed for both Llama3 8B and Llama3 70B on the NVIDIA 4090 24GB x2, showing the impact of model size:

| Model | Quantization | Tokens/s |
|---|---|---|
| Llama3 8B | Q4_K_M | 122.56 |
| Llama3 70B | Q4_K_M | 19.06 |

Key takeaways:

As expected, the larger Llama3 70B model is significantly slower than Llama3 8B: 19.06 versus 122.56 tokens/s, roughly 6.4 times slower. Llama3 70B has nearly nine times as many parameters, so far more weights have to be read and multiplied for every generated token.

This highlights the trade-off between model size and performance. While larger models offer greater potential for accuracy and capabilities, they require more computational resources and may limit the speed of your applications.
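The trade-off is easier to judge as waiting time. The sketch below converts the two tokens/s figures into the time needed for a single reply. The 500-token reply length is a hypothetical value chosen for illustration, and the parameter counts are the nominal sizes from the model names.

```python
# Q4_K_M tokens/s from the table above; parameter counts (in billions)
# are the nominal sizes taken from the model names.
results = {
    "Llama3 8B": {"params_b": 8, "tokens_per_s": 122.56},
    "Llama3 70B": {"params_b": 70, "tokens_per_s": 19.06},
}

REPLY_TOKENS = 500  # hypothetical reply length, not a measured value


def seconds_for_reply(tokens_per_s: float, n_tokens: int) -> float:
    """Time to generate n_tokens at a steady tokens_per_s rate."""
    return n_tokens / tokens_per_s


for name, r in results.items():
    secs = seconds_for_reply(r["tokens_per_s"], REPLY_TOKENS)
    print(f"{name}: {secs:.1f} s for {REPLY_TOKENS} tokens")

small, large = results["Llama3 8B"], results["Llama3 70B"]
size_ratio = large["params_b"] / small["params_b"]
speed_ratio = small["tokens_per_s"] / large["tokens_per_s"]
print(f"{size_ratio:.2f}x the parameters, {speed_ratio:.2f}x slower")
```

The estimate assumes a constant generation rate and ignores the time spent processing the prompt, so real replies will take somewhat longer.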

Practical Recommendations: Use Cases and Workarounds

Use Cases for NVIDIA 4090 24GB x2 and Llama3 8B

The NVIDIA 4090 24GB x2 with Llama3 8B is a powerful combination suitable for a variety of use cases, including:

- Real-time chatbot applications: The high token generation speed enables smooth and responsive conversations without noticeable delays.

- Text summarization and generation: Generate high-quality summaries of long documents or create new content.

- Code generation: Use the model to assist with code writing and debugging.

- Research and development: Experiment with new LLM applications and algorithms.

Workarounds for Performance Bottlenecks

Even with powerful hardware like the NVIDIA 4090 24GB x2, you may encounter performance issues. Here are some workarounds:

- Quantization: As demonstrated earlier, quantization can significantly improve performance. Experiment with different quantization techniques to find the optimal balance between speed and accuracy.

- Model optimization: There are various techniques for model optimization, such as pruning and distillation, which can reduce model size and improve efficiency.

- Parallel processing: Consider using multiple GPUs or CPUs to distribute the workload and accelerate processing.

- Hardware acceleration: Specialized hardware like Tensor Processing Units (TPUs) can further enhance performance.
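Whichever workaround you try, it helps to measure tokens/s before and after the change. The following is a minimal timing sketch, not the harness used for the benchmarks above. `fake_generate` is a hypothetical stand-in that only sleeps; in practice you would pass in a function that calls your own inference library and returns the generated tokens.

```python
import time
from typing import Callable, List


def measure_tokens_per_s(
    generate: Callable[[str, int], List[str]], prompt: str, n_tokens: int
) -> float:
    """Time one call to `generate` and return the tokens produced per second."""
    start = time.perf_counter()
    produced = generate(prompt, n_tokens)
    elapsed = time.perf_counter() - start
    return len(produced) / elapsed


def fake_generate(prompt: str, n_tokens: int) -> List[str]:
    """Hypothetical stand-in for a real model: sleeps ~1 ms per token."""
    tokens = []
    for i in range(n_tokens):
        time.sleep(0.001)
        tokens.append(f"tok{i}")
    return tokens


rate = measure_tokens_per_s(fake_generate, "Hello", 50)
print(f"{rate:.1f} tokens/s")
```

Running the same measurement several times and averaging the results gives a steadier number than a single run.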

FAQ

What is quantization?

Quantization is a technique that reduces the number of bits used to represent numbers in a model. Imagine a model like Llama 3 8B is a large book. Each word in the book is represented by a number. Quantization is like taking the words and using a smaller alphabet, making the book easier to store and read.
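As a rough illustration of the idea, the sketch below squeezes a handful of floating-point weights into 4-bit integers and then restores them. It uses a simple round-to-nearest scheme with a single shared scale, which is an assumption made for clarity and much simpler than what real formats such as Q4_K_M do. The weight values are made up.

```python
def quantize_4bit(weights):
    """Map floats to integer codes in [-8, 7] using one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    codes = [max(-8, min(7, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes, scale):
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]


weights = [0.82, -0.31, 0.05, -0.77, 0.40]  # made-up example values
codes, scale = quantize_4bit(weights)
restored = dequantize(codes, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print(codes)  # [7, -3, 0, -7, 3]
print([round(r, 3) for r in restored])
print(f"largest rounding error: {max_error:.3f}")
```

Each weight now needs only 4 bits instead of 16, at the cost of a small rounding error. That error is the accuracy trade-off that comes with quantization.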

Why is quantization important for LLMs?

Smaller models require less memory and computing power. Quantization allows us to run large models on less powerful hardware, making them more accessible. It also reduces the time it takes to process information, making the application faster!
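A back-of-the-envelope estimate shows how much memory is at stake. The sketch below assumes a nominal 8 billion parameters and an idealized 4 bits per weight. Real 4-bit formats also store scaling data, so actual files are somewhat larger, and the estimate leaves out the memory needed for the context (KV cache) and activations.

```python
def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate memory for the weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


N_PARAMS = 8e9  # nominal size of an "8B" model (assumption)

f16_gb = weight_memory_gb(N_PARAMS, 16)
q4_gb = weight_memory_gb(N_PARAMS, 4)  # idealized 4 bits per weight

print(f"F16:   {f16_gb:.1f} GB")  # 16.0 GB
print(f"4-bit: {q4_gb:.1f} GB")   # 4.0 GB
```

Under these assumptions, 4-bit quantization cuts the weight memory to a quarter of the F16 size.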

How do I choose the right LLM for my needs?

Consider the size, performance, and cost of different LLMs. For smaller applications, a model like Llama2 7B or Llama3 8B on a consumer-grade GPU might be enough. For more demanding tasks, you might need a more powerful system and a larger model such as Llama3 70B.

What are some other popular LLMs?

Besides Llama, popular LLMs include GPT-3 (OpenAI), BERT (Google), and BLOOM (BigScience). Each model has strengths and weaknesses depending on the intended use case.

Keywords

llama3, llama 3, 8b, gpt, bert, bloom, nvidia, 4090, 24gb, x2, gpu, quantization, model, performance, speed, tokens/s, token generation, deep dive, use cases, workarounds, faq, development, applications, ai, machine learning, natural language processing, nlp, deep learning, openai, google, bigscience, text generation, chatbots, code generation, summarization, research, hardware, device