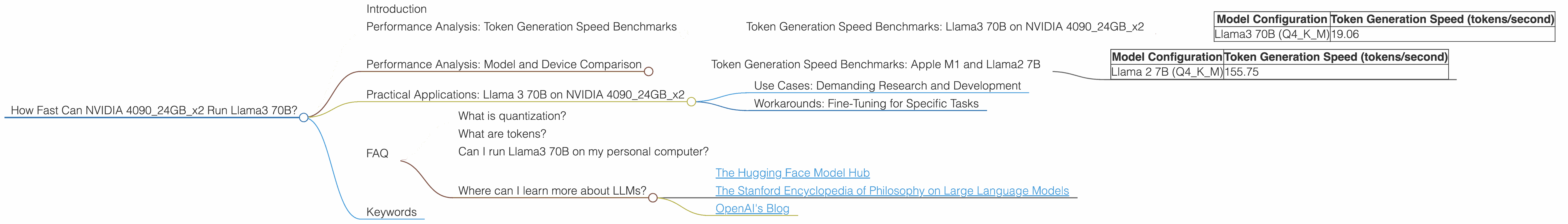

How Fast Can NVIDIA 4090 24GB x2 Run Llama 3 70B?

Introduction

The world of large language models (LLMs) is buzzing with excitement. These powerful AI models can generate text, translate languages, write different kinds of creative content, and answer your questions in an informative way. Running them locally on your own hardware can be a challenge, though, especially for a model like Llama 3 70B. With 70 billion parameters, its weights are far larger than the 24 GB of memory on a single RTX 4090, even after compression.

In this deep dive, we'll explore how Llama 3 70B performs on a dual NVIDIA RTX 4090 24GB setup. We'll examine token generation speed and the factors that influence it. Think of it as a race to see who can generate those captivating words the fastest!

Performance Analysis: Token Generation Speed Benchmarks

Let's get down to the nitty-gritty. How many tokens can our two NVIDIA 4090s churn out per second? It's a question that keeps developers and enthusiasts alike on the edge of their seats. Here's what we found:

Token Generation Speed Benchmarks: Llama 3 70B on NVIDIA 4090 24GB x2

| Model Configuration | Token Generation Speed (tokens/second) |
|---|---|
| Llama 3 70B (Q4_K_M) | 19.06 |

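Before you jump to conclusions, remember that Llama 3 70B is a massive model. Running it on consumer GPUs is like fitting a colossal bookshelf into a tiny closet. During generation, each new token requires reading essentially all of the model's weights from GPU memory, so speed is limited mainly by memory bandwidth rather than raw compute: the bigger the model, the slower each token.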
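To see why roughly 19 tokens/second is plausible for this setup, here is a back-of-the-envelope sketch. It assumes about 4.8 bits per weight for Q4_K_M (an approximation), the RTX 4090's memory bandwidth of about 1008 GB/s, and that the model is split by layers across the two cards, so they take turns on each token rather than working in parallel. KV-cache reads and kernel overhead are ignored, so the result is a ceiling, not a prediction.

```python
# Rough ceiling on single-stream decode speed when generation is
# memory-bandwidth bound. Every input is an assumption except the
# 19.06 tok/s benchmark result quoted in this article.

def weight_bytes(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in bytes."""
    return n_params * bits_per_weight / 8


def max_tokens_per_second(model_bytes: float, bandwidth_bytes_per_s: float) -> float:
    """Each new token streams every weight from VRAM once.

    With a layer split, the two GPUs take turns, so the effective
    bandwidth is that of one card, not the sum of both (assumption).
    """
    return bandwidth_bytes_per_s / model_bytes


model = weight_bytes(70e9, 4.8)               # assumed ~4.8 bits/weight for Q4_K_M
bound = max_tokens_per_second(model, 1008e9)  # RTX 4090: ~1008 GB/s
measured = 19.06                              # benchmark result from this article

print(f"weights:  {model / 1e9:.1f} GB")
print(f"ceiling:  {bound:.1f} tok/s")
print(f"measured: {measured} tok/s ({measured / bound:.0%} of ceiling)")
```

Under these assumptions the ceiling comes out near 24 tokens/second, and the measured 19.06 sits below it, which is what you'd expect if memory bandwidth is the main bottleneck.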

It's also important to note that this result is for Q4_K_M quantization only, meaning the weights have been compressed to roughly 4 to 5 bits each so the model fits across the two GPUs. Unfortunately, there's no data for the F16 configuration, so we can't compare the two formats on this setup. That gap isn't surprising: at 16 bits per weight, a 70B model needs about 140 GB for its weights alone, nearly three times the 48 GB of combined VRAM.
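The memory arithmetic behind that comparison fits in a few lines. This sketch assumes about 4.8 bits per weight for Q4_K_M (an approximation) and ignores the KV cache, activations, and runtime buffers, so a "fits" result is necessary but not sufficient.

```python
# Estimate weight memory for a 70B model at two precisions and compare it
# with two RTX 4090s. The Q4_K_M bits-per-weight value is an approximation.

VRAM_GB = 2 * 24      # combined VRAM of the two cards
N_PARAMS = 70e9       # 70 billion parameters


def weights_gb(bits_per_weight: float, n_params: float = N_PARAMS) -> float:
    """Weight size in gigabytes (1 GB = 10**9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


for name, bpw in [("F16", 16.0), ("Q4_K_M", 4.8)]:
    size = weights_gb(bpw)
    verdict = "fits" if size < VRAM_GB else "does not fit"
    print(f"{name:<7} {size:6.1f} GB -> {verdict} in {VRAM_GB} GB")
```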

Performance Analysis: Model and Device Comparison

Now, let's take a step back and compare the performance of Llama 3 70B on our dual NVIDIA 4090 setup to other models and devices.

Token Generation Speed Benchmarks: Apple M1 Max and Llama 2 7B

Remember the benchmark number for Llama 3 70B? Let's put it in perspective by comparing it with a much smaller model, Llama 2 7B, running on an Apple M1 Max.

| Model Configuration | Token Generation Speed (tokens/second) |
|---|---|
| Llama 2 7B (Q4_K_M) | 155.75 |

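This comparison shows how strongly model size affects speed. Llama 2 7B has one tenth the parameters of Llama 3 70B, so far fewer bytes must be read for every generated token, and it runs about eight times faster even on an Apple silicon chip. Keep in mind that the model, the model generation, and the hardware all differ here, so these numbers illustrate a trend rather than a controlled head-to-head test.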
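To translate these speeds into something you'd notice at the keyboard, the sketch below converts each measured rate into the time needed to produce a reply. The 500-token reply length is an arbitrary example, and prompt processing time is ignored.

```python
# Wall-clock time to generate a reply at a steady decode speed.
# Speeds are the two measurements quoted in this article; the reply
# length is an arbitrary example and prompt processing is ignored.

def generation_seconds(n_tokens: int, tokens_per_second: float) -> float:
    """Time to emit n_tokens at a constant generation rate."""
    return n_tokens / tokens_per_second


speeds = {
    "Llama 3 70B Q4_K_M, 2x RTX 4090": 19.06,
    "Llama 2 7B Q4_K_M, M1 Max": 155.75,
}

for setup, tps in speeds.items():
    print(f"{setup}: {generation_seconds(500, tps):.1f} s for 500 tokens")
```

At these rates the 70B model needs roughly 26 seconds for a 500-token answer, versus about 3 seconds for the 7B model.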

Practical Applications: Llama 3 70B on NVIDIA 4090 24GB x2

So, what are the practical uses for Llama 3 70B running on a dual NVIDIA 4090 setup? Let's explore some real-world applications:

Use Cases: Demanding Research and Development

The power of Llama 3 70B is undeniable. Researchers and developers can leverage this model for projects that require highly intricate language generation and sophisticated text analysis. Think of it as having a powerful AI assistant ready to tackle complex problems in the fields of science, medicine, and engineering.

Workarounds: Fine-Tuning for Specific Tasks

Running Llama 3 70B on dual NVIDIA 4090s trades some speed for capability, but there are workarounds. One approach is to fine-tune the model for specific tasks: training it further on a smaller dataset relevant to your task. This can improve output quality on that task and let you use shorter prompts, since you no longer need lengthy instructions or examples, which cuts the work done per request. It does not, however, raise the raw tokens-per-second figure.
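Updating every weight of a 70B model is out of reach for most local setups, so fine-tuning on hardware like this usually relies on parameter-efficient methods. The sketch below uses LoRA (low-rank adaptation), one such technique that the article itself doesn't name, to show why: instead of updating a full weight matrix, LoRA trains two thin matrices whose product is added to it. The 8192 × 8192 matrix shape and rank 16 are illustrative assumptions.

```python
# Compare trainable parameters for a full update of one weight matrix
# versus a LoRA adapter on the same matrix. Shape and rank are
# illustrative assumptions, not settings from this article.

def full_params(d_out: int, d_in: int) -> int:
    """Trainable parameters when the whole matrix is updated."""
    return d_out * d_in


def lora_params(d_out: int, d_in: int, rank: int) -> int:
    """LoRA learns W + B @ A with B: (d_out x rank) and A: (rank x d_in)."""
    return rank * (d_out + d_in)


d = 8192
full = full_params(d, d)
lora = lora_params(d, d, rank=16)
print(f"full: {full:,}  LoRA r=16: {lora:,}  ({lora / full:.2%} of full)")
```

For this one matrix, the adapter trains well under 1% of the parameters a full update would, which is what makes fine-tuning feasible on consumer GPUs.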

FAQ

What is quantization?

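Quantization compresses a language model by storing its weights at lower numeric precision, for example 4 bits per weight instead of 16. It's like going from a large, detailed photograph to a smaller, more manageable version: some fine detail is lost, but the picture is still recognizable. The smaller size makes it easier to fit the model on your device, and because token generation is limited mainly by how fast weights can be read from memory, it usually speeds up generation as well.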
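The core idea can be illustrated with a simplified 4-bit scheme: a block of weights shares one scale factor, and each weight is stored as a small integer. This is a minimal sketch using plain absmax scaling; it is not llama.cpp's actual Q4_K_M format, which adds super-blocks, per-block minimums, and quantized scales.

```python
# Simplified symmetric 4-bit block quantization (absmax scaling).
# Illustrative only; real formats such as Q4_K_M are more elaborate.

def quantize_block(weights: list[float]) -> tuple[float, list[int]]:
    """Map floats to signed 4-bit integers in [-7, 7] with one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    codes = [max(-7, min(7, round(w / scale))) for w in weights]
    return scale, codes


def dequantize_block(scale: float, codes: list[int]) -> list[float]:
    """Recover approximate float weights from the integer codes."""
    return [scale * c for c in codes]


block = [0.12, -0.50, 0.33, 0.07, -0.21, 0.49, -0.03, 0.00]
scale, codes = quantize_block(block)
restored = dequantize_block(scale, codes)
err = max(abs(a - b) for a, b in zip(block, restored))

print("codes:", codes)
print(f"scale={scale:.4f}, max error={err:.4f}")
```

Each weight now needs 4 bits plus a small share of the block's scale, instead of 16 bits, and the rounding error per weight is at most half the scale.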

What are tokens?

Tokens are the building blocks of language models. Think of them as individual words or parts of words. When a language model generates text, it's actually creating a sequence of tokens.
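To make "parts of words" concrete, here is a toy tokenizer that splits text by greedy longest match against a tiny, hypothetical vocabulary. Real Llama tokenizers use byte-pair encoding (BPE) with vocabularies learned from data, so this only illustrates the idea of sub-word tokens, not how Llama actually tokenizes.

```python
# Toy greedy longest-match tokenizer over a tiny hypothetical vocabulary.
# Not BPE and not Llama's tokenizer; it only shows sub-word splitting.

VOCAB = {"token", "ization", "iz", "ation", "s", "un", "believ", "able", " "}


def tokenize(text: str, vocab: set[str] = VOCAB) -> list[str]:
    tokens = []
    i = 0
    while i < len(text):
        # Try the longest vocabulary entry that matches at position i.
        for j in range(len(text), i, -1):
            if text[i:j] in vocab:
                tokens.append(text[i:j])
                i = j
                break
        else:
            # Unknown character: emit it as its own token.
            tokens.append(text[i])
            i += 1
    return tokens


print(tokenize("tokenization unbelievable"))
```

Two words become six tokens here, which is why token counts, and therefore tokens-per-second figures, don't map one-to-one onto words.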

Can I run Llama 3 70B on my personal computer?

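On a typical laptop or desktop, probably not at useful speeds. Even compressed to Q4_K_M, the weights take over 40 GB, more than a single 24 GB card can hold. A gaming PC with one such card can still load the model by keeping some layers in system RAM and running them on the CPU, but generation slows down considerably. That's why this benchmark uses two RTX 4090s.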
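A rough way to see how much of the model a single card can hold is to divide the usable VRAM by the size of one layer. This sketch assumes about 42 GB of Q4_K_M weights spread evenly over Llama 3 70B's 80 transformer layers, plus a 4 GB reserve for the KV cache and runtime buffers; the even split and the reserve are simplifying guesses. Runners such as llama.cpp expose this choice as the number of GPU layers (`--n-gpu-layers`).

```python
import math

# Estimate how many transformer layers fit in GPU memory; the rest would
# stay in system RAM and run on the CPU. Even layer sizes and the fixed
# reserve are simplifying assumptions.

def gpu_layers_that_fit(model_gb: float, n_layers: int, vram_gb: float,
                        reserve_gb: float) -> int:
    per_layer_gb = model_gb / n_layers
    usable_gb = max(0.0, vram_gb - reserve_gb)
    return min(n_layers, math.floor(usable_gb / per_layer_gb))


for label, vram in [("one RTX 4090", 24.0), ("two RTX 4090s", 48.0)]:
    n = gpu_layers_that_fit(model_gb=42.0, n_layers=80, vram_gb=vram, reserve_gb=4.0)
    print(f"{label}: {n} of 80 layers on GPU, {80 - n} on CPU")
```

Under these assumptions a single card holds fewer than half the layers, while two cards hold all of them, which matches why the dual-GPU setup avoids CPU offloading.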

Where can I learn more about LLMs?

There are many resources available to explore the world of LLMs. Here are some good starting points:

- The Hugging Face Model Hub

- The Stanford Encyclopedia of Philosophy on Large Language Models

- OpenAI's Blog
