How Fast Can NVIDIA 4090 24GB Run Llama3 8B?

Introduction

The world of Large Language Models (LLMs) is buzzing with excitement. These AI marvels can generate human-like text, translate languages, write different kinds of creative content, and answer your questions in an informative way. But running these models locally can be a challenge, especially if you want to use them for real-time applications. That's where powerful GPUs like the NVIDIA 4090_24GB come in. In this deep dive, we'll put this GPU to the test, exploring how well it performs with the Llama3 8B model.

Think of LLMs as hungry brains that need to be fed a constant stream of data. The tokens, like individual puzzle pieces, are the units these brains process to understand and generate language. A faster GPU means more tokens processed per second, leading to quicker responses and a smoother user experience. Let's dive into the numbers and see just how fast the NVIDIA 4090_24GB can process the Llama3 8B model!

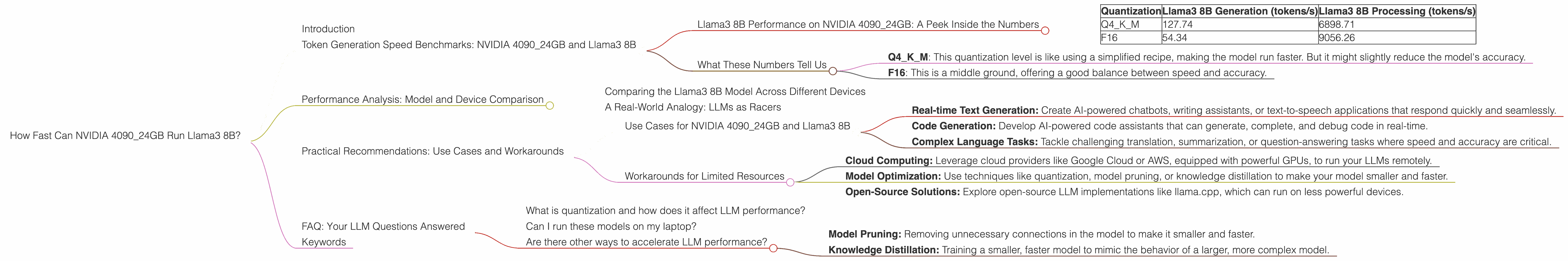

Token Generation Speed Benchmarks: NVIDIA 4090_24GB and Llama3 8B

Llama3 8B Performance on NVIDIA 4090_24GB: A Peek Inside the Numbers

Let's break down the raw speed of the NVIDIA 4090_24GB with the Llama3 8B model. The table below shows the tokens per second (tokens/s) measured at two precision levels:

| Quantization | Llama3 8B Generation (tokens/s) | Llama3 8B Processing (tokens/s) |
|---|---|---|
| Q4_K_M | 127.74 | 6898.71 |
| F16 | 54.34 | 9056.26 |

Note: For the NVIDIA 4090_24GB, the Llama3 70B model benchmarks were not available.
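
To make the gap between the two rows concrete, here is a small sketch that computes the relative speeds from the table's figures. The dictionary layout and the `speedup` helper are illustrative names, not part of any benchmark tool.

```python
# Benchmark figures copied from the table above (tokens per second).
BENCHMARKS = {
    "Q4_K_M": {"generation": 127.74, "processing": 6898.71},
    "F16": {"generation": 54.34, "processing": 9056.26},
}


def speedup(metric: str, fast: str, slow: str) -> float:
    """Return how many times faster `fast` is than `slow` for one metric."""
    return BENCHMARKS[fast][metric] / BENCHMARKS[slow][metric]


gen_ratio = speedup("generation", "Q4_K_M", "F16")
proc_ratio = speedup("processing", "F16", "Q4_K_M")

print(f"Q4_K_M generates {gen_ratio:.2f}x faster than F16")
print(f"F16 processes {proc_ratio:.2f}x faster than Q4_K_M")
```

On these numbers the 4-bit model generates about 2.35 times faster than F16, while F16 processes about 1.31 times faster than the 4-bit model.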

What These Numbers Tell Us

Quantization, a powerful tool for squeezing LLMs onto smaller GPUs, plays a crucial role here. Think of it like simplifying a complex recipe by using pre-made ingredients. In this case, we're reducing the precision of the model's data to make it lighter and faster.

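- Q4_K_M: A 4-bit quantization level. It is like using a simplified recipe: the model is much smaller and generates tokens faster (127.74 vs. 54.34 tokens/s here), but accuracy might drop slightly.

- F16: Half-precision (16-bit) weights with no quantization applied. This is the accuracy baseline in this comparison, but the model is larger and generates tokens more slowly.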
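
A rough back-of-the-envelope sketch shows why precision matters on a 24 GB card. It rests on two labeled assumptions: "8B" is taken as exactly 8 billion parameters, and the 4-bit format is taken to average roughly 4.8 bits per weight (an approximation, since such formats also store scaling data). It counts weights only and ignores the context cache and other runtime memory.

```python
def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate memory for the weights alone, in gigabytes (10^9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


N_PARAMS = 8e9  # assumption: "8B" taken as 8 billion parameters

# assumption: the 4-bit figure is an approximate average, not an exact spec
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.8}

for name, bits in BITS_PER_WEIGHT.items():
    size = weight_memory_gb(N_PARAMS, bits)
    print(f"{name}: roughly {size:.1f} GB of weights")
```

Under these assumptions the F16 weights take about 16 GB, which fits in 24 GB but leaves limited headroom, while the 4-bit weights need less than a third of that.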

The generation speed measures how many tokens the model can output per second. This directly impacts how fast the model generates responses, making it essential for real-time applications.

The processing speed measures how quickly the model reads the input prompt before it produces its first output token. It's like the prep time for the recipe: a higher number means less waiting before the response starts, which matters most for long prompts.

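As you can see, the NVIDIA 4090_24GB achieves far higher processing speed than generation speed at both precision levels. Prompt tokens can be processed together in parallel, while output tokens must be generated one at a time, so the two numbers differ by a factor of 50 or more. In practice, reading the prompt adds little delay and generation speed dominates the response time. Note also that F16 is faster at processing but slower at generation than Q4_K_M.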
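
To see how the two speeds combine, here is a minimal latency sketch. It assumes the processing column is the rate at which the prompt is read and the generation column is the rate at which the reply is written, and that the two phases simply add up. The 1000-token prompt and 250-token reply are a hypothetical workload, not a measured one.

```python
def response_time_s(prompt_tokens: int, output_tokens: int,
                    processing_tps: float, generation_tps: float) -> float:
    """Estimate seconds to read a prompt and then generate a reply."""
    prompt_phase = prompt_tokens / processing_tps
    generation_phase = output_tokens / generation_tps
    return prompt_phase + generation_phase


# (generation tokens/s, processing tokens/s) from the benchmark table
SPEEDS = {"Q4_K_M": (127.74, 6898.71), "F16": (54.34, 9056.26)}

# hypothetical workload: 1000-token prompt, 250-token reply
estimates = {
    name: response_time_s(1000, 250, proc, gen)
    for name, (gen, proc) in SPEEDS.items()
}

for name, seconds in estimates.items():
    print(f"{name}: about {seconds:.2f} s")
```

With these figures the estimate comes to roughly 2.1 seconds for the 4-bit model and 4.7 seconds for F16, almost all of it spent generating rather than reading the prompt.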

Performance Analysis: Model and Device Comparison

Comparing the Llama3 8B Model Across Different Devices

We know how the NVIDIA 4090_24GB performs. But how does it stack up against other devices? Unfortunately, we don't have data for other devices running the Llama3 8B model under the same conditions.

However, we can make some educated guesses based on general trends. For example, the NVIDIA 4090_24GB's performance suggests it's a powerhouse for running LLMs locally. While other devices might be less powerful, they could still offer good performance depending on your specific needs. The key is to strike the right balance between speed, accuracy, and cost.

A Real-World Analogy: LLMs as Racers

Imagine LLMs as race cars. The NVIDIA 4090_24GB is a high-performance supercar, capable of hitting top speeds. Other devices might be more like compact or mid-range cars, still fast enough for everyday driving but not reaching the same top speeds.

The choice depends on your race track. If you need to win a Formula 1 race, you need the supercar. If you're just going for a casual drive, a smaller car might be more than enough.

Practical Recommendations: Use Cases and Workarounds

Use Cases for NVIDIA 4090_24GB and Llama3 8B

The power of the NVIDIA 4090_24GB shines in demanding LLM scenarios:

- Real-time Text Generation: Create AI-powered chatbots, writing assistants, or text-to-speech applications that respond quickly and seamlessly.

- Code Generation: Develop AI-powered code assistants that can generate, complete, and debug code in real-time.

- Complex Language Tasks: Tackle challenging translation, summarization, or question-answering tasks where speed and accuracy are critical.

Workarounds for Limited Resources

If you don't have access to a top-of-the-line GPU, don't despair! There are workarounds:

- Cloud Computing: Leverage cloud providers like Google Cloud or AWS, equipped with powerful GPUs, to run your LLMs remotely.

- Model Optimization: Use techniques like quantization, model pruning, or knowledge distillation to make your model smaller and faster.

- Open-Source Solutions: Explore open-source LLM implementations like llama.cpp, which can run on less powerful devices.

FAQ: Your LLM Questions Answered

What is quantization and how does it affect LLM performance?

Quantization reduces the numerical precision of the model's weights, for example from 16 bits to about 4 bits each, making the model smaller and faster to run. It is like turning a detailed recipe into a simpler one that uses pre-made sauces and fewer ingredients: easier and quicker to execute, but the result may differ slightly from the original. In the same way, quantization makes the LLM faster while potentially reducing its accuracy in some cases.
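
The idea can be illustrated with a toy example. The sketch below applies simple symmetric 4-bit quantization to a handful of made-up weights; it is a deliberately simplified scheme chosen for illustration, not the actual format used by any particular quantization level, and the function names are hypothetical.

```python
def quantize_block(weights, bits=4):
    """Map floats to small signed integers that share one scale factor."""
    qmax = 2 ** (bits - 1) - 1  # largest code, 7 for 4 bits
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs else 1.0
    codes = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return codes, scale


def dequantize_block(codes, scale):
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]


# made-up weights for illustration only
weights = [0.12, -0.53, 0.98, -0.05, 0.31, -0.88, 0.45, 0.02]

codes, scale = quantize_block(weights)
restored = dequantize_block(codes, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("4-bit codes:", codes)
print(f"largest round-trip error: {max_error:.3f}")
```

Each weight is now stored as an integer between -7 and 7 plus one shared scale, and the round-trip error stays within half a quantization step. That small error is the accuracy cost mentioned above.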

Can I run these models on my laptop?

It depends on your laptop's hardware. If your laptop has a powerful GPU (like an NVIDIA RTX 3060 or higher), you might be able to run smaller LLMs locally. For larger models, you might need a powerful desktop or a dedicated server.

Are there other ways to accelerate LLM performance?

Besides quantization, there are other techniques:

- Model Pruning: Removing unnecessary connections in the model to make it smaller and faster.

- Knowledge Distillation: Training a smaller, faster model to mimic the behavior of a larger, more complex model.
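
As a rough illustration of pruning, the sketch below zeroes out the half of a made-up weight list with the smallest magnitudes. This is one simple variant (unstructured magnitude pruning) picked for illustration; the function name is hypothetical, and real pruning pipelines typically retrain the model afterwards and need sparse storage or kernels to turn the zeros into actual speed gains.

```python
def magnitude_prune(weights, sparsity):
    """Zero out the given fraction of weights with the smallest magnitudes."""
    n_prune = int(len(weights) * sparsity)
    # indices ordered by absolute value, smallest first
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    pruned_idx = set(order[:n_prune])
    return [0.0 if i in pruned_idx else w for i, w in enumerate(weights)]


# made-up weights for illustration only
weights = [0.12, -0.53, 0.98, -0.05, 0.31, -0.88, 0.45, 0.02]

pruned = magnitude_prune(weights, sparsity=0.5)
print(pruned)
```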

Keywords

NVIDIA 4090_24GB, Llama3, Llama 8B, LLMs, Large Language Models, GPU, Performance, Token Generation Speed, Quantization, Q4_K_M, F16, Processing Speed, Generation Speed, Cloud Computing, Model Optimization, Open-Source, llama.cpp