How Fast Can NVIDIA 4080 16GB Run Llama3 8B?

Introduction

The world of large language models (LLMs) is exploding, offering incredible potential for everything from generating creative content to automating tasks. But running these models locally can be a challenge, especially when it comes to performance. That's where powerful GPUs like the NVIDIA 4080_16GB come in. In this deep dive, we'll look at how fast the Llama3 8B model runs on this high-end GPU, compare two quantization options, and see how the setup stacks up against other device and model combinations.

Performance Analysis: Token Generation Speed Benchmarks

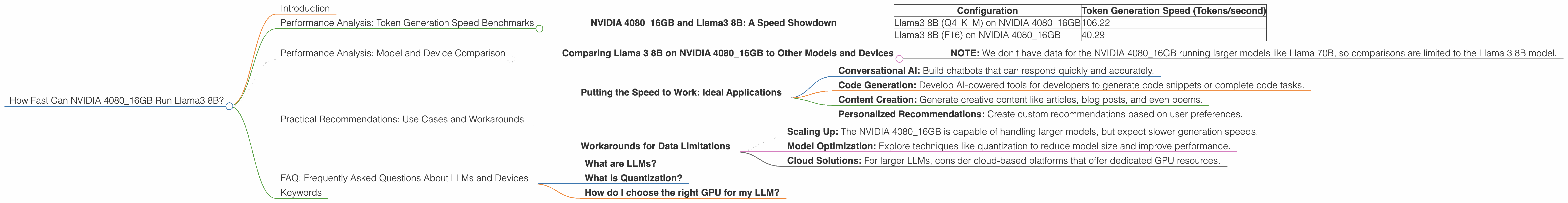

NVIDIA 4080_16GB and Llama3 8B: A Speed Showdown

Let's get straight to the point! The NVIDIA 4080_16GB GPU, a powerhouse in the world of graphics cards, can deliver impressive token generation speeds for the Llama3 8B model. But, as with any performance analysis, context is key. Let's break down the numbers:

| Configuration | Token Generation Speed (tokens/second) |
|---|---|
| Llama3 8B (Q4_K_M) on NVIDIA 4080_16GB | 106.22 |
| Llama3 8B (F16) on NVIDIA 4080_16GB | 40.29 |
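To put these throughput numbers in more intuitive terms, the short Python sketch below converts them into time per token and time for a complete reply. The 500-token reply length is a hypothetical example, and the calculation assumes a steady generation rate, ignoring the time spent processing the prompt.

```python
# Throughput figures from the benchmark table (tokens/second).
BENCHMARKS = {
    "Q4_K_M": 106.22,
    "F16": 40.29,
}


def ms_per_token(tokens_per_second: float) -> float:
    """Average time to generate one token, in milliseconds."""
    return 1000.0 / tokens_per_second


def seconds_for(num_tokens: int, tokens_per_second: float) -> float:
    """Time to generate num_tokens at a steady rate (prompt processing ignored)."""
    return num_tokens / tokens_per_second


for fmt, tps in BENCHMARKS.items():
    print(f"{fmt}: {ms_per_token(tps):.1f} ms/token, "
          f"{seconds_for(500, tps):.1f} s for a hypothetical 500-token reply")

speedup = BENCHMARKS["Q4_K_M"] / BENCHMARKS["F16"]
print(f"Q4_K_M speedup over F16: {speedup:.2f}x")
```

Run as-is, it reports about 9.4 ms per token for Q4_K_M versus about 24.8 ms for F16, a speedup of roughly 2.64x.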

What's the difference between Q4_K_M and F16?

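The 'Q4_K_M' configuration refers to quantization, a technique that reduces the size of a model by storing its parameters in smaller data types. Q4_K_M, one of llama.cpp's "k-quant" formats, stores weights at a little over 4 bits each on average instead of 16, cutting the memory footprint by more than a factor of three. Because token generation is largely limited by how quickly the GPU can read weights from memory, the smaller format also generates faster: here, about 2.6 times faster. F16, on the other hand, stores weights as 16-bit floating-point numbers, keeping them closer to the model's original precision at the cost of a much larger size.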
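A quick back-of-the-envelope calculation shows why the size difference matters. This sketch estimates how much memory the weights alone need in each format. The parameter count (about 8.0 billion) and the average of roughly 4.8 bits per weight for Q4_K_M are approximations assumed for illustration, not figures from the benchmark.

```python
# Rough estimate of the memory needed just to hold a model's weights.
# Assumptions (not from the benchmark): Llama3 8B has ~8.0e9 parameters, and
# Q4_K_M averages ~4.8 bits per weight because it mixes 4-bit and 6-bit blocks.
PARAMS_LLAMA3_8B = 8.0e9

BITS_PER_WEIGHT = {
    "F16": 16.0,
    "Q4_K_M": 4.8,  # approximate average; varies slightly by model
}


def weight_gb(num_params: float, bits_per_weight: float) -> float:
    """Weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


for fmt, bpw in BITS_PER_WEIGHT.items():
    print(f"{fmt}: ~{weight_gb(PARAMS_LLAMA3_8B, bpw):.1f} GB of weights")
```

By this estimate, the F16 weights take about 16 GB, nearly all of the card's memory, while Q4_K_M needs under 5 GB.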

The takeaway? The NVIDIA 4080_16GB handles the Llama3 8B model well in both formats: about 106 tokens/second with Q4_K_M and about 40 tokens/second with F16. The choice between them depends on the specific use case. For applications demanding the highest output quality, F16 might be preferred, but for speed-sensitive tasks, Q4_K_M could be the way to go.

Performance Analysis: Model and Device Comparison

Comparing Llama 3 8B on NVIDIA 4080_16GB to Other Models and Devices

While the NVIDIA 4080_16GB and Llama3 8B combination is impressive, it's always helpful to see how it stacks up against other configurations. Unfortunately, we don't have data for the NVIDIA 4080_16GB running larger models such as Llama3 70B, so the only comparison available here is between the two Llama3 8B formats shown above.

Practical Recommendations: Use Cases and Workarounds

Putting the Speed to Work: Ideal Applications

The fast token generation speed of Llama3 8B on the NVIDIA 4080_16GB makes it suitable for several use cases:

- Conversational AI: Build chatbots that can respond quickly and accurately.

- Code Generation: Develop AI-powered tools for developers to generate code snippets or complete code tasks.

- Content Creation: Generate creative content like articles, blog posts, and even poems.

- Personalized Recommendations: Create custom recommendations based on user preferences.

Workarounds for Data Limitations

While we don't have data for the NVIDIA 4080_16GB running larger models, we can still make educated guesses based on the provided data:

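- Scaling Up: Larger models such as Llama3 70B won't fit entirely in 16 GB of VRAM, even with 4-bit quantization, so running them on this card means offloading part of the model to system RAM and accepting much slower generation.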

- Model Optimization: Use quantization formats such as Q4_K_M to reduce model size; in the benchmark above, Q4_K_M ran Llama3 8B about 2.6 times faster than F16.

- Cloud Solutions: For larger LLMs, consider cloud-based platforms that offer dedicated GPU resources.
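To see why larger models are a stretch for this card, here is a rough fit check. It assumes nominal parameter counts of 8 billion and 70 billion, about 4.8 bits per weight for Q4_K_M, and treats all 16 GiB as usable for weights, ignoring the KV cache and runtime overhead, so real headroom is smaller than shown.

```python
# Does a model's weight data fit in 16 GB of VRAM? A rough check.
# Assumptions: the whole 16 GiB is usable for weights (driver/runtime overhead
# and the KV cache are ignored); parameter counts are nominal (8e9 and 70e9);
# Q4_K_M averages ~4.8 bits per weight.
VRAM_BYTES = 16 * 1024**3


def weight_bytes(num_params: float, bits_per_weight: float) -> float:
    """Bytes needed to store the weights at the given average precision."""
    return num_params * bits_per_weight / 8


def fits(num_params: float, bpw: float, vram_bytes: int = VRAM_BYTES) -> bool:
    """True if the weights alone fit within vram_bytes."""
    return weight_bytes(num_params, bpw) <= vram_bytes


for name, params in [("Llama3 8B", 8e9), ("Llama3 70B", 70e9)]:
    for fmt, bpw in [("F16", 16.0), ("Q4_K_M", 4.8)]:
        gb = weight_bytes(params, bpw) / 1e9
        status = "fits" if fits(params, bpw) else "needs offloading"
        print(f"{name} {fmt}: ~{gb:.0f} GB -> {status}")
```

Even at Q4_K_M, a 70B model needs roughly 42 GB for its weights, well beyond 16 GB, so part of it would have to live in system RAM.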

FAQ: Frequently Asked Questions About LLMs and Devices

What are LLMs?

LLMs are computer programs trained on massive datasets to understand and generate human-like text. They are often used for natural language processing tasks like translation, text summarization, and chatbot interactions.

What is Quantization?

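Quantization is a technique used to reduce the size of a model by converting its parameters to smaller data types. This can improve performance by reducing the amount of data that has to be read from memory for each generated token; on the NVIDIA 4080_16GB, moving Llama3 8B from F16 to Q4_K_M raised generation speed from 40.29 to 106.22 tokens/second.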
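Why does shrinking the weights speed things up? When generating text one token at a time, the GPU has to read roughly all of the model's weights for every token, so memory bandwidth sets a ceiling on throughput. The sketch below compares that ceiling with the measured numbers. It assumes the RTX 4080's published peak bandwidth of 716.8 GB/s, about 8.0 billion parameters, and about 4.8 bits per weight for Q4_K_M, and it ignores KV-cache reads and other overheads, so treat the ceilings as rough.

```python
# Back-of-the-envelope ceiling on token generation speed.
# Each new token requires reading (roughly) every weight once, so
# throughput <= memory bandwidth / bytes of weights.
# Assumptions: 716.8 GB/s peak bandwidth (RTX 4080 spec), ~8.0e9 parameters
# for Llama3 8B, ~4.8 bits/weight average for Q4_K_M.
BANDWIDTH_GB_PER_S = 716.8
PARAMS = 8.0e9


def max_tokens_per_second(bits_per_weight: float) -> float:
    """Bandwidth-bound upper limit on tokens/second for a given precision."""
    weight_gb = PARAMS * bits_per_weight / 8 / 1e9
    return BANDWIDTH_GB_PER_S / weight_gb


# (bits per weight, measured tokens/second from the benchmark table)
measured = {"F16": (16.0, 40.29), "Q4_K_M": (4.8, 106.22)}
for fmt, (bpw, tps) in measured.items():
    ceiling = max_tokens_per_second(bpw)
    print(f"{fmt}: ceiling ~{ceiling:.0f} tok/s, measured {tps} tok/s "
          f"({tps / ceiling:.0%} of ceiling)")
```

Under these assumptions, F16 reaches about 90% of its estimated ceiling, while Q4_K_M reaches about 71% of its own, which is why the measured speedup (about 2.6x) is smaller than the roughly 3.3x reduction in weight size.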

How do I choose the right GPU for my LLM?

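Start with memory: the model, at your chosen quantization, needs to fit in the GPU's VRAM with room left for the context. Then weigh your performance requirements and your budget. GPUs with more memory and processing power are better suited for larger models.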
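Memory for the weights is only part of the picture: the KV cache, which holds attention state for the context, grows with context length. This sketch adds the two together for Llama3 8B. The architecture values (32 layers, 8 key/value heads, head dimension 128), the F16 cache, and the 8,192-token context are assumptions for illustration, and activations and runtime overhead are left out, so the result is a lower bound.

```python
# Rough VRAM budget: weights + KV cache for a given context length.
# Assumptions: Llama3 8B shape values below, an F16 KV cache (2 bytes per
# value), ~4.8 bits/weight for Q4_K_M, and no allowance for activations or
# runtime overhead, so treat the result as a lower bound.
from dataclasses import dataclass


@dataclass
class ModelShape:
    num_params: float
    n_layers: int
    n_kv_heads: int
    head_dim: int


def kv_cache_bytes(shape: ModelShape, context_len: int, bytes_per_value: int = 2) -> int:
    # Factor of 2: one key and one value vector per layer, per KV head, per token.
    return 2 * shape.n_layers * shape.n_kv_heads * shape.head_dim * bytes_per_value * context_len


def vram_estimate_gib(shape: ModelShape, bits_per_weight: float, context_len: int) -> float:
    """Weights plus KV cache, in GiB."""
    weights = shape.num_params * bits_per_weight / 8
    return (weights + kv_cache_bytes(shape, context_len)) / 1024**3


LLAMA3_8B = ModelShape(num_params=8.0e9, n_layers=32, n_kv_heads=8, head_dim=128)

for fmt, bpw in [("F16", 16.0), ("Q4_K_M", 4.8)]:
    est = vram_estimate_gib(LLAMA3_8B, bpw, context_len=8192)
    print(f"{fmt} at 8K context: at least ~{est:.1f} GiB")
```

Under these assumptions the KV cache at 8K context comes to exactly 1 GiB, pushing the F16 estimate to about 15.9 GiB, right at the edge of a 16 GB card, while Q4_K_M stays around 5.5 GiB.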

Keywords

NVIDIA 4080_16GB, Llama3 8B, Llama 70B, LLM, Large Language Model, GPU, Token Generation Speed, Quantization, Q4_K_M, F16, Performance, Efficiency, Conversational AI, Code Generation, Content Creation, Recommendations, Use Cases, Workarounds