How Fast Can NVIDIA 4070 Ti 12GB Run Llama3 8B?

Introduction

The world of large language models (LLMs) is exploding, and with it, the demand for powerful hardware to run them locally. But just how fast can these LLMs run on different devices? This article dives deep into the performance of Llama3 8B running on an NVIDIA 4070 Ti 12GB graphics card, exploring its strengths, limitations, and practical use cases.

Imagine running a capable AI model on your own computer: it can draft text, translate languages, and answer your questions without sending your data to a remote server. This is the promise of local LLMs, and the NVIDIA 4070 Ti 12GB is a popular choice for running them.

Performance Analysis: Token Generation Speed Benchmarks

Let's get down to the nitty-gritty: how many tokens per second (tokens/sec) can the NVIDIA 4070 Ti 12GB churn out when running Llama3 8B, the 8-billion-parameter model in Meta's Llama 3 family?

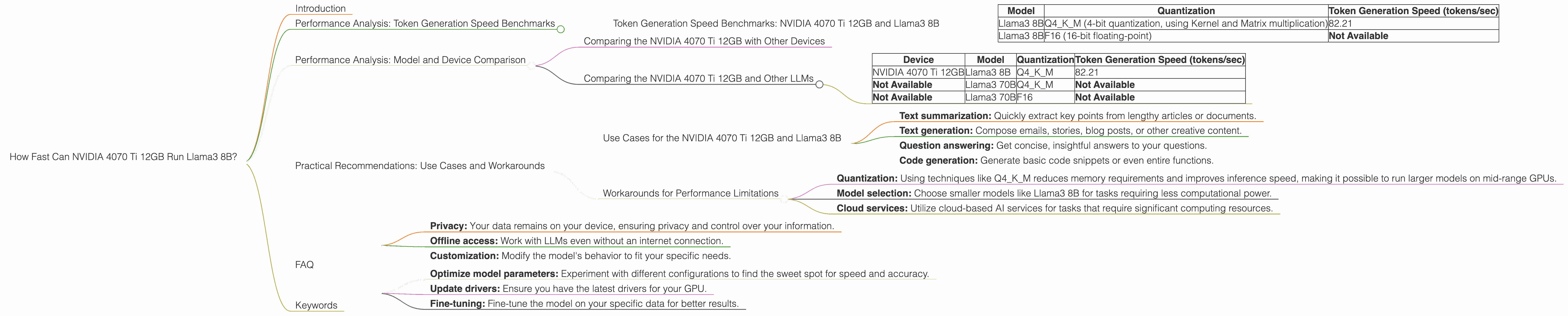

Token Generation Speed Benchmarks: NVIDIA 4070 Ti 12GB and Llama3 8B

| Model | Quantization | Token Generation Speed (tokens/sec) |
|---|---|---|
| Llama3 8B | Q4_K_M (llama.cpp 4-bit k-quant, medium variant) | 82.21 |
| Llama3 8B | F16 (16-bit floating point, unquantized) | Not available |

Key Takeaway: The NVIDIA 4070 Ti 12GB generates about 82 tokens per second with Llama3 8B at Q4_K_M quantization, a technique that significantly reduces the model's memory footprint. No F16 result is available. The most likely reason is that the unquantized weights alone take roughly 16 GB (8 billion parameters × 2 bytes), which is more than the card's 12 GB of VRAM. As a result, we can't compare speeds across quantization levels on this card.
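
To put 82.21 tokens/sec in everyday terms, the short Python sketch below converts the rate into per-token latency and total reply time. It assumes the rate stays constant for the whole reply. It also ignores prompt processing (the delay before the first token appears), so real-world timings will be somewhat longer.

```python
RATE_TOK_PER_S = 82.21  # Llama3 8B, Q4_K_M, from the benchmark table

def ms_per_token(rate: float) -> float:
    """Average time to emit one token, in milliseconds."""
    return 1000.0 / rate

def seconds_for(n_tokens: int, rate: float) -> float:
    """Time to generate n_tokens at a constant rate (prompt processing ignored)."""
    return n_tokens / rate

if __name__ == "__main__":
    print(f"{ms_per_token(RATE_TOK_PER_S):.1f} ms per token")
    for n in (100, 500, 2000):  # example reply lengths, not measurements
        print(f"{n:>5} tokens -> {seconds_for(n, RATE_TOK_PER_S):.1f} s")
```

At this rate each token takes about 12 ms, and a 500-token answer takes roughly 6 seconds.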

What are tokens? Think of them as the building blocks of language models. A tokenizer splits text into tokens. A token is often a whole word, but it can also be a word fragment, a punctuation mark, or a space attached to a word. The more tokens an LLM generates per second, the faster its responses appear.
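
To make the idea concrete, here is a toy Python tokenizer that greedily matches the longest entry from a tiny, made-up vocabulary. It is only an illustration. Llama 3's real tokenizer is a byte-pair-encoding (BPE) tokenizer with a vocabulary of roughly 128K tokens, and it would split this text differently.

```python
# Toy vocabulary, invented for illustration (not Llama 3's real vocabulary).
VOCAB = {"token", "ization", "is", "fun", " ", "!"}

def tokenize(text: str, vocab: set[str]) -> list[str]:
    """Greedy longest-match tokenization; unknown characters become single tokens."""
    max_len = max(len(piece) for piece in vocab)
    tokens: list[str] = []
    i = 0
    while i < len(text):
        # Try the longest vocabulary entry that matches at position i.
        for length in range(min(max_len, len(text) - i), 0, -1):
            piece = text[i:i + length]
            if piece in vocab:
                tokens.append(piece)
                i += length
                break
        else:
            # No vocabulary entry matched: fall back to a single character.
            tokens.append(text[i])
            i += 1
    return tokens

if __name__ == "__main__":
    print(tokenize("tokenization is fun!", VOCAB))
```

Here `tokenize("tokenization is fun!", VOCAB)` returns `['token', 'ization', ' ', 'is', ' ', 'fun', '!']`, so three words become seven tokens.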

Performance Analysis: Model and Device Comparison

While the NVIDIA 4070 Ti 12GB delivers decent performance with Llama3 8B, how does it stack up against other devices and LLMs?

Comparing the NVIDIA 4070 Ti 12GB with Other Devices

Unfortunately, the available benchmark data covers only the NVIDIA 4070 Ti 12GB, so we can't compare its Llama3 8B performance with other devices.

Comparing the NVIDIA 4070 Ti 12GB and Other LLMs

The table below places the NVIDIA 4070 Ti 12GB's Llama3 8B result alongside Llama3 70B entries. Model size usually has a large impact on token generation speed, but no 70B results are available for this card.

| Device | Model | Quantization | Token Generation Speed (tokens/sec) |
|---|---|---|---|
| NVIDIA 4070 Ti 12GB | Llama3 8B | Q4_K_M | 82.21 |
| NVIDIA 4070 Ti 12GB | Llama3 70B | Q4_K_M | Not available |
| NVIDIA 4070 Ti 12GB | Llama3 70B | F16 | Not available |

Observations: Because there are no Llama3 70B results, the data doesn't support a direct speed comparison with Llama3 8B. The gap in memory requirements is clear, though. Even at 4-bit quantization, the 70B model's weights take more than 40 GB, several times the 4070 Ti's 12 GB of VRAM, so the model cannot run entirely on this GPU.
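
A back-of-the-envelope estimate shows why F16 and 70B results are likely missing for this card. The Python sketch below multiplies parameter count by bits per weight and makes three simplifications:

- The parameter counts are rounded.
- The 4.85 bits/weight figure for Q4_K_M is an assumed approximate average, since the format mixes block types.
- The KV cache and runtime overhead are ignored, so actual VRAM use is higher.

```python
VRAM_GB = 12.0  # NVIDIA 4070 Ti 12GB

# Approximate values: parameter counts are rounded, and 4.85 bits/weight
# for Q4_K_M is an assumed average (the format mixes block types).
PARAMS = {"Llama3 8B": 8.0e9, "Llama3 70B": 70.6e9}
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.85}

def weight_gb(n_params: float, bits_per_weight: float) -> float:
    """Memory for the weights alone, in GB (KV cache and overhead excluded)."""
    return n_params * bits_per_weight / 8 / 1e9

if __name__ == "__main__":
    for model, n_params in PARAMS.items():
        for quant, bits in BITS_PER_WEIGHT.items():
            gb = weight_gb(n_params, bits)
            verdict = "fit" if gb < VRAM_GB else "do not fit"
            print(f"{model:<11} {quant:<7} ~{gb:6.1f} GB: weights {verdict} in {VRAM_GB:.0f} GB")
```

Only Llama3 8B at Q4_K_M (about 4.9 GB of weights) fits comfortably. Llama3 8B at F16 needs about 16 GB, and Llama3 70B needs roughly 43 GB even at Q4_K_M.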

Practical Recommendations: Use Cases and Workarounds

Use Cases for the NVIDIA 4070 Ti 12GB and Llama3 8B

The NVIDIA 4070 Ti 12GB with Llama3 8B is a suitable combination for various tasks, including:

- Text summarization: Quickly extract key points from lengthy articles or documents.

- Text generation: Compose emails, stories, blog posts, or other creative content.

- Question answering: Get concise, insightful answers to your questions.

- Code generation: Generate basic code snippets or even entire functions.

Workarounds for Performance Limitations

The NVIDIA 4070 Ti 12GB is a powerful GPU, but its 12 GB of VRAM limits which models it can run, and it may struggle with larger LLMs or demanding workloads. Here are some workarounds to consider:

- Quantization: Formats like Q4_K_M reduce memory requirements and usually improve inference speed, making it possible to fit larger models on mid-range GPUs.

- Model selection: Choose smaller models like Llama3 8B for tasks requiring less computational power.

- Cloud services: Utilize cloud-based AI services for tasks that require significant computing resources.

FAQ

Q: What is quantization?

A: Quantization in machine learning is like simplifying a large vocabulary into a smaller, more manageable set. It converts the model's weights (the parameters that encode what the model has learned) from 16- or 32-bit floating-point numbers to lower-precision formats such as 4-bit integers. This reduces the model's size and memory usage, allowing it to run faster on less powerful hardware. The trade-off is a small loss of precision, which can slightly reduce output quality.
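
The core idea can be sketched in a few lines of Python. This is a simplified symmetric 4-bit scheme with one scale per block of weights and integers clamped to -8..7. It is not llama.cpp's actual Q4_K_M layout, which adds super-blocks and quantized scales. The block size of 32 and the 16-bit scale storage are assumptions chosen for illustration.

```python
import random

BLOCK_SIZE = 32  # weights per block (assumed for illustration)
SCALE_BITS = 16  # assume each block's scale would be stored as a 16-bit float

def quantize_block(block: list[float]) -> tuple[float, list[int]]:
    """Map floats to 4-bit integers in [-8, 7] sharing one scale (absmax scaling)."""
    amax = max(abs(w) for w in block)
    scale = amax / 7 if amax > 0 else 1.0
    q = [max(-8, min(7, round(w / scale))) for w in block]
    return scale, q

def dequantize_block(scale: float, q: list[int]) -> list[float]:
    """Approximate the original weights from the integers and the shared scale."""
    return [scale * v for v in q]

def bits_per_weight(block_size: int = BLOCK_SIZE, scale_bits: int = SCALE_BITS) -> float:
    """Storage cost: 4 bits per weight plus the block's scale, spread over the block."""
    return (block_size * 4 + scale_bits) / block_size

if __name__ == "__main__":
    random.seed(0)
    weights = [random.gauss(0.0, 0.02) for _ in range(BLOCK_SIZE)]
    scale, q = quantize_block(weights)
    restored = dequantize_block(scale, q)
    max_err = max(abs(a - b) for a, b in zip(weights, restored))
    print(f"scale={scale:.5f}  max error={max_err:.5f}  (at most scale/2)")
    print(f"{bits_per_weight()} bits/weight vs 16 for F16")
```

Each restored weight lands within half a scale step of the original. Under these assumptions, storage drops to 4.5 bits per weight, compared with 16 for F16.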

Q: What are the benefits of running LLMs locally?

A: Running LLMs locally offers several advantages:

- Privacy: Your data remains on your device, ensuring privacy and control over your information.

- Offline access: Work with LLMs even without an internet connection.

- Customization: Modify the model's behavior to fit your specific needs.

Q: Can I use this hardware to run other LLMs?

A: Yes, the NVIDIA 4070 Ti 12GB can be used to run other LLMs, but their performance may vary depending on the model size, complexity, and quantization techniques. It's crucial to research and benchmark different LLMs to find the best fit for your needs.
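
If you want to benchmark models yourself, a minimal Python timing harness might look like the sketch below. `generate` is a hypothetical streaming function standing in for whatever runtime you use; it must yield one token at a time. `fake_generate` is a stand-in that sleeps about 10 ms per token. The timer starts after the first token arrives, so prompt processing is excluded and only generation speed is measured.

```python
import time
from typing import Callable, Iterator

def tokens_per_second(generate: Callable[[str, int], Iterator[str]],
                      prompt: str, max_tokens: int) -> float:
    """Generation throughput of a streaming generator, excluding the first token.

    `generate` is a hypothetical callable that yields one token at a time.
    """
    stream = iter(generate(prompt, max_tokens))
    if next(stream, None) is None:  # wait for the first token (prompt processing)
        return 0.0
    start = time.perf_counter()
    count = 0
    for _ in stream:  # time only the tokens after the first
        count += 1
    elapsed = time.perf_counter() - start
    return count / elapsed if elapsed > 0 else 0.0

def fake_generate(prompt: str, max_tokens: int) -> Iterator[str]:
    """Stand-in model: sleeps about 10 ms per token instead of running an LLM."""
    for i in range(max_tokens):
        time.sleep(0.01)
        yield f"tok{i}"

if __name__ == "__main__":
    rate = tokens_per_second(fake_generate, "Hello", 51)
    print(f"{rate:.1f} tokens/sec")
```

With the stand-in, the reported rate is at most 100 tokens/sec. To compare models or settings on your own GPU, swap in a real model's streaming call.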

Q: How can I improve the performance of LLMs on my NVIDIA 4070 Ti 12GB?

A: Here are a few tips:

- Tune runtime settings: Experiment with settings such as quantization level and context length to find the sweet spot between speed and output quality.

- Update drivers: Ensure you have the latest drivers for your GPU.

- Fine-tuning: Fine-tune the model on your own data for better output quality on your tasks. Note that this improves results, not speed.

Keywords

NVIDIA 4070 Ti 12GB, Llama3 8B, LLM, Large Language Model, Token Generation Speed, Quantization, Q4_K_M, F16, Performance, Local AI, Inference, GPU, Text Generation, Text Summarization, Question Answering, Code Generation, Use Cases, Workarounds, Model Selection, Cloud Services, Privacy, Offline Access, Customization.