How Fast Can the NVIDIA 4070 Ti 12GB Run Llama 3 70B?

Introduction

The world of large language models (LLMs) is buzzing with excitement, and for good reason. These AI marvels can generate human-like text, translate languages, write different kinds of creative content, and answer your questions in an informative way. But running these models locally on your own machine can be a challenge, especially with the bigger ones like Llama 3 70B. That's where powerful GPUs like the NVIDIA 4070 Ti 12GB come in.

This article looks at the NVIDIA 4070 Ti 12GB as a platform for the Llama 3 70B model: how fast it can generate text and what factors influence its performance. We'll also compare it against a smaller Llama 3 model and provide practical recommendations for using this GPU.

Performance Analysis: Token Generation Speed Benchmarks

NVIDIA 4070 Ti 12GB and Llama 3 70B

Unfortunately, we don't have benchmark data for the NVIDIA 4070 Ti 12GB running Llama 3 70B. That raises an obvious question: why haven't we seen benchmarks for this pairing? There are several likely reasons:

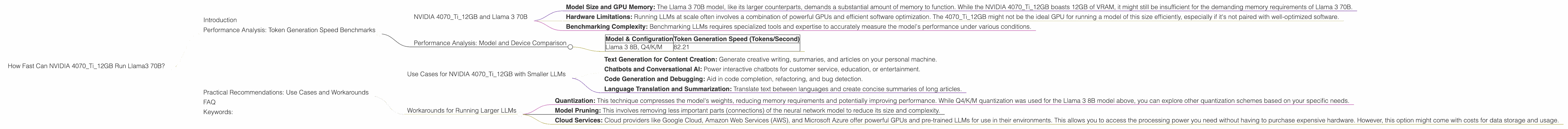

- Model Size and GPU Memory: Llama 3 70B has about 70 billion parameters. At 16 bits per weight that is roughly 140 GB for the weights alone, and even at 4 bits per weight it is roughly 35 GB, before counting the KV cache and runtime overhead. The NVIDIA 4070 Ti 12GB has 12GB of VRAM, so the model cannot fit entirely on the card.

- Hardware Limitations: Running LLMs at scale often involves a combination of powerful GPUs and efficient software optimization. The NVIDIA 4070 Ti 12GB is unlikely to run a model of this size efficiently, especially if it's not paired with well-optimized software.

- Benchmarking Complexity: Benchmarking LLMs requires specialized tools and expertise to accurately measure the model's performance under various conditions.
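The memory argument in the list above can be checked with a quick back-of-the-envelope calculation. The sketch below is only an approximation: it counts model weights and nothing else (no KV cache, activations, or runtime overhead), uses the nominal parameter counts from the model names, and the helper `weight_memory_gb` is a hypothetical name introduced for illustration.

```python
# Rough, weight-only memory estimate. Real usage is higher because of the
# KV cache, activations, and runtime overhead, which are ignored here.
GB = 1e9  # decimal gigabytes

def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in GB."""
    return n_params * bits_per_weight / 8 / GB

VRAM_GB = 12  # nominal VRAM of the card discussed in this article

# Nominal parameter counts taken from the model names (an approximation).
models = {"Llama 3 8B": 8e9, "Llama 3 70B": 70e9}

for name, n_params in models.items():
    for bits in (16, 8, 4):
        size = weight_memory_gb(n_params, bits)
        verdict = "fits" if size <= VRAM_GB else "does not fit"
        print(f"{name} @ {bits}-bit: ~{size:.0f} GB of weights, "
              f"{verdict} in {VRAM_GB} GB")
```

Under these assumptions, the 70B weights exceed 12 GB at every bit width shown, while the 8B weights fit at 8 bits and below.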

Performance Analysis: Model and Device Comparison

Even though we lack data for the NVIDIA 4070 Ti 12GB running Llama 3 70B, we can still gain valuable insights by comparing it to other combinations based on what information is available.

Let's look at the performance of the NVIDIA 4070 Ti 12GB with the Llama 3 8B model quantized with Q4_K_M, a 4-bit quantization format that reduces memory usage and typically increases inference speed.

| Model & Configuration | Token Generation Speed (Tokens/Second) |
|---|---|
| Llama 3 8B, Q4_K_M | 82.21 |

This data tells us that the NVIDIA 4070 Ti 12GB can generate text at a rate of 82.21 tokens per second when running the Llama 3 8B model with Q4_K_M quantization. This is a respectable speed, especially considering the model size.

Let's put this into perspective. Typing at 60 words per minute works out to 1 word per second. Even allowing for the fact that a token is usually a little shorter than an English word, the NVIDIA 4070 Ti 12GB with Llama 3 8B is producing text dozens of times faster than a typical human typist.
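As a rough sketch of that comparison, the snippet below converts the measured token rate into an approximate word rate. The 0.75 words-per-token ratio is an assumption (a common rule of thumb for English text, not a measured value for Llama 3), and the 500-token reply length is purely illustrative.

```python
TOKENS_PER_SECOND = 82.21  # Llama 3 8B figure from the table above
WORDS_PER_TOKEN = 0.75     # assumption: rule of thumb for English text
TYPING_WPM = 60            # typing speed used in the comparison

def words_per_second(tokens_per_second: float, words_per_token: float) -> float:
    """Convert a token rate into an approximate word rate."""
    return tokens_per_second * words_per_token

def seconds_to_generate(n_tokens: int, tokens_per_second: float) -> float:
    """Time needed to generate n_tokens at a steady token rate."""
    return n_tokens / tokens_per_second

typing_wps = TYPING_WPM / 60  # 60 words per minute is 1 word per second
model_wps = words_per_second(TOKENS_PER_SECOND, WORDS_PER_TOKEN)

print(f"typing: {typing_wps:.1f} words/s")
print(f"model:  ~{model_wps:.1f} words/s (~{model_wps / typing_wps:.0f}x faster)")
print(f"500-token reply: ~{seconds_to_generate(500, TOKENS_PER_SECOND):.1f} s")
```

The exact multiple depends on the words-per-token ratio you assume, so treat the result as an order-of-magnitude figure rather than a benchmark.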

Practical Recommendations: Use Cases and Workarounds

Use Cases for NVIDIA 4070 Ti 12GB with Smaller LLMs

While the NVIDIA 4070 Ti 12GB is not a good match for the Llama 3 70B model, it can still be a powerful and cost-effective option for smaller LLMs or for specific use cases that require local processing.

- Text Generation for Content Creation: Generate creative writing, summaries, and articles on your personal machine.

- Chatbots and Conversational AI: Power interactive chatbots for customer service, education, or entertainment.

- Code Generation and Debugging: Aid in code completion, refactoring, and bug detection.

- Language Translation and Summarization: Translate text between languages and create concise summaries of long articles.

Workarounds for Running Larger LLMs

If your heart is set on running models like Llama 3 70B locally, even with a GPU like the NVIDIA 4070 Ti 12GB, there are some workarounds you can consider:

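- Quantization: This technique compresses the model's weights by storing them at lower precision, reducing memory requirements and potentially improving performance. While Q4_K_M quantization was used for the Llama 3 8B model above, you can explore other quantization schemes based on your specific needs. Keep in mind that at around 4 bits per weight a 70B model still needs roughly 35 GB for its weights, so quantization alone will not fit it into 12GB of VRAM.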

- Model Pruning: This involves removing less important parts (connections) of the neural network model to reduce its size and complexity.

- Cloud Services: Cloud providers like Google Cloud, Amazon Web Services (AWS), and Microsoft Azure offer powerful GPUs and pre-trained LLMs for use in their environments. This allows you to access the processing power you need without having to purchase expensive hardware. However, this option comes with ongoing costs for compute time, data storage, and usage.
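To make the quantization idea from the list above more concrete, here is a minimal toy sketch of symmetric 4-bit quantization on one small block of weights. It is not the Q4_K_M scheme used in the benchmark, which is more elaborate; the function names and the randomly generated weights are hypothetical and exist only for illustration.

```python
import random

def quantize_block(weights, bits=4):
    """Symmetric quantization: signed integer codes sharing one float scale."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit codes
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Round each weight to the nearest code and clamp to the valid range.
    codes = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return codes, scale

def dequantize_block(codes, scale):
    """Recover approximate float weights from the integer codes."""
    return [c * scale for c in codes]

random.seed(0)
# Hypothetical weights: 32 small values, standing in for one block of a layer.
block = [random.gauss(0.0, 0.02) for _ in range(32)]

codes, scale = quantize_block(block)
restored = dequantize_block(codes, scale)
max_err = max(abs(a - b) for a, b in zip(block, restored))

print("first codes:", codes[:8])
print(f"scale: {scale:.6f}, max abs error: {max_err:.6f}")
```

Each weight in this toy version is stored as a 4-bit code plus one shared scale per block instead of a 16-bit float, which is where the memory saving comes from; the rounding error per weight is bounded by half the scale.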

FAQ

Q: What's the difference between Llama 3 8B and Llama 3 70B?

A: The difference lies in the size of the model! "B" stands for billions of parameters, which are the building blocks of the neural network. 8B means the model has about 8 billion parameters, while 70B indicates about 70 billion parameters. Larger models like Llama 3 70B are more powerful and can perform more complex language tasks, but they also require more computational resources.

Q: What’s quantization, and why is it used for LLMs?

A: Think of quantization as a way to shrink a large model while still preserving most of its intelligence. It's like turning a detailed, high-resolution image into a smaller, compressed version. It reduces the memory required to store and process the model without sacrificing a lot of accuracy.

Q: What are the advantages of running LLMs locally?

A: Running an LLM locally gives you complete control over your data and processing, particularly when you need to protect privacy or handle sensitive information. It can be a valuable option for applications that demand low latency or real-time processing.

Keywords:

NVIDIA 4070 Ti 12GB, Llama 3 70B, LLM, Large Language Model, GPU, Token Generation Speed, Performance Benchmark, Quantization, Q4_K_M, Model Pruning, Cloud Services, Local Processing, Content Creation, Chatbots, Code Generation, Language Translation, Summarization