How Fast Can NVIDIA 3090 24GB x2 Run Llama3 8B?

Introduction

The world of large language models (LLMs) is exploding, and with it, the need for powerful hardware to run these massive models locally. Everyone wants to harness the power of LLMs for tasks like text generation, translation, and code completion, but it's not always easy to find the hardware that can keep up.

In this deep dive, we'll explore the performance of the NVIDIA 3090 24GB x2 configuration when running the Llama3 8B model. We'll analyze token generation and processing speeds, looking at different quantization levels, and compare these results to other devices. We'll also discuss practical recommendations and use cases for this powerful setup.

So, buckle up, geeks, and get ready to delve into the fascinating world of local LLM performance.

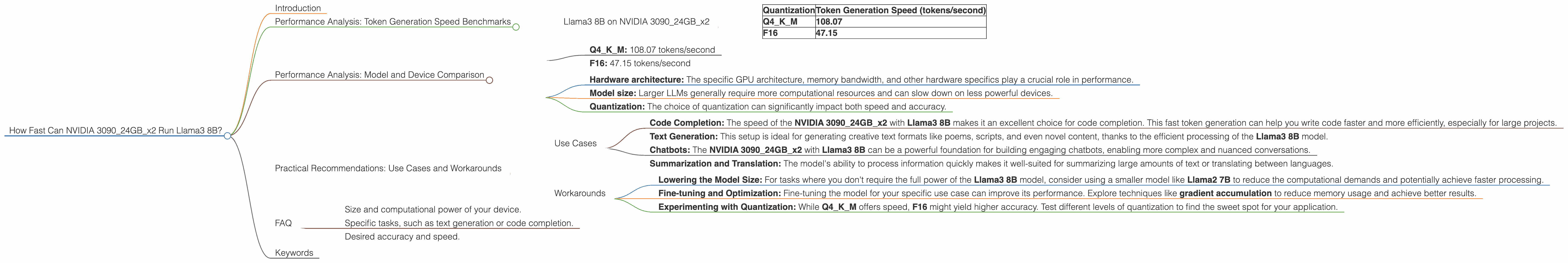

Performance Analysis: Token Generation Speed Benchmarks

Llama3 8B on NVIDIA 3090 24GB x2

Let's dive into the heart of the matter: token generation speed. This is where the rubber meets the road, and we're eager to see how fast our dual 3090 setup can churn out those tokens.

| Quantization | Token Generation Speed (tokens/second) |
|---|---|
| Q4_K_M | 108.07 |
| F16 | 47.15 |

- Q4_K_M is a 4-bit quantization format from llama.cpp's "k-quant" family; the M denotes the medium variant. It is a highly compressed format that trades some accuracy for a smaller memory footprint and higher speed.

- F16 is the half-precision (16-bit) floating-point format. The weights are stored without quantization, which tends to preserve accuracy but makes the model larger and generation slower.

As you can see, the Q4_K_M configuration generates tokens about 2.3 times faster than the F16 configuration. This is likely because the quantized weights are much smaller, so less data has to be read from GPU memory for each generated token.
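
One way to sanity-check this explanation is to compare approximate weight sizes with the measured speeds. The sketch below assumes roughly 8.03 billion parameters for Llama3 8B and about 4.9 bits per weight for the 4-bit format; both figures are approximations that do not come from the benchmark, and the function name is ours.

```python
# Rough weight-size estimate versus measured speedup.
# Assumptions (not from the benchmark): ~8.03e9 parameters, ~4.9 bits per
# weight for the 4-bit quantized format, 16 bits per weight for F16.
PARAMS = 8.03e9
BITS_PER_WEIGHT = {"Q4_K_M": 4.9, "F16": 16.0}
MEASURED_TPS = {"Q4_K_M": 108.07, "F16": 47.15}  # tokens/second from the table


def weight_size_gb(quant: str) -> float:
    """Approximate size of the weights in gigabytes (10^9 bytes)."""
    return PARAMS * BITS_PER_WEIGHT[quant] / 8 / 1e9


size_ratio = weight_size_gb("F16") / weight_size_gb("Q4_K_M")
speedup = MEASURED_TPS["Q4_K_M"] / MEASURED_TPS["F16"]

for quant in MEASURED_TPS:
    print(f"{quant}: ~{weight_size_gb(quant):.1f} GB, {MEASURED_TPS[quant]} tokens/s")
print(f"size ratio ~{size_ratio:.2f}x, measured speedup ~{speedup:.2f}x")
```

Under these assumptions the quantized weights are roughly 3.3 times smaller while generation is about 2.3 times faster, so the smaller footprint plausibly explains much of the gain, though not all of the size reduction turns into speed.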

To put these speeds in perspective, consider this: if you were to transcribe a typical book at 100 words per minute, you'd be producing around 150 tokens per minute (at roughly 1.5 tokens per word). The NVIDIA 3090 24GB x2 with Llama3 8B in Q4_K_M configuration can smash through about 6,480 tokens per minute, which is roughly 43 times faster than transcribing a book.
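
The comparison can be checked with a few lines of arithmetic. This is only a back-of-the-envelope sketch: the figure of 1.5 tokens per word is a rough rule of thumb we assume here, not a measured value for the Llama3 tokenizer.

```python
# Back-of-the-envelope check of the transcription comparison.
# Assumption: ~1.5 tokens per English word (rule of thumb, not measured).
TOKENS_PER_WORD = 1.5
TYPING_WORDS_PER_MINUTE = 100
Q4_TOKENS_PER_SECOND = 108.07  # measured 4-bit generation speed

typing_tokens_per_minute = TYPING_WORDS_PER_MINUTE * TOKENS_PER_WORD
model_tokens_per_minute = Q4_TOKENS_PER_SECOND * 60
ratio = model_tokens_per_minute / typing_tokens_per_minute

print(f"transcribing: {typing_tokens_per_minute:.0f} tokens/minute")
print(f"model:        {model_tokens_per_minute:.0f} tokens/minute")
print(f"ratio:        ~{ratio:.0f}x")
```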

Performance Analysis: Model and Device Comparison

We've seen how fast the NVIDIA 3090 24GB x2 can move with the Llama3 8B model. But how does it stack up against other setups? This is where things get truly interesting.

Unfortunately, there's no data available for Llama3 70B with the NVIDIA 3090 24GB x2 configuration. We'll focus solely on the Llama3 8B model in this comparison.

Here's what we know about the NVIDIA 3090 24GB x2 with the Llama3 8B model:

- Q4_K_M: 108.07 tokens/second

- F16: 47.15 tokens/second

Remember, these numbers are just a starting point for understanding how different setups can handle LLMs. Several factors affect the results:

- Hardware architecture: The specific GPU architecture, memory bandwidth, and other hardware specifics play a crucial role in performance.

- Model size: Larger LLMs generally require more computational resources and can slow down on less powerful devices.

- Quantization: The choice of quantization can significantly impact both speed and accuracy.
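
Because results depend on all of these factors, it is worth measuring on your own hardware. Below is a minimal timing sketch, not the method used for the numbers above. The `generate` callable is a hypothetical wrapper around whatever runtime you use, and `fake_generate` is a stand-in so the example runs without a model.

```python
import time
from typing import Callable


def tokens_per_second(generate: Callable[[str, int], int],
                      prompt: str,
                      max_tokens: int,
                      runs: int = 3) -> float:
    """Average throughput over several runs.

    `generate` is a hypothetical wrapper around your runtime; it must
    return the number of tokens it actually produced.
    """
    total_tokens = 0
    total_seconds = 0.0
    for _ in range(runs):
        start = time.perf_counter()
        total_tokens += generate(prompt, max_tokens)
        total_seconds += time.perf_counter() - start
    return total_tokens / total_seconds


def fake_generate(prompt: str, max_tokens: int) -> int:
    """Stand-in backend: pretends each token takes about 1 ms."""
    time.sleep(0.001 * max_tokens)
    return max_tokens


if __name__ == "__main__":
    print(f"{tokens_per_second(fake_generate, 'Hello', 50):.1f} tokens/s")
```

Note that this times the whole call, so with a real backend the result includes the time spent reading the prompt as well as generating the reply. Keep prompts short if you want a number closer to pure generation speed.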

Practical Recommendations: Use Cases and Workarounds

Now that we've explored the performance characteristics of the NVIDIA 3090 24GB x2 with Llama3 8B, let's talk about how you can best utilize this setup for your tasks.

Use Cases

- Code Completion: The speed of the NVIDIA 3090 24GB x2 with Llama3 8B makes it an excellent choice for code completion. This fast token generation can help you write code faster and more efficiently, especially for large projects.

- Text Generation: This setup is ideal for generating creative text formats like poems, scripts, and even novel content, thanks to the efficient processing of the Llama3 8B model.

- Chatbots: The NVIDIA 3090 24GB x2 with Llama3 8B can be a powerful foundation for building engaging chatbots, enabling more complex and nuanced conversations.

- Summarization and Translation: The model's ability to process information quickly makes it well-suited for summarizing large amounts of text or translating between languages.

Workarounds

- Lowering the Model Size: For tasks where you don't require the full power of the Llama3 8B model, consider using a smaller model like Llama2 7B to reduce the computational demands and potentially achieve faster processing.

- Fine-tuning and Optimization: Fine-tuning the model for your specific use case can improve the quality of its output. Techniques like gradient accumulation reduce memory usage during training, which can help a fine-tuning run fit on this hardware.

- Experimenting with Quantization: While Q4_K_M offers speed, F16 might yield higher accuracy. Test different levels of quantization to find the sweet spot for your application.
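
The quantization trade-off can be framed as a simple rule: pick the highest-precision format that still meets your speed target. The sketch below uses the two speeds measured in this article. The helper name and the idea of ranking formats by precision are our assumptions, and the sketch does not measure accuracy at all.

```python
from typing import Optional

# Measured throughput from this article, ordered from highest to lowest precision.
MEASURED_TPS = [("F16", 47.15), ("Q4_K_M", 108.07)]


def pick_quantization(min_tokens_per_second: float) -> Optional[str]:
    """Return the highest-precision format that meets the speed target."""
    for name, tps in MEASURED_TPS:
        if tps >= min_tokens_per_second:
            return name
    return None  # nothing measured here is fast enough


print(pick_quantization(30))   # F16
print(pick_quantization(60))   # Q4_K_M
print(pick_quantization(200))  # None
```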

FAQ

Q: What is quantization, and why is it important?

A: Quantization is a technique used to reduce the size of a model by representing its weights (and sometimes its activations) with fewer bits. Think of it like compressing an image: you reduce the file size without losing too much detail (hopefully!). Quantization allows us to run larger LLMs on devices with limited memory and reduces the time it takes to load and run the model.
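
To make the idea concrete, here is a toy sketch that squeezes a small block of weights into 4-bit integers with one shared scale, then restores them. It is a deliberately simplified illustration with made-up weights, not the actual layout used by real 4-bit model formats.

```python
# Toy symmetric 4-bit quantization of one block of weights.
# Illustration only; real 4-bit model formats are more elaborate.
from typing import List, Tuple


def quantize_block(weights: List[float]) -> Tuple[float, List[int]]:
    """Map floats to integers in [-7, 7] plus one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    codes = [max(-7, min(7, round(w / scale))) for w in weights]
    return scale, codes


def dequantize_block(scale: float, codes: List[int]) -> List[float]:
    """Recover approximate floats from the stored scale and codes."""
    return [scale * c for c in codes]


weights = [0.12, -0.53, 0.91, -0.07, 0.33, -0.88, 0.02, 0.64]  # made-up values
scale, codes = quantize_block(weights)
restored = dequantize_block(scale, codes)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("codes:    ", codes)
print("restored: ", [round(v, 3) for v in restored])
print(f"max error: {max_error:.3f}")
```

Each weight now needs only 4 bits plus a share of the block's scale, at the cost of a rounding error of at most half a scale step per weight.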

Q: How do I choose the right LLM for my project?

A: Consider your needs:

- Size and computational power of your device.

- Specific tasks, such as text generation or code completion.

- Desired accuracy and speed.

Q: What's the difference between "token generation" and "processing"?

A: Token generation refers to the speed at which the model outputs new text, one token at a time. Processing usually refers to prompt processing: the speed at which the model reads the input prompt before it starts generating. Prompt tokens can be handled in parallel, so processing is typically much faster than generation.
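
A rough way to see how the two speeds combine is sketched below, assuming "processing" means prompt processing as in llama.cpp-style benchmarks. The generation speed is the 4-bit figure measured in this article; the prompt speed is a hypothetical placeholder, since no processing numbers are reported here.

```python
def response_seconds(prompt_tokens: int, output_tokens: int,
                     prompt_tps: float, generation_tps: float) -> float:
    """Estimated time to read the prompt plus time to write the reply."""
    return prompt_tokens / prompt_tps + output_tokens / generation_tps


GENERATION_TPS = 108.07  # measured 4-bit generation speed from this article
PROMPT_TPS = 2000.0      # HYPOTHETICAL placeholder, not a measured value

total = response_seconds(1000, 300, PROMPT_TPS, GENERATION_TPS)
print(f"~{total:.1f} s for a 1000-token prompt and a 300-token reply")
```

With any plausible prompt speed, most of the wait comes from generation, which is why generation speed is the headline number in this article.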

Q: Why are you talking about Llama3 8B in the title but mentioning Llama2 7B in the article?

A: The title focuses on the specific device and model combination: NVIDIA 3090 24GB x2 and Llama3 8B. We use Llama2 7B as an example to illustrate how choosing a different model can affect performance.

Keywords

NVIDIA 3090 24GB x2, Llama3 8B, LLM, large language model, token generation, processing speed, quantization, Q4_K_M, F16, GPU, code completion, text generation, chatbot, summarization, translation, performance optimization, fine-tuning, gradient accumulation, device compatibility, model size, accuracy, speed