How Fast Can NVIDIA 3090 24GB x2 Run Llama3 70B?

Introduction

The world of Large Language Models (LLMs) is buzzing with excitement, and rightfully so. These AI powerhouses are capable of generating human-like text, translating languages, writing different kinds of creative content, and answering your questions in an informative way. But powering these models is no small feat! It requires serious computational muscle, especially when running them locally. So, if you're a developer or a tech enthusiast looking to harness the power of LLMs on your own machines, you'll want to know how fast your hardware can handle the workload.

This deep dive focuses on the NVIDIA 3090 24GB x2 setup (two RTX 3090 cards with 24 GB of VRAM each) and its performance with the Llama3 70B model, exploring the speed and limitations of this combination. We'll look at token generation speeds, compare the results with a smaller model, and offer practical recommendations for running these models on your own system.

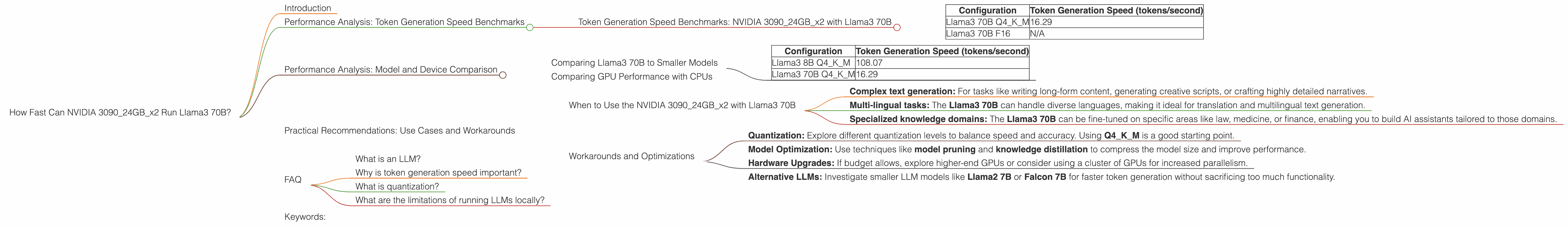

Performance Analysis: Token Generation Speed Benchmarks

Token Generation Speed Benchmarks: NVIDIA 3090 24GB x2 with Llama3 70B

Let's cut to the chase: how fast is the NVIDIA 3090 24GB x2 setup with the Llama3 70B model?

Token generation speed refers to how quickly the model can produce new text. More tokens per second mean faster text generation. Here's a breakdown of the stats:

| Configuration | Token Generation Speed (tokens/second) |
|---|---|
| Llama3 70B Q4_K_M | 16.29 |
| Llama3 70B F16 | N/A |

Important Note: No data is available for the Llama3 70B F16 configuration on the NVIDIA 3090 24GB x2 setup.
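To make the tokens-per-second figure more tangible, here is a minimal sketch that converts it into wall-clock time for responses of a few lengths. The function name and the response lengths are illustrative choices, not part of the benchmark, and the estimate assumes a steady decoding rate while ignoring the time spent processing the prompt.

```python
def generation_time_seconds(num_tokens: int, tokens_per_second: float) -> float:
    """Estimate the time to generate num_tokens at a steady decoding rate."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return num_tokens / tokens_per_second


# Measured rate for Llama3 70B Q4_K_M on the dual-3090 setup (from the table).
LLAMA3_70B_Q4_K_M_TPS = 16.29

# Hypothetical response lengths, chosen only for illustration.
for num_tokens in (100, 500, 2000):
    seconds = generation_time_seconds(num_tokens, LLAMA3_70B_Q4_K_M_TPS)
    print(f"{num_tokens:>5} tokens: about {seconds:.1f} s")
```

At this rate, a 500-token answer takes roughly 31 seconds, which is workable for drafting but noticeably slower than a hosted chat service.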

Token generation speed is influenced by several factors including model size, quantization, and the specific hardware used. Let's break down these concepts to get a better understanding.

Quantization: Think of quantization as a way to shrink the model's size. It converts the model's parameters from 16-bit or 32-bit floating-point numbers to lower-precision representations, such as 8-bit or 4-bit integers. This reduces the memory footprint and usually speeds up token generation, at the cost of some accuracy.

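Q4_K_M is a 4-bit quantization format from llama.cpp's "k-quant" family: the K denotes the k-quant scheme, and the M denotes the medium variant, which keeps some tensors at higher precision than the small (S) variant. The F16 configuration stores the model parameters as 16-bit floating-point numbers, leading to a much larger memory footprint but higher precision. At 2 bytes per parameter, 70 billion parameters need roughly 140 GB for the weights alone, far more than the 48 GB of combined VRAM on two 3090s, which likely explains the missing F16 result.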
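The memory arithmetic behind these formats can be sketched in a few lines. The bits-per-weight values below are assumptions for illustration: 32 and 16 are exact for F32 and F16, while the Q8_0 and Q4_K_M figures are approximate averages that include per-block scaling data. The estimate covers weights only, so the KV cache and activations need additional room.

```python
def weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Approximate memory needed for the model weights alone, in GB (10^9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


NUM_PARAMS = 70e9          # nominal parameter count for Llama3 70B
TOTAL_VRAM_GB = 2 * 24     # two 24 GB cards

# Assumed average bits per weight; the quantized values are approximations.
ASSUMED_BITS_PER_WEIGHT = {
    "F32": 32.0,
    "F16": 16.0,
    "Q8_0": 8.5,
    "Q4_K_M": 4.8,
}

for name, bits in ASSUMED_BITS_PER_WEIGHT.items():
    size_gb = weight_memory_gb(NUM_PARAMS, bits)
    verdict = "fits" if size_gb < TOTAL_VRAM_GB else "does not fit"
    print(f"{name:>7}: ~{size_gb:6.1f} GB of weights, {verdict} in {TOTAL_VRAM_GB} GB")
```

Under these assumptions, only the 4-bit format leaves the weights under the 48 GB budget, and even then with limited headroom for context.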

Performance Analysis: Model and Device Comparison

Comparing Llama3 70B to Smaller Models

How does the Llama3 70B perform compared to its smaller sibling, the Llama3 8B, on the NVIDIA 3090 24GB x2 setup?

| Configuration | Token Generation Speed (tokens/second) |
|---|---|
| Llama3 8B Q4_K_M | 108.07 |
| Llama3 70B Q4_K_M | 16.29 |

As you can see, the Llama3 70B is significantly slower than the Llama3 8B model, even though both use Q4_K_M quantization: the 8B model generates about 6.6 times as many tokens per second. This is expected, as the larger model requires more memory traffic and computation for each generated token.

Imagine having to flip through an entire reference book before writing each word. Both models sit at the same desk (the same two GPUs), but the 70B model's book is nearly nine times thicker than the 8B model's, so every word takes longer to produce.
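For a quick sanity check on the comparison, the snippet below computes the speed ratio from the two measured values and sets it next to the ratio of nominal parameter counts. The parameter counts are the rounded figures in the model names, so treat the second ratio as approximate.

```python
# Measured token generation speeds from the table (tokens/second).
speed_8b = 108.07
speed_70b = 16.29

# Nominal parameter counts in billions, taken from the model names.
params_8b = 8
params_70b = 70

speed_ratio = speed_8b / speed_70b      # how much faster the 8B model is
param_ratio = params_70b / params_8b    # how much larger the 70B model is

print(f"8B is about {speed_ratio:.2f}x faster")
print(f"70B is about {param_ratio:.2f}x larger")
```

The slowdown is somewhat smaller than the size increase, which suggests that generation speed on this setup scales a little better than linearly with model size, though two data points are not enough to draw a firm conclusion.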

Comparing GPU Performance with CPUs

How does the NVIDIA 3090 24GB x2 setup with the Llama3 70B model compare to CPUs in terms of token generation?

We lack data for CPU-based token generation speed with the Llama3 70B model. However, it's generally understood that GPUs excel at parallel processing tasks, making them ideal for large language models, especially when dealing with bigger models like the Llama3 70B. CPUs tend to be slower for these tasks, but they may offer more versatility for certain applications.

Practical Recommendations: Use Cases and Workarounds

When to Use the NVIDIA 3090 24GB x2 with Llama3 70B

The NVIDIA 3090 24GB x2 setup is a powerhouse capable of running the Llama3 70B model, although its token generation speed is lower than what smaller models achieve. Consider using it if you need the capabilities of a large model for:

- Complex text generation: For tasks like writing long-form content, generating creative scripts, or crafting highly detailed narratives.

- Multi-lingual tasks: The Llama3 70B can handle diverse languages, making it ideal for translation and multilingual text generation.

- Specialized knowledge domains: The Llama3 70B can be fine-tuned on specific areas like law, medicine, or finance, enabling you to build AI assistants tailored to those domains.

Workarounds and Optimizations

If the token generation speed of Llama3 70B on the NVIDIA 3090 24GB x2 setup is a concern, consider these strategies:

- Quantization: Explore different quantization levels to balance speed and accuracy. Using Q4_K_M is a good starting point.

- Model Optimization: Use techniques like model pruning and knowledge distillation to compress the model size and improve performance.

- Hardware Upgrades: If budget allows, explore higher-end GPUs or consider using a cluster of GPUs for increased parallelism.

- Alternative LLMs: Investigate smaller models like Llama3 8B, Llama2 7B, or Falcon 7B for faster token generation without sacrificing too much functionality.
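Whichever of these strategies you try, it helps to measure throughput the same way each time. The sketch below times any function that yields tokens one by one. Here `fake_generate` is a hypothetical stand-in with an artificial delay, not a real model API, so you would replace it with a wrapper around your own inference library's streaming call.

```python
import time
from typing import Callable, Iterable, Tuple


def measure_tokens_per_second(
    generate: Callable[[str], Iterable[str]], prompt: str
) -> Tuple[int, float]:
    """Consume a token stream and return (token_count, tokens_per_second)."""
    start = time.perf_counter()
    count = 0
    for _ in generate(prompt):
        count += 1
    elapsed = time.perf_counter() - start
    rate = count / elapsed if elapsed > 0 else float("inf")
    return count, rate


def fake_generate(prompt: str) -> Iterable[str]:
    """Hypothetical stand-in for a model: yields 20 tokens with a small delay."""
    for i in range(20):
        time.sleep(0.005)  # simulate per-token latency
        yield f"tok{i}"


count, rate = measure_tokens_per_second(fake_generate, "Hello")
print(f"{count} tokens at {rate:.1f} tokens/second")
```

Note that this measurement includes the time to the first token, so prompt processing is folded into the rate. For a fair comparison between configurations, keep the prompt and the number of generated tokens the same across runs.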

FAQ

What is an LLM?

An LLM, or Large Language Model, is a type of artificial intelligence that excels at understanding and generating human-like text. Imagine a super-smart AI that can write stories, translate languages, summarize articles, and even answer your questions in a coherent way. LLMs are trained on massive amounts of text data, allowing them to learn patterns and generate text that's often indistinguishable from human writing.

Why is token generation speed important?

Token generation speed determines how quickly an LLM can produce text. The faster the speed, the more efficient and responsive the model's interactions with users. Think of it like a fast typing speed – the quicker you can generate words, the smoother the conversation flows.

What is quantization?

Quantization is a technique used to compress the size of an LLM. It converts the model's parameters from 16-bit or 32-bit floating-point numbers to lower-precision representations, such as 8-bit or 4-bit integers. This significantly reduces the memory footprint and can improve performance, but it may come at the cost of slightly reduced accuracy.

What are the limitations of running LLMs locally?

Running LLMs on a local machine can be challenging due to their size and computational demands. You might experience slow response times, limited memory, and potential compatibility issues depending on your setup. However, the benefits of having a local LLM include privacy and control over your data.

Keywords:

NVIDIA 3090 24GB x2, Llama3 70B, Token Generation Speed, LLMs, Large Language Models, GPU, CPU, Quantization, Q4_K_M, F16, Q4_K_M Generation, Q4_K_M Processing, F16 Generation, F16 Processing, Performance Benchmark, Practical Recommendations, Use Cases, Workarounds, Model Optimization, Model Pruning, Knowledge Distillation, Hardware Upgrades.