How Fast Can NVIDIA 3090 24GB Run Llama3 8B?

Introduction: Diving Deep into Local LLMs with NVIDIA 3090_24GB

The world of Large Language Models (LLMs) is buzzing. From generating creative content to translating languages and answering complex questions, LLMs are changing how we interact with technology. But running these models locally is resource-intensive and takes capable hardware.

This article aims to demystify the world of local LLM performance by focusing on a specific powerhouse: the NVIDIA 3090_24GB and its interaction with the Llama3 8B model. We'll dive deep into benchmarks, compare performance across different quantizations, and provide practical recommendations for developers looking to harness the power of LLMs locally.

Performance Analysis: Token Generation Speed Benchmarks with NVIDIA 3090_24GB and Llama3 8B

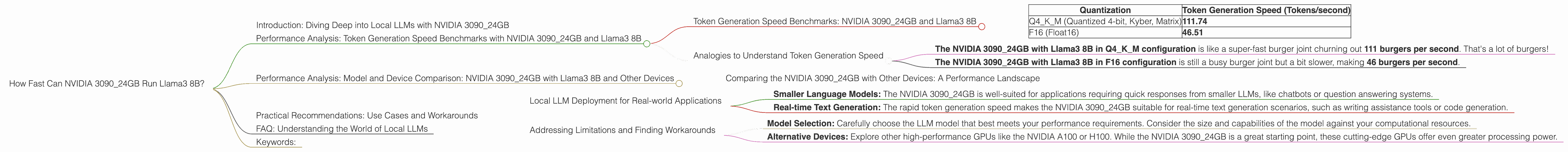

Token Generation Speed Benchmarks: NVIDIA 3090_24GB and Llama3 8B

The speed at which a model generates tokens (sub-word units of text, not whole words) directly impacts the user experience. A faster token generation rate means quicker responses and a smoother interaction with the LLM. Let's see how the NVIDIA 3090_24GB performs with Llama3 8B in different quantization configurations:

| Quantization | Token Generation Speed (tokens/second) |
|---|---|
| Q4_K_M (4-bit k-quant, medium variant) | 111.74 |
| F16 (16-bit floating point) | 46.51 |

These numbers are impressive! The NVIDIA 3090_24GB generates over 111 tokens per second with the Llama3 8B model in the Q4_K_M quantization, about 2.4 times the F16 rate of 46.51 tokens per second.
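
To make these rates more tangible, here is a minimal sketch that turns the two figures from the table above into response times and a speedup ratio. The 500-token response length is an assumption chosen for illustration, and the calculation ignores prompt processing time.

```python
# Throughput figures taken from the benchmark table above (tokens per second).
BENCHMARKS = {"Q4_K_M": 111.74, "F16": 46.51}


def seconds_for(tokens: int, tokens_per_second: float) -> float:
    """Time to generate `tokens` tokens at a steady rate (prompt processing ignored)."""
    return tokens / tokens_per_second


def speedup(fast: float, slow: float) -> float:
    """How many times faster the `fast` rate is than the `slow` rate."""
    return fast / slow


if __name__ == "__main__":
    response_tokens = 500  # hypothetical response length, for illustration only
    for name, rate in BENCHMARKS.items():
        print(f"{name}: {seconds_for(response_tokens, rate):.1f} s for {response_tokens} tokens")
    ratio = speedup(BENCHMARKS["Q4_K_M"], BENCHMARKS["F16"])
    print(f"Q4_K_M vs F16: {ratio:.2f}x")
```

Under these assumptions, a 500-token reply takes about 4.5 seconds with Q4_K_M and about 10.8 seconds with F16.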

Analogies to Understand Token Generation Speed

Imagine a fast food chain where each token is a burger you order.

- The NVIDIA 3090_24GB with Llama3 8B in Q4_K_M configuration is like a super-fast burger joint churning out 111 burgers per second. That's a lot of burgers!

- The NVIDIA 3090_24GB with Llama3 8B in F16 configuration is still a busy burger joint but a bit slower, making 46 burgers per second.

The analogy shows why token generation speed matters: it determines how quickly your LLM applications can respond.

Performance Analysis: Model and Device Comparison: NVIDIA 3090_24GB with Llama3 8B and Other Devices

Comparing the NVIDIA 3090_24GB with Other Devices: A Performance Landscape

While we are focusing on the NVIDIA 3090_24GB, it's helpful to understand its performance relative to other devices and models. Unfortunately, this dataset contains no performance data for the Llama3 70B model on the NVIDIA 3090_24GB, so we cannot make that comparison here.

Practical Recommendations: Use Cases and Workarounds

Local LLM Deployment for Real-world Applications

The NVIDIA 3090_24GB delivers excellent performance with the Llama3 8B model, particularly in the Q4_K_M quantization. This makes it ideal for a variety of local applications:

- Smaller Language Models: The NVIDIA 3090_24GB is well-suited for applications requiring quick responses from smaller LLMs, like chatbots or question answering systems.

- Real-time Text Generation: The rapid token generation speed makes the NVIDIA 3090_24GB suitable for real-time text generation scenarios, such as writing assistance tools or code generation.
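
To check whether your own setup is fast enough for uses like these, you can time token generation yourself. The sketch below is a generic harness, not tied to any particular inference library: `generate` is assumed to be any callable that yields tokens one at a time, and `fake_generate` is a hypothetical stand-in for a real model. The measured time covers everything the callable does, including prompt processing.

```python
import time
from typing import Callable, Iterable


def measure_tokens_per_second(
    generate: Callable[[str], Iterable[str]],
    prompt: str,
    clock: Callable[[], float] = time.perf_counter,
) -> float:
    """Run `generate(prompt)` to completion and return tokens per second."""
    start = clock()
    count = sum(1 for _ in generate(prompt))  # consume the stream, counting tokens
    elapsed = clock() - start
    if elapsed <= 0:
        raise ValueError("elapsed time must be positive")
    return count / elapsed


def fake_generate(prompt: str):
    """Hypothetical stand-in for a model: yields one 'token' per word of the prompt."""
    for word in prompt.split():
        time.sleep(0.001)  # pretend each token takes a little while
        yield word


if __name__ == "__main__":
    rate = measure_tokens_per_second(fake_generate, "the quick brown fox jumps")
    print(f"{rate:.1f} tokens/second")
```

Replace `fake_generate` with a wrapper around your own model's streaming call to compare your numbers against the table in this article.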

Addressing Limitations and Finding Workarounds

While the NVIDIA 3090_24GB delivers impressive performance with the Llama3 8B model, we lack data for the Llama3 70B model. If your application needs a larger model, weigh the following options:

- Model Selection: Carefully choose the LLM model that best meets your performance requirements. Consider the size and capabilities of the model against your computational resources.

- Alternative Devices: Explore other high-performance GPUs like the NVIDIA A100 or H100. While the NVIDIA 3090_24GB is a great starting point, these data-center GPUs offer more memory and processing power, at a much higher cost.

FAQ: Understanding the World of Local LLMs

Q1: What's Quantization and How Does it Affect Performance?

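A1: Quantization is like a diet for your LLM. It shrinks the model by using fewer bits to represent each weight. A smaller model is quicker to load and process and needs less memory, which matters most on memory-constrained devices such as mobile phones. In the benchmark above, Q4_K_M runs at 111.74 tokens per second versus 46.51 for F16. The trade-off is some loss of precision, which can affect output quality.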
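
To illustrate the idea, here is a toy sketch of symmetric 4-bit quantization of a small block of weights. It is a simplified illustration only and not the actual Q4_K_M scheme, which is more elaborate. The function names and sample weights are made up for this example.

```python
def quantize_block(weights, bits=4):
    """Map floats to signed integer codes that share one scale factor."""
    qmax = 2 ** (bits - 1) - 1  # largest code, 7 for 4 bits
    peak = max(abs(w) for w in weights)
    scale = peak / qmax if peak else 1.0  # avoid dividing by zero for an all-zero block
    codes = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return codes, scale


def dequantize_block(codes, scale):
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]


if __name__ == "__main__":
    weights = [0.82, -0.41, 0.05, -0.77, 0.30]  # hypothetical weights
    codes, scale = quantize_block(weights)
    restored = dequantize_block(codes, scale)
    for w, c, r in zip(weights, codes, restored):
        print(f"{w:+.3f} -> code {c:+d} -> {r:+.3f}")
```

Each weight is stored as a small integer plus a shared scale, so the restored values are close to the originals but not identical. That rounding error is the precision cost of quantization.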

Q2: What about Memory Usage and How Does It Impact Performance?

A2: Memory usage is like the storage space in your computer. A larger model requires more memory, and a model that does not fit in GPU memory runs much slower or not at all. With 24GB of VRAM, the NVIDIA 3090_24GB holds Llama3 8B even at F16, as the benchmark above shows, and leaves headroom for quantized versions of somewhat larger models.
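
A rough way to see what fits is to multiply the parameter count by the bits per weight. The sketch below does that as a back-of-the-envelope estimate. It assumes nominal parameter counts (8 and 70 billion) and nominal bit widths, and it counts the weights only: the KV cache and runtime overhead need extra memory, and real Q4_K_M files use somewhat more than 4 bits per weight on average.

```python
def weight_memory_gb(params_billions: float, bits_per_weight: float) -> float:
    """Approximate memory for the weights alone, in GB (10**9 bytes)."""
    return params_billions * bits_per_weight / 8


def fits_in_vram(params_billions: float, bits_per_weight: float, vram_gb: float = 24.0) -> bool:
    """True if the weights alone are smaller than the available VRAM."""
    return weight_memory_gb(params_billions, bits_per_weight) < vram_gb


if __name__ == "__main__":
    # Nominal sizes and bit widths, assumed for illustration.
    for params in (8, 70):
        for label, bits in (("F16", 16), ("4-bit", 4)):
            size = weight_memory_gb(params, bits)
            verdict = "fits" if fits_in_vram(params, bits) else "does not fit"
            print(f"{params}B at {label}: ~{size:.0f} GB of weights, {verdict} in 24 GB")
```

Under these assumptions, an 8B model needs about 16 GB at F16 and about 4 GB at 4 bits, while a 70B model needs about 140 GB and 35 GB respectively, so its weights alone exceed 24 GB.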

Q3: How Can I Optimize My LLM Performance Even Further?

A3: There are various ways to squeeze out more juice from your LLM. Explore:

- Optimizing Model Architecture: Use techniques like pruning or knowledge distillation to reduce the model's size and complexity.

- Hardware Acceleration: Leverage specialized hardware like Tensor Processing Units (TPUs) or Field Programmable Gate Arrays (FPGAs) for significant speedups.

Keywords:

NVIDIA 3090_24GB, Llama3 8B, Llama3 70B, Token Generation Speed, Quantization, Q4_K_M, F16, LLM, Local Inference, Performance Benchmarks, GPU, GPU Performance, Large Language Models, Model Size, Model Complexity, Hardware Acceleration, Text Generation, Chatbots, Question Answering, Real-time Applications, Model Optimization, GPU Memory, Memory Usage