How Fast Can NVIDIA 3090 24GB Run Llama3 70B?

Introduction: Diving Deep into Local LLM Performance

Large Language Models (LLMs) are attracting intense interest. Trained on vast amounts of data, these AI systems can generate text, translate languages, and even write code, but they demand substantial compute and memory. Running LLMs locally on your own hardware, without relying on cloud services, offers several benefits: privacy, control, cost-effectiveness, and potentially lower latency because requests never leave your machine.

In this deep dive, we'll focus on the NVIDIA 3090_24GB graphics card and investigate how well it can run the Llama3 70B model, a popular and powerful LLM. Direct 70B benchmarks for this card are not available, so we'll use Llama3 8B results as a reference point, compare them with another model and device, and offer practical recommendations for getting the most out of the card.

Performance Analysis: Token Generation Speed Benchmarks

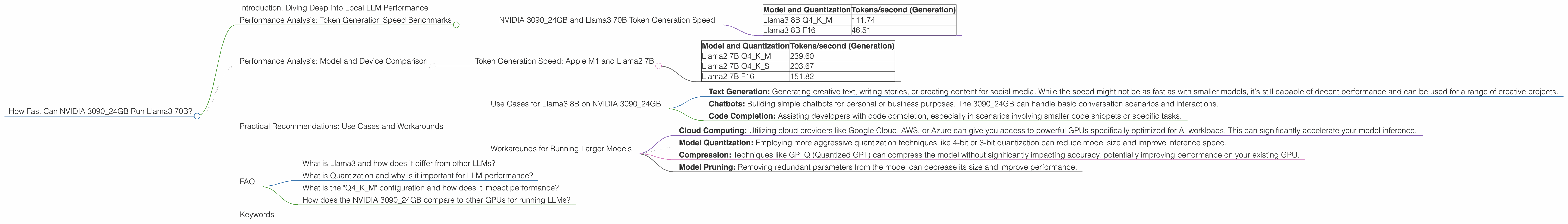

NVIDIA 3090_24GB and Llama3 70B Token Generation Speed

Unfortunately, we don't have concrete token generation speed benchmarks for Llama3 70B on the NVIDIA 3090_24GB. The available data covers only the Llama3 8B model.

However, we can still glean insights from analyzing the performance of Llama3 8B on the same GPU.

Let's take a look at the available data:

| Model and Quantization | Tokens/second (Generation) |
|---|---|
| Llama3 8B Q4_K_M | 111.74 |
| Llama3 8B F16 | 46.51 |

Key Takeaway: Quantization plays a significant role in performance. The Q4_K_M configuration (which stores the weights in roughly 4 bits each) generates tokens more than twice as fast as the F16 configuration (which stores them as 16-bit floating-point values).
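To put the gap in concrete terms, the two figures from the table can be turned into a speedup ratio and a per-token latency. This is a minimal sketch that uses only the numbers above; the helper names are illustrative.

```python
# Generation throughput in tokens/second, copied from the table above.
throughput = {
    "Llama3 8B Q4_K_M": 111.74,
    "Llama3 8B F16": 46.51,
}


def speedup(fast_tps: float, slow_tps: float) -> float:
    """How many times faster the first configuration generates tokens."""
    return fast_tps / slow_tps


def ms_per_token(tps: float) -> float:
    """Average latency per generated token, in milliseconds."""
    return 1000.0 / tps


ratio = speedup(throughput["Llama3 8B Q4_K_M"], throughput["Llama3 8B F16"])
print(f"Q4_K_M vs F16 speedup: {ratio:.2f}x")
for name, tps in throughput.items():
    print(f"{name}: {ms_per_token(tps):.1f} ms per token")
```

Per-token latency is often the more intuitive number when judging whether a chat interface will feel responsive.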

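Thinking Ahead: While we don't have specific numbers for Llama3 70B on the 3090_24GB, we can make educated guesses. The 70B model has almost nine times as many parameters as the 8B model, so each generated token requires far more computation and memory traffic. Memory is the harder constraint: even at 4-bit precision, the 70B weights alone take roughly 35 to 40 GB, more than the card's 24 GB of VRAM. Running it on a single 3090_24GB therefore means quantizing below 4 bits or keeping part of the model in system RAM, and either way generation will be much slower than the 8B figures above.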
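A rough way to check whether a model can fit on the card is to multiply the parameter count by the bits stored per weight. The sketch below does that for a 70B model; the effective bits-per-weight values and the 2 GB reserve for the KV cache and buffers are assumptions chosen for illustration, not measured figures.

```python
def weight_size_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in GB (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_weight / 8


def fits_in_vram(params_billion, bits_per_weight, vram_gb=24.0, reserve_gb=2.0):
    """True if the weights plus a reserve for KV cache and buffers fit."""
    return weight_size_gb(params_billion, bits_per_weight) + reserve_gb <= vram_gb


# Assumed effective bits per weight; real quantized files vary by format.
formats = {"F16": 16.0, "8-bit": 8.0, "4-bit": 4.5, "3-bit": 3.5, "2-bit": 2.5}

for name, bits in formats.items():
    size = weight_size_gb(70, bits)
    verdict = "fits" if fits_in_vram(70, bits) else "does not fit"
    print(f"Llama3 70B {name}: ~{size:.1f} GB, {verdict} in 24 GB")
```

Under these assumptions, only the most aggressive quantization level squeezes a 70B model into 24 GB, and quality typically degrades noticeably at that point.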

Performance Analysis: Model and Device Comparison

While we don't have data for Llama3 70B on the 3090_24GB, let's look at how a similar-sized model performs on a different device to build a broader understanding:

Token Generation Speed: Apple M1 and Llama2 7B

Here's how Llama2 7B performs on a different device, the Apple M1:

| Model and Quantization | Tokens/second (Generation) |
|---|---|
| Llama2 7B Q4_K_M | 239.60 |
| Llama2 7B Q4_K_S | 203.67 |
| Llama2 7B F16 | 151.82 |

Observations: Taken at face value, these figures put the Apple M1 ahead of the NVIDIA 3090_24GB on models in the 7B to 8B range, but the comparison is not like-for-like and should be treated with caution. The M1 table uses Llama2 7B while the 3090_24GB table uses Llama3 8B, and token generation is usually limited by memory bandwidth, where the 3090_24GB (roughly 936 GB/s) has a large advantage over the base M1 (roughly 68 GB/s).

Think of it this way: if the M1 figures hold, it is the sprinter on small models, while the NVIDIA 3090_24GB is the marathon runner, with the VRAM and memory bandwidth to sustain larger workloads.
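One rough sanity check for cross-device comparisons is a memory-bandwidth bound: if generating each token requires reading all the weights once, throughput cannot exceed bandwidth divided by model size. The sketch below applies that rule. The bandwidth figures are vendor peak numbers quoted from memory and the F16 sizes are simple parameter-count estimates, so treat both as assumptions.

```python
def max_tokens_per_second(bandwidth_gb_s: float, model_size_gb: float) -> float:
    """Upper bound if every generated token requires reading all weights once."""
    return bandwidth_gb_s / model_size_gb


# Assumed peak memory bandwidth in GB/s (vendor figures quoted from memory).
bandwidth = {"NVIDIA 3090_24GB": 936.0, "Apple M1": 68.25}

# Assumed F16 weight size in GB: parameters (billions) * 2 bytes per weight.
f16_size_gb = {"Llama3 8B": 8 * 2, "Llama2 7B": 7 * 2}

for device, bw in bandwidth.items():
    for model, size in f16_size_gb.items():
        bound = max_tokens_per_second(bw, size)
        print(f"{device}, {model} F16: at most ~{bound:.1f} tokens/s")
```

The 3090_24GB result of 46.51 tokens/second for Llama3 8B F16 sits below its bound, as expected. The M1 F16 figure in the table is well above the bound computed here, which suggests it may have been measured under different conditions or on a different chip variant.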

Practical Recommendations: Use Cases and Workarounds

Use Cases for Llama3 8B on NVIDIA 3090_24GB

Even without the specific data for Llama3 70B, the performance of Llama3 8B on the 3090_24GB suggests potential real-world use cases:

- Text Generation: Generating creative text, writing stories, or creating content for social media. At roughly 112 tokens/second with Q4_K_M quantization, output arrives well above reading speed, which is ample for a range of interactive creative projects.

- Chatbots: Building simple chatbots for personal or business purposes. The 3090_24GB can handle basic conversation scenarios and interactions.

- Code Completion: Assisting developers with code completion, especially in scenarios involving smaller code snippets or specific tasks.

Workarounds for Running Larger Models

If you need to run larger models like Llama3 70B, here are some approaches:

- Cloud Computing: Cloud providers like Google Cloud, AWS, or Azure rent out data-center GPUs with far more memory than a consumer card, so a 70B model can be served without squeezing it into 24 GB. This can significantly accelerate inference, at the cost of ongoing fees and sending your data off your machine.

- Model Quantization: More aggressive quantization, such as 4-bit or 3-bit weights, reduces model size and usually improves inference speed, at some cost in output quality.

- Compression: GPTQ, a post-training quantization method for GPT-style models, compresses the weights without significantly impacting accuracy, potentially improving performance on your existing GPU.

- Model Pruning: Removing redundant parameters from the model can decrease its size and improve performance.
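To make the pruning idea concrete, here is a toy sketch of unstructured magnitude pruning, one simple way to decide which parameters count as redundant: the weights closest to zero are set to zero. The function name and the sample weights are hypothetical, and practical LLM pruning methods are considerably more involved.

```python
def magnitude_prune(weights: list[float], sparsity: float) -> list[float]:
    """Zero out the given fraction of weights with the smallest magnitudes."""
    if not 0.0 <= sparsity <= 1.0:
        raise ValueError("sparsity must be between 0 and 1")
    n_prune = int(len(weights) * sparsity)
    # Indices sorted by absolute value, smallest first.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    to_zero = set(order[:n_prune])
    return [0.0 if i in to_zero else w for i, w in enumerate(weights)]


weights = [0.8, -0.05, 0.3, 0.01, -0.6, 0.02, 0.15, -0.9]
pruned = magnitude_prune(weights, sparsity=0.5)
print(pruned)  # [0.8, 0.0, 0.3, 0.0, -0.6, 0.0, 0.0, -0.9]
```

Zeroed weights only save memory or time when they are stored in a sparse format or the runtime can skip them, so pruning by itself does not guarantee a smaller or faster model.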

Remember: While cloud computing offers a more powerful and accessible solution for large models, running LLMs locally on your own hardware can provide greater control, security, and privacy.

FAQ

What is Llama3 and how does it differ from other LLMs?

Llama3 is a large language model developed by Meta AI. It's known for its high performance and impressive capabilities, particularly in tasks like natural language understanding, text generation, and code completion. It's a powerful tool for developers and researchers working with LLMs.

What is Quantization and why is it important for LLM performance?

Quantization is a technique that reduces the precision of the weights (and sometimes the activations) in a neural network by using fewer bits to represent them. This results in a smaller model and often faster inference, because less data has to be moved through memory for each token. For example, storing weights in 4 bits instead of 16-bit floating point shrinks the model by roughly a factor of four.
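The idea can be illustrated with a toy round trip: map a small block of floating-point weights to 4-bit integer codes plus one shared scale, then map them back. This is a minimal sketch of symmetric round-to-nearest quantization with made-up values; it is not the storage format used by any particular inference library.

```python
def quantize_4bit(values: list[float]) -> tuple[list[int], float]:
    """Symmetric round-to-nearest quantization to integers in [-7, 7]."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    codes = [max(-7, min(7, round(v / scale))) for v in values]
    return codes, scale


def dequantize(codes: list[int], scale: float) -> list[float]:
    """Map integer codes back to approximate floating-point values."""
    return [c * scale for c in codes]


block = [0.70, -0.33, 0.10, 0.02, -0.70, 0.44, -0.20, 0.00]
codes, scale = quantize_4bit(block)
restored = dequantize(codes, scale)
max_error = max(abs(a - b) for a, b in zip(block, restored))

print("codes:", codes)
print(f"largest round-trip error: {max_error:.3f}")
```

Each code fits in 4 bits, so the block needs far less storage than the original floats, and the round-trip error stays within half a quantization step. Practical formats refine this by using many small blocks, each with its own scale.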

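What is the "Q4_K_M" configuration and how does it impact performance?

"Q4_K_M" is a quantization type used by llama.cpp. "Q4" means the weights are stored in roughly 4 bits each, "K" refers to the k-quant family of block-wise schemes, and "M" is the medium variant ("S", as in Q4_K_S, is the small one). It quantizes the stored weights rather than the activations. This configuration is advantageous because it significantly reduces model size and often improves generation speed.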

How does the NVIDIA 3090_24GB compare to other GPUs for running LLMs?

The NVIDIA 3090_24GB is a powerful consumer graphics card with 24 GB of memory, making it suitable for running small and mid-sized LLMs. However, data-center GPUs designed for AI workloads, like the NVIDIA A100 or H100, offer more memory and bandwidth and can run larger models faster.

Keywords

NVIDIA 3090_24GB, Llama3 70B, Llama3 8B, LLM, Token Generation Speed, Quantization, Q4_K_M, F16, Performance, GPU, Local Inference, Practical Recommendations, Use Cases, Workarounds, Cloud Computing, Model Compression, Model Pruning, AI, Deep Learning, Natural Language Processing, NLP.