How Fast Can NVIDIA 3080 Ti 12GB Run Llama3 8B?

Introduction

Large Language Models (LLMs) are generating a lot of excitement. These AI models can generate human-like text, translate languages, write many kinds of creative content, and answer questions informatively. But with great power comes the need for serious hardware, and that's where the NVIDIA 3080 Ti 12GB graphics card steps in.

If you're a developer or a data scientist looking to run LLMs locally, you'll want to know how your hardware stacks up against the demands of these models. This article dives into the performance of the NVIDIA 3080 Ti 12GB GPU running the Llama3 8B model. We'll look at the token generation speed benchmarks, explain how quantization affects what fits on the card, and offer practical recommendations for your individual needs.

Let's dive in and see how fast we can get those tokens flowing!

Performance Analysis: Token Generation Speed Benchmarks

NVIDIA 3080 Ti 12GB and Llama3 8B: A Match Made in Heaven?

The NVIDIA 3080 Ti 12GB is a powerhouse of a graphics card, known for its strong compute performance and 12 GB of VRAM. But how does it fare when tasked with running the Llama3 8B model?

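Let's look at our benchmark data, which reports two speeds in tokens per second: Generation, the rate at which new tokens are produced, and Processing, the rate at which the input prompt is consumed. Remember, a higher number means faster processing: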

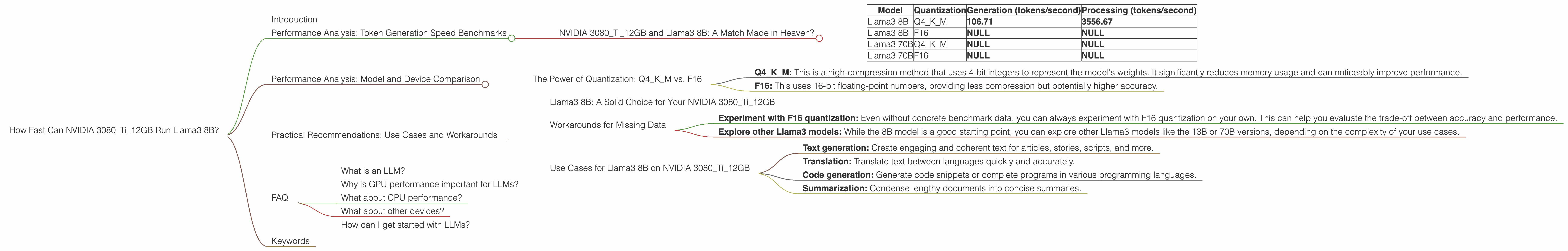

| Model | Quantization | Generation (tokens/second) | Processing (tokens/second) |
|---|---|---|---|
| Llama3 8B | Q4_K_M | 106.71 | 3556.67 |
| Llama3 8B | F16 | N/A | N/A |
| Llama3 70B | Q4_K_M | N/A | N/A |
| Llama3 70B | F16 | N/A | N/A |

Key Observations:

- Llama3 8B with Q4_K_M quantization: The NVIDIA 3080 Ti 12GB generates 106.71 tokens per second and processes 3556.67 tokens per second when running the Llama3 8B model with Q4_K_M quantization. That is strong performance for a single consumer GPU.

- Limited Data: We unfortunately have no data for the Llama3 8B model in F16, so we can't compare the two formats on the 3080 Ti 12GB. Data for the Llama3 70B model is not available either. Memory is a likely reason: 8 billion weights at 16 bits each take about 16 GB, which is more than the card's 12 GB of VRAM, and the 70B model is larger still.
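
To put the two measured rates in perspective, here is a minimal sketch that turns them into an estimated response time. It assumes that the Processing figure applies to the prompt and the Generation figure to the output, and that the two phases simply add up; the function name and the example request sizes are hypothetical.

```python
# Rates taken from the benchmark table above (tokens per second).
PROMPT_TOKENS_PER_S = 3556.67  # Processing, Llama3 8B with 4-bit quantization
GEN_TOKENS_PER_S = 106.71      # Generation, Llama3 8B with 4-bit quantization


def estimate_response_seconds(prompt_tokens: int, output_tokens: int) -> float:
    """Estimate total time as prompt processing time plus generation time."""
    prompt_time = prompt_tokens / PROMPT_TOKENS_PER_S
    generation_time = output_tokens / GEN_TOKENS_PER_S
    return prompt_time + generation_time


if __name__ == "__main__":
    # Hypothetical request: a 1,000-token prompt and a 500-token answer.
    seconds = estimate_response_seconds(1000, 500)
    print(f"Estimated response time: {seconds:.2f} s")
```

For this hypothetical request the estimate comes to roughly five seconds, almost all of it spent generating the answer rather than reading the prompt. Real-world numbers will vary with context length, sampling settings, and software versions.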

Performance Analysis: Model and Device Comparison

The Power of Quantization: Q4_K_M vs. F16

You might be wondering what "quantization" means. Think of it as a way to make the LLM more compact and efficient. Imagine trying to squeeze an entire movie onto a tiny thumb drive. Quantization stores each of the model's weights with fewer bits, so the same model fits into a smaller space at the cost of some precision.

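- Q4_K_M: A 4-bit quantization format from the llama.cpp/GGUF ecosystem that stores most weights in roughly 4 to 5 bits each. It significantly reduces memory usage and, because less data has to be read from memory for every token, usually speeds up generation as well.

- F16: Stores weights as 16-bit floating-point numbers. Strictly speaking this is half precision rather than quantization; it needs roughly three to four times the memory of Q4_K_M but stays closer to the model's original accuracy.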

In the case of the Llama3 8B model on the NVIDIA 3080 Ti 12GB, we only have data for Q4_K_M quantization. While F16 might offer slightly better accuracy in some cases, the lack of data prevents us from evaluating performance differences.
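
The memory side of this trade-off is simple arithmetic: the number of parameters times the bits per weight. The sketch below is a rough estimate under stated assumptions, not a measurement. The parameter count is rounded to 8 billion, the 4.85 bits per weight for the 4-bit format is an assumed average (the format mixes block types), and the estimate covers the weights only, leaving out the KV cache and other runtime overhead.

```python
VRAM_GB = 12.0   # NVIDIA 3080 Ti 12GB
N_PARAMS = 8e9   # Llama3 8B, rounded to 8 billion parameters

# Bits per weight: 16 is exact for F16; 4.85 is an ASSUMED average
# for the 4-bit K-quant format, which mixes several block types.
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.85}


def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in gigabytes (10^9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


def fits_in_vram(n_params: float, bits_per_weight: float, vram_gb: float) -> bool:
    """True if the weights alone fit; ignores KV cache and other overhead."""
    return weight_memory_gb(n_params, bits_per_weight) <= vram_gb


if __name__ == "__main__":
    for name, bits in BITS_PER_WEIGHT.items():
        size = weight_memory_gb(N_PARAMS, bits)
        verdict = "fits" if fits_in_vram(N_PARAMS, bits, VRAM_GB) else "does not fit"
        print(f"{name}: ~{size:.1f} GB of weights, {verdict} in {VRAM_GB:.0f} GB")
```

Under these assumptions the 4-bit weights leave several gigabytes of headroom on a 12 GB card, while the F16 weights alone exceed it.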

Practical Recommendations: Use Cases and Workarounds

Llama3 8B: A Solid Choice for Your NVIDIA 3080 Ti 12GB

The NVIDIA 3080 Ti 12GB is a great option for running the Llama3 8B model with Q4_K_M quantization. At roughly 107 generated tokens per second, it handles text generation, translation, and similar tasks comfortably.

Workarounds for Missing Data

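- Experiment with F16: Even without concrete benchmark data, you can always try F16 on your own to evaluate the trade-off between accuracy and performance. Keep in mind that the F16 weights of an 8B model take about 16 GB, so on a 12 GB card part of the model would have to be offloaded to the CPU, which typically slows things down.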

- Explore other Llama3 models: While the 8B model is a good starting point, Llama3 is also available in a 70B version for more demanding use cases. It is far too large for 12 GB of VRAM, even with 4-bit quantization, so expect to rely on heavy CPU offloading or different hardware.
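
If you run your own experiments, a small timing harness is enough to get a tokens-per-second figure. The sketch below is library-agnostic: `measure_tokens_per_second` accepts any callable that returns an iterable of tokens, and `fake_token_stream` is a hypothetical stand-in for a real model so the example runs on its own. Swap it for your inference library's streaming call; this is not the method used to produce the benchmark figures in this article.

```python
import time
from typing import Callable, Iterable, Iterator


def measure_tokens_per_second(stream_tokens: Callable[[], Iterable[str]]) -> float:
    """Consume a token stream and return the observed tokens per second."""
    start = time.perf_counter()
    count = sum(1 for _ in stream_tokens())
    elapsed = time.perf_counter() - start
    if count == 0 or elapsed <= 0:
        return 0.0
    return count / elapsed


def fake_token_stream(n_tokens: int = 50, delay_s: float = 0.001) -> Iterator[str]:
    """Hypothetical stand-in for a model: yields one token after each delay."""
    for i in range(n_tokens):
        time.sleep(delay_s)
        yield f"tok{i}"


if __name__ == "__main__":
    rate = measure_tokens_per_second(lambda: fake_token_stream())
    print(f"Observed rate: {rate:.1f} tokens/s")
```

For a fair comparison between formats, use the same prompt and output length for each run and repeat the measurement a few times.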

Use Cases for Llama3 8B on NVIDIA 3080 Ti 12GB

- Text generation: Create engaging and coherent text for articles, stories, scripts, and more.

- Translation: Translate text between languages quickly and accurately.

- Code generation: Generate code snippets or complete programs in various programming languages.

- Summarization: Condense lengthy documents into concise summaries.

FAQ

What is an LLM?

An LLM, or Large Language Model, is a type of artificial intelligence trained on a massive amount of text data. LLMs can understand and generate human-like text, perform various language-related tasks, and even exhibit a surprising level of creativity.

Why is GPU performance important for LLMs?

LLMs are computationally intensive, requiring a lot of processing power. GPUs are specialized processors designed for parallel computing tasks, making them ideal for handling the complex calculations involved in LLM inference.

What about CPU performance?

While CPUs are essential for overall system operation, GPUs handle the heavy lifting of running LLMs. A powerful CPU can help with data loading and other auxiliary tasks, but the core LLM inference process is primarily driven by the GPU.

What about other devices?

This article focuses on the NVIDIA 3080 Ti 12GB, but other GPUs and even specialized LLM accelerators exist. The best choice for you will depend on your specific needs and budget.

How can I get started with LLMs?

There are many resources available online to help you get started with LLMs, including tutorials, pre-trained models, and libraries. You can explore platforms like Hugging Face, which offers a vast collection of open-source LLMs and tools.

Keywords

NVIDIA 3080 Ti 12GB, Llama3 8B, LLM, Large Language Model, GPU, Token Generation Speed, Quantization, Q4_K_M, F16, Performance Benchmarks, Text Generation, Translation, Code Generation, Summarization, Inference, GPU Performance, AI