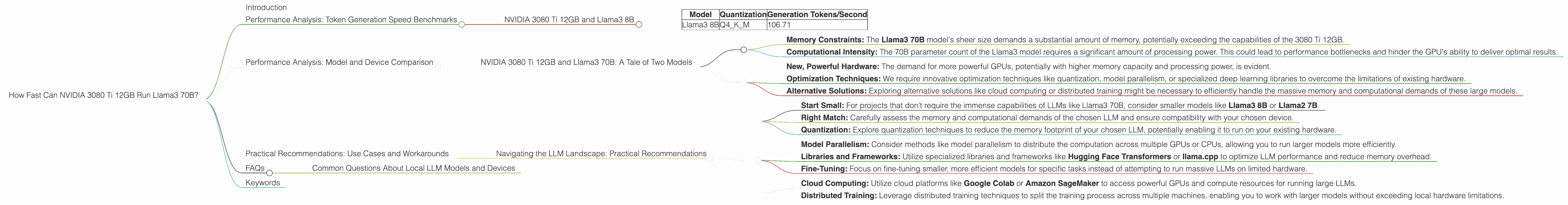

How Fast Can NVIDIA 3080 Ti 12GB Run Llama3 70B?

Introduction

The world of large language models (LLMs) is evolving at breakneck speed, and with it the demand for hardware that can run these models locally. If you're a data scientist, developer, or AI enthusiast, you're probably curious how different GPUs handle LLMs.

This article examines how the NVIDIA GeForce RTX 3080 Ti 12GB performs with the Llama 3 70B model, looking at token generation speed and comparing it with other configurations. Understanding how hardware shapes LLM performance can help you choose the right setup for your needs.

Performance Analysis: Token Generation Speed Benchmarks

NVIDIA 3080 Ti 12GB and Llama3 8B

We start with a baseline: the NVIDIA 3080 Ti 12GB running Llama 3 8B. The figures below come from measured benchmarks rather than estimates.

Token Generation Speed Benchmarks

| Model | Quantization | Generation speed (tokens/s) |
|---|---|---|
| Llama3 8B | Q4_K_M | 106.71 |

Key Observations:

- The NVIDIA 3080 Ti 12GB achieves a respectable 106.71 tokens per second running Llama3 8B quantized with Q4_K_M.

- That works out to roughly 9.4 ms per token, so the GPU handles this smaller model comfortably, particularly for tasks that need short pieces of text generated quickly.
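To put the measured rate in the table into user-facing terms, the short sketch below converts tokens per second into per-token latency and total generation time for a response. It assumes the rate stays constant for the whole response and ignores prompt-processing time, so treat the results as rough estimates.

```python
# Convert a measured generation rate into per-token latency and the
# wall-clock time for a response of a given length.
# Assumptions: the rate is constant over the whole response and
# prompt processing (prefill) time is ignored.

MEASURED_TOKENS_PER_SECOND = 106.71  # Llama3 8B Q4_K_M on the 3080 Ti 12GB


def ms_per_token(tokens_per_second: float) -> float:
    """Average time to generate one token, in milliseconds."""
    return 1000.0 / tokens_per_second


def response_seconds(num_tokens: int, tokens_per_second: float) -> float:
    """Time to generate num_tokens output tokens, in seconds."""
    return num_tokens / tokens_per_second


if __name__ == "__main__":
    print(f"{ms_per_token(MEASURED_TOKENS_PER_SECOND):.2f} ms per token")
    for n in (100, 500, 1000):
        secs = response_seconds(n, MEASURED_TOKENS_PER_SECOND)
        print(f"{n:>5} tokens: {secs:.2f} s")
```

At this rate a 500-token answer takes a little under five seconds of generation time.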

Practical Implications:

These results are encouraging for developers using Llama 3 8B for tasks requiring fast text generation, such as interactive chatbots, real-time content summarization, or rapid experimentation with different prompts. However, as we move to larger models like Llama3 70B, the performance landscape changes significantly.

Performance Analysis: Model and Device Comparison

NVIDIA 3080 Ti 12GB and Llama3 70B: A Tale of Two Models

Scaling up to Llama3 70B poses a significant challenge even for a powerful GPU like the 3080 Ti 12GB. Unfortunately, the available data has no benchmark results for this model-device combination. That gap reflects the limits of a single consumer GPU when working with very large LLMs, and as models keep growing, the need for more capable hardware becomes increasingly apparent.

Why the Missing Data?

- Memory Constraints: The Llama3 70B model's weights need far more memory than the 3080 Ti offers. At 16-bit precision they occupy about 140 GB, and even 4-bit quantization still leaves roughly 40 GB, well beyond the card's 12 GB of VRAM.

- Computational Intensity: Generating each token requires reading all 70 billion parameters from memory, so the model's size also caps generation speed. Even if the weights were split between the GPU and system RAM, the portion held in slower system memory would become the bottleneck.
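The memory and speed points above can be made concrete with back-of-the-envelope arithmetic. During single-stream generation, each token requires streaming essentially every weight from memory once, so memory bandwidth divided by model size gives a rough ceiling on tokens per second. This sketch assumes about 912 GB/s peak bandwidth for the RTX 3080 Ti, roughly 4.85 bits per weight for Q4_K_M (an approximation that varies by model), nominal parameter counts, and no KV-cache traffic; none of these figures come from the benchmark itself.

```python
# Rough upper bound on single-stream decode speed when generation is
# memory-bandwidth bound: every weight is read once per generated token.
# Assumptions (not from the benchmark): ~912 GB/s peak bandwidth for the
# RTX 3080 Ti, ~4.85 bits per weight for Q4_K_M, KV-cache reads ignored.

GPU_BANDWIDTH_GB_S = 912.0
VRAM_GB = 12.0
Q4_K_M_BITS_PER_WEIGHT = 4.85  # approximate average for this format


def weight_gb(num_params: float, bits_per_weight: float) -> float:
    """Size of the quantized weights in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


def decode_ceiling_tok_s(model_gb: float, bandwidth_gb_s: float) -> float:
    """Tokens/s if producing each token means streaming all weights once."""
    return bandwidth_gb_s / model_gb


if __name__ == "__main__":
    for name, params in (("Llama3 8B", 8e9), ("Llama3 70B", 70e9)):
        size = weight_gb(params, Q4_K_M_BITS_PER_WEIGHT)
        fits = size <= VRAM_GB
        print(f"{name}: ~{size:.1f} GB of weights, fits in {VRAM_GB:.0f} GB: {fits}")
        if fits:
            ceiling = decode_ceiling_tok_s(size, GPU_BANDWIDTH_GB_S)
            print(f"  bandwidth ceiling ~{ceiling:.0f} tok/s")
```

Under these assumptions the measured 106.71 tokens/s for Llama3 8B sits below a ceiling of roughly 190 tokens/s, while the 70B weights alone (about 42 GB) are more than three times the card's VRAM.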

The Need for Adaptation:

The absence of data for Llama3 70B on the 3080 Ti 12GB underscores the need for:

- New, Powerful Hardware: The demand for more powerful GPUs, potentially with higher memory capacity and processing power, is evident.

- Optimization Techniques: Techniques such as quantization, model parallelism, and specialized deep learning libraries are needed to work around the limitations of existing hardware.

- Alternative Solutions: Cloud computing, or distributing the model across multiple machines, may be necessary to handle the memory and computational demands of these large models efficiently.

Practical Recommendations: Use Cases and Workarounds

Navigating the LLM Landscape: Practical Recommendations

As we navigate the ever-evolving LLM landscape, we must understand the limitations of current hardware and adapt our strategies accordingly.

1. Choose the Right LLM and Device:

- Start Small: For projects that don't require the immense capabilities of LLMs like Llama3 70B, consider smaller models like Llama3 8B or Llama2 7B.

- Right Match: Carefully assess the memory and computational demands of the chosen LLM and ensure compatibility with your chosen device.

- Quantization: Explore quantization techniques to reduce the memory footprint of your chosen LLM, potentially enabling it to run on your existing hardware.
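The "right match" check and the quantization trade-off in the list above can be sketched as a simple budget test: try weight formats from highest to lowest precision and keep the first one that fits in VRAM with some room to spare. The nominal bit widths, the 1.5 GB headroom for the KV cache and runtime buffers, and the function names are illustrative assumptions; real formats such as Q4_K_M carry a little extra overhead for scales.

```python
# Pick the highest-precision weight format that fits a VRAM budget.
# Assumptions: nominal bits per weight (real formats add some overhead for
# scales), a fixed, hypothetical headroom for the KV cache and runtime
# buffers, and 1 GB = 1e9 bytes.
from typing import Optional

CANDIDATE_BITS = (16, 8, 4)  # FP16, 8-bit, 4-bit; highest precision first


def weights_gb(num_params: float, bits: float) -> float:
    """Approximate size of the weights in gigabytes."""
    return num_params * bits / 8 / 1e9


def pick_bits(num_params: float, vram_gb: float, headroom_gb: float = 1.5) -> Optional[int]:
    """Largest candidate bit width whose weights plus headroom fit, else None."""
    for bits in CANDIDATE_BITS:
        if weights_gb(num_params, bits) + headroom_gb <= vram_gb:
            return bits
    return None  # nothing fits: use a smaller model, offloading, or more GPUs


if __name__ == "__main__":
    for name, params in (("Llama3 8B", 8e9), ("Llama3 70B", 70e9)):
        print(name, "->", pick_bits(params, vram_gb=12.0))
```

With a 12 GB budget this selects 8-bit for an 8B model and nothing at all for a 70B model, which matches the gap in the benchmark data. Choosing a lower bit width than the maximum that fits, as the Q4_K_M benchmark does, trades some quality for smaller size and faster generation.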

2. Embrace Optimization Techniques:

- Model Parallelism: Consider methods like model parallelism to distribute the computation across multiple GPUs or CPUs, allowing you to run larger models more efficiently.

- Libraries and Frameworks: Utilize specialized libraries and frameworks like Hugging Face Transformers or llama.cpp to optimize LLM performance and reduce memory overhead.

- Fine-Tuning: Focus on fine-tuning smaller, more efficient models for specific tasks instead of attempting to run massive LLMs on limited hardware.
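The model parallelism point can be illustrated with its simplest layer-wise form: each device holds a contiguous block of transformer layers, sized in proportion to its memory, and activations pass from one device to the next. This sketch assumes equal-sized layers, ignores the embeddings, output head, and KV cache, and uses hypothetical device names and budgets; the 80-layer count is Llama 3 70B's, and the ~42.4 GB total reuses the approximate 4-bit size estimated earlier.

```python
# Layer-wise (pipeline-style) model parallelism sketch: give each device a
# contiguous block of transformer layers in proportion to its memory budget.
# Assumptions: equal-sized layers, embeddings/output head and KV cache
# ignored, hypothetical device names and budgets.
from itertools import accumulate

NUM_LAYERS = 80          # Llama 3 70B has 80 transformer layers
LAYER_GB = 42.4 / 80     # assumption: ~42.4 GB of ~4-bit weights spread evenly


def split_layers(num_layers: int, budgets_gb: dict[str, float]) -> dict[str, range]:
    """Map each device to a contiguous range of layer indices."""
    total = sum(budgets_gb.values())
    # Cumulative boundaries; rounding keeps them ordered and the last lands on num_layers.
    ends = [round(num_layers * c / total) for c in accumulate(budgets_gb.values())]
    starts = [0] + ends[:-1]
    return {dev: range(s, e) for dev, s, e in zip(budgets_gb, starts, ends)}


if __name__ == "__main__":
    budgets = {"gpu0": 12.0, "gpu1": 24.0, "gpu2": 24.0}  # hypothetical GPUs
    for dev, layers in split_layers(NUM_LAYERS, budgets).items():
        need = len(layers) * LAYER_GB
        status = "fits" if need <= budgets[dev] else "does not fit"
        print(f"{dev}: layers {layers.start}-{layers.stop - 1} "
              f"(~{need:.1f} GB, {status} in {budgets[dev]:.0f} GB)")
```

Here the 12 GB card gets 16 layers (about 8.5 GB) and each 24 GB card gets 32. llama.cpp applies a related idea when it offloads only some layers to the GPU via `--n-gpu-layers`, keeping the rest in system RAM.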

3. Exploring Alternative Solutions:

- Cloud Computing: Utilize cloud platforms like Google Colab or Amazon SageMaker to access powerful GPUs and compute resources for running large LLMs.

- Distributed Training: Leverage distributed training techniques to split the training process across multiple machines, enabling you to work with larger models without exceeding local hardware limitations.

FAQs

Common Questions About Local LLM Models and Devices

Q: What is model quantization?

A: Model quantization is a technique used to reduce the size of large language models by converting their floating-point weights to lower-precision data types, such as 8-bit or 4-bit integers. This can significantly reduce the memory footprint of the model, allowing it to run on devices with less memory. Think of it like compressing a large image file: you lose some detail, but you can significantly reduce the file size.
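As a minimal sketch of the idea, the example below applies symmetric per-tensor int8 quantization: each weight is divided by one shared scale, rounded to an integer in [-127, 127], and multiplied back by the scale when needed. This is a generic illustration chosen for clarity, not the block-wise Q4_K_M scheme llama.cpp uses, and the function names are hypothetical.

```python
# Symmetric per-tensor int8 quantization: store weights as integers in
# [-127, 127] plus one float scale, then multiply back to approximate them.
# Generic illustration only, not the Q4_K_M format used by llama.cpp.


def quantize_int8(weights: list[float]) -> tuple[list[int], float]:
    """Return integer codes and the scale that maps them back to floats."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    codes = [max(-127, min(127, round(w / scale))) for w in weights]
    return codes, scale


def dequantize_int8(codes: list[int], scale: float) -> list[float]:
    """Approximate the original weights from their integer codes."""
    return [c * scale for c in codes]


if __name__ == "__main__":
    w = [0.12, -0.5, 0.031, 0.44, -0.07]
    codes, scale = quantize_int8(w)
    restored = dequantize_int8(codes, scale)
    max_err = max(abs(a - b) for a, b in zip(w, restored))
    print("int8 codes:", codes)
    print(f"scale={scale:.6f}, max abs error={max_err:.6f}")
```

Each restored weight is off by at most half the scale, which is the "lost detail" in the image analogy. In a real implementation the codes would be stored as 1-byte int8 values instead of 4-byte float32 values, cutting weight memory by about 4x.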

Q: How does model parallelism help run LLMs?

A: Model parallelism involves splitting the LLM's computation across multiple devices, like GPUs or CPUs. Think of it like dividing a large task among several workers. By distributing the workload, you can effectively handle larger models that would otherwise strain a single device.

Q: Is cloud computing the only option for running LLMs?

A: While cloud computing offers immense power and flexibility, it's not the only solution. Local resources can handle smaller models and specific tasks. The key is to assess your needs and choose the most cost-effective and efficient approach.
