How Fast Can NVIDIA 3080 10GB Run Llama3 8B?

Introduction

Large Language Models (LLMs) are evolving rapidly. Trained on massive datasets, these models can generate text, translate languages, draft creative content, and answer questions. Running them locally, however, is resource-intensive. This article looks at how an NVIDIA 3080_10GB GPU performs with the Llama3 8B model, focusing on token generation speed, and offers practical recommendations for developers who want to run LLMs on their own machines.

Performance Analysis: Token Generation Speed Benchmarks

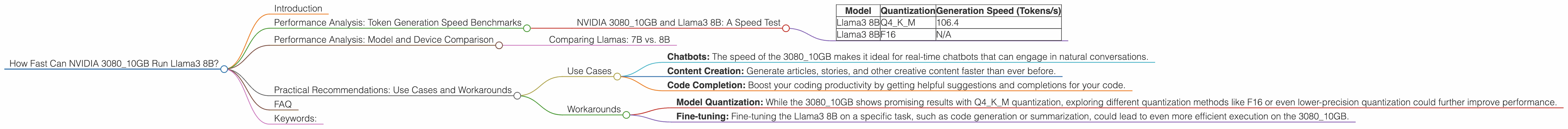

NVIDIA 3080_10GB and Llama3 8B: A Speed Test

Let's get down to brass tacks! How fast can an NVIDIA 3080_10GB actually generate tokens for the Llama3 8B model? Here's what we know:

| Model | Quantization | Generation Speed (Tokens/s) |
|---|---|---|
| Llama3 8B | Q4_K_M | 106.4 |
| Llama3 8B | F16 | N/A |
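The article does not say how the tokens-per-second figure was measured. As a rough sketch of the general idea, the snippet below times a token stream and divides the token count by the elapsed time. `fake_generate` is a hypothetical stand-in for a real model's streaming output, not part of any library; in practice you would pass in whatever generator your inference runtime exposes.

```python
import time
from typing import Callable, Iterable, Iterator


def measure_tokens_per_second(generate: Callable[[], Iterable[str]]) -> float:
    """Consume one generation run and return tokens produced per second."""
    start = time.perf_counter()
    n_tokens = sum(1 for _ in generate())  # count tokens as they stream out
    elapsed = time.perf_counter() - start
    return n_tokens / elapsed if elapsed > 0 else float("inf")


def fake_generate(n_tokens: int = 50, delay_s: float = 0.001) -> Iterator[str]:
    """Hypothetical model stand-in: yields n_tokens with a fixed delay each."""
    for i in range(n_tokens):
        time.sleep(delay_s)
        yield f"tok{i}"


rate = measure_tokens_per_second(lambda: fake_generate(50, 0.001))
print(f"{rate:.1f} tokens/s")
```

Real benchmarks usually report prompt processing and token generation separately and average over several runs, so a single timing like this is only a first approximation.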

Let's break down the numbers:

- Q4_K_M Quantization: This is a 4-bit quantization format used by llama.cpp, a form of model compression. It's a crucial factor for local LLM performance because it reduces the model's size and memory footprint, so less data has to be read from memory for each generated token. Think of it like packing your suitcase more efficiently; you can fit more clothes in a smaller space!

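- F16: This stands for "float16," a 16-bit floating-point format used in machine learning. F16 uses half the memory of standard "float32" but can sometimes affect accuracy. The table shows N/A for F16; a likely reason (not stated in the benchmark data) is that the F16 weights of an 8B-parameter model take about 16 GB, more than this card's 10 GB of VRAM.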

- 106.4 Tokens/s: This tells us that the NVIDIA 3080_10GB can generate 106.4 tokens per second with the Llama3 8B model using Q4_K_M quantization.
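The memory side of these numbers can be estimated with simple arithmetic: weight size is parameter count times bits per weight. The sketch below assumes a nominal 8 billion parameters and an average of about 4.9 bits per weight for Q4_K_M; both are approximations chosen for illustration, and the exact figures vary by model and quantizer version.

```python
def weights_size_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in gigabytes (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


N_PARAMS = 8.0e9    # assumption: nominal parameter count for Llama3 8B
VRAM_GB = 10.0      # the 3080_10GB's video memory
FORMATS = {
    "F16": 16.0,    # 16 bits per weight by definition
    "Q4_K_M": 4.9,  # assumption: approximate average bits per weight
}

for name, bits in FORMATS.items():
    size = weights_size_gb(N_PARAMS, bits)
    print(f"{name}: ~{size:.1f} GB of weights, fits in {VRAM_GB:.0f} GB: {size < VRAM_GB}")
```

Under these assumptions the F16 weights come to about 16 GB and the Q4_K_M weights to about 4.9 GB. The estimate covers weights only; the KV cache and activations need additional memory on top.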

To put this in perspective, a token is usually a bit shorter than a word. Using the common rule of thumb of about 0.75 English words per token, 106.4 tokens per second works out to roughly 80 words per second, far faster than anyone can read. Pretty impressive, right?
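For a feel of what the benchmark figure means in practice, the conversion from tokens to words and response time can be sketched as follows. The 0.75 words-per-token ratio is a rule-of-thumb assumption for English text, not a measured value, and the 300-token reply length is just an example.

```python
TOKENS_PER_SECOND = 106.4  # benchmark figure from the table above
WORDS_PER_TOKEN = 0.75     # assumption: rough average for English text


def words_per_second(tokens_per_second: float,
                     words_per_token: float = WORDS_PER_TOKEN) -> float:
    """Convert a token generation rate into an approximate word rate."""
    return tokens_per_second * words_per_token


def seconds_for_reply(n_tokens: int, tokens_per_second: float) -> float:
    """Time to generate a reply of n_tokens, ignoring prompt processing."""
    return n_tokens / tokens_per_second


print(f"~{words_per_second(TOKENS_PER_SECOND):.0f} words/s")
print(f"300-token reply: ~{seconds_for_reply(300, TOKENS_PER_SECOND):.1f} s")
```

With these inputs, a 300-token answer takes a little under three seconds to generate, not counting the time spent processing the prompt.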

Performance Analysis: Model and Device Comparison

Comparing Llamas: 7B vs. 8B

It's tempting to compare Llama3 8B against a 7B model, but Llama 3 has no 7B variant (that size belongs to Llama 2), and we only have data for the 8B model. Comparing across model families would be apples and oranges, so we'll focus on the available information.

Practical Recommendations: Use Cases and Workarounds

Use Cases

- Chatbots: At roughly 106 tokens per second, the 3080_10GB produces text faster than people read, which makes it well suited to real-time chatbots.

- Content Creation: Draft articles, stories, and other creative content locally without waiting long for output.

- Code Completion: Boost your coding productivity by getting helpful suggestions and completions for your code.

Workarounds

- Model Quantization: The 3080_10GB shows promising results with Q4_K_M quantization. Lower-precision quantization formats could shrink the model further and may raise speed, at some cost in output quality. F16 goes the other way: it is higher precision and larger, and no F16 result is available for this card.

- Fine-tuning: Fine-tuning Llama3 8B on a specific task, such as code generation or summarization, can improve output quality for that task. It does not by itself make the model run faster on the 3080_10GB, since the model's size and architecture stay the same.

FAQ

Q: What is an LLM?

A: An LLM is a large language model, a type of artificial intelligence specifically designed to understand and generate human-like text. These models are trained on massive amounts of data, allowing them to perform tasks like text generation, translation, and question answering.

Q: What is a GPU?

A: GPU stands for "Graphics Processing Unit." It's a specialized processor originally designed to accelerate the rendering of images, videos, and other visual content. In the world of LLMs, GPUs are essential for their parallel processing capabilities, enabling faster computations.

Q: What is Quantization?

A: Quantization is a technique used to reduce the size of a machine learning model without sacrificing too much accuracy. It works by converting the model's values from high-precision numbers (like "float32") to lower-precision numbers (like "float16" or even integer values). This process saves memory and speeds up computations.
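To make the idea concrete, here is a minimal sketch of symmetric 8-bit quantization in plain Python. It is a simplified illustration of the general principle, not the Q4_K_M scheme itself, which works on blocks of weights at lower bit widths; the function names are hypothetical.

```python
from typing import List, Tuple


def quantize_int8(values: List[float]) -> Tuple[List[int], float]:
    """Map floats to integers in [-127, 127] using one shared scale factor."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale


def dequantize(quantized: List[int], scale: float) -> List[float]:
    """Recover approximate floats from the integers and the scale."""
    return [q * scale for q in quantized]


weights = [0.5, -1.0, 0.25, 0.0]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(q, f"scale={scale:.6f}", f"max_error={max_error:.6f}")
```

Each weight is stored as a small integer plus one shared scale, and the rounding error per value is at most half the scale. That trade of a little precision for a lot less memory is what the answer above describes.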

Keywords:

NVIDIA 3080_10GB, Llama3 8B, LLM, Large Language Model, Token Generation Speed, GPU, Quantization, Q4_K_M, F16, Chatbot, Content Creation, Code Completion, Performance Analysis, Benchmark, Local Inference, Use Cases, Workarounds, Fine-tuning.