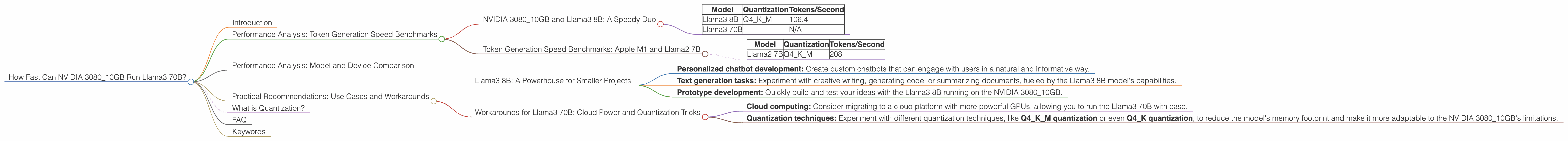

How Fast Can NVIDIA 3080 10GB Run Llama3 70B?

Introduction

The world of large language models (LLMs) is expanding at an exhilarating pace, with new models and advancements popping up seemingly every day. These LLMs are capable of generating human-like text, translating languages, writing different kinds of creative content, and even answering your questions in an informative way. But for developers and enthusiasts looking to run these models locally, the question of hardware performance becomes crucial.

In this deep dive, we'll focus on a popular GPU, the NVIDIA 3080_10GB, and explore how it tackles running the impressive Llama3 70B model. We'll analyze its performance in token generation and see how it stacks up against other models and devices.

Performance Analysis: Token Generation Speed Benchmarks

NVIDIA 3080_10GB and Llama3 8B: A Speedy Duo

Let's start with the smaller Llama3 8B model. The NVIDIA 3080_10GB delivers 106.4 tokens per second when using Q4_K_M quantization. In other words, the GPU generates more than 100 tokens (the word pieces an LLM reads and writes) every second.

Table 1: Token Generation Speed on NVIDIA 3080_10GB

| Model | Quantization | Tokens/Second |
|---|---|---|
| Llama3 8B | Q4_K_M | 106.4 |
| Llama3 70B | N/A | N/A |

Note: We don't have data for Llama3 70B on the NVIDIA 3080_10GB, most likely because the card's 10 GB of VRAM is too small to hold a model of this size.
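To make the memory argument concrete, here is a rough back-of-the-envelope sketch. It assumes nominal parameter counts (8 and 70 billion) and counts the weights only, ignoring the KV cache, activations, and runtime overhead, so real usage will be higher. The function name `weight_memory_gb` is made up for this illustration.

```python
# Rough weights-only estimate; real memory use is higher (KV cache, activations, overhead).
GB = 1e9        # decimal gigabytes
VRAM_GB = 10.0  # NVIDIA 3080_10GB


def weight_memory_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in GB."""
    total_bits = params_billion * 1e9 * bits_per_weight
    return total_bits / 8 / GB


# Nominal parameter counts (assumption); exact counts differ slightly.
for name, params in [("Llama3 8B", 8.0), ("Llama3 70B", 70.0)]:
    for bits in (16, 8, 4):
        size = weight_memory_gb(params, bits)
        verdict = "fits" if size < VRAM_GB else "does not fit"
        print(f"{name} at {bits}-bit: ~{size:.0f} GB ({verdict} in {VRAM_GB:.0f} GB)")
```

Under these assumptions, Llama3 70B needs about 35 GB even at 4 bits per weight, while Llama3 8B at 4 bits needs about 4 GB. Practical 4-bit formats such as Q4_K_M typically use somewhat more than 4 bits per weight on average, so treat these figures as a lower bound.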

Token Generation Speed Benchmarks: Apple M1 and Llama2 7B

For comparison, let's look at another device, the Apple M1, and a different LLM, Llama2 7B. The Apple M1 with its integrated GPU generates around 208 tokens/second when running Llama2 7B with Q4_K_M quantization. Just like a turbocharged engine, the M1 can quickly churn out text, making it a viable option for local LLM deployments.

Table 2: Token Generation Speed on Apple M1

| Model | Quantization | Tokens/Second |
|---|---|---|
| Llama2 7B | Q4_K_M | 208 |

Note: This data is purely for comparison. The two tables use different models (Llama2 7B versus Llama3 8B), and the Apple M1 is not necessarily a direct competitor to the NVIDIA 3080_10GB.

Performance Analysis: Model and Device Comparison

The NVIDIA 3080_10GB shows strong token generation performance for the smaller Llama3 8B model (106.4 tokens/second with Q4_K_M). We don't have data for the larger Llama3 70B on this GPU, so we can't directly compare the two models on it.

Practical Recommendations: Use Cases and Workarounds

Llama3 8B: A Powerhouse for Smaller Projects

The NVIDIA 3080_10GB proves to be a solid choice for running the Llama3 8B model. This combination is ideal for developers working on smaller projects, such as:

- Personalized chatbot development: Create custom chatbots that can engage with users in a natural and informative way.

- Text generation tasks: Experiment with creative writing, generating code, or summarizing documents, fueled by the Llama3 8B model's capabilities.

- Prototype development: Quickly build and test your ideas with the Llama3 8B running on the NVIDIA 3080_10GB.

Workarounds for Llama3 70B: Cloud Power and Quantization Tricks

Running Llama3 70B directly on an NVIDIA 3080_10GB might not be feasible due to memory limitations, but there are workarounds to explore:

- Cloud computing: Consider migrating to a cloud platform with more powerful GPUs, allowing you to run the Llama3 70B with ease.

- Quantization techniques: Experiment with different quantization techniques, like Q4_K_M or Q4_K, to reduce the model's memory footprint. Keep in mind that quantization alone is unlikely to close the gap: at roughly 4 bits per weight, a 70B model still needs about 35 GB for its weights, well beyond the NVIDIA 3080_10GB's 10 GB.
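One way to judge how far quantization can take you is to turn the question around and ask how many bits per weight the card can afford. The sketch below does that arithmetic, again assuming nominal parameter counts and counting weights only. `max_bits_per_weight` and its `reserve_gb` parameter are hypothetical names used for illustration.

```python
def max_bits_per_weight(vram_gb: float, params_billion: float, reserve_gb: float = 0.0) -> float:
    """Largest average bits per weight whose weights alone fit in the given VRAM.

    reserve_gb sets aside memory for everything that is not weights
    (KV cache, activations, runtime overhead); the default of 0 is optimistic.
    """
    usable_bytes = (vram_gb - reserve_gb) * 1e9
    return usable_bytes * 8 / (params_billion * 1e9)


budget_70b = max_bits_per_weight(10.0, 70.0)
budget_8b = max_bits_per_weight(10.0, 8.0)
print(f"Llama3 70B on 10 GB: at most ~{budget_70b:.2f} bits per weight")
print(f"Llama3 8B on 10 GB:  at most ~{budget_8b:.2f} bits per weight")
```

With no memory reserved for anything else, the 70B model would need to average a little over 1 bit per weight to fit in 10 GB, far below what 4-bit formats provide. The 8B model has room to spare at 4 bits.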

What is Quantization?

Imagine trying to fit a whole library of books into a small bookshelf. Quantization in the LLM world is like reducing the number of pages in each book without losing too much information – it makes the model lighter and easier to fit into your GPU's memory.
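To see the idea in code, here is a deliberately simplified sketch of 4-bit quantization: a block of weights shares one scale factor, and each weight is stored as a small integer. This is a toy scheme written for illustration, not the actual Q4_K_M algorithm, and the sample weights are made up.

```python
def quantize_block(values, bits=4):
    """Store each float as a small signed integer plus one shared scale."""
    qmax = 2 ** (bits - 1) - 1                  # 7 for 4-bit: codes lie in [-7, 7]
    peak = max(abs(v) for v in values)
    scale = peak / qmax if peak > 0 else 1.0    # avoid dividing by zero for an all-zero block
    codes = [round(v / scale) for v in values]
    return codes, scale


def dequantize_block(codes, scale):
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]


# Made-up sample weights for illustration.
weights = [0.12, -0.53, 0.90, -0.07, 0.33, -0.88, 0.01, 0.45]
codes, scale = quantize_block(weights)
restored = dequantize_block(codes, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("codes:    ", codes)
print("restored: ", [round(r, 3) for r in restored])
print(f"max error: {max_error:.3f}")
```

Each weight now takes 4 bits instead of 16 or 32, at the cost of a rounding error of at most half the scale. Real formats use more elaborate block layouts to keep that error small.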

FAQ

Q: What's the difference between "generation" and "processing" in the performance data?

A: "Generation" is the speed at which the model produces new text, one token at a time. "Processing" is how quickly the model reads the input prompt before it starts producing output.

Q: If I don't have an NVIDIA 3080_10GB, what are some alternatives for running LLMs locally?

A: There are plenty of options! Consider CPUs, GPUs, or even specialized AI accelerators like TPUs depending on your budget and specific requirements.

Q: Should I focus on generation speed or processing speed?

A: It depends on your use case! If you're building an interactive chatbot, generation speed matters most. If you're running batch jobs over long inputs, processing speed becomes more important.
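As a rough illustration of how the two speeds combine, the sketch below estimates the response time for a short chat turn and for a long-input batch job. The generation speed comes from Table 1. The processing speed and the token counts are hypothetical placeholders, since this article has no processing measurement for the card.

```python
def response_time_seconds(prompt_tokens, output_tokens, processing_tps, generation_tps):
    """Time to read the prompt plus time to generate the reply."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


GENERATION_TPS = 106.4    # Table 1: Llama3 8B, Q4_K_M on the NVIDIA 3080_10GB
PROCESSING_TPS = 2000.0   # hypothetical placeholder, NOT a measurement

# Hypothetical workloads: (prompt tokens, output tokens)
workloads = {"chat turn": (200, 300), "batch summary": (8000, 100)}

for label, (prompt, output) in workloads.items():
    total = response_time_seconds(prompt, output, PROCESSING_TPS, GENERATION_TPS)
    generation_share = (output / GENERATION_TPS) / total
    print(f"{label}: ~{total:.1f} s total, {generation_share:.0%} spent generating")
```

With these placeholder numbers, generation dominates the chat turn while prompt processing dominates the batch job, which is why the right metric depends on the workload.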

Keywords

NVIDIA 3080_10GB, Llama3 70B, Llama3 8B, LLM, Token Generation, Quantization, GPU, Performance, Benchmark, Deep Dive, Local Inference, Cloud Computing, AI Accelerator, TPUs