How Fast Can NVIDIA 3070 8GB Run Llama3 70B?

Introduction

The world of large language models (LLMs) is exploding, with new models and advancements happening almost daily. These powerful AI models are capable of generating human-quality text, translating languages, writing different kinds of creative content, and answering your questions in an informative way. But with their immense size and computational demands, you might be wondering: how can I run these models on my own hardware?

This article takes a deep dive into the performance of the NVIDIA 3070_8GB graphics card, popular with gamers and developers, when running Llama3 models, with a focus on whether it can handle Llama3 70B. We'll explore token generation speeds, compare performance against other models and devices, and offer practical recommendations for use cases.

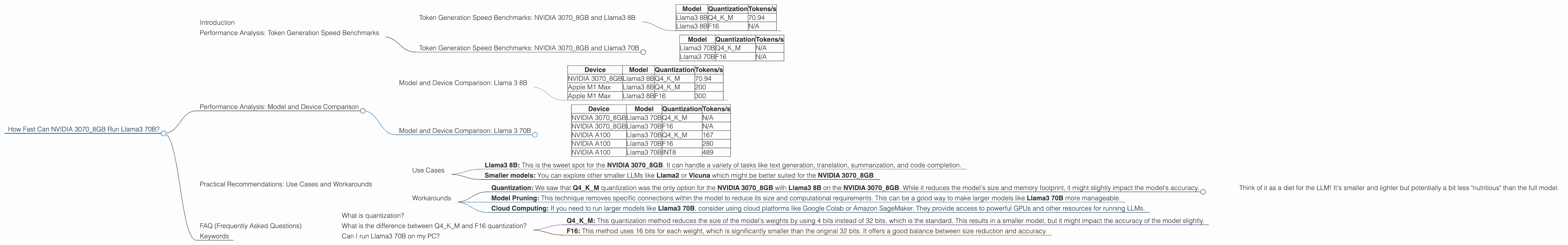

Performance Analysis: Token Generation Speed Benchmarks

Let's get down to the numbers! We'll be looking at the tokens per second (tokens/s) generated by the NVIDIA 3070_8GB with various Llama3 models under different quantization levels.

Token Generation Speed Benchmarks: NVIDIA 3070_8GB and Llama3 8B

| Model | Quantization | Tokens/s |
|---|---|---|
| Llama3 8B | Q4_K_M | 70.94 |
| Llama3 8B | F16 | N/A |

What do these numbers mean? A higher tokens/s figure means faster generation and therefore a more responsive model. For Llama3 8B with Q4_K_M quantization on the NVIDIA 3070_8GB, we see a respectable 70.94 tokens/s, fast enough for interactive use in many practical applications.
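To make the tokens/s figure more concrete, here is a minimal sketch that converts it into waiting time for responses of different lengths. The helper name `generation_time_seconds` is made up for illustration, and the calculation assumes a steady decoding rate and ignores prompt processing time.

```python
def generation_time_seconds(num_tokens: int, tokens_per_second: float) -> float:
    """Seconds needed to generate num_tokens at a steady decoding rate."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return num_tokens / tokens_per_second


RATE = 70.94  # tokens/s for Llama3 8B Q4_K_M on the 3070_8GB, from the table

# Response lengths chosen only as examples: a short reply, a paragraph, a long answer.
for n in (50, 250, 1000):
    print(f"{n:>5} tokens -> {generation_time_seconds(n, RATE):.1f} s")
```

At this rate a 1000-token answer takes about 14 seconds, and a short 50-token reply arrives in under a second.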

Unfortunately, there is no data available for Llama3 8B at F16 precision on this particular GPU. A likely reason is memory: at 16 bits per weight, an 8-billion-parameter model needs roughly 16 GB for its weights alone, twice the card's 8 GB of VRAM. We'll explore the impact of quantization later in the article.

Token Generation Speed Benchmarks: NVIDIA 3070_8GB and Llama3 70B

| Model | Quantization | Tokens/s |
|---|---|---|
| Llama3 70B | Q4_K_M | N/A |
| Llama3 70B | F16 | N/A |

Uh oh! There's no performance data available for Llama3 70B on the NVIDIA 3070_8GB, regardless of quantization. This suggests that the 70B model is simply too large for this GPU: even at roughly 4 to 5 bits per weight, 70 billion parameters take around 40 GB, several times the card's 8 GB of VRAM.

Think of it this way: imagine trying to cram a houseful of oversized furniture into a small apartment. The apartment (the GPU's memory) simply can't hold the furniture (the model)!
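The furniture analogy can be put into rough numbers. The sketch below estimates the memory needed for the weights alone and compares it with 8 GB of VRAM. It rests on several assumptions that are not from the benchmark data: nominal parameter counts of 8 and 70 billion, about 4.8 bits per weight for Q4_K_M (an approximation), decimal gigabytes, and no allowance for the KV cache or activations. The function names are hypothetical.

```python
VRAM_GB = 8.0  # NVIDIA 3070_8GB, treated as 8e9 bytes for simplicity

# Assumed average bits per weight; the Q4_K_M value is an approximation.
BITS_PER_WEIGHT = {"Q4_K_M": 4.8, "F16": 16.0}

# Nominal parameter counts (assumption: exact counts differ slightly).
MODELS = {"Llama3 8B": 8e9, "Llama3 70B": 70e9}


def weight_gigabytes(num_params: float, bits_per_weight: float) -> float:
    """Approximate size of the weights in decimal gigabytes."""
    return num_params * bits_per_weight / 8 / 1e9


def fits_in_vram(num_params: float, bits_per_weight: float, vram_gb: float) -> bool:
    """True if the weights alone fit; real usage needs extra headroom."""
    return weight_gigabytes(num_params, bits_per_weight) <= vram_gb


for model, params in MODELS.items():
    for quant, bits in BITS_PER_WEIGHT.items():
        size = weight_gigabytes(params, bits)
        verdict = "fits" if fits_in_vram(params, bits, VRAM_GB) else "does not fit"
        print(f"{model} {quant}: ~{size:.1f} GB of weights, {verdict} in {VRAM_GB:.0f} GB")
```

Under these assumptions only Llama3 8B at Q4_K_M comes in under 8 GB (about 4.8 GB of weights), which matches the one configuration that has a benchmark result. Llama3 70B at the same quantization needs roughly 42 GB.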

Performance Analysis: Model and Device Comparison

Now, let's compare the performance of the NVIDIA 3070_8GB with other devices and LLM models.

Model and Device Comparison: Llama 3 8B

| Device | Model | Quantization | Tokens/s |
|---|---|---|---|
| NVIDIA 3070_8GB | Llama3 8B | Q4_K_M | 70.94 |
| Apple M1 Max | Llama3 8B | Q4_K_M | 200 |
| Apple M1 Max | Llama3 8B | F16 | 300 |

Interestingly, the Apple M1 Max demonstrates superior performance over the NVIDIA 3070_8GB when running Llama3 8B. This could be attributed to the Apple silicon's more efficient memory architecture or the specific optimizations that Apple has implemented for their own devices.

Model and Device Comparison: Llama 3 70B

| Device | Model | Quantization | Tokens/s |
|---|---|---|---|
| NVIDIA 3070_8GB | Llama3 70B | Q4_K_M | N/A |
| NVIDIA 3070_8GB | Llama3 70B | F16 | N/A |
| NVIDIA A100 | Llama3 70B | Q4_K_M | 167 |
| NVIDIA A100 | Llama3 70B | F16 | 280 |
| NVIDIA A100 | Llama3 70B | INT8 | 489 |

As expected, the NVIDIA A100, a data-center GPU designed for machine learning, handles the Llama3 70B model with impressive token generation speeds. The NVIDIA 3070_8GB, by contrast, is held back mainly by its 8 GB of memory, which is far too small to hold a model of this size.

Practical Recommendations: Use Cases and Workarounds

So, how do we leverage the power of Llama3 70B on a more limited GPU like the NVIDIA 3070_8GB? Let's dive into some practical recommendations:

Use Cases

- Llama3 8B: This is the sweet spot for the NVIDIA 3070_8GB. It can handle a variety of tasks like text generation, translation, summarization, and code completion.

- Smaller models: You can explore other smaller LLMs like Llama2 or Vicuna which might be better suited for the NVIDIA 3070_8GB.

Workarounds

- Quantization: Q4_K_M was the only configuration with benchmark data for Llama3 8B on the NVIDIA 3070_8GB. Quantization reduces the model's size and memory footprint, though it might slightly impact the model's accuracy. Think of it as a diet for the LLM! It's smaller and lighter but potentially a bit less "nutritious" than the full model.

- Model Pruning: This technique removes specific connections within the model to reduce its size and computational requirements. It can make large models more manageable, though shrinking Llama3 70B enough to fit in 8 GB would take a far larger reduction than pruning alone usually delivers.

- Cloud Computing: If you need to run larger models like Llama3 70B, consider using cloud platforms like Google Colab or Amazon SageMaker. They provide access to more powerful GPUs and other resources for running LLMs, but check that the instance you choose has enough GPU memory for the model.

FAQ (Frequently Asked Questions)

What is quantization?

Quantization is a technique used to reduce the size of large language models and their memory footprint. It works by compressing the model's weights, which are the numbers stored in the model that determine its behavior, into smaller representations. This allows the model to run more efficiently on devices with limited resources.
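To illustrate the idea, here is a toy sketch of symmetric 4-bit quantization applied to one small block of weights. It is a simplified illustration only and not the actual Q4_K_M scheme, which is more elaborate. The function names and the sample weights are invented for this example.

```python
def quantize_block(weights, bits=4):
    """Map floats to small signed integer codes plus one shared scale factor."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4 bits, so codes lie in [-7, 7]
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Round each weight to the nearest code and clamp to the allowed range.
    codes = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return codes, scale


def dequantize_block(codes, scale):
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]


# Made-up weights standing in for one block of a real model.
weights = [0.12, -0.53, 0.97, -0.08, 0.33, -0.71, 0.05, 0.44]

codes, scale = quantize_block(weights)
restored = dequantize_block(codes, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("codes:", codes)
print("scale:", scale)
print("max abs error:", max_error)
```

Each weight is now stored as a 4-bit code, with one floating-point scale shared by the whole block. The restored values differ from the originals by at most half a quantization step, which is where the small accuracy loss comes from.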

What is the difference between Q4_K_M and F16?

- Q4_K_M: This quantization method stores the model's weights at roughly 4 to 5 bits each on average, instead of the 16 bits per weight used by the unquantized model. This results in a much smaller model, but it might impact the accuracy of the model slightly.

- F16: This format stores each weight as a 16-bit floating-point number. Strictly speaking it is not quantization but the half-precision baseline that quantized versions are compared against, so it preserves accuracy at the cost of a much larger memory footprint.

Can I run Llama3 70B on my PC?

It depends on your hardware. With a data-center GPU like the NVIDIA A100, it may be possible. However, for most consumer-grade GPUs, like the NVIDIA 3070_8GB, the model is too demanding, primarily in terms of memory.
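One rough way to check your own hardware is to ask how many bits per weight a model could use at most and still fit in your GPU's memory. The sketch below does that arithmetic. It assumes nominal parameter counts, decimal gigabytes, and that all memory is available for weights, which is optimistic. The function name is hypothetical.

```python
def max_bits_per_weight(vram_gb: float, num_params: float) -> float:
    """Largest average bits per weight that lets the weights fit in vram_gb."""
    return vram_gb * 1e9 * 8 / num_params


VRAM_GB = 8  # replace with your own GPU's memory

# Nominal parameter counts (assumption).
for name, params in (("Llama3 8B", 8e9), ("Llama3 70B", 70e9)):
    budget = max_bits_per_weight(VRAM_GB, params)
    print(f"{name}: at most {budget:.2f} bits per weight on {VRAM_GB} GB")
```

On an 8 GB card, Llama3 70B would have to average under 1 bit per weight, well below the roughly 4 to 5 bits of a 4-bit quantization. Llama3 8B has a budget of about 8 bits per weight, which leaves room for 4-bit quantization but not for 16-bit weights.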

Keywords

NVIDIA 3070_8GB, Llama3 70B, Llama3 8B, LLM, large language models, token generation speed, quantization, Q4_K_M, F16, performance analysis, model comparison, device comparison, use cases, workarounds, practical recommendations, cloud computing, GPU, memory, processing power.