How Fast Can Apple M3 Run Llama2 7B?

Introduction

The world of large language models (LLMs) is heating up, and everyone wants to get in on the action. But running these complex models often requires powerful hardware. With the release of the Apple M3 chip, we're seeing a new generation of computing power hit the market. But how does the M3 stack up when it comes to handling the demands of LLMs like Llama2 7B?

This article dives deep into the performance of the Apple M3 when running the Llama2 7B model. We'll look at key benchmarks, see what we can (and can't yet) say about other devices, and offer practical recommendations for common use cases. Buckle up, it's going to be a geek-tastic ride!

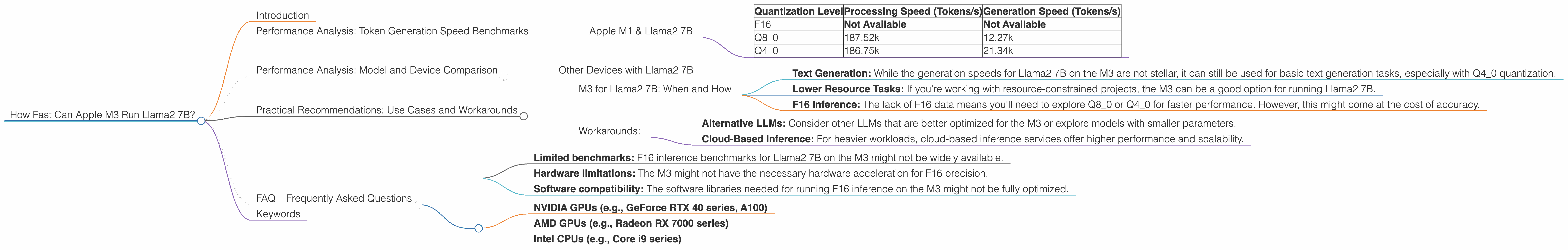

Performance Analysis: Token Generation Speed Benchmarks

Apple M3 & Llama2 7B

The following table shows prompt processing and token generation speeds, in tokens per second, for Llama2 7B on an Apple M3 at various quantization levels. Here's the breakdown:

| Quantization Level | Prompt Processing (tokens/s) | Token Generation (tokens/s) |
|---|---|---|
| F16 | Not Available | Not Available |
| Q8_0 | 187.52 | 12.27 |
| Q4_0 | 186.75 | 21.34 |

Note: We don't have data for the F16 quantization level for Llama2 7B on the M3. More on that in a bit!

Here's what you need to know:

- Quantization stores the model's weights at lower numerical precision, shrinking its size and memory footprint. A smaller model means less data to move for every generated token, which can speed up inference.

- F16 (16-bit half-precision floating point) is a common inference format and, in these benchmarks, the unquantized baseline: it keeps the most accuracy but needs the most memory.

- Q8_0 and Q4_0 are llama.cpp quantization formats that store weights in roughly 8 and 4 bits, respectively. Q4_0 is the more aggressive of the two, trading some accuracy for a smaller, faster model.
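To put rough numbers on "size and memory footprint", here is a minimal sketch that estimates how much memory Llama2 7B's weights need in each format. It assumes about 6.74 billion parameters and llama.cpp's block layouts (Q8_0: 32 int8 values plus one fp16 scale per block of 32 weights; Q4_0: 32 four-bit values packed into 16 bytes plus one fp16 scale), and it pretends every tensor uses the same format.

```python
# Rough weight-memory estimate for Llama2 7B in three llama.cpp formats.
N_PARAMS = 6.74e9   # assumed parameter count for Llama2 7B
BLOCK = 32          # weights per quantization block in Q8_0 / Q4_0

# Bytes needed to store one block of 32 weights in each format.
BYTES_PER_BLOCK = {
    "F16": 2 * BLOCK,        # no real blocks: 2 bytes for every weight
    "Q8_0": 2 + BLOCK,       # one fp16 scale + 32 int8 values
    "Q4_0": 2 + BLOCK // 2,  # one fp16 scale + 32 four-bit values packed into 16 bytes
}

def bits_per_weight(fmt: str) -> float:
    """Average storage cost of one weight, scale included."""
    return BYTES_PER_BLOCK[fmt] * 8 / BLOCK

def weight_gib(fmt: str, n_params: float = N_PARAMS) -> float:
    """Approximate size of all weights in GiB if every tensor uses `fmt`."""
    return n_params * bits_per_weight(fmt) / 8 / 2**30

for fmt in BYTES_PER_BLOCK:
    print(f"{fmt:<5} {bits_per_weight(fmt):5.2f} bits/weight  ~{weight_gib(fmt):.2f} GiB")
```

Under these assumptions it comes out at about 12.55 GiB for F16, 6.67 GiB for Q8_0, and 3.53 GiB for Q4_0. Real model files run slightly larger, because a few tensors are usually kept at higher precision.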

Key Takeaway: The M3 processes prompts at roughly 187 tokens per second with both Q8_0 and Q4_0. Token generation is far slower, at 12.27 tokens/s with Q8_0 and 21.34 tokens/s with Q4_0, so the smaller Q4_0 model generates text about 1.7 times faster.
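Why does generation speed nearly double with Q4_0 while prompt processing barely moves? A common back-of-the-envelope explanation is that generating each token streams roughly all of the weights from memory once, so generation is capped by memory bandwidth divided by model size. The sketch below applies that idea; it assumes the base M3's 100 GB/s unified-memory bandwidth (Apple's published figure), about 6.74 billion parameters, and llama.cpp's 8.5 and 4.5 bits per weight for Q8_0 and Q4_0, and it ignores KV-cache reads and compute overhead.

```python
# Bandwidth-bound ceiling for token generation: each new token reads
# (roughly) every weight from memory once.
BANDWIDTH_BYTES_PER_S = 100e9  # assumed: base M3 unified-memory bandwidth
N_PARAMS = 6.74e9              # assumed: Llama2 7B parameter count
BITS_PER_WEIGHT = {"Q8_0": 8.5, "Q4_0": 4.5}
MEASURED_TG = {"Q8_0": 12.27, "Q4_0": 21.34}  # tokens/s, from the table

def tg_ceiling(bits_per_weight: float) -> float:
    """Upper bound on tokens/s if reading the weights were the only cost."""
    weight_bytes = N_PARAMS * bits_per_weight / 8
    return BANDWIDTH_BYTES_PER_S / weight_bytes

for fmt, bpw in BITS_PER_WEIGHT.items():
    ceiling = tg_ceiling(bpw)
    print(f"{fmt}: ceiling ~{ceiling:.1f} tok/s, measured {MEASURED_TG[fmt]} tok/s "
          f"({MEASURED_TG[fmt] / ceiling:.0%} of ceiling)")
```

With these assumptions the ceilings land near 14 tokens/s (Q8_0) and 26 tokens/s (Q4_0), and the measured speeds sit at roughly 80 to 90 percent of them. Prompt processing, by contrast, handles many tokens per pass over the weights and is limited more by compute than by bandwidth, which is consistent with the two formats posting nearly identical processing speeds.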

Performance Analysis: Model and Device Comparison

Let's compare the performance of the M3 with other devices and LLMs. We'll focus on the Llama2 7B model for consistency.

Other Devices with Llama2 7B

Unfortunately, we don't have performance data for other devices running Llama2 7B. We'll have to get our hands on more benchmarks for a complete picture.

Practical Recommendations: Use Cases and Workarounds

M3 for Llama2 7B: When and How

So, where does the M3 fit in the LLM landscape?

- Text Generation: Generation speeds of 12 to 21 tokens per second are not stellar, but they are usable for basic text generation tasks, especially with Q4_0 quantization.

- Lower Resource Tasks: If you're working with resource-constrained projects, the M3 can be a good option for running Llama2 7B.

- F16 Inference: With no F16 numbers available, Q8_0 and Q4_0 are the measured options. Q4_0 is faster, while Q8_0 stays closer to the original model's accuracy.
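To see what these speeds mean for a single request, here is a rough sketch that adds prompt-processing time to generation time using the M3 figures from the table. The 500-token prompt and 250-token reply are hypothetical sizes chosen for illustration, and the sketch assumes throughput stays constant regardless of length (in practice it tends to drop as the context grows).

```python
# Rough end-to-end time for one request on the M3:
# time = prompt_tokens / PP speed + output_tokens / TG speed
SPEEDS = {  # format: (prompt processing tokens/s, token generation tokens/s)
    "Q8_0": (187.52, 12.27),
    "Q4_0": (186.75, 21.34),
}

def request_seconds(fmt: str, prompt_tokens: int, output_tokens: int) -> float:
    """Estimated wall-clock seconds, assuming constant throughput."""
    pp, tg = SPEEDS[fmt]
    return prompt_tokens / pp + output_tokens / tg

# Hypothetical request size, for illustration only.
for fmt in SPEEDS:
    t = request_seconds(fmt, prompt_tokens=500, output_tokens=250)
    print(f"{fmt}: ~{t:.1f} s for a 500-token prompt and a 250-token reply")
```

Under these assumptions the reply accounts for most of the wait (about 20 s with Q8_0 versus about 12 s with Q4_0, against under 3 s of prompt processing), which is why Q4_0's faster generation matters most for chat-style use.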

Workarounds:

- Alternative LLMs: Consider other LLMs that are better optimized for the M3, or explore models with smaller parameter counts.

- Cloud-Based Inference: For heavier workloads, cloud-based inference services offer higher performance and scalability.

FAQ – Frequently Asked Questions

Q: What is an LLM?

A: A large language model (LLM) is a type of artificial intelligence that is trained on massive amounts of text data. They can generate text, translate languages, write different kinds of creative content, and answer your questions in an informative way.

Q: What is quantization?

A: Quantization is a technique that compresses the weights of an LLM, reducing its size and memory footprint. This can help speed up the inference process. Think of it like a file compression tool for your LLM!
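Here is a toy version of that "compression", loosely modeled on llama.cpp's Q4_0 idea: each block of 32 weights is stored as one scale plus 32 four-bit integers (about 18 bytes instead of 64 in F16). This is a simplified sketch, not llama.cpp's actual code: it uses plain Python floats, skips bit packing, and does not round the scale to fp16. The function names are hypothetical.

```python
import random

BLOCK = 32  # Q4_0-style blocks hold 32 weights

def quantize_block(xs: list[float]) -> tuple[float, list[int]]:
    """Quantize 32 floats to one scale plus 32 integers in 0..15."""
    assert len(xs) == BLOCK
    # The value with the largest magnitude (sign kept) sets the scale,
    # so that it maps to the bottom of the 4-bit range.
    amax = max(xs, key=abs)
    d = amax / -8 if amax != 0 else 0.0
    inv_d = 1.0 / d if d != 0 else 0.0
    # Shift by 8 so signed values in [-8, 8] land in the unsigned range 0..15.
    qs = [min(15, max(0, round(x * inv_d) + 8)) for x in xs]
    return d, qs

def dequantize_block(d: float, qs: list[int]) -> list[float]:
    """Reconstruct approximate weights from the scale and 4-bit codes."""
    return [(q - 8) * d for q in qs]

# Usage: round-trip one random block and measure the worst-case error.
random.seed(0)
weights = [random.uniform(-1.0, 1.0) for _ in range(BLOCK)]
d, qs = quantize_block(weights)
restored = dequantize_block(d, qs)
max_err = max(abs(a - b) for a, b in zip(weights, restored))
print(f"scale={d:.4f}  max abs error={max_err:.4f}")
```

The restored weights are close but not identical to the originals: that small, bounded error is the accuracy cost mentioned above, paid in exchange for storing each weight in about 4.5 bits.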

Q: Why don't we have F16 data for Llama2 7B on the M3?

A: It could be due to a few reasons:

- Limited benchmarks: F16 inference benchmarks for Llama2 7B on the M3 might not be widely available.

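- Hardware limitations: The tested M3 might not have had enough memory. At 2 bytes per weight, Llama2 7B needs roughly 13.5 GB for its F16 weights alone, which will not fit on an M3 configured with 8 GB of unified memory.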

- Software compatibility: The software libraries needed for running F16 inference on the M3 might not be fully optimized.

Q: What are some other devices that can run Llama2 7B?

A: We don't have benchmark numbers for them in this article, but other powerful devices that could potentially run Llama2 7B include:

- NVIDIA GPUs (e.g., GeForce RTX 40 series, A100)

- AMD GPUs (e.g., Radeon RX 7000 series)

- Intel CPUs (e.g., Core i9 series)

Keywords

Apple M3, Llama2 7B, LLM, Token Generation Speed, Quantization, F16, Q8_0, Q4_0, Benchmarks, GPU, CPU, Inference, Text Generation, Cloud-Based Inference, Performance, Practical Recommendations, Model Comparison, Use Cases, Workarounds