How Fast Can Apple M3 Pro Run Llama2 7B?

Introduction

In the fast-moving world of Large Language Models (LLMs), speed is king. Imagine generating a storyline, translating technical documents, or drafting product descriptions with a model running entirely on your own machine. That's the promise of local LLMs, and Apple's M3 Pro chip is a capable platform for them.

This article examines how fast the Apple M3 Pro runs the Llama2 7B model, what limits its token generation speed, and which applications suit it best.

Performance Analysis: Token Generation Speed Benchmarks

Apple M3 Pro and Llama2 7B: A Speed Showdown

Let's dive into the numbers, shall we? The M3 Pro runs Llama2 7B on its GPU at different speeds depending on the quantization level (F16, Q8_0, or Q4_0).

Quantization is like a super-compressor for LLMs. It shrinks the model by storing each weight with fewer bits (for example 8 or 4 bits instead of 16). This cuts memory use and reduces how much data the chip must read for every token. Think of it as squeezing a large, complex model into a smaller, more manageable file, usually at a small cost in accuracy.
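To make the idea concrete, here is a minimal Python sketch of block quantization in the spirit of llama.cpp's Q8_0 format. It assumes the Q8_0 layout of 32 weights per block sharing one 16-bit scale. The function names are hypothetical, the scale is kept as an ordinary Python float, and this is not llama.cpp's actual code. It also shows where the 8.5 and 4.5 bits-per-weight storage costs of Q8_0 and Q4_0 come from.

```python
import random

BLOCK_SIZE = 32  # llama.cpp's Q8_0 and Q4_0 formats use blocks of 32 weights


def quantize_q8_block(weights):
    """Map a block of floats to int8 values that share one scale factor."""
    amax = max(abs(w) for w in weights)
    scale = amax / 127.0 if amax > 0 else 1.0  # largest magnitude maps to +/-127
    quants = [round(w / scale) for w in weights]  # integers in [-127, 127]
    return scale, quants


def dequantize_q8_block(scale, quants):
    """Recover approximate floats by multiplying each integer by the scale."""
    return [q * scale for q in quants]


def bits_per_weight(block_size=BLOCK_SIZE, quant_bits=8, scale_bits=16):
    """Storage cost per weight: the integer payload plus one 16-bit scale per block."""
    return (block_size * quant_bits + scale_bits) / block_size


if __name__ == "__main__":
    random.seed(0)
    block = [random.gauss(0.0, 0.02) for _ in range(BLOCK_SIZE)]  # toy weights
    scale, quants = quantize_q8_block(block)
    restored = dequantize_q8_block(scale, quants)
    max_err = max(abs(a - b) for a, b in zip(block, restored))
    print(f"scale={scale:.6f}, max abs error={max_err:.6f}")  # at most about scale / 2
    print(f"Q8_0-style storage: {bits_per_weight():.1f} bits/weight")
    print(f"Q4_0-style storage: {bits_per_weight(quant_bits=4):.1f} bits/weight")
```

The per-block scale explains why Q8_0 costs 8.5 bits per weight rather than exactly 8. llama.cpp's real Q4_0 rounding differs in detail from this 8-bit sketch, but it uses the same block-plus-scale structure.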

Note: Data for F16 (half-precision floating point) is currently unavailable, so we'll focus on the Q8_0 and Q4_0 configurations.

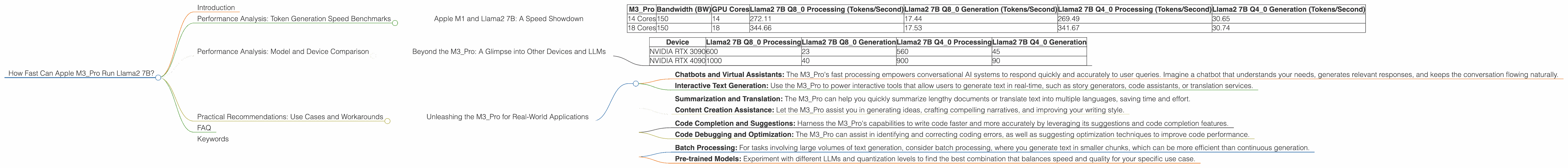

| M3 Pro variant | Memory bandwidth (GB/s) | GPU cores | Llama2 7B Q8_0 processing (tokens/s) | Llama2 7B Q8_0 generation (tokens/s) | Llama2 7B Q4_0 processing (tokens/s) | Llama2 7B Q4_0 generation (tokens/s) |
|---|---|---|---|---|---|---|
| 14-core GPU | 150 | 14 | 272.11 | 17.44 | 269.49 | 30.65 |
| 18-core GPU | 150 | 18 | 344.66 | 17.53 | 341.67 | 30.74 |

What do these numbers tell us?

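- Processing (prompt processing) is the speed at which the model reads and evaluates the input text. It is measured in tokens, the word fragments the model works with. Because the whole prompt is known up front, its tokens can be processed in parallel.

- Generation is the speed at which the model produces new tokens. Each new token depends on the ones before it, so tokens are generated one at a time.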
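The two rates apply to different phases. A rough response time is therefore the prompt length divided by the processing rate, plus the output length divided by the generation rate. The sketch below applies that formula to the 14-core figures. The 500-token prompt, the 200-token reply, and the function name are illustrative assumptions. The estimate also ignores model loading and the gradual slowdown as the context grows.

```python
# Measured M3 Pro (14-core GPU) rates from the table, in tokens/second.
RATES = {
    "Q8_0": {"processing": 272.11, "generation": 17.44},
    "Q4_0": {"processing": 269.49, "generation": 30.65},
}


def estimate_seconds(prompt_tokens, output_tokens, processing_tps, generation_tps):
    """Rough response time: read the whole prompt, then emit tokens one by one."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


for quant, rate in RATES.items():
    t = estimate_seconds(500, 200, rate["processing"], rate["generation"])
    print(f"{quant}: ~{t:.1f} s for a 500-token prompt and a 200-token reply")
```

With these inputs, the sketch estimates about 13.3 seconds for Q8_0 and 8.4 seconds for Q4_0. In both cases most of the time is spent generating, not processing.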

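The M3 Pro's prompt processing is fast: roughly 270 to 345 tokens per second, so a 300-token prompt is read in about one second. That responsiveness suits interactive applications like chatbots and assistants, where the model must read the user's input before it can respond.

Generation is much slower: about 17.5 tokens per second with Q8_0 and about 30.7 with Q4_0. Two patterns in the table explain why.

The 18-core GPU processes prompts about 27% faster than the 14-core version, roughly in line with its extra cores. Yet it generates at essentially the same speed. Both variants have the same 150 GB/s memory bandwidth, and generation is limited by how fast the weights can be read from memory.

Likewise, Q4_0 generates about 1.75 times faster than Q8_0 because its weights are roughly half the size. For long-form writing or large code outputs, generation speed is what users will notice most.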
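The bandwidth argument can be checked with a back-of-the-envelope calculation. If every weight must be read once per generated token, generation speed cannot exceed memory bandwidth divided by model size. This sketch assumes about 6.74 billion parameters for Llama2 7B. It also assumes llama.cpp's storage costs of 8.5 bits per weight for Q8_0 and 4.5 for Q4_0, including block scales. It ignores KV-cache traffic and any tensors stored at other precisions, so treat the result as an estimate.

```python
PARAMS = 6.74e9          # approximate Llama2 7B parameter count (assumption)
BANDWIDTH_GBPS = 150     # M3 Pro memory bandwidth listed in the table, GB/s
BITS_PER_WEIGHT = {"Q8_0": 8.5, "Q4_0": 4.5}       # llama.cpp block formats incl. scales
MEASURED_GEN_TPS = {"Q8_0": 17.44, "Q4_0": 30.65}  # 14-core GPU, from the table


def weight_bytes(params, bits_per_weight):
    """Total bytes of weights that must be streamed from memory per token."""
    return params * bits_per_weight / 8


def generation_ceiling(bandwidth_gbps, model_bytes):
    """Upper bound on tokens/s if each token reads every weight exactly once."""
    return bandwidth_gbps * 1e9 / model_bytes


for quant, bits in BITS_PER_WEIGHT.items():
    size = weight_bytes(PARAMS, bits)
    ceiling = generation_ceiling(BANDWIDTH_GBPS, size)
    share = MEASURED_GEN_TPS[quant] / ceiling
    print(f"{quant}: {size / 1e9:.2f} GB, ceiling ~{ceiling:.1f} tok/s, measured = {share:.0%}")
```

Under these assumptions, the ceilings come out at roughly 21 tokens per second for Q8_0 and 40 for Q4_0. The measured 14-core figures reach about 83% and 77% of those limits.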

Note: The numbers above provide a general sense of performance. Actual speed also depends on factors such as prompt length, output length, context size, and the software and settings used.

Performance Analysis: Model and Device Comparison

Beyond the M3 Pro: A Glimpse at Other Devices

To put the M3 Pro's results in context, it helps to compare them with other hardware running the same model.

For reference, here are Llama2 7B figures for two NVIDIA desktop GPUs (numbers represent tokens per second):

| Device | Llama2 7B Q8_0 processing (tokens/s) | Llama2 7B Q8_0 generation (tokens/s) | Llama2 7B Q4_0 processing (tokens/s) | Llama2 7B Q4_0 generation (tokens/s) |
|---|---|---|---|---|
| NVIDIA RTX 3090 | 600 | 23 | 560 | 45 |
| NVIDIA RTX 4090 | 1000 | 40 | 900 | 90 |

Key Observations:

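- The M3 Pro holds its own, though it trails these desktop GPUs. By these figures, the 18-core M3 Pro reaches a bit over half of the RTX 3090's prompt-processing speed (344.66 vs 600 tokens/s for Q8_0). It also reaches about two-thirds of the 3090's Q4_0 generation speed (30.74 vs 45 tokens/s). That is notable for a laptop chip with a far smaller power budget.

- The RTX 4090 is the fastest of the devices listed in every column, with nearly three times the M3 Pro's Q4_0 generation speed.

- Generation is the bottleneck on every device, running roughly 9 to 26 times slower than prompt processing. Each new token requires a full pass over the model's weights and must wait for the previous token. As a result, generation is bound by memory bandwidth rather than raw compute. Speeding it up remains an active area of research.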
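The comparisons above are simple ratios of the figures in the two tables. The snippet below recomputes them. The dictionary just copies the article's numbers, with the 18-core M3 Pro as the point of comparison, and the helper name is illustrative.

```python
# Figures copied from the two tables in this article (tokens/second).
DEVICES = {
    "M3 Pro 18-core": {"q8_pp": 344.66, "q8_tg": 17.53, "q4_pp": 341.67, "q4_tg": 30.74},
    "RTX 3090": {"q8_pp": 600, "q8_tg": 23, "q4_pp": 560, "q4_tg": 45},
    "RTX 4090": {"q8_pp": 1000, "q8_tg": 40, "q4_pp": 900, "q4_tg": 90},
}


def relative_to(device, reference, metric):
    """Speed of `device` as a fraction of `reference` for one metric."""
    return DEVICES[device][metric] / DEVICES[reference][metric]


for reference in ("RTX 3090", "RTX 4090"):
    for metric in ("q8_pp", "q4_tg"):
        share = relative_to("M3 Pro 18-core", reference, metric)
        print(f"M3 Pro vs {reference}, {metric}: {share:.0%}")

for name, d in DEVICES.items():
    # How many times faster prompt processing is than generation (Q4_0).
    print(f"{name}: Q4_0 processing / generation = {d['q4_pp'] / d['q4_tg']:.1f}x")
```

The script reports the M3 Pro at about 57% of the RTX 3090's Q8_0 processing speed and 68% of its Q4_0 generation speed. It also shows Q4_0 processing running roughly 10 to 12 times faster than generation on each device.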

Practical Recommendations: Use Cases and Workarounds

Unleashing the M3 Pro for Real-World Applications

Now that we have a better understanding of the M3 Pro's performance, it's time to explore how we can best leverage its capabilities:

1. Real-time Applications:

- Chatbots and Virtual Assistants: The M3 Pro's fast prompt processing lets a local conversational assistant read user queries quickly and start responding with little delay. With Q4_0, it streams replies at about 30 tokens per second. Imagine a chatbot that understands your needs, generates relevant responses, and keeps the conversation flowing naturally.

- Interactive Text Generation: Use the M3 Pro to power interactive tools that let users generate text in real time, such as story generators, code assistants, or translation services.

2. Content Creation and Analysis:

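- Summarization and Translation: Summarization plays to the M3 Pro's strengths, since it pairs a long input (fast to process) with a short output (slower to generate). Translation produces roughly as much text as it reads, so generation speed matters more there.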

- Content Creation Assistance: Let the M3 Pro assist you in generating ideas, crafting compelling narratives, and improving your writing style.

3. Code Generation and Debugging:

- Code Completion and Suggestions: Run Llama2 7B on the M3 Pro as a local assistant that suggests code completions as you write.

- Code Debugging and Optimization: The model can help identify and correct coding errors and suggest ways to improve code performance.

4. Workarounds for Generation Speed:

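- Batching: If you have many independent prompts, process them together as a batch rather than one after another. Because generation is limited by memory bandwidth, several sequences can share each pass over the weights. This raises total throughput, although each individual response is not faster.

- Model and Quantization Choice: Experiment with different LLMs and quantization levels to find the combination that best balances speed and quality for your use case. On the M3 Pro, Q4_0 generates about 75% faster than Q8_0, at some cost in accuracy.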
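One way to act on the last recommendation is to pick the highest-precision option that still meets your speed target. The helper below is a hypothetical illustration using the measured 14-core generation speeds. It assumes Q8_0 preserves more accuracy than Q4_0, and the speed targets in the usage lines are placeholders.

```python
# Measured M3 Pro (14-core GPU) generation speeds from the table, tokens/second.
GENERATION_TPS = {"Q8_0": 17.44, "Q4_0": 30.65}

# Assumed ordering: higher-precision formats first (they usually lose less accuracy).
PRECISION_ORDER = ["Q8_0", "Q4_0"]


def pick_quantization(min_tokens_per_second):
    """Return the highest-precision format meeting the target, or None if none does."""
    for quant in PRECISION_ORDER:
        if GENERATION_TPS[quant] >= min_tokens_per_second:
            return quant
    return None


print(pick_quantization(15))  # Q8_0 is already fast enough
print(pick_quantization(25))  # only Q4_0 meets this target
print(pick_quantization(40))  # neither measured format meets this target
```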

FAQ

Q: What is quantization and how does it affect LLM performance?

A: Quantization is like a diet plan for LLMs. It reduces the size of the model by representing numbers with fewer bits. This lowers memory requirements and usually speeds up inference, but it can slightly reduce the model's accuracy.

Q: Can I run Llama2 7B on a standard desktop computer?

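A: Usually, yes. The main requirement is memory rather than raw computing power. The weights alone take roughly 13.5 GB at F16, 7.2 GB at Q8_0, and 3.8 GB at Q4_0, plus extra room for the context. A modern desktop CPU with enough RAM can run the quantized versions. Generation is typically slower than on the M3 Pro, though, because desktop memory offers less bandwidth. A discrete GPU with enough VRAM, such as the RTX 3090 or 4090, is faster still.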
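A model's memory footprint can be estimated by multiplying its parameter count by the storage cost per weight. This sketch assumes about 6.74 billion parameters for Llama2 7B and 16 bits per weight for F16. For Q8_0 and Q4_0, it uses llama.cpp's 8.5 and 4.5 bits per weight. The 1 GB allowance for context and runtime in the hypothetical `fits` helper is an arbitrary assumption, so real requirements will vary with context length and software.

```python
PARAMS = 6.74e9  # approximate Llama2 7B parameter count (assumption)

# Storage cost per weight; Q8_0/Q4_0 include llama.cpp's per-block fp16 scales.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}


def weights_gb(params, bits_per_weight):
    """Approximate size of the weights in gigabytes (1 GB = 1e9 bytes)."""
    return params * bits_per_weight / 8 / 1e9


def fits(budget_gb, quant, overhead_gb=1.0):
    """Crude check: weights plus an assumed allowance for context and runtime."""
    return weights_gb(PARAMS, BITS_PER_WEIGHT[quant]) + overhead_gb <= budget_gb


for quant, bits in BITS_PER_WEIGHT.items():
    print(f"{quant}: ~{weights_gb(PARAMS, bits):.1f} GB of weights")
print("Q4_0 fits in 8 GB:", fits(8, "Q4_0"))
print("Q8_0 fits in 8 GB:", fits(8, "Q8_0"))
```

The script prints roughly 13.5, 7.2, and 3.8 GB. Under the assumed overhead, Q4_0 fits in 8 GB while Q8_0 does not.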

Q: Where can I find more information about LLMs and their performance?

A: You can explore resources like the Hugging Face model hub (https://huggingface.co/) and the LLM benchmarks repository (https://github.com/XiongjieDai/GPU-Benchmarks-on-LLM-Inference) for comprehensive information on LLMs and their performance.

Q: Is the M3_Pro the only device capable of running Llama2 7B?

A: No, the M3 Pro is just one example. CPUs and GPUs from companies like NVIDIA, AMD, and Intel can also run Llama2 7B. What stands out about the M3 Pro is how much performance it delivers within a laptop's power budget.

Keywords

Apple M3 Pro, Llama2 7B, Token Generation Speed, Quantization, F16, Q8_0, Q4_0, Processing, Generation, LLM, GPU, LLM Performance, Local LLM, Chatbots, Virtual Assistants, Content Creation, Code Generation, LLM Benchmarks, GPU Benchmarks, Performance Optimization, Efficiency, Energy Efficient, Apple Silicon, Deep Dive, Performance Analysis, Practical Recommendations, Use Cases