How Fast Can Apple M3 Max Run Llama3 8B?

Introduction

The world of large language models (LLMs) is buzzing with excitement, and rightfully so! These sophisticated AI models are capable of generating human-like text, translating languages, writing different kinds of creative content, and answering your questions in an informative way. But all this power comes at a cost: computational resources.

To run LLMs locally, you need a powerful machine. Enter the Apple M3 Max, a chip built for demanding workloads like AI and machine learning. But how does this beast perform when running Llama3 8B? Let's dive in!

Performance Analysis: Token Generation Speed Benchmarks

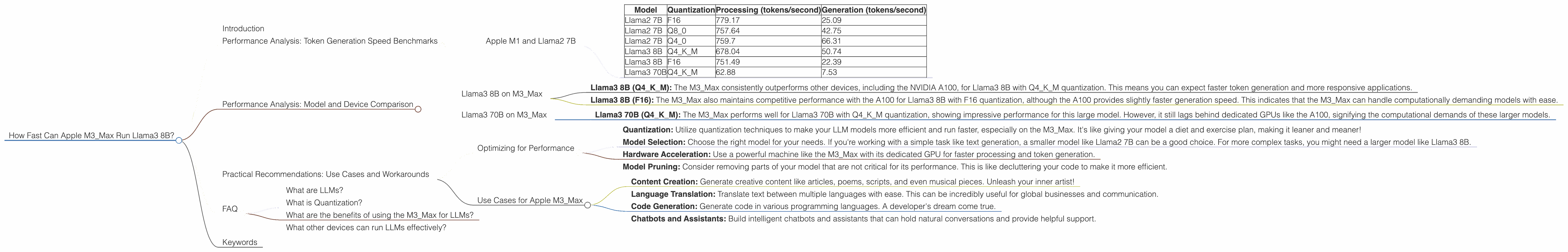

Apple M3 Max with Llama2 and Llama3

The M3 Max is a powerhouse, but how does it handle different models and quantization levels? Here are the prompt processing and token generation speeds for various configurations:

| Model | Quantization | Prompt processing (tokens/s) | Generation (tokens/s) |
|---|---|---|---|
| Llama2 7B | F16 | 779.17 | 25.09 |
| Llama2 7B | Q8_0 | 757.64 | 42.75 |
| Llama2 7B | Q4_0 | 759.7 | 66.31 |
| Llama3 8B | Q4_K_M | 678.04 | 50.74 |
| Llama3 8B | F16 | 751.49 | 22.39 |
| Llama3 70B | Q4_K_M | 62.88 | 7.53 |

Token generation speed is a crucial metric for evaluating LLM performance. It tells us how fast the model writes its output, while prompt processing speed tells us how fast it reads your input; together they determine how responsive your applications feel.
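To see how the two throughput columns translate into responsiveness, here is a rough latency estimate in Python. It is a sketch that assumes throughput stays constant across a request; the prompt length (1,000 tokens) and reply length (300 tokens) are hypothetical values chosen for illustration, and `response_time_s` is a hypothetical helper, not part of any benchmark tool.

```python
# Rough request latency from the table's two throughput columns (M3 Max, Llama3 8B).
# Assumption: throughput is constant for the whole request. Real speeds vary with
# context length and settings, so treat the result as a ballpark figure.

def response_time_s(prompt_tokens: int, output_tokens: int,
                    pp_tok_s: float, tg_tok_s: float) -> float:
    """Seconds to read the prompt (prefill) plus seconds to generate the reply."""
    prefill = prompt_tokens / pp_tok_s   # prompt processing phase
    decode = output_tokens / tg_tok_s    # one-token-at-a-time generation phase
    return prefill + decode

# Hypothetical request: 1,000 prompt tokens, 300 generated tokens.
q4km = response_time_s(1000, 300, pp_tok_s=678.04, tg_tok_s=50.74)
f16 = response_time_s(1000, 300, pp_tok_s=751.49, tg_tok_s=22.39)
print(f"Q4_K_M: {q4km:.1f} s, F16: {f16:.1f} s")  # Q4_K_M: 7.4 s, F16: 14.7 s
```

With these inputs, reading the prompt takes under 1.5 seconds in both cases; nearly all of the gap comes from generation speed.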

As you can see, the M3 Max delivers impressive token generation speeds for Llama2 models, especially at the Q4_0 quantization level: 66.31 tokens/second, compared with 25.09 at F16. This means you can enjoy speedy responses and a smoother experience when using Llama2 models.
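The effect of quantization can be read straight off the table by dividing each quantized generation speed by the same model's F16 speed. A short Python sketch using only the numbers above:

```python
# Generation throughput (tokens/s) on the M3 Max, copied from the table above.
generation = {
    ("Llama2 7B", "F16"): 25.09,
    ("Llama2 7B", "Q8_0"): 42.75,
    ("Llama2 7B", "Q4_0"): 66.31,
    ("Llama3 8B", "F16"): 22.39,
    ("Llama3 8B", "Q4_K_M"): 50.74,
}

def speedup_vs_f16(model: str, quant: str) -> float:
    """How many times faster a quantized variant generates than the same model at F16."""
    return generation[(model, quant)] / generation[(model, "F16")]

for model, quant in [("Llama2 7B", "Q8_0"), ("Llama2 7B", "Q4_0"), ("Llama3 8B", "Q4_K_M")]:
    print(f"{model} {quant}: {speedup_vs_f16(model, quant):.2f}x faster than F16")
# Llama2 7B Q8_0: 1.70x faster than F16
# Llama2 7B Q4_0: 2.64x faster than F16
# Llama3 8B Q4_K_M: 2.27x faster than F16
```

Prompt processing barely moves by comparison, and for Llama3 8B it is slightly lower at Q4_K_M than at F16.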

Performance Analysis: Model and Device Comparison

Llama3 8B on M3 Max

The Apple M3 Max is a powerhouse, but it's not the only player in the game. Let's compare its performance with other devices and configurations:

Note: There is no M3 Max data for Llama3 70B at F16 precision, so that configuration can't be compared.
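Memory is the other half of the quantization story. The sketch below estimates weight storage for nominal 8B and 70B parameter counts, assuming the GGML/llama.cpp block layouts that the format names Q8_0 and Q4_0 come from. It ignores the KV cache and runtime buffers, so real usage is higher. The 128 GB figure is the largest unified-memory configuration of the M3 Max.

```python
# Approximate weight storage per format. Bytes per weight assume the GGML/llama.cpp
# block layouts these format names come from:
#   F16  : 2 bytes per weight
#   Q8_0 : 32 int8 values + one 2-byte scale = 34 bytes per 32 weights
#   Q4_0 : 32 4-bit values (16 bytes) + one 2-byte scale = 18 bytes per 32 weights
# Parameter counts are nominal; the KV cache and runtime buffers are not included.

BYTES_PER_WEIGHT = {"F16": 2.0, "Q8_0": 34 / 32, "Q4_0": 18 / 32}
GIB = 2 ** 30
M3_MAX_MEMORY_GIB = 128  # largest unified-memory configuration of the M3 Max

def weight_gib(params: float, fmt: str) -> float:
    """Size of the model weights alone, in GiB."""
    return params * BYTES_PER_WEIGHT[fmt] / GIB

for name, params in [("Llama3 8B", 8e9), ("Llama3 70B", 70e9)]:
    for fmt in BYTES_PER_WEIGHT:
        size = weight_gib(params, fmt)
        verdict = "fits in" if size < M3_MAX_MEMORY_GIB else "exceeds"
        print(f"{name:<10} {fmt:<5} {size:6.1f} GiB ({verdict} {M3_MAX_MEMORY_GIB} GB)")
```

At F16 the 70B weights alone come to about 130 GiB (140 GB), more than the M3 Max's maximum memory, while Q4_0 brings them down to roughly 37 GiB. Q4_K_M is left out because it mixes several block types; its files end up slightly larger than Q4_0.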

Performance Comparison:

- Llama3 8B (Q4_K_M): The M3 Max generates 50.74 tokens/second and processes prompts at 678.04 tokens/second with Q4_K_M quantization. That is comfortably fast for responsive, interactive applications.

- Llama3 8B (F16): Generation drops to 22.39 tokens/second, less than half the Q4_K_M rate, while prompt processing holds at 751.49 tokens/second. The M3 Max can run the full-precision model, but quantization pays off for chat-style use.

Quantization Magic:

Quantization is a technique for compressing LLMs by reducing the precision of numbers. This can make them smaller and faster, without significantly sacrificing accuracy.

Think of it like this: Imagine you're trying to describe a color to someone using only a limited number of words. You'd likely use words like "red," "blue," or "green," instead of describing every shade with pinpoint accuracy. In the same way, quantization reduces the precision of numbers in an LLM to make it more efficient.
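To make the analogy concrete, here is a minimal Python sketch of symmetric block quantization modeled on GGML's Q8_0 format: each block of 32 weights keeps one scale plus 32 signed 8-bit integers. It is an illustration, not llama.cpp's actual code; for simplicity the scale stays a Python float, whereas Q8_0 stores it as a 16-bit float.

```python
import math

# Minimal sketch of symmetric block quantization in the style of GGML's Q8_0.
# Each block of 32 weights becomes one scale plus 32 signed 8-bit integers.
# Illustration only: real Q8_0 stores the scale as a 16-bit float and is written in C.
BLOCK_SIZE = 32

def quantize_block(block: list[float]) -> tuple[float, list[int]]:
    amax = max(abs(x) for x in block)
    scale = amax / 127 if amax > 0 else 1.0  # largest magnitude maps to +/-127
    return scale, [round(x / scale) for x in block]  # ints in [-127, 127]

def dequantize_block(scale: float, q: list[int]) -> list[float]:
    return [scale * v for v in q]

def quantize(weights: list[float]) -> list[tuple[float, list[int]]]:
    return [quantize_block(weights[i:i + BLOCK_SIZE])
            for i in range(0, len(weights), BLOCK_SIZE)]

# Usage: quantize 64 made-up weights, then restore them and measure the error.
weights = [0.05 * math.sin(0.37 * i) for i in range(64)]
blocks = quantize(weights)
restored = [x for scale, q in blocks for x in dequantize_block(scale, q)]
max_err = max(abs(a - b) for a, b in zip(weights, restored))
print(f"{len(blocks)} blocks, max round-trip error {max_err:.6f}")
```

Each weight now costs one byte instead of two (plus a shared scale per block), and the rounding error is at most half a scale step. Formats like Q4_0 apply the same idea with 4-bit integers, trading more error for roughly half the storage again.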

Llama3 70B on M3 Max

The M3 Max is a standout performer, but it's not a magic bullet for every model. Let's look at its performance with Llama3 70B:

- Llama3 70B (Q4_K_M): The M3 Max runs Llama3 70B with Q4_K_M quantization at 7.53 tokens/second for generation and 62.88 tokens/second for prompt processing. That is usable for a model this large, but it is roughly one-seventh of Llama3 8B's generation speed, and it still lags behind dedicated GPUs like the A100, reflecting the computational demands of larger models.

Practical Recommendations: Use Cases and Workarounds

Optimizing for Performance

- Quantization: Use quantization to make your models smaller and faster, especially on the M3 Max. It's like giving your model a diet and exercise plan, making it leaner and meaner!

- Model Selection: Choose the right model for your needs. For everyday tasks like drafting or summarizing text, a smaller model like Llama2 7B or Llama3 8B is a good choice. For more complex tasks, you might need a larger model like Llama3 70B, at the cost of much slower generation (7.53 vs. 50.74 tokens/second at Q4_K_M on the M3 Max).

- Hardware Acceleration: Use a powerful machine like the M3 Max, whose integrated GPU shares unified memory with the CPU, for faster prompt processing and token generation.

- Model Pruning: Consider removing weights or structures that contribute little to the model's output. This is like decluttering your code: less to carry, with little loss in function.
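Magnitude pruning is one common way to remove less important parts of a model: zero out the weights with the smallest absolute values. Here is a minimal Python sketch of unstructured magnitude pruning on a single list of weights; `magnitude_prune` is a hypothetical helper for illustration, not a library function.

```python
# Minimal sketch of unstructured magnitude pruning on one list of weights:
# zero out the fraction `sparsity` of weights with the smallest absolute values.
# Real workflows prune per layer and usually fine-tune afterwards; omitted here.

def magnitude_prune(weights: list[float], sparsity: float) -> list[float]:
    if not 0.0 <= sparsity < 1.0:
        raise ValueError("sparsity must be in [0, 1)")
    n_prune = int(len(weights) * sparsity)
    # Indices sorted from smallest to largest magnitude; prune the first n_prune.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    pruned = set(order[:n_prune])
    return [0.0 if i in pruned else w for i, w in enumerate(weights)]

layer = [0.8, -0.05, 0.3, 0.01, -0.6, 0.02, -0.25, 0.4]
print(magnitude_prune(layer, sparsity=0.5))
# [0.8, 0.0, 0.3, 0.0, -0.6, 0.0, 0.0, 0.4]
```

Zeroed weights only speed up inference if the runtime uses sparse kernels, or if structured pruning removes whole rows, heads, or layers; with dense kernels a pruned model runs at roughly the same speed. Pruned models are also usually fine-tuned afterwards to recover accuracy.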

Use Cases for Apple M3 Max

The M3 Max is an ideal companion for a range of LLM use cases:

- Content Creation: Generate creative content like articles, poems, scripts, and even musical pieces. Unleash your inner artist!

- Language Translation: Translate text between multiple languages with ease. This can be incredibly useful for global businesses and communication.

- Code Generation: Generate code in various programming languages. A developer's dream come true.

- Chatbots and Assistants: Build intelligent chatbots and assistants that can hold natural conversations and provide helpful support.

FAQ

What are LLMs?

LLMs are powerful AI models that can understand and generate human-like text. They are trained on massive amounts of data, allowing them to perform a wide range of tasks, from writing stories to summarizing documents.

What is Quantization?

Quantization is a technique for compressing LLMs by reducing the precision of numbers. This can make them smaller and faster without significantly sacrificing accuracy.

What are the benefits of using the M3 Max for LLMs?

The M3 Max offers powerful processing, an integrated GPU, and up to 128 GB of unified memory, making it well suited to running LLMs locally, even models as large as Llama3 70B with Q4_K_M quantization.

What other devices can run LLMs effectively?

Other devices capable of running LLMs effectively include high-end GPUs like the NVIDIA A100 and A10, as well as cloud computing platforms like AWS and Google Cloud.

Keywords

LLM, large language model, Llama3, Llama2, Apple M3 Max, token generation speed, quantization, F16, Q4_K_M, Q8_0, Q4_0, performance, device comparison, use cases, practical recommendations, content creation, language translation, code generation, chatbots, AI, machine learning, deep learning, performance analysis.