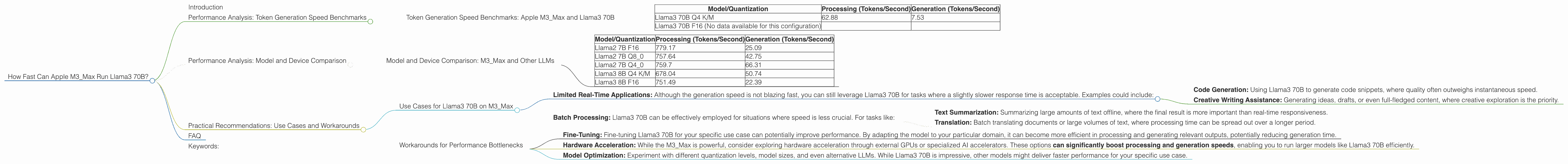

How Fast Can Apple M3 Max Run Llama3 70B?

Introduction

The world of Large Language Models (LLMs) is rapidly evolving, with new models and applications emerging constantly. One of the key factors determining LLM performance is the hardware it runs on. For developers who want to run LLMs locally, understanding how different devices handle various models is crucial. This article looks at the performance of the Apple M3_Max chip, focusing specifically on its ability to run the Llama3 70B model. Along the way we'll cover token generation speeds, quantization, and the potential of local LLMs.

Performance Analysis: Token Generation Speed Benchmarks

Token generation speed is a critical metric for evaluating LLM performance, especially in the context of real-time applications. Let's see how the M3_Max handles Llama3 70B.

Token Generation Speed Benchmarks: Apple M3_Max and Llama3 70B

The data we'll analyze comes from the M3_Max configurations, focusing on the performance of Llama3 70B. We'll examine two key areas: processing and text generation.

Processing quantifies how fast the LLM can process the input prompt. Generation measures how quickly the model creates the output text.

| Model/Quantization | Processing (Tokens/Second) | Generation (Tokens/Second) |
|---|---|---|
| Llama3 70B Q4_K_M | 62.88 | 7.53 |
| Llama3 70B F16 | No data available | No data available |

Analyzing the Data

Llama3 70B Q4_K_M: The M3_Max processes the prompt at about 62.88 tokens per second, but generates text at only 7.53 tokens per second, roughly eight times slower. This gap is typical of LLM inference: the tokens of a prompt can be processed in parallel, while output tokens are produced one at a time, and each new token requires reading the model's weights again.

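Llama3 70B F16 (No data available): Unfortunately, we don't have data for this configuration. One plausible reason is memory: at 16 bits per weight, a 70B-parameter model needs about 140 GB for the weights alone, which is more than the 128 GB of unified memory available on the largest M3_Max configuration. It is also possible that this combination simply was not tested.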
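To get a feel for what these rates mean in practice, here is a minimal sketch that turns the two benchmark figures into a rough end-to-end time estimate. The function name `estimate_seconds` and the example request size are assumptions made for illustration, and the estimate ignores model load time and any slowdown at long context lengths.

```python
# Benchmark figures from the table above (tokens per second),
# taken from the 4-bit quantized Llama3 70B row.
PROMPT_TPS = 62.88      # prompt processing
GENERATION_TPS = 7.53   # text generation


def estimate_seconds(prompt_tokens: int, output_tokens: int,
                     prompt_tps: float = PROMPT_TPS,
                     generation_tps: float = GENERATION_TPS) -> float:
    """Rough end-to-end time: prompt phase plus generation phase."""
    return prompt_tokens / prompt_tps + output_tokens / generation_tps


if __name__ == "__main__":
    # Hypothetical request: 1,000-token prompt, 300-token answer.
    total = estimate_seconds(1000, 300)
    print(f"Estimated time: {total:.1f} s")
```

For this hypothetical request, the prompt phase takes about 16 seconds and the generation phase about 40 seconds, so generation dominates even though the answer is much shorter than the prompt.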

Performance Analysis: Model and Device Comparison

While our focus remains on the M3_Max and Llama3 70B, let's quickly glance at some other models and their performance on the M3_Max. This comparison will provide a broader context for the challenges and opportunities in local LLM deployment.

Model and Device Comparison: M3_Max and Other LLMs

| Model/Quantization | Processing (Tokens/Second) | Generation (Tokens/Second) |
|---|---|---|
| Llama2 7B F16 | 779.17 | 25.09 |
| Llama2 7B Q8_0 | 757.64 | 42.75 |
| Llama2 7B Q4_0 | 759.7 | 66.31 |
| Llama3 8B Q4_K_M | 678.04 | 50.74 |
| Llama3 8B F16 | 751.49 | 22.39 |

Interpreting the Comparison

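Smaller Models: The M3_Max runs smaller models far faster than Llama3 70B. At comparable 4-bit quantization, Llama3 8B generates 50.74 tokens per second versus 7.53 for Llama3 70B, and processes prompts at 678.04 versus 62.88 tokens per second. This is because smaller models have fewer weights to read and fewer operations to perform for every token.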

Quantization and Performance: Comparing Llama2 7B across quantization levels (F16, Q8_0, Q4_0) shows an interesting trend. Processing speed barely changes: F16 is actually the fastest at 779.17 tokens per second, with Q8_0 and Q4_0 within about 3% of it. Generation speed, however, rises sharply as precision drops, from 25.09 (F16) to 42.75 (Q8_0) to 66.31 (Q4_0) tokens per second. The tradeoff is output quality, since lower-precision weights lose some accuracy, so the right level depends on your specific application and resource constraints.

Limitations of the Data: The benchmark data isn't exhaustive. The absence of specific configurations, like Llama3 70B F16, prevents a complete comparison.
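The quantization trend in the Llama2 7B rows is easier to see as ratios against F16. The following is a small sketch using only the numbers from the comparison table; the helper name `relative_to_f16` is made up for this example.

```python
# (processing t/s, generation t/s) for Llama2 7B, copied from the table above.
LLAMA2_7B = {
    "F16": (779.17, 25.09),
    "Q8_0": (757.64, 42.75),
    "Q4_0": (759.70, 66.31),
}


def relative_to_f16(results):
    """Return {quant: (processing ratio, generation ratio)} versus F16."""
    base_pp, base_tg = results["F16"]
    return {q: (pp / base_pp, tg / base_tg) for q, (pp, tg) in results.items()}


if __name__ == "__main__":
    for quant, (pp, tg) in relative_to_f16(LLAMA2_7B).items():
        print(f"{quant:5s} processing x{pp:.2f}  generation x{tg:.2f}")
```

Processing stays within a few percent of F16 for both quantized variants, while generation is roughly 1.7 times faster with Q8_0 and roughly 2.6 times faster with Q4_0.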

Practical Recommendations: Use Cases and Workarounds

Armed with the available performance data, let's explore practical recommendations for deploying Llama3 70B locally. Remember, the real-world performance of an LLM depends heavily on the specific use case.

Use Cases for Llama3 70B on M3_Max

Limited Real-Time Applications: At about 7.5 tokens per second, generation is not blazing fast, but you can still use Llama3 70B for tasks where a slower response is acceptable. Examples could include:

- Code Generation: Using Llama3 70B to generate code snippets, where quality often outweighs instantaneous speed.

- Creative Writing Assistance: Generating ideas, drafts, or even full-fledged content, where creative exploration is the priority.

Batch Processing: Llama3 70B also works well for offline jobs where speed is less crucial, such as:

- Text Summarization: Summarizing large amounts of text offline, where the final result is more important than real-time responsiveness.

- Translation: Batch translating documents or large volumes of text, where processing time can be spread out over a longer period.

Workarounds for Performance Bottlenecks

Fine-Tuning: Fine-tuning Llama3 70B for your specific use case does not make each token generate faster, but it can reduce the work needed per request. A model adapted to your domain may need shorter prompts and may produce more focused outputs, which can reduce the total response time.

Hardware Acceleration: The M3_Max is powerful, but note that Apple silicon Macs do not support external GPUs. If you need more speed than the M3_Max provides, consider running the model on a separate machine with dedicated GPUs or specialized AI accelerators. These options can significantly boost processing and generation speeds for larger models like Llama3 70B.

Model Optimization: Experiment with different quantization levels, model sizes, and even alternative LLMs. While Llama3 70B is impressive, other models might deliver faster performance for your specific use case.

FAQ

Q: What is Quantization?

A: Imagine you have a large image file and you want to send it to a friend over a slow internet connection. You could compress the image file, reducing its size while maintaining most of the detail. Quantization works similarly for LLMs: it stores each model parameter with fewer bits (for example, 4 or 8 instead of 16) without sacrificing too much accuracy. This makes the model much smaller and, because less data has to be read per token, usually faster at generating text.
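To make the idea concrete, here is a deliberately simplified sketch of 4-bit quantization for one small block of weights: the block is stored as small integers plus a single scale factor. This is an illustration only. It is not the exact scheme behind the Q4_0 or Q4_K_M formats in the benchmarks, and the function names and sample values are made up for the example.

```python
def quantize_block(weights, bits=4):
    """Symmetric absmax quantization of one block of floats."""
    qmax = 2 ** (bits - 1) - 1          # 7 for 4-bit signed values
    amax = max(abs(w) for w in weights)
    scale = amax / qmax if amax > 0 else 1.0
    # Round each weight to the nearest integer step and clamp to the range.
    q = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return scale, q


def dequantize_block(scale, q):
    """Recover approximate floats from the integers and the shared scale."""
    return [scale * v for v in q]


if __name__ == "__main__":
    block = [0.12, -0.50, 0.33, 0.07, -0.21, 0.44, -0.02, 0.29]
    scale, q = quantize_block(block)
    restored = dequantize_block(scale, q)
    worst = max(abs(a - b) for a, b in zip(block, restored))
    print("integers:", q)
    print(f"scale: {scale:.4f}, worst error: {worst:.4f}")
```

Each restored weight differs from the original by at most half a quantization step, which is the "small loss of detail" from the image analogy.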

Q: How does M3_Max compare to other chips like GPUs?

A: M3_Max is a powerful processor from Apple, specifically designed for demanding tasks like video editing and image processing. However, specialized GPUs, like those from Nvidia or AMD, are often better at handling the complex calculations involved in LLM inference. While M3_Max can handle LLMs, for even better performance, consider using a specialized GPU.

Q: Can I run Llama3 70B on my laptop?

A: It depends! If your laptop has a capable GPU, especially a modern one with enough memory, you might be able to run Llama3 70B. However, it is more likely that you will need a more powerful desktop machine, especially if you are looking for fast generation times.
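Whether a model fits in memory is the first thing to check, and it can be estimated with simple arithmetic. The sketch below assumes a nominal 70 billion parameters and assumed average bits per weight for each format (16 for F16, and 8.5 and 4.5 for Q8_0 and Q4_0, which allows for one 16-bit scale per block of 32 weights). It counts only the weights, not the KV cache or other runtime overhead.

```python
def weights_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in gigabytes (10**9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


# Assumed average bits per weight, including per-block scale overhead.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}

if __name__ == "__main__":
    n_params = 70e9  # nominal parameter count for a "70B" model
    for name, bpw in BITS_PER_WEIGHT.items():
        print(f"{name:5s} ~{weights_gb(n_params, bpw):.1f} GB")
```

Under these assumptions the weights come to about 140 GB at F16, about 74 GB at Q8_0, and about 39 GB at Q4_0, so only a machine with well over 40 GB of memory available to the GPU is a realistic candidate for a 4-bit 70B model.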

Q: Should I use Llama3 70B for everything?

A: While Llama3 70B is a remarkable model, it's not always the best choice. Consider the demands of your application and resource constraints. Smaller models with a faster generation speed might be more suitable for real-time interactions. For more complex tasks, like scientific research or specialized language-based applications, Llama3 70B can be a powerful asset.
