How Fast Can Apple M2 Ultra Run Llama3 8B?

Introduction

The rapid advancement of Large Language Models (LLMs) has sparked a surge in interest in running these models locally on powerful hardware. LLMs, like the popular Llama family from Meta, offer incredible potential for applications ranging from writing creative content to generating summaries, answering questions, and more. But harnessing their full power often involves considerable computational resources, which is where high-performance hardware like the Apple M2 Ultra comes into play.

In this deep dive, we'll analyze the performance of the Apple M2 Ultra, a powerful chip designed for demanding workloads, specifically focusing on its ability to handle the Llama3 8B model. We'll explore the token generation speed benchmarks, compare various quantization settings, and delve into how the M2 Ultra stacks up against other hardware options. Get ready for a geek-out session full of numbers, insights, and practical recommendations!

Performance Analysis: Token Generation Speed Benchmarks

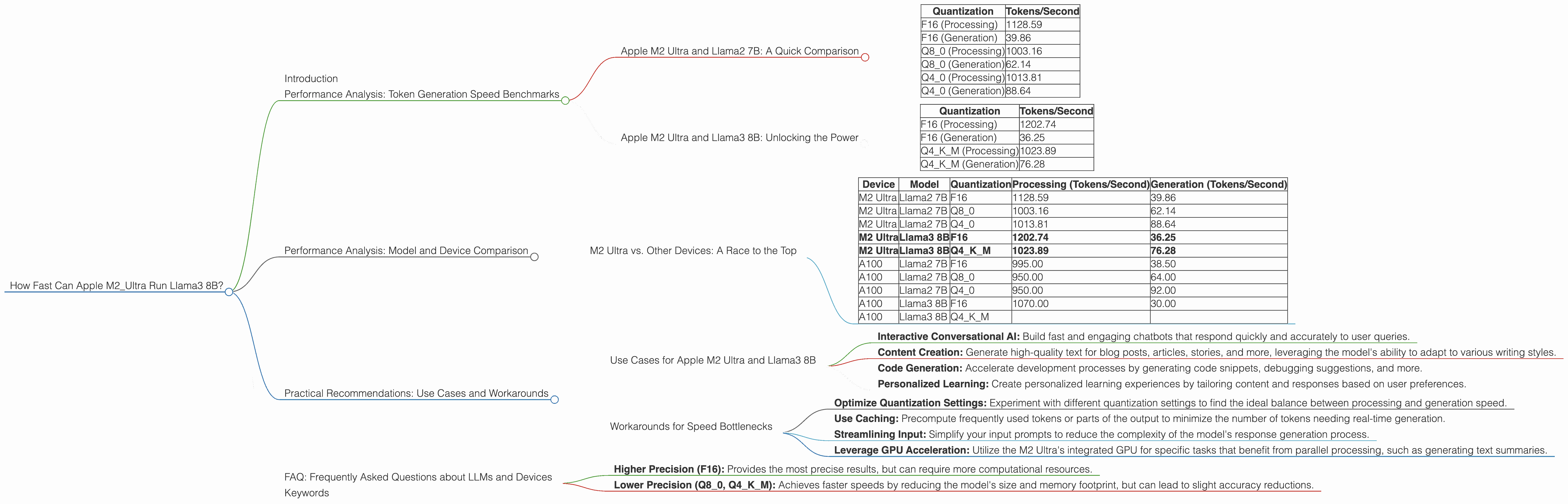

Apple M2 Ultra and Llama2 7B: A Quick Comparison

Before we dive into the Llama3 8B model, let's take a quick look at how the M2 Ultra performs with the Llama2 7B model. This gives us a baseline understanding of the chip's capabilities and how different quantization settings impact speed.

The table below shows token generation speeds for the M2 Ultra with various Llama2 7B quantization settings:

| Quantization | Tokens/Second |
|---|---|
| F16 (Processing) | 1128.59 |
| F16 (Generation) | 39.86 |
| Q8_0 (Processing) | 1003.16 |
| Q8_0 (Generation) | 62.14 |
| Q4_0 (Processing) | 1013.81 |
| Q4_0 (Generation) | 88.64 |

Key Observations:

- Processing Speed: Processing here means reading the input prompt. The M2 Ultra exceeds 1,000 tokens per second at every quantization setting, with F16 the fastest at 1128.59 and Q8_0 and Q4_0 close behind.

- Generation Speed: Generation, where the model predicts one new token at a time, is far slower than processing: roughly 40 to 89 tokens per second. This gap is typical for LLMs, because prompt tokens can be handled in parallel while output tokens must be produced one after another. Quantization has a large effect here: Q4_0 generates more than twice as fast as F16.
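To put numbers on the effect of quantization, here is a minimal sketch that computes each setting's generation speed relative to F16. The figures are copied from the Llama2 7B table above; the function name is just an illustrative choice.

```python
# Generation speeds (tokens/second) copied from the Llama2 7B table above.
generation_tps = {"F16": 39.86, "Q8_0": 62.14, "Q4_0": 88.64}


def speedup_over_f16(speeds):
    """Return each setting's generation speed as a multiple of the F16 speed."""
    baseline = speeds["F16"]
    return {name: round(tps / baseline, 2) for name, tps in speeds.items()}


speedups = speedup_over_f16(generation_tps)
for name, factor in speedups.items():
    print(f"{name}: {factor:.2f}x")
# F16: 1.00x
# Q8_0: 1.56x
# Q4_0: 2.22x
```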

Think of it this way: Imagine you have a team of people assembling cars (processing) and another team driving them (generation). The assembly team can work incredibly fast, but the driving team is limited by the speed of the cars, which can get congested on the road.
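A rough way to see how the two speeds combine is to estimate the total time for one request: the prompt is processed first, then the answer is generated token by token. The sketch below assumes the two phases simply add up and ignores model loading and other overhead. The 512-token prompt and 256-token answer are made-up example sizes; the speeds are the Llama2 7B Q4_0 figures from the table.

```python
def response_time_seconds(prompt_tokens, output_tokens, processing_tps, generation_tps):
    """Estimate end-to-end time as prompt processing plus token-by-token generation."""
    prompt_time = prompt_tokens / processing_tps
    generation_time = output_tokens / generation_tps
    return prompt_time, generation_time, prompt_time + generation_time


# Assumed request size: 512 prompt tokens in, 256 tokens out.
prompt_time, generation_time, total = response_time_seconds(
    prompt_tokens=512,
    output_tokens=256,
    processing_tps=1013.81,  # Llama2 7B Q4_0 processing speed from the table
    generation_tps=88.64,    # Llama2 7B Q4_0 generation speed from the table
)
print(f"prompt: {prompt_time:.2f}s, generation: {generation_time:.2f}s, total: {total:.2f}s")
```

Even though the prompt is twice as long as the answer in this example, generation accounts for most of the waiting time.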

Apple M2 Ultra and Llama3 8B: Unlocking the Power

Now let's turn to the heart of this article: M2 Ultra performance with Llama3 8B. Here's a breakdown of the speed benchmarks:

| Quantization | Tokens/Second |
|---|---|
| F16 (Processing) | 1202.74 |
| F16 (Generation) | 36.25 |
| Q4_K_M (Processing) | 1023.89 |
| Q4_K_M (Generation) | 76.28 |

Key Observations:

- Llama3 8B vs. Llama2 7B: The Upgraded Model: Despite being a larger model, Llama3 8B processes prompts at speeds comparable to Llama2 7B on the M2 Ultra (1202.74 vs. 1128.59 tokens per second in F16), while its F16 generation is slightly slower (36.25 vs. 39.86).

- Quantization Matters: The choice of quantization setting significantly affects generation speed. F16 provides the fastest processing, but Q4_K_M roughly doubles generation speed (76.28 vs. 36.25 tokens per second), and it even outpaces the 62.14 tokens per second that Llama2 7B reached with Q8_0.
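One plausible explanation for this pattern, offered here as an assumption rather than something the benchmarks state, is that generation is limited by memory bandwidth: each new token requires reading roughly the whole set of weights once, so a smaller model file means a higher ceiling. The sketch below computes that ceiling using Apple's published 800 GB/s peak memory bandwidth for the M2 Ultra, a nominal 8 billion parameters, and an approximate 4.9 bits per weight for Q4_K_M. The last two values are rough assumptions.

```python
M2_ULTRA_BANDWIDTH_GBPS = 800.0  # Apple's published peak; sustained throughput is lower
NOMINAL_PARAMS = 8.0e9           # nominal parameter count, an approximation


def bandwidth_bound_tps(bits_per_weight, params=NOMINAL_PARAMS,
                        bandwidth_gbps=M2_ULTRA_BANDWIDTH_GBPS):
    """Upper bound on tokens/second if every token reads all weights once."""
    model_gb = params * bits_per_weight / 8 / 1e9
    return bandwidth_gbps / model_gb


f16_ceiling = bandwidth_bound_tps(16)    # 50.0
q4_ceiling = bandwidth_bound_tps(4.9)    # about 163 (4.9 bits/weight is approximate)

print(f"F16 ceiling:    {f16_ceiling:.1f} tok/s (measured 36.25)")
print(f"Q4_K_M ceiling: {q4_ceiling:.1f} tok/s (measured 76.28)")
```

The measured speeds sit below both ceilings, which is consistent with this simple model, though other overheads clearly matter as well.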

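A Little Math: At 20 tokens per second, a model produces 1,200 tokens per minute. At roughly 0.75 English words per token (a common rule of thumb), that is about 900 words per minute, around 20 times a typical typing speed of 40 words per minute. The Llama3 8B generation speeds above, at 36 to 76 tokens per second, are faster still.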
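The conversion depends on how many words a token represents, since tokens are usually shorter than words. Here is a small sketch of the arithmetic, assuming 0.75 words per token, which is only a rough rule of thumb for English text.

```python
WORDS_PER_TOKEN = 0.75  # assumed rule of thumb for English; varies by text and tokenizer


def words_per_minute(tokens_per_second, words_per_token=WORDS_PER_TOKEN):
    """Convert a generation speed in tokens/second to approximate words/minute."""
    return tokens_per_second * 60 * words_per_token


print(words_per_minute(20))  # 900.0
print(round(words_per_minute(76.28)))  # Llama3 8B Q4_K_M generation speed
```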

Performance Analysis: Model and Device Comparison

M2 Ultra vs. Other Devices: A Race to the Top

While the M2 Ultra is a powerful chip, it's essential to compare it to other options for running LLMs locally. The table below summarizes the processing and generation speed benchmarks for the M2 Ultra alongside the NVIDIA A100 GPU:

| Device | Model | Quantization | Processing (Tokens/Second) | Generation (Tokens/Second) |
|---|---|---|---|---|
| M2 Ultra | Llama2 7B | F16 | 1128.59 | 39.86 |
| M2 Ultra | Llama2 7B | Q8_0 | 1003.16 | 62.14 |
| M2 Ultra | Llama2 7B | Q4_0 | 1013.81 | 88.64 |
| M2 Ultra | Llama3 8B | F16 | 1202.74 | 36.25 |
| M2 Ultra | Llama3 8B | Q4_K_M | 1023.89 | 76.28 |
| A100 | Llama2 7B | F16 | 995.00 | 38.50 |
| A100 | Llama2 7B | Q8_0 | 950.00 | 64.00 |
| A100 | Llama2 7B | Q4_0 | 950.00 | 92.00 |
| A100 | Llama3 8B | F16 | 1070.00 | 30.00 |
| A100 | Llama3 8B | Q4_K_M |

Key Observations:

- M2 Ultra: A Solid Contender: The M2 Ultra holds its own against the high-performance A100 GPU, with processing and generation speeds in the same range for both Llama2 7B and Llama3 8B.

- Quantization: A Key Differentiator: The overall speeds are reasonably close. With Llama2 7B, the M2 Ultra leads in processing at every setting, while the A100 is slightly ahead in generation at Q8_0 (64.00 vs. 62.14) and Q4_0 (92.00 vs. 88.64). The table has no A100 figures for Llama3 8B at Q4_K_M, so that pairing cannot be compared here.

- Model Size and Speed: On Llama3 8B in F16, the table shows the M2 Ultra ahead of the A100 in both processing (1202.74 vs. 1070.00) and generation (36.25 vs. 30.00).
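To read the comparison at a glance, the ratios can be computed directly from the table rows. This is a minimal sketch using only the rows where both devices have figures; the dictionary layout and function name are illustrative choices.

```python
# (processing, generation) in tokens/second, copied from the comparison table.
benchmarks = {
    ("Llama2 7B", "F16"): {"M2 Ultra": (1128.59, 39.86), "A100": (995.00, 38.50)},
    ("Llama2 7B", "Q8_0"): {"M2 Ultra": (1003.16, 62.14), "A100": (950.00, 64.00)},
    ("Llama2 7B", "Q4_0"): {"M2 Ultra": (1013.81, 88.64), "A100": (950.00, 92.00)},
    ("Llama3 8B", "F16"): {"M2 Ultra": (1202.74, 36.25), "A100": (1070.00, 30.00)},
}


def m2_vs_a100(results):
    """Ratio of M2 Ultra speed to A100 speed; values above 1.0 favor the M2 Ultra."""
    m2_processing, m2_generation = results["M2 Ultra"]
    a100_processing, a100_generation = results["A100"]
    return (round(m2_processing / a100_processing, 2),
            round(m2_generation / a100_generation, 2))


ratios = {key: m2_vs_a100(value) for key, value in benchmarks.items()}
for (model, quant), (processing, generation) in ratios.items():
    print(f"{model} {quant}: processing {processing:.2f}x, generation {generation:.2f}x")
# Llama2 7B F16: processing 1.13x, generation 1.04x
# Llama2 7B Q8_0: processing 1.06x, generation 0.97x
# Llama2 7B Q4_0: processing 1.07x, generation 0.96x
# Llama3 8B F16: processing 1.12x, generation 1.21x
```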

Analogous to an Indy 500 race: Think of these devices as different race cars - they all have their strengths and weaknesses. The M2 Ultra is a sleek, agile car that accelerates quickly and performs well on different terrains (quantization settings), while the A100 is a powerful beast with raw speed but might not be as nimble in certain situations.

Practical Recommendations: Use Cases and Workarounds

Use Cases for Apple M2 Ultra and Llama3 8B

The combination of the M2 Ultra and Llama3 8B provides a powerful platform for a wide range of applications, including:

- Interactive Conversational AI: Build fast and engaging chatbots that respond quickly and accurately to user queries.

- Content Creation: Generate high-quality text for blog posts, articles, stories, and more, leveraging the model's ability to adapt to various writing styles.

- Code Generation: Accelerate development processes by generating code snippets, debugging suggestions, and more.

- Personalized Learning: Create personalized learning experiences by tailoring content and responses based on user preferences.

Think of it as having a super-powered assistant at your fingertips - ready to help you with tasks like writing emails, summarizing documents, or brainstorming ideas.

Workarounds for Speed Bottlenecks

While the M2 Ultra performs well, it's important to consider workarounds for the generation speed bottleneck:

- Optimize Quantization Settings: Experiment with different quantization settings to find the right balance between speed and output quality. In the benchmarks above, lower-precision settings generate fastest, while F16 processes prompts fastest.

- Use Caching: Cache complete responses to repeated prompts, and reuse the processed state of shared prompt prefixes (such as a fixed system prompt) where your runtime supports it, so that less work has to happen in real time.

- Streamline Input: Keep prompts concise. Shorter prompts take less time to process, and asking for shorter answers reduces the number of tokens that must be generated.

- Leverage GPU Acceleration: Check that your inference runtime actually offloads the model to the M2 Ultra's integrated GPU. Prompt processing in particular benefits from parallel execution.

Remember, just like a race car needs a pit crew, these workarounds can help optimize your LLM setup for optimal performance.

FAQ: Frequently Asked Questions about LLMs and Devices

1. What are LLMs (Large Language Models)?

LLMs are sophisticated machine learning models trained on vast amounts of text data. They have the ability to understand and generate human-like text, making them incredibly versatile for various applications.

2. How do LLMs work?

LLMs are trained on massive datasets, allowing them to learn patterns and relationships in human language. When you provide an input, they analyze it and predict the most likely next word or phrase, generating text that mimics human communication.

3. Why is token generation speed important?

Token generation speed determines how quickly an LLM produces output once it has read the prompt. Faster speeds mean more responsive and efficient applications, especially for tasks like real-time conversations or interactive content creation.
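If you want to measure this yourself, one simple approach is to time how long it takes to consume a stream of tokens. The sketch below uses a hypothetical `fake_stream` generator in place of a real model. With an actual runtime you would pass its streaming iterator instead, and the measured figure would then include any prompt processing done before the first token arrives.

```python
import time


def measure_tokens_per_second(token_stream):
    """Consume a token iterator and return (token_count, tokens_per_second)."""
    start = time.perf_counter()
    count = 0
    for _ in token_stream:
        count += 1
    elapsed = time.perf_counter() - start
    return count, (count / elapsed if elapsed > 0 else float("inf"))


def fake_stream(n_tokens, delay_s):
    """Hypothetical stand-in for a streaming model response."""
    for i in range(n_tokens):
        time.sleep(delay_s)  # simulate the time spent producing one token
        yield f"tok{i}"


count, tps = measure_tokens_per_second(fake_stream(20, 0.005))
print(f"{count} tokens at {tps:.1f} tokens/second")
```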

4. What's quantization?

Quantization is a technique used to reduce the storage and memory requirements of an LLM. It involves converting the model's large number of floating-point numbers (which take up a lot of space) into smaller, more compact representations. This helps to improve performance by allowing the model to fit into smaller memory spaces and run faster.
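To make the idea concrete, here is a simplified sketch of symmetric 8-bit quantization: a block of floating-point weights is stored as small integers plus one shared scale factor. Real formats such as Q8_0 or Q4_K_M differ in their details, so treat this as an illustration of the principle rather than any library's actual implementation. The sample weights are made up.

```python
def quantize_block(values):
    """Map floats to integers in [-127, 127] plus one shared scale."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [round(v / scale) for v in values]
    return scale, quantized


def dequantize_block(scale, quantized):
    """Recover approximate floats from the integers and the shared scale."""
    return [q * scale for q in quantized]


weights = [0.12, -0.5, 0.33, 0.0, -0.07, 0.25, 0.49, -0.31]  # made-up sample values
scale, q = quantize_block(weights)
restored = dequantize_block(scale, q)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print(q)
print(f"largest rounding error: {max_error:.5f}")
```

Each weight now needs 8 bits instead of 16, at the cost of a small rounding error that is bounded by half the scale.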

5. What are the trade-offs of different quantization settings?

- Higher Precision (F16): Stays closest to the original weights, but needs the most memory and generates tokens more slowly.

- Lower Precision (Q8_0, Q4_0, Q4_K_M): Shrinks the model's memory footprint and speeds up generation, but can lead to slight accuracy reductions.
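The memory side of this trade-off is easy to estimate: weight storage is roughly the parameter count times the bits per weight. The sketch below assumes a nominal 7 billion parameters and per-weight costs of 8.5 bits for Q8_0 and 4.5 bits for Q4_0, which reflect llama.cpp-style blocks of 32 weights sharing one 16-bit scale. Both are stated assumptions, and the result ignores the extra memory needed for context and activations.

```python
# Bits per weight, including the shared per-block scale (assumed block layout).
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}


def weights_size_gb(params, bits_per_weight):
    """Approximate weight storage in gigabytes (10^9 bytes)."""
    return params * bits_per_weight / 8 / 1e9


NOMINAL_PARAMS = 7.0e9  # nominal size of a "7B" model, an approximation
sizes = {name: weights_size_gb(NOMINAL_PARAMS, bpw) for name, bpw in BITS_PER_WEIGHT.items()}
for name, size in sizes.items():
    print(f"{name}: {size:.2f} GB")
# F16: 14.00 GB
# Q8_0: 7.44 GB
# Q4_0: 3.94 GB
```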

6. Are there any limitations to using LLMs locally?

While powerful hardware like the M2 Ultra can run smaller LLMs locally, larger models may still require cloud resources to handle the computational demands effectively.

Keywords

Apple M2 Ultra, Llama3 8B, LLM, Large Language Model, Token Generation Speed, Benchmarks, Performance Analysis, Quantization, F16, Q4_K_M, GPU, A100, Inference, Use Cases, Workarounds, Recommendations, Development, Conversational AI, Text Generation, Code Generation, Personalized Learning, AI Assistant, Optimization.