How Fast Can Apple M2 Ultra Run Llama3 70B?

Introduction

In the fast-paced world of Large Language Models (LLMs), performance is king. As models grow larger, the ability to run them locally matters more and more to developers and researchers. But how fast can a powerful machine like the Apple M2 Ultra handle a behemoth like Llama3 70B? This article explores local LLM performance on Apple's M2 Ultra with the popular open-source Llama3 70B model. We'll examine prompt processing and token generation speed, then compare results across model sizes and quantization levels. If you want to run LLMs directly on your own machine and are deciding whether the M2 Ultra is the right choice, these numbers should help. Buckle up, it's time for some serious geekery!

Performance Analysis: Token Generation Speed Benchmarks

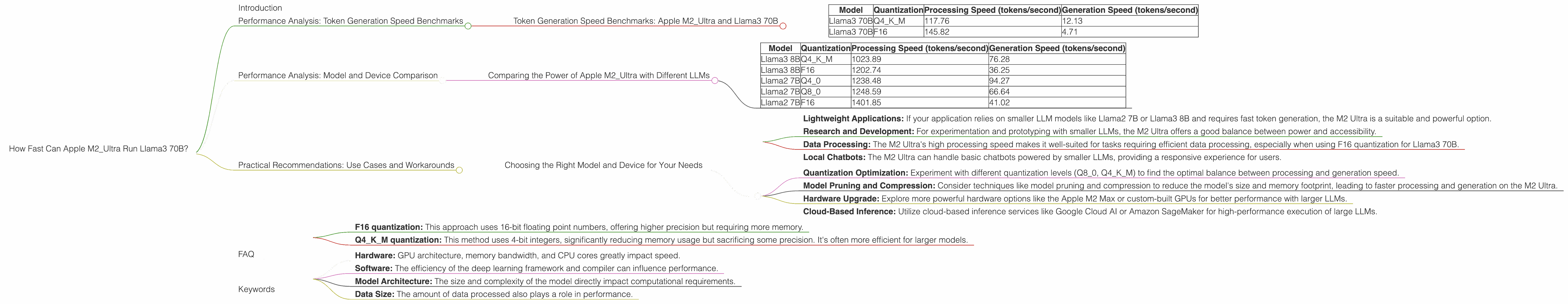

Token Generation Speed Benchmarks: Apple M2 Ultra and Llama3 70B

The M2 Ultra, one of Apple's most powerful chips, boasts impressive performance, but how does it fare with the Llama3 70B model? Let's dive into the numbers:

Table 1. Token Generation Speed Benchmarks (tokens/second) for Apple M2 Ultra and Llama3 70B

| Model | Quantization | Processing Speed (tokens/second) | Generation Speed (tokens/second) |
|---|---|---|---|
| Llama3 70B | Q4_K_M | 117.76 | 12.13 |
| Llama3 70B | F16 | 145.82 | 4.71 |

Observations:

- The M2 Ultra shows decent processing speed (how fast it ingests the input prompt) for Llama3 70B, at 117.76 to 145.82 tokens/second, with F16 the faster of the two. Generation speed is far lower, at 4.71 to 12.13 tokens/second, which limits real-time applications.

- Q4_K_M quantization processes prompts about 19% slower than F16 (117.76 vs. 145.82 tokens/second) but generates text roughly 2.6 times faster (12.13 vs. 4.71 tokens/second), giving it the more balanced overall performance.

What does this mean?

While the M2 Ultra ingests Llama3 70B prompts quickly, it generates text slowly. At 12.13 tokens/second with Q4_K_M, a 200-token reply takes about 16 seconds; at 4.71 tokens/second with F16, it takes over 40. This can be a bottleneck for applications that need quick responses. F16 may suit workloads dominated by reading long inputs, while Q4_K_M is the better choice when generation speed matters.
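To make the trade-off concrete, here is a minimal sketch that estimates end-to-end response time from the Table 1 figures. It assumes total time is simply prompt processing time plus generation time, and the function name and the 500-token prompt and 200-token reply are hypothetical values chosen for illustration. Real runs will also include model loading and other overhead.

```python
# Throughput figures copied from Table 1 (tokens/second on the M2 Ultra).
BENCHMARKS = {
    "Q4_K_M": {"processing": 117.76, "generation": 12.13},
    "F16": {"processing": 145.82, "generation": 4.71},
}


def estimate_response_seconds(quant, prompt_tokens, output_tokens):
    """Rough estimate: time to read the prompt plus time to generate the reply."""
    speeds = BENCHMARKS[quant]
    prompt_time = prompt_tokens / speeds["processing"]
    generation_time = output_tokens / speeds["generation"]
    return prompt_time + generation_time


# Hypothetical workload: a 500-token prompt and a 200-token reply.
for quant in BENCHMARKS:
    seconds = estimate_response_seconds(quant, prompt_tokens=500, output_tokens=200)
    print(f"{quant}: about {seconds:.1f} s")
```

Under these assumptions the estimate comes out at roughly 21 seconds for Q4_K_M and roughly 46 seconds for F16, with generation dominating in both cases.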

Performance Analysis: Model and Device Comparison

Comparing the Power of Apple M2 Ultra with Different LLMs

How does the performance of the M2 Ultra change with different LLM sizes? Let's take a look at some benchmark numbers for Llama2 7B and Llama3 8B:

Table 2. Token Generation Speed Benchmarks (tokens/second) for Apple M2 Ultra and Llama3 8B & Llama2 7B Models

| Model | Quantization | Processing Speed (tokens/second) | Generation Speed (tokens/second) |
|---|---|---|---|
| Llama3 8B | Q4_K_M | 1023.89 | 76.28 |
| Llama3 8B | F16 | 1202.74 | 36.25 |
| Llama2 7B | Q4_0 | 1238.48 | 94.27 |
| Llama2 7B | Q8_0 | 1248.59 | 66.64 |
| Llama2 7B | F16 | 1401.85 | 41.02 |

Observations:

- The M2 Ultra performs significantly better with smaller models like Llama2 7B and Llama3 8B than with the 70B model.

- At the same precision, Llama3 8B processes prompts roughly 8 to 9 times faster than Llama3 70B (for example, 1023.89 vs. 117.76 tokens/second with Q4_K_M), and Llama2 7B is faster still.

- Generation speed is also higher for the smaller models, peaking at 94.27 tokens/second for Llama2 7B with Q4_0 quantization.

Takeaways:

- The M2 Ultra is better suited to running smaller LLMs, where it achieves faster processing and generation speeds.

- Larger models like Llama3 70B may require more powerful hardware or optimization techniques to improve performance.
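As a quick check on these takeaways, the sketch below computes how much slower Llama3 70B is than Llama3 8B at the same precision, using only the figures from Tables 1 and 2. The dictionary and function names are hypothetical, and the nominal parameter ratio (70 / 8) is included purely as a reference point.

```python
# (processing, generation) in tokens/second, copied from Tables 1 and 2.
LLAMA3_8B = {"Q4_K_M": (1023.89, 76.28), "F16": (1202.74, 36.25)}
LLAMA3_70B = {"Q4_K_M": (117.76, 12.13), "F16": (145.82, 4.71)}

# Nominal size ratio between the two models, for comparison only.
PARAMETER_RATIO = 70 / 8


def slowdown(small, large):
    """Return how many times slower the large model is for each metric."""
    return tuple(s / l for s, l in zip(small, large))


for quant in LLAMA3_8B:
    processing, generation = slowdown(LLAMA3_8B[quant], LLAMA3_70B[quant])
    print(
        f"{quant}: processing {processing:.1f}x slower, "
        f"generation {generation:.1f}x slower "
        f"(parameter ratio {PARAMETER_RATIO:.2f}x)"
    )
```

In these benchmarks the slowdown lands between about 6x and 9x, broadly in line with the difference in model size.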

Practical Recommendations: Use Cases and Workarounds

Choosing the Right Model and Device for Your Needs

Based on the benchmarks, we can identify some potential use cases and practical recommendations for using the M2 Ultra and LLMs:

Use Cases (M2 Ultra):

- Lightweight Applications: If your application relies on smaller LLMs like Llama2 7B or Llama3 8B and requires fast token generation, the M2 Ultra is a suitable and powerful option.

- Research and Development: For experimentation and prototyping with smaller LLMs, the M2 Ultra offers a good balance between power and accessibility.

- Data Processing: The M2 Ultra's high prompt processing speed makes it well-suited for input-heavy tasks, such as reading long documents and producing short outputs, especially when running Llama3 70B at F16 precision.

- Local Chatbots: The M2 Ultra can handle basic chatbots powered by smaller LLMs, providing a responsive experience for users.

Workarounds (for Llama3 70B):

- Quantization Optimization: Experiment with different quantization levels (Q8_0, Q4_K_M) to find the optimal balance between processing and generation speed.

- Model Pruning and Compression: Consider techniques like model pruning and compression to reduce the model's size and memory footprint, leading to faster processing and generation on the M2 Ultra.

- Hardware Upgrade: Explore more powerful hardware options, such as workstations or servers with dedicated high-end GPUs, for better performance with larger LLMs. Note that the Apple M2 Max sits below the M2 Ultra, so it is not an upgrade.

- Cloud-Based Inference: Utilize cloud-based inference services like Google Cloud AI or Amazon SageMaker for high-performance execution of large LLMs.

An analogy:

Think of the M2 Ultra as a powerful sports car. With a light load like Llama2 7B or Llama3 8B, it is quick and responsive. Ask it to tow a gigantic trailer like Llama3 70B, and it gets sluggish. To handle that load effectively, you might need a bigger rig or some optimization techniques to maximize performance.

FAQ

Q: What is quantization?

A: Quantization is a technique used in machine learning to reduce the size and memory footprint of models by storing their weights at lower numeric precision, often sacrificing a small amount of accuracy for a significant boost in speed and efficiency. Think of it like compressing a large image file to reduce its size without losing too much detail.
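The idea can be illustrated with a minimal sketch of symmetric 4-bit quantization over one small block of weights. This is a simplified teaching example, not the actual Q4_K_M or Q4_0 format, and the function names and sample weights are made up for illustration.

```python
def quantize_block(values, bits=4):
    """Map floats to small signed integers that share one scale factor."""
    qmax = 2 ** (bits - 1) - 1  # 7 for signed 4-bit values
    largest = max(abs(v) for v in values)
    scale = largest / qmax if largest > 0 else 1.0
    # Round to the nearest integer step and clamp to the representable range.
    quantized = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return quantized, scale


def dequantize_block(quantized, scale):
    """Recover approximate floats from the integers and the shared scale."""
    return [q * scale for q in quantized]


# Hypothetical weights for illustration only.
weights = [0.12, -0.53, 0.98, -0.10, 0.33, -0.88, 0.44, 0.05]
quantized, scale = quantize_block(weights)
restored = dequantize_block(quantized, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("quantized integers:", quantized)
print(f"scale: {scale:.4f}, largest rounding error: {max_error:.4f}")
```

Each weight now needs only 4 bits plus a share of the block's scale factor, and the rounding error per weight is at most half of one quantization step.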

Q: What is the difference between F16 and Q4_K_M?

A: Both describe how the model's weights are stored, but they differ in the level of precision they maintain:

- F16: Weights are stored as 16-bit floating point numbers. Strictly speaking, this is half precision rather than quantization. It offers higher precision but requires more memory.

- Q4_K_M: Weights are quantized to roughly 4 bits each, stored in blocks that share scale factors. This significantly reduces memory usage but sacrifices some precision. It's often the more practical choice for larger models.
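A back-of-the-envelope calculation shows how large the memory difference is. The sketch below assumes a nominal 70 billion parameters and treats the 4-bit format as averaging about 4.8 bits per weight once per-block scale factors are included. That figure is an assumption for illustration, not a measured value, and the estimate ignores the context cache and runtime overhead.

```python
def weight_memory_gb(parameters, bits_per_weight):
    """Approximate memory for the weights alone, in gigabytes (1e9 bytes)."""
    return parameters * bits_per_weight / 8 / 1e9


PARAMETERS_70B = 70e9  # nominal parameter count, assumed for illustration

# Bits per weight: 16 for F16; ~4.8 is an assumed average for the 4-bit format.
FORMATS = {"F16": 16.0, "4-bit (assumed ~4.8 bits/weight)": 4.8}

for name, bits in FORMATS.items():
    print(f"{name}: about {weight_memory_gb(PARAMETERS_70B, bits):.0f} GB")
```

Under these assumptions the F16 weights need about 140 GB, while the 4-bit version needs about 42 GB, which is why the lower-precision format is so much easier to fit in memory.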

Q: What are some other factors that affect LLM performance?

A: Several factors aside from the model and device influence performance:

- Hardware: GPU architecture, memory bandwidth, and CPU cores greatly impact speed.

- Software: The efficiency of the deep learning framework and compiler can influence performance.

- Model Architecture: The size and complexity of the model directly impact computational requirements.

- Data Size: The length of the prompt and of the generated output also plays a role in performance.

Keywords

Apple M2 Ultra, Llama3 70B, Llama2 7B, Llama3 8B, LLM model, token generation speed, performance benchmarks, quantization, F16, Q4_K_M, processing speed, generation speed, local inference, cloud-based inference, practical recommendations, use cases, workarounds, deep learning, AI, machine learning.