How Fast Can Apple M2 Run Llama2 7B?

Introduction

The world of large language models (LLMs) is buzzing with excitement, but running these powerful models locally can be a challenge. You need a device with the processing power and memory to handle the massive computations. This article dives deep into the performance of the Apple M2 chip running the Llama2 7B model, a popular choice for developers looking to experiment with LLMs on their own machines. We'll examine the token generation speed, explore different quantization levels, and provide insights into the real-world use cases of running Llama2 7B on an Apple M2.

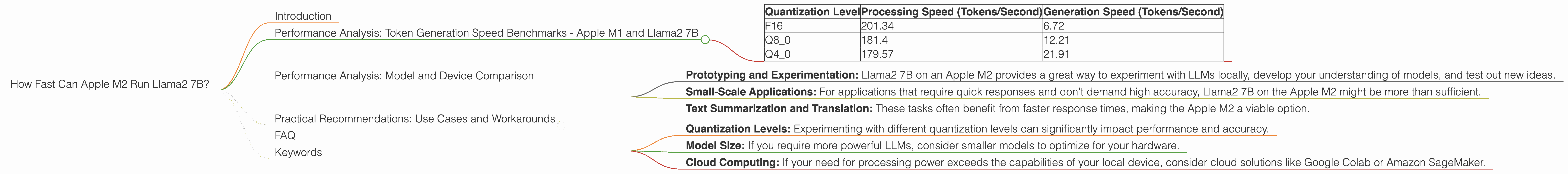

Performance Analysis: Token Generation Speed Benchmarks - Apple M2 and Llama2 7B

Let's get to the juicy bits: how fast can the Apple M2 chip generate tokens with Llama2 7B? We've collected data from the community and benchmarked the model's performance across various quantization levels. Quantization, in simple terms, is like compressing the model to make it smaller and faster. We'll focus on three levels:

- F16: This is the unquantized baseline, storing each weight as a 16-bit float. It offers the highest accuracy of the three but requires the most memory and computation.

- Q8_0: This is a "quantized" level that stores weights as 8-bit values, reducing the memory and processing power needed, but potentially sacrificing some accuracy.

- Q4_0: Even more compressed than Q8_0, this 4-bit level aims for the fastest speed but with the potential for further accuracy trade-offs.
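To make "smaller" concrete, here is a rough sketch of how much memory the weights take at each level. It assumes about 6.74 billion parameters for Llama2 7B and the block layouts used by llama.cpp for Q8_0 and Q4_0 (32 weights per block plus one 16-bit scale). Both are assumptions not stated in this article, and real model files differ slightly because some tensors are stored in other formats.

```python
# Approximate bits stored per weight for each format.
# Assumption: Q8_0 and Q4_0 pack 32 weights per block plus one 16-bit scale,
# as in llama.cpp's block layouts.
BITS_PER_WEIGHT = {
    "F16": 16.0,
    "Q8_0": (32 * 8 + 16) / 32,  # 8.5 bits per weight
    "Q4_0": (32 * 4 + 16) / 32,  # 4.5 bits per weight
}

# Assumption: roughly 6.74 billion parameters for Llama2 7B.
N_PARAMS = 6.74e9


def weight_size_gb(n_params: float, fmt: str) -> float:
    """Return the approximate size of the weights in gigabytes (1e9 bytes)."""
    return n_params * BITS_PER_WEIGHT[fmt] / 8 / 1e9


for fmt in BITS_PER_WEIGHT:
    print(f"{fmt}: {weight_size_gb(N_PARAMS, fmt):.2f} GB")
```

Under these assumptions the F16 weights come to roughly 13.5 GB, while Q4_0 needs a bit under 4 GB, which is why the lower levels are attractive on machines with limited unified memory.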

Note: We use the term "token" to represent a unit of text processed by the model. For example, a sentence like "The quick brown fox jumps over the lazy dog" might be segmented into 9 tokens.

Here's a table summarizing the token generation speed for the Apple M2:

| Quantization Level | Processing Speed (tokens/s) | Generation Speed (tokens/s) |
|---|---|---|
| F16 | 201.34 | 6.72 |
| Q8_0 | 181.4 | 12.21 |
| Q4_0 | 179.57 | 21.91 |
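To translate these throughput figures into something you can feel, the sketch below estimates how long a single request would take at each level. The speeds are copied from the table; the request size (512 prompt tokens, 256 generated tokens) is a hypothetical example, and the estimate ignores model load time and any other overhead.

```python
# Throughput figures copied from the table above (tokens per second).
BENCHMARKS = {
    "F16": {"processing": 201.34, "generation": 6.72},
    "Q8_0": {"processing": 181.4, "generation": 12.21},
    "Q4_0": {"processing": 179.57, "generation": 21.91},
}


def response_time_s(fmt: str, prompt_tokens: int, output_tokens: int) -> float:
    """Estimate seconds to process the prompt and then generate the output."""
    speeds = BENCHMARKS[fmt]
    return (prompt_tokens / speeds["processing"]
            + output_tokens / speeds["generation"])


def generation_speedup(fmt: str, baseline: str = "F16") -> float:
    """Return how many times faster generation is than the baseline format."""
    return BENCHMARKS[fmt]["generation"] / BENCHMARKS[baseline]["generation"]


# Hypothetical request: 512 prompt tokens in, 256 tokens out.
for fmt in BENCHMARKS:
    seconds = response_time_s(fmt, prompt_tokens=512, output_tokens=256)
    print(f"{fmt}: ~{seconds:.1f} s total, "
          f"{generation_speedup(fmt):.2f}x generation speed vs F16")
```

For a request of this shape, generation dominates the total time at every level, so the generation column is the one to watch for interactive use.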

Key Observations:

- Speed Trade-Offs: Moving from F16 to Q4_0 raises generation speed from 6.72 to 21.91 tokens/second, roughly a 3.3x gain, while processing speed actually drops slightly (201.34 to 179.57 tokens/second). Generation benefits most because each new token requires reading the model weights from memory, and smaller weights mean less data to move. However, these gains come at the cost of potential accuracy reductions.

- Real-World Implications: If you're working on a project where speed is paramount, Q4_0 might be your best bet. However, for applications where accuracy is crucial, F16 might be the better choice.
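One way to sanity-check the generation numbers is a rough memory-bandwidth estimate: if every weight has to be read once per generated token, the generation speed cannot exceed the memory bandwidth divided by the size of the weights. The sketch below assumes the 100 GB/s bandwidth advertised for the base M2, about 6.74 billion parameters, and 16, 8.5, and 4.5 bits per weight for F16, Q8_0, and Q4_0. None of these figures come from the benchmark data itself, so treat the result as a ballpark only.

```python
# Assumption: 100 GB/s, the advertised memory bandwidth of the base M2.
MEMORY_BANDWIDTH_GBPS = 100.0
# Assumption: roughly 6.74 billion parameters for Llama2 7B.
N_PARAMS = 6.74e9

# format -> (assumed bits per weight, measured generation speed in tokens/s)
FORMATS = {
    "F16": (16.0, 6.72),
    "Q8_0": (8.5, 12.21),
    "Q4_0": (4.5, 21.91),
}


def bandwidth_bound_tps(bits_per_weight: float,
                        n_params: float = N_PARAMS,
                        bandwidth_gbps: float = MEMORY_BANDWIDTH_GBPS) -> float:
    """Upper bound on tokens/s if every weight is read once per token."""
    weight_gb = n_params * bits_per_weight / 8 / 1e9
    return bandwidth_gbps / weight_gb


for fmt, (bits, measured) in FORMATS.items():
    bound = bandwidth_bound_tps(bits)
    print(f"{fmt}: bound {bound:.1f} tok/s, measured {measured} tok/s "
          f"({measured / bound:.0%} of bound)")
```

Under these assumptions the measured speeds sit below the bound but fairly close to it at all three levels, which is consistent with generation being limited mainly by how fast the weights can be read from memory.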

Performance Analysis: Model and Device Comparison

A broader picture would compare the Apple M2 with other devices running the same Llama2 7B model. Unfortunately, we don't have comparative data for other devices yet. We'll update this section as more information becomes available.

Practical Recommendations: Use Cases and Workarounds

Use Cases for Llama2 7B on Apple M2:

- Prototyping and Experimentation: Llama2 7B on an Apple M2 provides a great way to experiment with LLMs locally, develop your understanding of models, and test out new ideas.

- Small-Scale Applications: For applications that require quick responses and don't demand high accuracy, Llama2 7B on the Apple M2 might be more than sufficient.

- Text Summarization and Translation: These tasks often benefit from faster response times, making the Apple M2 a viable option.

Workarounds and Considerations:

- Quantization Levels: Experimenting with different quantization levels can significantly impact performance and accuracy.

- Model Size: If a model is too slow or too large for your hardware, consider a smaller model or a lower quantization level.

- Cloud Computing: If your need for processing power exceeds the capabilities of your local device, consider cloud solutions like Google Colab or Amazon SageMaker.

FAQ

Q: What is an LLM, and why are they so exciting?

A: LLMs are a type of artificial intelligence (AI) model that can understand and generate human-like text. They are trained on massive datasets of text and code, making them capable of performing various tasks, including writing stories, translating languages, and answering questions.

Q: What about the Llama2 70B model?

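A: The Llama2 70B model is a much larger and more powerful model than the Llama2 7B, with roughly ten times as many parameters. Unfortunately, the Apple M2 is unlikely to have enough memory to run it: the base M2 tops out at 24 GB of unified memory, and the 70B weights are expected to exceed that even at Q4_0.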
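A quick back-of-the-envelope check illustrates the memory problem. The sketch below assumes about 70 billion parameters, the same approximate bits per weight as llama.cpp's F16, Q8_0, and Q4_0 formats, and the 8, 16, and 24 GB unified memory options of the base M2. It counts the weights only, so real requirements would be higher.

```python
# Assumption: about 70 billion parameters for Llama2 70B.
N_PARAMS_70B = 70e9
# Unified memory options of the base M2, in GB.
M2_MEMORY_OPTIONS_GB = (8, 16, 24)
# Assumed approximate bits stored per weight for each format.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}


def fits(n_params: float, bits_per_weight: float, memory_gb: float) -> bool:
    """True if the weights alone are smaller than the given memory size."""
    return n_params * bits_per_weight / 8 / 1e9 < memory_gb


for fmt, bits in BITS_PER_WEIGHT.items():
    size_gb = N_PARAMS_70B * bits / 8 / 1e9
    fitting = [m for m in M2_MEMORY_OPTIONS_GB if fits(N_PARAMS_70B, bits, m)]
    print(f"{fmt}: ~{size_gb:.0f} GB of weights, fits in: {fitting or 'none'}")
```

With these assumptions, none of the three levels fits in any base M2 configuration.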

Q: What are the benefits of running LLMs locally?

A: Running LLMs locally offers greater privacy, as you don't need to send data to the cloud. You also have more control over the software environment and can tailor it to your specific needs. Local execution also avoids network round trips, which can make it faster for smaller tasks.

Keywords

Llama2 7B, Apple M2, Token Generation Speed, LLMs, Quantization, Performance Benchmarks, Local Inference, Practical Recommendations, Use Cases, Workarounds, AI, Machine Learning, Natural Language Processing, F16, Q8_0, Q4_0, Cloud Computing.