How Fast Can Apple M2 Max Run Llama2 7B?

Introduction

In the world of artificial intelligence, large language models (LLMs) are rapidly changing the landscape. These powerful models, like the popular Llama 2, can generate human-like text, translate languages, write different kinds of creative content, and answer your questions in an informative way. But to unleash the full potential of LLMs, you need the right hardware.

This article dives deep into the performance of the Apple M2 Max chip when running the Llama 2 7B model. We'll analyze key metrics like token generation speed and explore the impact of different quantization techniques. For those of you unfamiliar with the tech jargon, think of it as a race between a supercharged computer and a language-powered superbrain, and we're here to see who wins.

Performance Analysis: Token Generation Speed Benchmarks

Let's get down to the nitty-gritty: how fast can the M2 Max handle generating text with Llama 2 7B? We'll break down the results by quantization level, which determines how many bits are used to store each model weight and therefore how much memory the model occupies.

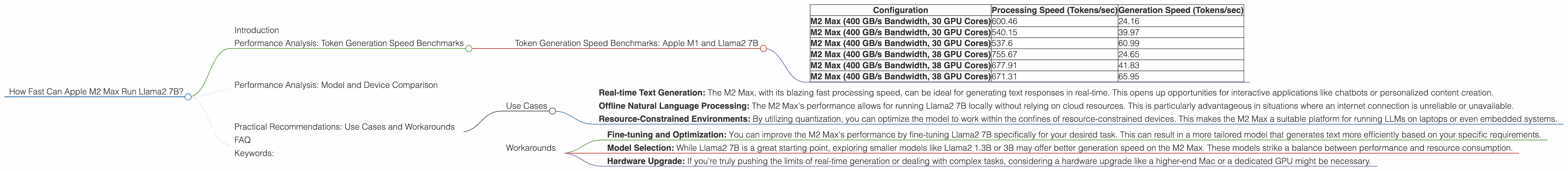

Token Generation Speed Benchmarks: Apple M2 Max and Llama2 7B

| Configuration | Quantization | Processing Speed (Tokens/sec) | Generation Speed (Tokens/sec) |
|---|---|---|---|
| M2 Max (400 GB/s Bandwidth, 30 GPU Cores) | F16 | 600.46 | 24.16 |
| M2 Max (400 GB/s Bandwidth, 30 GPU Cores) | Q8_0 | 540.15 | 39.97 |
| M2 Max (400 GB/s Bandwidth, 30 GPU Cores) | Q4_0 | 537.6 | 60.99 |
| M2 Max (400 GB/s Bandwidth, 38 GPU Cores) | F16 | 755.67 | 24.65 |
| M2 Max (400 GB/s Bandwidth, 38 GPU Cores) | Q8_0 | 677.91 | 41.83 |
| M2 Max (400 GB/s Bandwidth, 38 GPU Cores) | Q4_0 | 671.31 | 65.95 |

Key takeaways:

Faster Processing, Slower Generation: Processing speed, meaning how quickly the chip ingests the prompt, ranges from about 538 to 756 tokens/sec, while generation speed ranges from about 24 to 66 tokens/sec. Prompt tokens can be processed in parallel, so that phase is limited mainly by GPU compute. Generation produces one token at a time, and each new token requires reading the model's weights from memory, so that phase is limited mainly by memory bandwidth.

Quantization Trade-Off: Within each GPU configuration, the rows run from F16 (half-precision) to Q8_0 to Q4_0. F16 uses the most memory and posts the highest processing speed. Q8_0 and Q4_0 store weights at lower precision and consume less memory. Processing speed drops by roughly 10% to 11%, but generation speed rises substantially: from about 24 tokens/sec at F16 to about 40 to 42 at Q8_0 and 61 to 66 at Q4_0.

GPU Core Influence: Moving from 30 to 38 GPU cores raises processing speed by about 25% at every quantization level, while generation speed improves by only about 2% to 8%. If your workload involves long prompts, the M2 Max with more GPU cores is a good idea; for generation-heavy workloads the gain is modest.
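To make these takeaways concrete, the relative changes can be computed directly from the benchmark table. The sketch below is a minimal illustration: the figures are copied from the table, the quantization label attached to each row is an assumption based on the F16, Q8_0, Q4_0 order described above, and the helper names (`percent_change`, `quantization_effect`, `core_effect`) are hypothetical.

```python
# Benchmark figures copied from the table: (processing tok/s, generation tok/s).
# Keys are (GPU cores, quantization); the quantization labels are assumed
# from the row order described in the text (F16, then Q8_0, then Q4_0).
BENCHMARKS = {
    (30, "F16"): (600.46, 24.16),
    (30, "Q8_0"): (540.15, 39.97),
    (30, "Q4_0"): (537.60, 60.99),
    (38, "F16"): (755.67, 24.65),
    (38, "Q8_0"): (677.91, 41.83),
    (38, "Q4_0"): (671.31, 65.95),
}


def percent_change(new: float, old: float) -> float:
    """Relative change from old to new, in percent."""
    return (new - old) / old * 100.0


def quantization_effect(cores: int, quant: str) -> tuple[float, float]:
    """Percent change in (processing, generation) speed versus F16 on the same chip."""
    base_pp, base_tg = BENCHMARKS[(cores, "F16")]
    pp, tg = BENCHMARKS[(cores, quant)]
    return percent_change(pp, base_pp), percent_change(tg, base_tg)


def core_effect(quant: str) -> tuple[float, float]:
    """Percent change in (processing, generation) speed going from 30 to 38 GPU cores."""
    pp30, tg30 = BENCHMARKS[(30, quant)]
    pp38, tg38 = BENCHMARKS[(38, quant)]
    return percent_change(pp38, pp30), percent_change(tg38, tg30)


for cores in (30, 38):
    for quant in ("Q8_0", "Q4_0"):
        pp, tg = quantization_effect(cores, quant)
        print(f"{cores} cores, {quant} vs F16: processing {pp:+.1f}%, generation {tg:+.1f}%")

for quant in ("F16", "Q8_0", "Q4_0"):
    pp, tg = core_effect(quant)
    print(f"{quant}, 38 vs 30 cores: processing {pp:+.1f}%, generation {tg:+.1f}%")
```

Running it shows the pattern in the table at a glance: quantization costs a little processing speed and buys a lot of generation speed, while extra GPU cores mostly help processing.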

Performance Analysis: Model and Device Comparison

While our focus is on the M2 Max, it would be helpful to compare its performance against other widely used devices to see how it stacks up in the LLM world, using Llama 2 7B throughout for a fair comparison.

Unfortunately, we don't have enough data to compare the M2 Max with other devices like the Nvidia A100 or the Google TPU. This means we cannot provide a comparison at this time.

Practical Recommendations: Use Cases and Workarounds

The results showcase the M2 Max's potential for running Llama2 7B locally, but it's essential to understand how to leverage its strengths and address its limitations.

Use Cases

Real-time Text Generation: With generation speeds between roughly 24 and 66 tokens/sec depending on quantization, the M2 Max is fast enough to produce text responses in real time. This opens up opportunities for interactive applications like chatbots or personalized content creation.

Offline Natural Language Processing: The M2 Max's performance allows for running Llama2 7B locally without relying on cloud resources. This is particularly advantageous in situations where an internet connection is unreliable or unavailable.

Resource-Constrained Environments: Quantization shrinks the model's memory footprint, so Llama2 7B can fit on machines with limited memory. This makes an M2 Max laptop a practical platform for running LLMs without a dedicated server.
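For the interactive use cases above, a rough response-time estimate helps when choosing a configuration. The sketch below assumes the benchmark speeds hold for your prompt and output lengths and ignores model load time and sampling overhead. The function name `response_time_seconds` and the 512-token prompt and 128-token reply are hypothetical values chosen for illustration.

```python
def response_time_seconds(
    prompt_tokens: int,
    output_tokens: int,
    processing_tps: float,
    generation_tps: float,
) -> float:
    """Estimate total latency as prompt-processing time plus generation time."""
    if processing_tps <= 0 or generation_tps <= 0:
        raise ValueError("speeds must be positive")
    prompt_time = prompt_tokens / processing_tps   # reading the prompt
    generation_time = output_tokens / generation_tps  # writing the reply
    return prompt_time + generation_time


# Speeds taken from the benchmark table (38 GPU cores); the assignment of
# quantization labels to rows is an assumption based on the row order.
CONFIGS = {
    "F16": (755.67, 24.65),
    "Q8_0": (677.91, 41.83),
    "Q4_0": (671.31, 65.95),
}

for name, (pp, tg) in CONFIGS.items():
    seconds = response_time_seconds(512, 128, pp, tg)
    print(f"{name}: about {seconds:.1f} s for a 512-token prompt and 128-token reply")
```

Under these assumptions the reply dominates the total time, which is why the higher generation speed of the quantized rows matters more for chat-style workloads than the small loss in processing speed.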

Workarounds

Fine-tuning and Optimization: Fine-tuning Llama2 7B for your task does not change how many tokens per second the M2 Max produces, but a model tailored to the task can often reach a useful answer with shorter prompts and fewer generated tokens, which reduces the total response time.

Model Selection: While Llama2 7B is a great starting point, Llama 2 itself ships only in 7B, 13B, and 70B sizes, so going smaller means choosing a different model family. Smaller models in the 1B to 3B parameter range may offer better generation speed on the M2 Max, trading some output quality for lower resource consumption.

Hardware Upgrade: If you're truly pushing the limits of real-time generation or dealing with complex tasks, considering a hardware upgrade like a higher-end Mac or a dedicated GPU might be necessary.

FAQ

Q: What is quantization, and how does it affect performance?

A: Quantization is like condensing the information within a model into a smaller, more streamlined format. Imagine compressing a large image file to save space. In LLMs, quantization reduces the number of bits needed to represent the model's parameters, leading to lower memory consumption and, as the benchmarks show, faster generation. However, it can sometimes result in a slight decline in output quality.
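As a rough illustration of the idea, here is a minimal sketch of block-wise symmetric quantization: a block of weights is stored as small integer codes plus one shared scale. It is written in the spirit of 8-bit block formats, not as the exact scheme any particular library uses. The function names, the sample weights, and the 32-weight block with a 16-bit scale are assumptions made for the example.

```python
def quantize_block(values: list[float]) -> tuple[float, list[int]]:
    """Quantize one block to signed 8-bit codes that share a single scale."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    # Clamp to guard against floating-point rounding just past the int8 range.
    codes = [max(-127, min(127, round(v / scale))) for v in values]
    return scale, codes


def dequantize_block(scale: float, codes: list[int]) -> list[float]:
    """Recover approximate weights from the codes and the shared scale."""
    return [scale * c for c in codes]


def bits_per_weight(block_size: int, code_bits: int, scale_bits: int = 16) -> float:
    """Average storage per weight when each block carries one scale value."""
    return (block_size * code_bits + scale_bits) / block_size


weights = [0.12, -0.5, 0.33, 0.9, -0.07, 0.0, -0.81, 0.44]  # made-up sample values
scale, codes = quantize_block(weights)
restored = dequantize_block(scale, codes)
worst_error = max(abs(a - b) for a, b in zip(weights, restored))

print("codes:", codes)
print(f"largest rounding error: {worst_error:.5f} (at most scale / 2 = {scale / 2:.5f})")
print("bits per weight, 8-bit codes:", bits_per_weight(32, 8))
print("bits per weight, 4-bit codes:", bits_per_weight(32, 4))
```

The rounding error per weight is bounded by half the scale, which is the "slight decline" mentioned above, while storage falls from 16 bits per weight to well under 10 under these assumptions.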

Q: Can I run Llama2 7B on my iPhone?

A: While the iPhone is a powerful device, it's likely underpowered for efficiently running a model as large as Llama2 7B. However, you can experiment with smaller LLM models, or wait for future advancements in mobile hardware that can handle these demands.

Q: Why do processing speed and generation speed differ so much?

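A: Processing speed measures how fast the chip reads your prompt. All prompt tokens can be handled in parallel, so the GPU's compute units stay busy. Generation produces one token at a time, and every new token requires reading the model's weights from memory again, so memory bandwidth becomes the limit. That's the difference between processing and generation speeds in LLMs, and it is also why smaller, quantized weights speed up generation.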
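A back-of-the-envelope calculation shows why memory bandwidth caps generation speed: if every generated token requires reading all of the weights once, tokens/sec cannot exceed bandwidth divided by model size. The sketch below is a rough upper bound, not a measurement. The parameter count of about 6.74 billion and the bits-per-weight figures are assumptions, the 400 GB/s bandwidth and measured speeds come from the benchmark table (38 GPU cores, with quantization labels assumed from the row order), and the estimate ignores the KV cache and compute time.

```python
BANDWIDTH_GB_PER_S = 400.0   # from the table's configuration column
PARAMETERS = 6.74e9          # assumed parameter count for Llama 2 7B

# Assumed average storage per weight for each format.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}

# Measured generation speeds from the table (38 GPU cores).
MEASURED_TPS = {"F16": 24.65, "Q8_0": 41.83, "Q4_0": 65.95}


def model_size_gb(parameters: float, bits_per_weight: float) -> float:
    """Approximate size of the weights in gigabytes (1 GB = 1e9 bytes)."""
    return parameters * bits_per_weight / 8 / 1e9


def max_generation_tps(bandwidth_gb_per_s: float, size_gb: float) -> float:
    """Upper bound on tokens/sec if each token reads every weight once."""
    return bandwidth_gb_per_s / size_gb


for quant, bits in BITS_PER_WEIGHT.items():
    size = model_size_gb(PARAMETERS, bits)
    ceiling = max_generation_tps(BANDWIDTH_GB_PER_S, size)
    share = MEASURED_TPS[quant] / ceiling * 100
    print(f"{quant}: ~{size:.1f} GB, ceiling ~{ceiling:.0f} tok/s, "
          f"measured {MEASURED_TPS[quant]} tok/s ({share:.0f}% of ceiling)")
```

Under these assumptions the measured F16 speed sits fairly close to its ceiling, which is consistent with generation being bandwidth-bound rather than compute-bound.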

Q: Where can I find the latest benchmarks and performance data for LLMs?

A: Look for repositories like GitHub, where developers often share their findings and benchmarks. The Hugging Face website is another great source for LLM information and resources.

Keywords:

Apple M2 Max, Llama2 7B, token generation speed, LLMs, quantization, performance analysis, GPU cores, natural language processing, AI, benchmark, performance comparison, F16, Q8_0, Q4_0, use cases, workarounds, fine-tuning, optimization, resource-constrained environments, real-time text generation, offline NLP.