How Fast Can Apple M1 Ultra Run Llama2 7B?

Introduction

The world of large language models (LLMs) is buzzing with excitement, and for good reason. These powerful AI systems can generate human-like text, translate languages, write different kinds of creative content, and answer your questions in an informative way.

But there's a catch: LLMs require a lot of computing power. Running them on your local machine can be a challenge, even with Llama2 7B, the smallest model in the Llama 2 family (which also comes in 13B and 70B sizes). That's why we're taking a close look at the performance of the Apple M1 Ultra, a powerful chip built for demanding workloads.

Let's explore how this chip handles Llama2 7B and see what kind of speeds we can achieve.

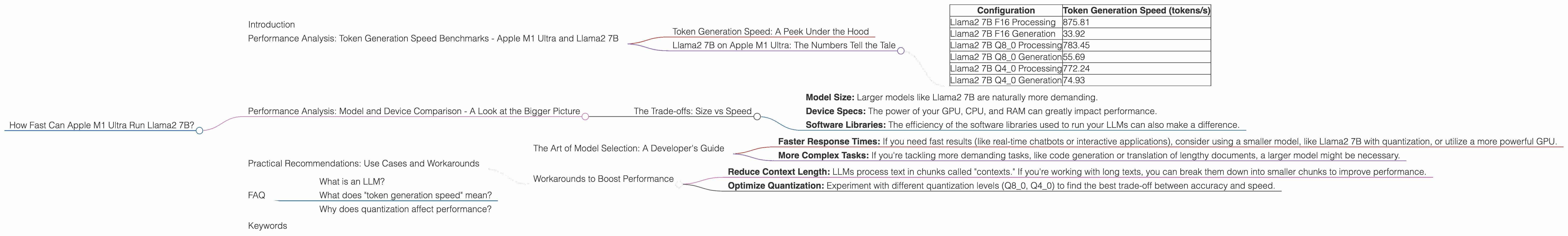

Performance Analysis: Token Generation Speed Benchmarks - Apple M1 Ultra and Llama2 7B

Token Generation Speed: A Peek Under the Hood

Before we dive into the numbers, let's clarify what "token generation speed" means. A token is a small unit of text: often a whole word, but also a piece of a word, a number, or a punctuation mark. LLMs break text into tokens before working on it. Speed is measured in two phases: prompt processing, where the model reads the tokens you give it, and text generation, where it produces new tokens one at a time.

Llama2 7B on Apple M1 Ultra: The Numbers Tell the Tale

We're focusing on the Apple M1 Ultra in its 48-core GPU configuration. The numbers below are in tokens per second (tokens/s), that is, how many tokens the model handles every second.

| Model / format | Prompt processing (tokens/s) | Text generation (tokens/s) |
|---|---|---|
| Llama2 7B F16 | 875.81 | 33.92 |
| Llama2 7B Q8_0 | 783.45 | 55.69 |
| Llama2 7B Q4_0 | 772.24 | 74.93 |
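To see what these rates mean for a single request, here is a minimal sketch that turns them into a rough response time: prompt tokens divided by the processing rate, plus output tokens divided by the generation rate. It assumes both rates stay constant, which real runs only approximate; the function name `estimate_latency` and the 512/256-token example are illustrative choices, not part of the benchmark.

```python
# Throughput figures (tokens/s) for Llama2 7B on the M1 Ultra (48-core GPU), from the table above.
SPEEDS = {
    "F16": {"prompt": 875.81, "generation": 33.92},
    "Q8_0": {"prompt": 783.45, "generation": 55.69},
    "Q4_0": {"prompt": 772.24, "generation": 74.93},
}


def estimate_latency(fmt: str, prompt_tokens: int, output_tokens: int) -> float:
    """Rough wall-clock seconds to read a prompt and then generate a reply.

    Assumes both rates stay constant; real speeds vary with context length,
    settings and thermal state, so treat the result as a ballpark figure.
    """
    rates = SPEEDS[fmt]
    prompt_time = prompt_tokens / rates["prompt"]          # prompt processing phase
    generation_time = output_tokens / rates["generation"]  # one new token at a time
    return prompt_time + generation_time


if __name__ == "__main__":
    for fmt in SPEEDS:
        seconds = estimate_latency(fmt, prompt_tokens=512, output_tokens=256)
        print(f"{fmt}: about {seconds:.1f} s for a 512-token prompt and a 256-token reply")
```

For a prompt and reply of this size, generation accounts for most of the time in every format, which is why the faster generation rates of Q8_0 and Q4_0 matter more in practice than their slightly slower prompt processing.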

Key Observations:

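- Quantization Matters: The quantized formats (Q8_0 and Q4_0) generate text much faster than F16: 55.69 and 74.93 tokens/s versus 33.92, roughly 1.6x and 2.2x the F16 rate. Prompt processing moves the other way, dropping slightly from 875.81 tokens/s (F16) to 783.45 (Q8_0) and 772.24 (Q4_0).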

- Quantization: Quantization stores each model weight with fewer bits, for example 8 or 4 bits instead of 16. The model takes up less memory, and less data has to move through the chip for every generated token.

- F16 vs Quantized: F16 (16-bit floating point) keeps the weights at half precision and serves as the unquantized baseline, while Q8_0 and Q4_0 are smaller and faster but can slightly reduce output quality.
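The idea behind Q8_0 and Q4_0 can be sketched in a few lines. The names follow llama.cpp's block formats, where weights are grouped into blocks of 32 and each block stores small integer codes plus one 16-bit scale. The sketch below is a simplified symmetric 4-bit scheme in that spirit, not llama.cpp's actual code; the function names are hypothetical.

```python
import random
from typing import List, Tuple

BLOCK_SIZE = 32  # weights are quantized in independent blocks of 32


def quantize_block_4bit(weights: List[float]) -> Tuple[float, List[int]]:
    """Symmetric 4-bit quantization of one block: one float scale plus small integer codes.

    Simplified illustration only; llama.cpp's actual Q4_0 code uses different
    rounding details and packs two 4-bit codes into each byte.
    """
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0  # the largest magnitude maps to +/-7
    # Round each weight to the nearest multiple of scale, clamped to the signed 4-bit range [-8, 7].
    codes = [max(-8, min(7, round(w / scale))) for w in weights]
    return scale, codes


def dequantize_block(scale: float, codes: List[int]) -> List[float]:
    """Reconstruct approximate weights from the block scale and integer codes."""
    return [scale * c for c in codes]


def bits_per_weight(code_bits: int, block_size: int = BLOCK_SIZE, scale_bits: int = 16) -> float:
    """Average storage per weight when each block stores its codes plus one 16-bit scale."""
    return (code_bits * block_size + scale_bits) / block_size


if __name__ == "__main__":
    random.seed(0)
    block = [random.gauss(0.0, 0.02) for _ in range(BLOCK_SIZE)]
    scale, codes = quantize_block_4bit(block)
    restored = dequantize_block(scale, codes)
    worst = max(abs(a - b) for a, b in zip(block, restored))
    print(f"scale={scale:.5f}, worst error={worst:.5f} (at most scale/2)")
    print(f"bits per weight: 4-bit {bits_per_weight(4)}, 8-bit {bits_per_weight(8)}, F16 16")
```

With one 16-bit scale per 32 weights, 4-bit codes cost 4.5 bits per weight and 8-bit codes 8.5 bits, versus 16 for F16. The rounding error per weight is bounded by half the block's scale, which is the accuracy cost the bullets above mention.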

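Big Picture: Even on the M1 Ultra, generating text is far slower than processing the prompt (also called prefill); both phases are part of inference. The gap comes from how the work is done. Prompt tokens are processed in parallel, so each weight loaded from memory is reused across many tokens. Generation produces one token at a time and has to read essentially all of the model's weights for each new token, which tends to make it limited by memory bandwidth rather than raw compute. This also explains why quantization speeds up generation but not prompt processing. The same pattern shows up across many devices and LLMs.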
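A back-of-the-envelope calculation makes the bandwidth argument concrete. If every generated token requires streaming all weights from memory once, generation speed cannot exceed memory bandwidth divided by model size. The sketch assumes about 800 GB/s of memory bandwidth (Apple's advertised M1 Ultra figure), roughly 6.7 billion parameters for Llama2 7B, and 16, 8.5 and 4.5 bits per weight for F16, Q8_0 and Q4_0; all three are assumptions, and the bound ignores compute, the attention cache and software overhead.

```python
def generation_ceiling(params_billion: float, bits_per_weight: float, bandwidth_gb_s: float) -> float:
    """Upper bound on tokens/s if each generated token must read every weight from memory once."""
    model_gb = params_billion * bits_per_weight / 8  # billions of weights x bytes per weight = GB
    return bandwidth_gb_s / model_gb


if __name__ == "__main__":
    BANDWIDTH_GB_S = 800.0  # assumption: Apple's advertised M1 Ultra memory bandwidth
    PARAMS_BILLION = 6.7    # assumption: Llama2 7B has roughly 6.7 billion parameters
    # format -> (assumed bits per weight, measured generation tokens/s from the table above)
    formats = {"F16": (16.0, 33.92), "Q8_0": (8.5, 55.69), "Q4_0": (4.5, 74.93)}
    for fmt, (bpw, measured) in formats.items():
        ceiling = generation_ceiling(PARAMS_BILLION, bpw, BANDWIDTH_GB_S)
        print(f"{fmt}: ceiling ~{ceiling:.0f} tokens/s, measured {measured} tokens/s "
              f"({measured / ceiling:.0%} of ceiling)")
```

Under these assumptions the ceilings come out at roughly 60, 112 and 212 tokens/s, so every measured figure sits below its bound, and smaller weights raise the bound. The gap is widest for Q4_0, a reminder that this is a crude estimate rather than a performance model.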

Performance Analysis: Model and Device Comparison - A Look at the Bigger Picture

The Trade-offs: Size vs Speed

The Apple M1 Ultra is undeniably a powerful machine, but it's not the only player in the game. There are other exciting devices and LLMs out there, each with its own set of strengths and limitations.

While we're focusing on the Apple M1 Ultra and Llama2 7B, it's important to remember that the performance of LLMs on different devices can vary depending on factors like:

- Model Size: Larger models demand more memory and compute; Llama2 13B and 70B are considerably heavier than 7B.

- Device Specs: GPU power, memory capacity, and memory bandwidth all affect performance. On Apple silicon, the CPU and GPU share a single pool of unified memory.

- Software Libraries: The efficiency of the software libraries used to run your LLMs can also make a difference.
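Model size and device memory interact directly: the weights have to fit in memory before speed even matters. This sketch estimates the size of the weights alone for the three Llama 2 sizes, using nominal parameter counts (7, 13 and 70 billion) and assumed storage costs of 16, 8.5 and 4.5 bits per weight for F16, Q8_0 and Q4_0. It leaves out the attention cache and runtime overhead, so real memory use is higher.

```python
# Assumed storage cost per weight: F16 = 16 bits; Q8_0 and Q4_0 include a 16-bit scale per 32-weight block.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}
LLAMA2_SIZES_B = {"7B": 7.0, "13B": 13.0, "70B": 70.0}  # nominal parameter counts, in billions


def weights_gb(params_billion: float, fmt: str) -> float:
    """Approximate size of the weights alone, in GB (excludes attention cache and runtime overhead)."""
    return params_billion * BITS_PER_WEIGHT[fmt] / 8


if __name__ == "__main__":
    for name, params in LLAMA2_SIZES_B.items():
        row = ", ".join(f"{fmt} ~{weights_gb(params, fmt):.1f} GB" for fmt in BITS_PER_WEIGHT)
        print(f"Llama2 {name}: {row}")
```

By this estimate, the 70B model's F16 weights alone (about 140 GB) exceed the 128 GB maximum unified memory an M1 Ultra can be configured with, while a 4-bit version (roughly 39 GB) fits.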

The Key Takeaway: The choice of device and model boils down to a trade-off between speed, model size, and cost. Sometimes, you might want to prioritize a larger model, even if it means a slower response. Other times, you might prefer a smaller model that runs faster on your device.

Practical Recommendations: Use Cases and Workarounds

The Art of Model Selection: A Developer's Guide

Let's get practical. How can you make the most of LLMs on your M1 Ultra? It depends on what you're trying to achieve.

- Faster Response Times: If you need fast results (for example real-time chatbots or interactive applications), use a small, quantized model: Llama2 7B Q4_0 generated 74.93 tokens/s in the benchmark above, more than twice the F16 rate. Hardware with a more powerful GPU is the other option.

- More Complex Tasks: If you're tackling more demanding work, like code generation or translating lengthy documents, a larger model such as Llama2 13B or 70B might be necessary, at the cost of slower responses.
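One way to apply the speed side of this trade-off is to pick the highest-precision format that still meets a speed target. The sketch below does that with the measured Llama2 7B generation speeds; the helper `pick_format` and the example targets are hypothetical, and the rule "prefer precision, fall back to compression" is one reasonable policy among several.

```python
from typing import Optional

# Measured generation speeds (tokens/s) for Llama2 7B on the M1 Ultra, listed from
# highest precision (largest, slowest) to most compressed (smallest, fastest).
GENERATION_SPEED = [("F16", 33.92), ("Q8_0", 55.69), ("Q4_0", 74.93)]


def pick_format(min_tokens_per_s: float) -> Optional[str]:
    """Return the highest-precision format whose measured speed meets the target, else None."""
    for fmt, speed in GENERATION_SPEED:
        if speed >= min_tokens_per_s:
            return fmt
    return None  # no format is fast enough: consider a smaller model or faster hardware


if __name__ == "__main__":
    for target in (30, 50, 70, 100):
        print(f"need >= {target} tokens/s -> {pick_format(target)}")
```

A `None` result signals that quantization alone cannot reach the target on this hardware with this model.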

Workarounds to Boost Performance

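- Reduce Context Length: The context is the span of tokens (your prompt plus the generated text) that the model works with at once. Longer contexts mean more prompt processing and more memory for the attention cache, so breaking long texts into smaller chunks and processing them separately can improve performance.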

- Optimize Quantization: Experiment with different quantization levels (Q8_0, Q4_0) to find the best trade-off between accuracy and speed.
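Splitting a long text into smaller pieces can be as simple as the sketch below, which cuts it into chunks of a fixed number of words. Word count is only a rough stand-in for token count (tokenizers often split one word into several tokens), so the chunk size should leave headroom below the model's context limit; `chunk_words` is a hypothetical helper, and it collapses the original whitespace and line breaks.

```python
from typing import List


def chunk_words(text: str, max_words: int) -> List[str]:
    """Split text into consecutive chunks of at most max_words whitespace-separated words.

    Word count is only a rough proxy for token count, so choose max_words
    with headroom below the context limit.
    """
    if max_words <= 0:
        raise ValueError("max_words must be positive")
    words = text.split()
    return [" ".join(words[i:i + max_words]) for i in range(0, len(words), max_words)]


if __name__ == "__main__":
    document = "word " * 2500  # stand-in for a long document
    chunks = chunk_words(document, max_words=1000)
    # Each chunk can then be sent to the model as its own, shorter prompt.
    print(len(chunks), "chunks")
```

A production version would count tokens with the model's own tokenizer and split on sentence or paragraph boundaries, but the principle of keeping each prompt short is the same.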

FAQ

What is an LLM?

An LLM is a type of AI model that learns to understand and generate human-like text from a massive amount of data. Think of it as a super-powered language guru that can generate creative content like poems, code, and stories, or answer your questions in a helpful and informative way.

What does "token generation speed" mean?

It's a measure of how fast a model produces text, counted in tokens per second. A token is a chunk of text, often a word or part of a word; if a model emits 50 tokens every second, its token generation speed is 50 tokens/s.

Why does quantization affect performance?

Quantization stores the model's weights with fewer bits. The smaller model needs less memory and less data movement for each generated token, which speeds up generation. However, the lower precision can slightly reduce accuracy, so you need to find the sweet spot for your specific use case.

Keywords

LLM, Llama2 7B, Apple M1 Ultra, token generation speed, quantization, GPU, performance, inference, model size, device specs, use cases, workarounds, practical recommendations, developer, AI, machine learning.