How Fast Can Apple M1 Run Llama3 8B?

Introduction

The world of Large Language Models (LLMs) is abuzz with excitement, and for good reason! These powerful AI systems can generate human-like text, translate languages, write different kinds of creative content, and answer your questions in an informative way. But unleashing the full potential of LLMs requires the right hardware.

This article dives deep into the performance of Apple's M1 chip when running the Llama3 8B model, exploring its token generation speeds and comparing it to other models. Whether you're a developer seeking optimal performance for your projects or a curious tech enthusiast, this guide provides insights into the exciting world of local LLM execution.

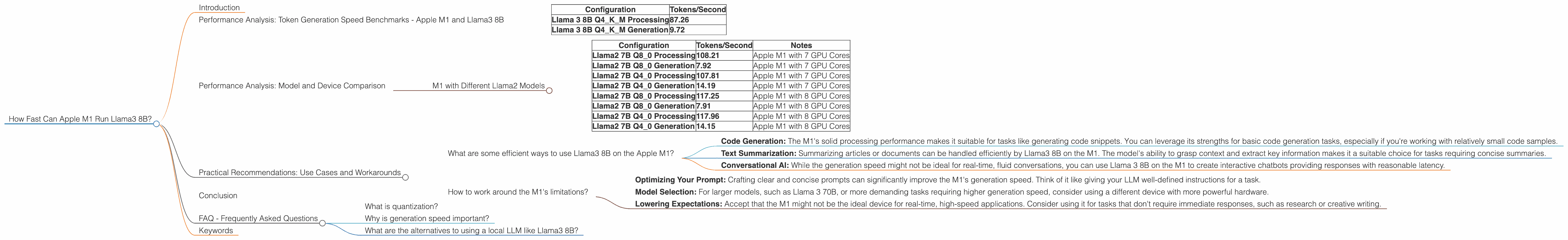

Performance Analysis: Token Generation Speed Benchmarks - Apple M1 and Llama3 8B

Let's get down to the nitty-gritty! We're going to analyze the token generation speed of the Llama 3 8B model on the Apple M1 chip. Imagine tokens as the building blocks of text; they're like the individual Lego bricks that form a complex structure. The faster your device can process these tokens, the quicker your LLM can generate text.

Our benchmark focuses on the Llama 3 8B Q4_K_M configuration, meaning the model's weights are quantized (like compressing data for efficient storage) to roughly 4 bits each. This configuration strikes a balance between accuracy and performance, allowing the M1 chip to handle the model with reasonable efficiency.
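To get a feel for why the bit width matters on a memory-constrained laptop, here is a minimal sketch of the arithmetic. It assumes a round 8 billion parameters and nominal bit widths; the function name `model_size_gb` is made up for this illustration. Real formats such as Q4_K_M also store per-block scaling data, so actual files run somewhat larger than the plain 4-bit figure.

```python
def model_size_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate weight storage in gigabytes (10^9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


N_PARAMS = 8.0e9  # assumption: a round figure for an "8B" model

# Nominal bit widths only; per-block scales and metadata are ignored here.
for label, bits in [("16-bit", 16.0), ("8-bit", 8.0), ("4-bit", 4.0)]:
    print(f"{label:>6}: about {model_size_gb(N_PARAMS, bits):.1f} GB of weights")
```

The takeaway is the ratio rather than the exact values: moving from 16-bit to about 4-bit weights cuts storage to roughly a quarter.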

Here's a breakdown of the key performance metrics:

| Configuration | Tokens/Second |
|---|---|
| Llama 3 8B Q4_K_M Processing | 87.26 |
| Llama 3 8B Q4_K_M Generation | 9.72 |

So, what do these numbers mean?

- Llama 3 8B Q4_K_M Processing: This figure is the speed at which the M1 reads in your prompt before it produces any output (often called prompt processing). It determines how long you wait for the first token, akin to a racehorse warming up for a sprint.

- Llama 3 8B Q4_K_M Generation: This is a more practical measure, reflecting the rate at which the M1 produces new tokens once the prompt has been read. Think of it as the horse actually running the race.

Is the M1 a speed demon? Prompt processing is reasonably quick, but generation is roughly nine times slower. The tokens of a prompt can be processed in parallel, whereas generated tokens must be produced one at a time, and each one requires a full pass over the model's weights. That makes generation largely limited by memory bandwidth rather than raw compute.

Remember: These figures are for a specific configuration (Q4_K_M) and should be taken as a starting point for comparison, not definitive benchmarks.
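To translate the two rates into waiting time, here is a rough sketch. It assumes the Processing figure measures how fast the prompt is read and the Generation figure measures how fast new tokens appear, as is usual for llama.cpp-style benchmarks. The function name and the example token counts are invented for illustration, and real runs will vary with context length and system load.

```python
PROMPT_TPS = 87.26      # prompt processing, tokens/second (from the table above)
GENERATION_TPS = 9.72   # text generation, tokens/second (from the table above)


def estimate_seconds(prompt_tokens: int, output_tokens: int) -> tuple[float, float]:
    """Return (time until the first token, total time) in seconds."""
    prefill = prompt_tokens / PROMPT_TPS
    decode = output_tokens / GENERATION_TPS
    return prefill, prefill + decode


# Hypothetical request: a 500-token prompt and a 200-token reply.
first, total = estimate_seconds(prompt_tokens=500, output_tokens=200)
print(f"first token after ~{first:.1f} s, full reply after ~{total:.1f} s")
```

For this example the wait is roughly 6 seconds before the first token and roughly 26 seconds for the whole reply, with most of the time spent generating rather than reading the prompt.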

Performance Analysis: Model and Device Comparison

To put the Llama 3 8B numbers in context, let's compare them with Llama 2 7B running on the same chip at two quantization levels. Only M1 results appear here: other model and device combinations either weren't benchmarked or had no data available yet, so they are left out.

M1 with Llama2 7B at Different Quantizations

| Configuration | Tokens/Second | Notes |
|---|---|---|
| Llama2 7B Q8_0 Processing | 108.21 | Apple M1 with 7 GPU Cores |
| Llama2 7B Q8_0 Generation | 7.92 | Apple M1 with 7 GPU Cores |
| Llama2 7B Q4_0 Processing | 107.81 | Apple M1 with 7 GPU Cores |
| Llama2 7B Q4_0 Generation | 14.19 | Apple M1 with 7 GPU Cores |
| Llama2 7B Q8_0 Processing | 117.25 | Apple M1 with 8 GPU Cores |
| Llama2 7B Q8_0 Generation | 7.91 | Apple M1 with 8 GPU Cores |
| Llama2 7B Q4_0 Processing | 117.96 | Apple M1 with 8 GPU Cores |
| Llama2 7B Q4_0 Generation | 14.15 | Apple M1 with 8 GPU Cores |

Observations:

- Model Size and Quantization Both Matter: Llama2 7B at Q4_0 generates faster than Llama3 8B at Q4_K_M (about 14.2 vs. 9.72 tokens/second), but at Q8_0 it is slower (about 7.9 tokens/second). The two models differ in both parameter count and quantization format, so this is not a like-for-like comparison.

- Quantization Impact: Q8_0 and Q4_0 produce nearly identical processing speeds, but Q4_0 generates roughly 1.8 times faster (about 14.2 vs. 7.9 tokens/second). That gap is large enough to notice in interactive use.

- GPU Cores: The M1 comes with either 7 or 8 GPU cores. The 8-core version processes prompts roughly 8 to 9 percent faster, while generation speed is essentially unchanged.
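The ratios behind these observations can be checked directly from the table. The snippet below is a small sketch that copies the table values into a dictionary (the variable names are arbitrary) and prints the relative speeds.

```python
# Tokens/second copied from the table:
# (quantization, GPU cores) -> (processing, generation)
results = {
    ("Q8_0", 7): (108.21, 7.92),
    ("Q4_0", 7): (107.81, 14.19),
    ("Q8_0", 8): (117.25, 7.91),
    ("Q4_0", 8): (117.96, 14.15),
}

# Effect of quantization at a fixed core count.
for cores in (7, 8):
    proc_q8, gen_q8 = results[("Q8_0", cores)]
    proc_q4, gen_q4 = results[("Q4_0", cores)]
    print(f"{cores} cores, Q4_0 vs Q8_0: "
          f"processing x{proc_q4 / proc_q8:.2f}, generation x{gen_q4 / gen_q8:.2f}")

# Effect of the extra GPU core at a fixed quantization.
for quant in ("Q8_0", "Q4_0"):
    proc_7, gen_7 = results[(quant, 7)]
    proc_8, gen_8 = results[(quant, 8)]
    print(f"{quant}, 8 vs 7 cores: "
          f"processing x{proc_8 / proc_7:.2f}, generation x{gen_8 / gen_7:.2f}")
```

Quantization shows up almost entirely in generation speed, and the extra GPU core almost entirely in processing speed.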

Practical Recommendations: Use Cases and Workarounds

Alright, now that we've seen the numbers, let's discuss how you can leverage the M1 effectively for your projects.

What are some efficient ways to use Llama3 8B on the Apple M1?

- Code Generation: The M1's solid processing performance makes it suitable for tasks like generating code snippets. You can leverage its strengths for basic code generation tasks, especially if you're working with relatively small code samples.

- Text Summarization: Summarizing articles or documents can be handled efficiently by Llama3 8B on the M1. The model's ability to grasp context and extract key information makes it a suitable choice for tasks requiring concise summaries.

- Conversational AI: While the generation speed might not be ideal for real-time, fluid conversations, you can use Llama 3 8B on the M1 to create interactive chatbots providing responses with reasonable latency.

How to work around the M1's limitations?

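- Optimizing Your Prompt: Crafting clear and concise prompts can shorten the total response time. A shorter prompt takes less time to process before the first token appears, and a well-defined request tends to produce a shorter, more focused answer. The per-token generation rate itself does not change.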

- Model Selection: For larger models, such as Llama 3 70B, or more demanding tasks requiring higher generation speed, consider using a different device with more powerful hardware.

- Lowering Expectations: Accept that the M1 might not be the ideal device for real-time, high-speed applications. Consider using it for tasks that don't require immediate responses, such as research or creative writing.
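Before deciding whether the M1 is fast enough for a given task, it can help to measure throughput on your own machine. The sketch below times any iterable of tokens. `fake_stream` is a hypothetical stand-in for a model's streaming output, not a real library call. You would replace it with whatever token stream your runtime provides.

```python
import time
from typing import Iterable, Iterator


def measure_tps(tokens: Iterable[str]) -> tuple[int, float]:
    """Consume a token stream and return (token count, tokens per second)."""
    start = time.perf_counter()
    count = sum(1 for _ in tokens)
    elapsed = time.perf_counter() - start
    return count, (count / elapsed if elapsed > 0 else float("inf"))


def fake_stream(n: int, delay_s: float) -> Iterator[str]:
    """Hypothetical stand-in for a model that emits one token every delay_s seconds."""
    for i in range(n):
        time.sleep(delay_s)
        yield f"tok{i}"


count, tps = measure_tps(fake_stream(n=20, delay_s=0.01))
print(f"{count} tokens at {tps:.1f} tokens/second")
```

Note that timing a real stream this way includes the wait for the first token, so the result blends prompt processing and generation. Short prompts keep that effect small.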

Conclusion

The Apple M1 chip runs the Llama 3 8B model at usable speeds: roughly 87 tokens/second for processing and just under 10 tokens/second for generation in the Q4_K_M configuration. That processing speed is respectable, but the generation speed might not be ideal for all applications. Understanding the strengths and limitations of the M1 and the Llama 3 8B model will allow you to make informed choices about your projects.

FAQ - Frequently Asked Questions

What is quantization?

Quantization is like compressing data for more efficient storage and processing. Imagine you have a high-resolution image; quantization would be like reducing the number of colors used, making the file smaller but maintaining the essence of the image. In LLMs, quantization reduces the number of bits used to represent the model's parameters, resulting in smaller model sizes and faster processing, but potentially with some loss of accuracy.
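As a toy illustration of the idea, the sketch below rounds a handful of made-up weights to 4-bit integers using a single shared scale, then converts them back. This is a deliberate simplification: formats such as Q4_K_M quantize weights in small blocks with their own scaling data, and the function names here are invented for the example.

```python
def quantize_4bit(weights: list[float]) -> tuple[list[int], float]:
    """Map floats to integers in [-8, 7] using one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    quantized = [max(-8, min(7, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized: list[int], scale: float) -> list[float]:
    """Recover approximate floats from the 4-bit integers."""
    return [v * scale for v in quantized]


weights = [0.12, -0.53, 0.91, -0.07, 0.33]  # made-up example values
q, scale = quantize_4bit(weights)
restored = dequantize(q, scale)
max_err = max(abs(a - b) for a, b in zip(weights, restored))

print("4-bit integers:", q)
print("restored:", [round(r, 2) for r in restored])
print(f"largest round-trip error: {max_err:.3f}")
```

Each restored value lands within half a scale step of the original, which is the "some loss of accuracy" traded for a much smaller representation.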

Why is generation speed important?

Generation speed refers to how quickly the LLM can produce text based on the model's understanding. Think of it as the speed at which a writer can form sentences and paragraphs. Faster generation speed means more responsive and interactive experiences in applications like chatbots, code generation, and creative writing tools.

What are the alternatives to using a local LLM like Llama3 8B?

You can also use cloud-based LLMs like those offered by Google, Microsoft, or Amazon. These services provide powerful LLMs with faster processing and generation speeds but require an internet connection and may incur costs.

Keywords

Apple M1, Llama3 8B, LLM, token generation speed, performance benchmarks, quantization, Q4_K_M, GPU Cores, processing, generation, practical recommendations, code generation, text summarization, conversational AI, limitations, workaround, prompt optimization, model selection, cloud-based LLMs.