How Fast Can Apple M1 Run Llama3 70B?

The world of large language models (LLMs) is buzzing with excitement, and for good reason! These powerful AI systems can do amazing things, from generating creative text and translating languages to answering your questions in a comprehensive and informative way.

But let's be honest, LLMs can be computationally hungry beasts. Running them locally on your own machine can be a challenge, especially for behemoths like the 70-billion-parameter Llama3 model. So, if you're thinking about putting Llama3 70B to work on your Apple M1, you're probably wondering: is it even possible? And if so, how fast can it run?

This article examines what the available token generation speed benchmarks say about running LLMs on the Apple M1, explains why Llama3 70B is a special case, and offers practical recommendations to make your LLM journey smoother.

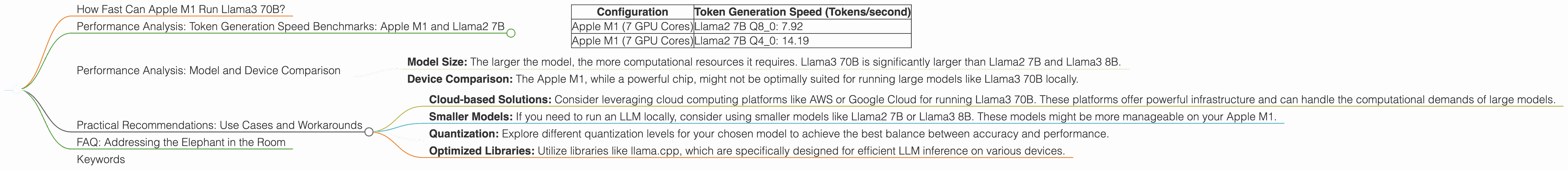

Performance Analysis: Token Generation Speed Benchmarks: Apple M1 and Llama2 7B

Token generation speed is a key metric for evaluating LLM performance. It measures how many new tokens (roughly, words or word fragments) the model produces per second. Faster token generation means quicker responses and a more responsive user experience.
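
To make the metric concrete, here is a minimal sketch that converts a tokens-per-second rate into an approximate wait time. The two rates are the Llama2 7B figures reported in this article; the 250-token response length and the function name `seconds_to_generate` are illustrative assumptions, and the calculation ignores the time spent processing the prompt.

```python
# Convert a token generation rate into an approximate wait time.
# The rates are the Llama2 7B figures reported in this article;
# the 250-token response length is an illustrative assumption.

def seconds_to_generate(num_tokens: int, tokens_per_second: float) -> float:
    """Return the time needed to generate num_tokens at a steady rate."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return num_tokens / tokens_per_second

RESPONSE_TOKENS = 250  # assumed length of a medium-sized answer

for label, rate in [("Llama2 7B Q8_0", 7.92), ("Llama2 7B Q4_0", 14.19)]:
    wait = seconds_to_generate(RESPONSE_TOKENS, rate)
    print(f"{label}: {wait:.1f} s for {RESPONSE_TOKENS} tokens")
```

Under these assumptions, the same answer takes roughly 32 seconds at the slower rate and roughly 18 seconds at the faster one, which is the difference a user actually feels.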

Let's take a look at the token generation speed benchmarks for Apple M1 and Llama2 7B, as measured in tokens per second.

| Configuration | Model and Quantization | Token Generation Speed (tokens/second) |
|---|---|---|
| Apple M1 (7 GPU Cores) | Llama2 7B Q8_0 | 7.92 |
| Apple M1 (7 GPU Cores) | Llama2 7B Q4_0 | 14.19 |

Important note: There is no data available for Llama3 70B on Apple M1. This means that we cannot provide specific token generation speed benchmarks for the combination of Apple M1 and Llama3 70B.

What does this mean?

- Quantization Matters: The table shows that the quantization level changes the speed of the same model on the same chip. Llama2 7B on Apple M1 generates 14.19 tokens per second with Q4_0 versus 7.92 with Q8_0, roughly 1.8 times faster.

- Q8_0 vs. Q4_0: Quantization reduces the size of the model, trading off some accuracy for faster performance. Q4_0 stores each weight at lower precision than Q8_0, so the model is smaller and less data has to be read from memory for every generated token, which is consistent with the higher token generation speed for Q4_0.

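- Limitations of Data: The lack of data for Llama3 70B on Apple M1 most likely reflects a hardware limit rather than an oversight. The M1 is available with at most 16 GB of unified memory, while the weights of a 70-billion-parameter model take roughly 35 GB or more even at 4-bit precision, so running this model locally might not be feasible on this chip.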
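
The memory argument can be checked with a rough back-of-the-envelope calculation. The sketch below is only an estimate under stated assumptions that are not from the benchmark data: nominal parameter counts (7, 8, and 70 billion), llama.cpp block formats of about 4.5 bits per weight for Q4_0 and 8.5 bits per weight for Q8_0, and a 16 GB machine. It counts the weights only and ignores the context cache, the operating system, and any limit on how much memory the GPU may use.

```python
# Rough weight-memory estimate for a quantized model.
# Assumptions (not from the article's data): nominal parameter counts,
# about 4.5 bits/weight for Q4_0 and 8.5 bits/weight for Q8_0,
# and a machine with 16 GB of memory.

BITS_PER_WEIGHT = {"Q4_0": 4.5, "Q8_0": 8.5}

def weight_memory_gb(num_params: float, quant: str) -> float:
    """Approximate size of the weights alone, in gigabytes (10^9 bytes)."""
    return num_params * BITS_PER_WEIGHT[quant] / 8 / 1e9

MEMORY_GB = 16

for name, params in [("Llama2 7B", 7e9), ("Llama3 8B", 8e9), ("Llama3 70B", 70e9)]:
    for quant in BITS_PER_WEIGHT:
        size = weight_memory_gb(params, quant)
        verdict = "fits" if size < MEMORY_GB else "does not fit"
        print(f"{name} {quant}: ~{size:.1f} GB, {verdict} in {MEMORY_GB} GB")
```

With these numbers the 7B and 8B models fit at either quantization level, while Llama3 70B needs about 39 GB for its weights at Q4_0 alone. In practice the usable budget is smaller than the installed memory, so "fits" here is an optimistic upper bound.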

Performance Analysis: Model and Device Comparison

No benchmark data exists for Llama3 70B on the Apple M1, so a direct comparison with other devices is not possible. The closest available context comes from existing benchmarks for smaller models, Llama2 7B and Llama3 8B, which give a sense of the performance landscape.

Key takeaways:

- Model Size: The larger the model, the more memory and compute it requires. With 70 billion parameters, Llama3 70B is about ten times the size of Llama2 7B and almost nine times the size of Llama3 8B.

- Device Comparison: The Apple M1, while a powerful chip, might not be optimally suited for running large models like Llama3 70B locally.

Practical Recommendations: Use Cases and Workarounds

While it's great to dream of running Llama3 70B on your Apple M1, reality might be a bit more complex. The good news is that there are alternative approaches and use cases that you can explore.

Here are some recommendations:

- Cloud-based Solutions: Consider leveraging cloud computing platforms like AWS or Google Cloud for running Llama3 70B. These platforms offer powerful infrastructure and can handle the computational demands of large models.

- Smaller Models: If you need to run an LLM locally, consider using smaller models like Llama2 7B or Llama3 8B. These models might be more manageable on your Apple M1.

- Quantization: Explore different quantization levels for your chosen model to achieve the best balance between accuracy and performance.

- Optimized Libraries: Utilize libraries like llama.cpp, which are specifically designed for efficient LLM inference on various devices.
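
Whichever model, quantization level, and library you settle on, it is worth measuring the speed on your own machine. The following is a minimal timing sketch, not any library's API: `measure_tokens_per_second` and `fake_generate` are hypothetical names, and `fake_generate` is a stand-in that only sleeps. To benchmark a real setup you would replace it with a small wrapper around your inference library that returns the number of tokens it produced.

```python
import time
from typing import Callable

def measure_tokens_per_second(generate: Callable[[str, int], int],
                              prompt: str, max_tokens: int) -> float:
    """Time one call to generate() and return generated tokens per second.

    generate(prompt, max_tokens) must return the number of tokens it produced.
    """
    start = time.perf_counter()
    produced = generate(prompt, max_tokens)
    elapsed = time.perf_counter() - start
    return produced / elapsed if elapsed > 0 else float("inf")

def fake_generate(prompt: str, max_tokens: int) -> int:
    """Hypothetical stand-in for a real model: 'produces' one token per 2 ms."""
    for _ in range(max_tokens):
        time.sleep(0.002)
    return max_tokens

rate = measure_tokens_per_second(fake_generate, "Hello", max_tokens=50)
print(f"{rate:.1f} tokens/second")
```

This simple version times the whole call, so with a real model the result also includes prompt processing. Dedicated benchmarks usually report prompt processing and token generation separately.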

FAQ: Addressing the Elephant in the Room

Let's tackle some common questions you might have about LLMs and devices:

Q: What is quantization?

A: Quantization is a technique used to reduce the size and computational requirements of a model. Think of it like compressing a large file, but for your LLM. It essentially reduces the precision of numbers within the model, making it lighter and faster.
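
As a toy illustration of what "reducing the precision of numbers" means, the sketch below rounds a handful of made-up weights to 8-bit integers that share one scale factor, then converts them back. This is a deliberately simplified scheme with hypothetical function names; the formats used by real inference libraries are more elaborate, for example quantizing weights in small blocks with a separate scale per block.

```python
def quantize_int8(values: list[float]) -> tuple[list[int], float]:
    """Map floats to integers in [-127, 127] with one shared scale factor."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    return [round(v / scale) for v in values], scale

def dequantize(quantized: list[int], scale: float) -> list[float]:
    """Recover approximate floats from the integers and the scale."""
    return [q * scale for q in quantized]

weights = [0.12, -0.53, 0.98, -0.07, 0.31]  # made-up example values
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(q)
print(f"largest rounding error: {max_error:.4f}")
```

Each weight now needs one byte instead of the two or four bytes of a typical floating-point format, and the price is a small rounding error of at most half the scale factor per weight.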

Q: Why is it difficult to run large language models locally?

A: Large language models are incredibly complex and require a lot of processing power and memory. Your computer might not have the resources to run them efficiently, especially for models as large as Llama3 70B.

Q: What are the implications of not being able to run Llama3 70B on Apple M1?

A: The lack of data suggests that running Llama3 70B locally on Apple M1 might be challenging. You might need to consider alternative approaches, such as cloud-based solutions, to work with this large model.

Q: What's the future of running large language models on local devices?

A: The field of LLM optimization is rapidly evolving. Developments in hardware, software, and quantization techniques might pave the way for running larger models on more accessible devices in the future.
