How Fast Can Apple M1 Run Llama2 7B?

Introduction: Unleashing Local LLMs on Apple M1

You've heard the buzz about Large Language Models (LLMs) like Llama2 and their amazing capabilities. But what if you could run these LLMs locally on your own hardware without relying on cloud services? This is where exploring the potential of your Apple M1 chip comes in. We'll delve into the world of local LLM inference and see just how fast your M1 can handle the powerful Llama2 7B model.

This article will guide you through the performance landscape for running Llama2 7B on an Apple M1 chip, breaking down the key factors that influence speed and exploring practical implications. Whether you're a developer, a data enthusiast, or just curious about the limits of your hardware, join us on this journey into the exciting world of local LLM inference!

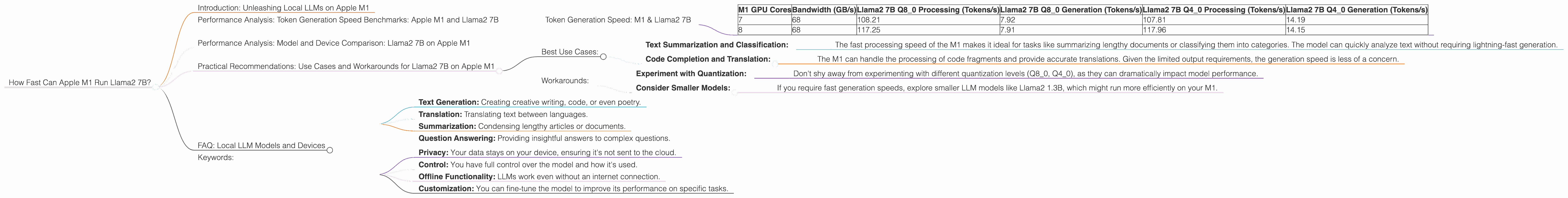

Performance Analysis: Token Generation Speed Benchmarks for Llama2 7B on Apple M1

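The core measure of LLM performance is token throughput: the number of tokens (word fragments, not whole words) the model handles per second. The benchmarks below report two figures. Prompt processing is how fast the model reads the input you give it, and generation is how fast it produces new output tokens. Both matter for real-world applications like text generation, translation, and question answering. Let's dive into the numbers for Llama2 7B on the M1.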

Token Generation Speed: M1 & Llama2 7B

| M1 GPU Cores | Memory Bandwidth (GB/s) | Q8_0 Prompt Processing (tokens/s) | Q8_0 Generation (tokens/s) | Q4_0 Prompt Processing (tokens/s) | Q4_0 Generation (tokens/s) |
|---|---|---|---|---|---|
| 7 | 68 | 108.21 | 7.92 | 107.81 | 14.19 |
| 8 | 68 | 117.25 | 7.91 | 117.96 | 14.15 |
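
The patterns behind the takeaways below can be read straight off the table. This short Python sketch uses only the figures in the table to compute the percentage change from 7 to 8 GPU cores and the Q4_0/Q8_0 speed ratio; the helper names are purely illustrative.

```python
# Figures copied from the table above: (prompt processing, generation) in tokens/s.
BENCH = {
    7: {"Q8_0": (108.21, 7.92), "Q4_0": (107.81, 14.19)},
    8: {"Q8_0": (117.25, 7.91), "Q4_0": (117.96, 14.15)},
}


def pct_change(old: float, new: float) -> float:
    """Percentage change from old to new."""
    return (new - old) / old * 100.0


def core_scaling(quant: str) -> tuple[float, float]:
    """Percentage change in (prompt processing, generation) going from 7 to 8 GPU cores."""
    pp7, tg7 = BENCH[7][quant]
    pp8, tg8 = BENCH[8][quant]
    return pct_change(pp7, pp8), pct_change(tg7, tg8)


def quant_speedup(cores: int) -> tuple[float, float]:
    """Ratio of Q4_0 to Q8_0 throughput for (prompt processing, generation)."""
    pp_q8, tg_q8 = BENCH[cores]["Q8_0"]
    pp_q4, tg_q4 = BENCH[cores]["Q4_0"]
    return pp_q4 / pp_q8, tg_q4 / tg_q8


if __name__ == "__main__":
    for quant in ("Q8_0", "Q4_0"):
        pp, tg = core_scaling(quant)
        print(f"{quant}: 7->8 cores  processing {pp:+.1f}%  generation {tg:+.1f}%")
    for cores in (7, 8):
        pp, tg = quant_speedup(cores)
        print(f"{cores} cores: Q4_0/Q8_0  processing x{pp:.2f}  generation x{tg:.2f}")
```

Run as is, it shows prompt processing gaining roughly 8 to 9% with the eighth GPU core while generation changes by less than 1%, and Q4_0 generating about 1.8 times as fast as Q8_0 with near-identical prompt processing.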

Key Takeaways:

- Fast Prompt Processing, Slower Generation: The M1 processes prompts at roughly 108 to 118 tokens/s, and this figure is essentially the same for Q8_0 and Q4_0. Generation is roughly 8 to 15 times slower (about 8 tokens/s with Q8_0 and 14 tokens/s with Q4_0). Prompt tokens can be processed in parallel batches that keep the GPU busy, whereas each generated token depends on the one before it and requires reading the model's weights from memory again, so generation speed is limited mainly by memory bandwidth.

- Quantization Makes a Difference: Q4_0 nearly doubles generation speed compared with Q8_0 (about 14.2 vs. 7.9 tokens/s), while prompt processing speed barely changes. Think of quantization as a way to compress the model: Q4_0 stores weights in about half the bits of Q8_0, so less data has to move through memory for each generated token, and the model takes up less of your M1's memory.

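- GPU Cores Matter for Processing Only: Going from 7 to 8 GPU cores raises prompt processing speed by about 8 to 9% but leaves generation speed unchanged, since both configurations share the same 68 GB/s memory bandwidth.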
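
Why memory bandwidth caps generation can be checked with simple arithmetic: if every generated token has to stream all of the model's weights from memory once, then tokens/s can be at most bandwidth divided by model size. The sketch below is an estimate under stated assumptions, not a measurement: it assumes Llama2 7B has roughly 6.74 billion parameters and that Q8_0 and Q4_0 follow llama.cpp's block layouts (32 weights plus one 16-bit scale per block, i.e. 34 and 18 bytes per block). It ignores KV-cache reads and any tensors stored in a different format.

```python
PARAMS = 6.74e9          # assumed parameter count of Llama2 7B
BANDWIDTH_GBPS = 68.0    # M1 memory bandwidth from the table (GB/s)

# Assumed llama.cpp block layouts, expressed as bits per weight:
# Q8_0: 32 int8 weights + one fp16 scale = 34 bytes per 32 weights (8.5 bits)
# Q4_0: 32 4-bit weights (16 bytes) + one fp16 scale = 18 bytes per 32 weights (4.5 bits)
BITS_PER_WEIGHT = {"Q8_0": 34 * 8 / 32, "Q4_0": 18 * 8 / 32}

MEASURED_TG = {"Q8_0": 7.91, "Q4_0": 14.15}  # 8-core M1 generation speeds from the table


def model_gb(quant: str, params: float = PARAMS) -> float:
    """Approximate size of the weights in GB (1 GB = 1e9 bytes)."""
    return params * BITS_PER_WEIGHT[quant] / 8 / 1e9


def generation_ceiling(quant: str, bandwidth_gbps: float = BANDWIDTH_GBPS) -> float:
    """Upper bound on tokens/s if each token must read all weights once."""
    return bandwidth_gbps / model_gb(quant)


if __name__ == "__main__":
    for quant, measured in MEASURED_TG.items():
        ceiling = generation_ceiling(quant)
        print(f"{quant}: ~{model_gb(quant):.2f} GB, ceiling ~{ceiling:.1f} tok/s, "
              f"measured {measured} tok/s ({measured / ceiling:.0%} of ceiling)")
```

With these assumptions the ceilings come out near 9.5 tokens/s for Q8_0 and 17.9 tokens/s for Q4_0, so the measured 8-core figures sit at roughly 80% of the bound. That is consistent with generation being bandwidth-bound: an extra GPU core adds compute but not bandwidth, while Q4_0 roughly halves the bytes read per token.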

Performance Analysis: How Llama2 7B on Apple M1 Compares

To truly appreciate the performance of Llama2 7B on your M1, let's see how it stacks up against other LLMs and devices.

Note: We only have benchmark data for Llama2 7B on the two M1 configurations above, not for other models or devices, so a direct comparison is not possible at this time.

Practical Recommendations: Use Cases and Workarounds for Llama2 7B on Apple M1

The data paints a clear picture: your M1 is a viable option for running Llama2 7B, especially for tasks that read much more text than they write, where fast prompt processing matters more than generation speed. Here's how to leverage its strengths:

Best Use Cases:

- Text Summarization and Classification:

- The M1's fast prompt processing makes it well suited to summarizing lengthy documents or classifying them into categories. These tasks read a lot of text but produce only a short summary or label, so they don't require fast generation.

- Code Completion and Translation:

- The M1 can quickly process code fragments or source text. When the expected output is short, such as completing a line of code or translating a sentence, the slower generation speed is less of a concern.
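
Because prompt processing is so much faster than generation on the M1, the mix of input and output tokens largely decides how long a request takes. Here is a rough Python sketch of that trade-off. It assumes the 8-core Q4_0 figures from the table, speeds that stay constant regardless of context length, and no model-loading time; the workloads and their token counts are hypothetical.

```python
PP_TPS = 117.96   # prompt processing, 8-core M1, Q4_0 (tokens/s, from the table)
TG_TPS = 14.15    # generation, 8-core M1, Q4_0 (tokens/s, from the table)


def estimate_seconds(prompt_tokens: int, output_tokens: int,
                     pp_tps: float = PP_TPS,
                     tg_tps: float = TG_TPS) -> tuple[float, float]:
    """Return (total seconds, fraction of that time spent generating)."""
    prefill = prompt_tokens / pp_tps   # time to read the prompt
    decode = output_tokens / tg_tps    # time to generate the answer
    total = prefill + decode
    return total, decode / total


if __name__ == "__main__":
    # Hypothetical workloads; the token counts are illustrative, not measured.
    workloads = {
        "summarize a long document": (2000, 150),
        "classify a paragraph": (300, 5),
        "write a long answer": (100, 1000),
    }
    for name, (prompt, output) in workloads.items():
        total, gen_share = estimate_seconds(prompt, output)
        print(f"{name}: ~{total:.1f} s ({gen_share:.0%} generating)")
```

Under these assumptions, summarizing a 2,000-token document into 150 tokens takes about 28 seconds and classifying a paragraph about 3 seconds, while a 1,000-token answer takes over a minute, almost all of it spent generating.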

Workarounds:

- Experiment with Quantization:

- Experiment with different quantization levels (Q8_0, Q4_0). In the benchmarks above, Q4_0 nearly doubles generation speed over Q8_0, though coarser weights may cost some accuracy.

- Consider Smaller Models:

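- If you need faster generation, explore models with fewer parameters than Llama2 7B. Llama 2 itself comes only in 7B, 13B, and 70B sizes, so this means choosing a different, smaller model. With fewer weights to read for each generated token, such models should generate faster on your M1.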
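
A back-of-the-envelope size estimate helps when choosing a quantization level or a smaller model. This sketch assumes llama.cpp-style bit widths (16 for F16, 8.5 for Q8_0, 4.5 for Q4_0, counting per-block scales), roughly 6.74 billion parameters for Llama2 7B, a hypothetical 3-billion-parameter alternative, and an 8 GB M1. It counts weights only, ignoring the KV cache and other runtime memory, and its relative-speed figure assumes generation is limited by the bytes of weights read per token.

```python
# Assumed bits per weight (llama.cpp-style layouts; F16 has no block overhead).
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}

# 6.74e9 is roughly Llama2 7B; the 3B entry is a hypothetical smaller model.
PARAMS = {"7B": 6.74e9, "3B (hypothetical)": 3.0e9}

RAM_GB = 8.0  # assumed base M1 memory configuration


def weights_gb(params: float, quant: str) -> float:
    """Approximate size of the weights alone in GB (1 GB = 1e9 bytes)."""
    return params * BITS_PER_WEIGHT[quant] / 8 / 1e9


if __name__ == "__main__":
    baseline = weights_gb(PARAMS["7B"], "Q4_0")
    for name, params in PARAMS.items():
        for quant in BITS_PER_WEIGHT:
            size = weights_gb(params, quant)
            # Relative speed assumes tokens/s scales inversely with bytes read per token.
            print(f"{name:>18} {quant}: ~{size:5.2f} GB "
                  f"({size / RAM_GB:.0%} of {RAM_GB:.0f} GB), "
                  f"~{baseline / size:.2f}x the generation speed of 7B Q4_0")
```

By this estimate, F16 Llama2 7B (about 13.5 GB of weights) cannot fit in 8 GB at all, Q4_0 brings it down to about 3.8 GB, and a 3B model at Q4_0 would need about 1.7 GB and could generate a bit more than twice as fast as 7B Q4_0, if the bandwidth assumption holds.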

FAQ: Local LLM Models and Devices

Q: What are LLMs?

A: Large Language Models (LLMs) are sophisticated AI systems that are trained on massive amounts of text data. They can perform a wide range of tasks like:

- Text Generation: Creating creative writing, code, or even poetry.

- Translation: Translating text between languages.

- Summarization: Condensing lengthy articles or documents.

- Question Answering: Providing insightful answers to complex questions.

Q: What is "Quantization" in LLMs?

A: Quantization reduces the number of bits used to store each of the model's weights, for example from 16-bit floating point down to 8 bits (Q8_0) or about 4 bits (Q4_0). It's like compressing a large file into a smaller one. This makes the model smaller and faster, but it might slightly reduce its accuracy.
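
To make the idea concrete, here is a simplified Python sketch of 8-bit block quantization, modeled on llama.cpp's Q8_0 format as we understand it: weights are split into blocks of 32, each block stores one scale (its largest absolute value divided by 127) plus 32 small integers, and the weights are recovered by multiplying back. Real implementations store the scale as a 16-bit float and use optimized code; this version keeps plain Python floats for readability.

```python
import random

BLOCK_SIZE = 32  # weights per block, as assumed for Q8_0


def quantize_block(block: list[float]) -> tuple[float, list[int]]:
    """Map a block of floats to one scale plus int8-range values in [-127, 127]."""
    amax = max(abs(x) for x in block)
    scale = amax / 127.0 if amax > 0 else 1.0
    # Python's round() sends ties to the nearest even integer (C's roundf rounds
    # away from zero); the difference does not matter for this illustration.
    q = [max(-127, min(127, round(x / scale))) for x in block]
    return scale, q


def dequantize_block(scale: float, q: list[int]) -> list[float]:
    """Approximately reconstruct the original floats."""
    return [scale * v for v in q]


if __name__ == "__main__":
    rng = random.Random(0)
    weights = [rng.gauss(0.0, 0.02) for _ in range(BLOCK_SIZE)]
    scale, q = quantize_block(weights)
    restored = dequantize_block(scale, q)
    max_err = max(abs(a - b) for a, b in zip(weights, restored))
    # Storage: 32 float32 weights = 128 bytes; Q8_0-style = 32 int8 + 2-byte scale = 34 bytes.
    print(f"scale={scale:.6f}  max error={max_err:.6f}  (bound {scale / 2:.6f})")
```

Each reconstructed weight differs from the original by at most half a quantization step (scale / 2), which is the small accuracy loss described above.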

Q: What are the benefits of running LLMs locally?

A: Running LLMs locally empowers you with:

- Privacy: Your data stays on your device, ensuring it's not sent to the cloud.

- Control: You have full control over the model and how it's used.

- Offline Functionality: LLMs work even without an internet connection.

- Customization: You can fine-tune the model to improve its performance on specific tasks.

Q: What other Apple devices can run LLMs locally?

A: The M1 chip, found in Macs as well as the 2021 iPad Pro and the iPad Air (5th generation), has proven to be a capable platform for local LLM inference. Other Apple devices, such as the iPhone 14 Pro, can also run smaller LLMs.
