How Fast Can Apple M1 Max Run Llama3 8B?

Introduction

The world of large language models (LLMs) is buzzing with excitement. These powerful AI models can generate human-like text, translate languages, write different kinds of creative content, and answer your questions in an informative way. What's even more exciting is the ability to run them locally on your own device - imagine having your own personal AI assistant right on your computer!

But how fast can your device actually run these LLMs? Can your laptop handle their massive computational demands? In this article, we'll take a deep dive into the performance of Apple's M1 Max chip with the Llama3 8B model, exploring its strengths and limitations.

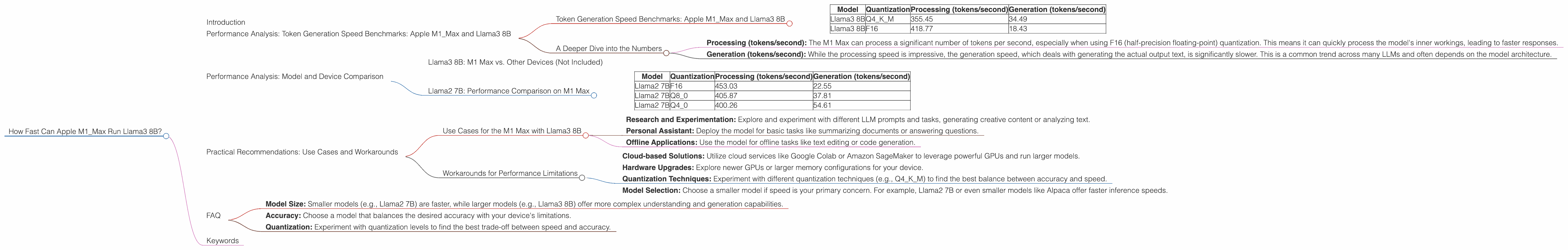

Performance Analysis: Token Generation Speed Benchmarks: Apple M1 Max and Llama3 8B

Let's get down to the nitty-gritty! To understand how well the M1 Max performs, we need to look at its token generation speed. This measures how many tokens (building blocks of text) the model can process per second. Think of it as the model's words-per-minute, but for AI!

Token Generation Speed Benchmarks: Apple M1 Max and Llama3 8B

| Model | Quantization | Processing (tokens/second) | Generation (tokens/second) |
|---|---|---|---|
| Llama3 8B | Q4_K_M | 355.45 | 34.49 |
| Llama3 8B | F16 | 418.77 | 18.43 |

Important Note: These numbers are based on specific configurations. The actual speed may vary depending on the model size, quantization level, and other factors.
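To make these figures more concrete, the sketch below turns them into a rough response-time estimate. It uses only the numbers from the table above; the 500-token prompt and 200-token answer are a hypothetical workload chosen for illustration, and the helper name `estimate_seconds` is our own.

```python
# Benchmark figures copied from the table above (tokens/second).
BENCHMARKS = {
    "Q4_K_M": {"processing": 355.45, "generation": 34.49},
    "F16": {"processing": 418.77, "generation": 18.43},
}


def estimate_seconds(quant: str, prompt_tokens: int, output_tokens: int) -> float:
    """Estimate response time as prompt-processing time plus generation time."""
    speeds = BENCHMARKS[quant]
    prompt_time = prompt_tokens / speeds["processing"]
    generation_time = output_tokens / speeds["generation"]
    return prompt_time + generation_time


if __name__ == "__main__":
    # Hypothetical workload: a 500-token prompt and a 200-token answer.
    for quant in BENCHMARKS:
        total = estimate_seconds(quant, prompt_tokens=500, output_tokens=200)
        print(f"{quant}: about {total:.1f} s")
```

For this workload the estimate comes to roughly 7 seconds with Q4_K_M and roughly 12 seconds with F16, and most of that time is spent on generation rather than on reading the prompt.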

A Deeper Dive into the Numbers

The data reveals some interesting insights:

- Processing (tokens/second): This is the speed at which the model reads the input prompt. The M1 Max handles this quickly: 418.77 tokens/second with F16 (unquantized half-precision floating-point weights) and 355.45 tokens/second with Q4_K_M. Faster prompt processing means a shorter wait before the first word of the response appears.

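- Generation (tokens/second): While the processing speed is impressive, the generation speed, which measures how quickly the model produces the actual output text, is far lower: 34.49 tokens/second with Q4_K_M and 18.43 tokens/second with F16. This gap is common across LLMs, because a prompt can be processed in parallel while output tokens are produced one at a time, and the model's weights have to be read from memory for each new token.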
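A back-of-the-envelope calculation can illustrate why generation tends to be limited by memory rather than raw compute. The sketch below assumes that every generated token requires reading all model weights once, so the speed ceiling is memory bandwidth divided by model size. None of the constants come from the benchmarks above: the parameter count (about 8.03 billion), the average bits per weight, and the 400 GB/s peak bandwidth of the M1 Max are assumptions used for illustration only.

```python
PARAMS = 8.03e9             # assumed parameter count for Llama3 8B
PEAK_BANDWIDTH_GB_S = 400   # assumed M1 Max peak memory bandwidth (GB/s)

# Assumed average bits stored per weight; approximations, not measured values.
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.85}


def model_size_gb(quant: str) -> float:
    """Approximate size of the weights in gigabytes."""
    return PARAMS * BITS_PER_WEIGHT[quant] / 8 / 1e9


def generation_ceiling(quant: str) -> float:
    """Rough upper bound on tokens/second if each token reads all weights once."""
    return PEAK_BANDWIDTH_GB_S / model_size_gb(quant)


if __name__ == "__main__":
    for quant in BITS_PER_WEIGHT:
        print(f"{quant}: ~{model_size_gb(quant):.1f} GB, "
              f"ceiling ~{generation_ceiling(quant):.0f} tokens/s")
```

Under these assumptions the ceiling works out to roughly 25 tokens/second for F16 and roughly 82 for Q4_K_M. The measured generation speeds (18.43 and 34.49) sit below both ceilings, which is what this simple model would predict, although it ignores many real-world overheads.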

Performance Analysis: Model and Device Comparison

Now that we've established a baseline with the M1 Max and Llama3 8B, let's see how it compares to other devices and models!

Llama3 8B: M1 Max vs. Other Devices (Not Included)

Unfortunately, we don't have performance data for other devices running Llama3 8B. This means we can't compare the M1 Max's performance directly in this article.

Llama2 7B: Performance Comparison on M1 Max

We can, however, take a look at Llama2 7B, a similar-sized LLM, to get a general sense of how model choice and quantization affect speed on the M1 Max. The table below shows the token speeds for Llama2 7B on the M1 Max with different quantization levels.

| Model | Quantization | Processing (tokens/second) | Generation (tokens/second) |
|---|---|---|---|
| Llama2 7B | F16 | 453.03 | 22.55 |
| Llama2 7B | Q8_0 | 405.87 | 37.81 |
| Llama2 7B | Q4_0 | 400.26 | 54.61 |

Key Observations:

- Quantization and Performance: Generation speed on the M1 Max rises as the weights are quantized more aggressively, from 22.55 tokens/second with F16 to 37.81 with Q8_0 and 54.61 with Q4_0. Smaller weights reduce the memory footprint, so less data has to be read for each token. Prompt processing moves slightly the other way, dropping from 453.03 tokens/second with F16 to 400.26 with Q4_0.

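- Llama2 7B vs. Llama3 8B: Llama2 7B generates text noticeably faster than Llama3 8B on the M1 Max (22.55 vs. 18.43 tokens/second with F16). The 4-bit results (54.61 with Q4_0 vs. 34.49 with Q4_K_M) use different quantization formats, so they are not a like-for-like comparison. The gap highlights the greater resource demands of the newer, larger Llama3 model.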
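The observations above can be checked with a few lines of arithmetic. This sketch uses only the generation speeds from the two tables; the `speedup` helper is our own name.

```python
# Generation speeds (tokens/second) copied from the tables above.
LLAMA2_7B = {"F16": 22.55, "Q8_0": 37.81, "Q4_0": 54.61}
LLAMA3_8B = {"F16": 18.43, "Q4_K_M": 34.49}


def speedup(fast: float, slow: float) -> float:
    """Return how many times faster `fast` is than `slow`."""
    return fast / slow


if __name__ == "__main__":
    print(f"Llama2 7B, Q8_0 vs F16: {speedup(LLAMA2_7B['Q8_0'], LLAMA2_7B['F16']):.2f}x")
    print(f"Llama2 7B, Q4_0 vs F16: {speedup(LLAMA2_7B['Q4_0'], LLAMA2_7B['F16']):.2f}x")
    print(f"Llama3 8B, Q4_K_M vs F16: {speedup(LLAMA3_8B['Q4_K_M'], LLAMA3_8B['F16']):.2f}x")
    print(f"F16, Llama2 7B vs Llama3 8B: {speedup(LLAMA2_7B['F16'], LLAMA3_8B['F16']):.2f}x")
```

In these benchmarks, 4-bit quantization roughly doubles generation speed compared with F16 (about 2.4x for Llama2 7B and about 1.9x for Llama3 8B), while Llama2 7B is about 1.2x faster than Llama3 8B at F16.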

Practical Recommendations: Use Cases and Workarounds

Now that we have a clearer picture of the M1 Max's performance with Llama3 8B, let's discuss some practical use cases and workarounds.

Use Cases for the M1 Max with Llama3 8B

While the M1 Max might struggle to handle heavy-duty tasks like live chatbots or real-time text generation, it can still be a valuable tool for:

- Research and Experimentation: Explore and experiment with different LLM prompts and tasks, generating creative content or analyzing text.

- Personal Assistant: Deploy the model for basic tasks like summarizing documents or answering questions.

- Offline Applications: Use the model for offline tasks like text editing or code generation.

Workarounds for Performance Limitations

If you need more power and speed, consider the following workarounds:

- Cloud-based Solutions: Utilize cloud services like Google Colab or Amazon SageMaker to leverage powerful GPUs and run larger models.

- Hardware Upgrades: Explore newer GPUs or larger memory configurations for your device.

- Quantization Techniques: Experiment with different quantization techniques (e.g., Q4_K_M) to find the best balance between accuracy and speed.

- Model Selection: Choose a smaller model if speed is your primary concern. For example, Llama2 7B generated text faster than Llama3 8B in the benchmarks above, and models with fewer parameters will generally be faster still.

FAQ

Q: What is quantization?

A: Quantization is a technique used to reduce the size of LLM models by using smaller data types (like 8-bit or 4-bit integers) instead of 16-bit or 32-bit floating-point numbers. Think of it as condensing information into smaller packages without losing too much detail. This makes models faster and allows them to run on less powerful devices.
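The idea can be shown with a toy example. The sketch below applies simple symmetric 8-bit quantization to a handful of made-up weights and then restores them. It is a simplified illustration only: the formats named in this article (Q8_0, Q4_0, Q4_K_M) work on blocks of weights with per-block scales and are not reproduced here, and the function names are our own.

```python
def quantize_int8(values):
    """Symmetric 8-bit quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs else 1.0
    quantized = [round(v / scale) for v in values]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate floats from the stored integers and the scale."""
    return [q * scale for q in quantized]


# Made-up example weights, for illustration only.
weights = [0.12, -0.5, 0.33, 0.9, -0.07]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)

# The rounding error per weight is at most half of one quantization step.
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(q)
print(f"largest rounding error: {max_error:.5f}")
```

Each weight is now stored as a small integer plus one shared scale factor, at the cost of a small rounding error. That is the "smaller packages without losing too much detail" trade-off in miniature.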

Q: What is token generation speed?

A: Token generation speed refers to how many tokens a model can produce per second. A faster model generates text more quickly and responds to requests sooner, resulting in a more seamless user experience.
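If you want to measure this yourself, the basic approach is to count tokens and divide by elapsed time. The sketch below is a minimal, library-independent version: `fake_generator` is a hypothetical stand-in that simply sleeps between tokens, and in practice you would pass in whatever streaming call your own inference setup provides.

```python
import time
from typing import Callable, Iterable


def measure_tokens_per_second(generate: Callable[[], Iterable[str]]) -> float:
    """Consume a token stream and return the number of tokens produced per second."""
    start = time.perf_counter()
    count = sum(1 for _ in generate())
    elapsed = time.perf_counter() - start
    return count / elapsed if elapsed > 0 else float("inf")


def fake_generator(n_tokens: int = 20, delay: float = 0.005):
    """Hypothetical stand-in for a model: yields one token every `delay` seconds."""
    for i in range(n_tokens):
        time.sleep(delay)
        yield f"tok{i}"


if __name__ == "__main__":
    rate = measure_tokens_per_second(lambda: fake_generator())
    print(f"{rate:.1f} tokens/second")
```

Measuring over a longer output and repeating the run a few times gives a more stable figure than a single short sample.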

Q: How do I choose the right LLM model?

A: Consider your needs and device capabilities:

- Model Size: Smaller models (e.g., Llama2 7B) are faster, while larger models (e.g., Llama3 8B) offer more complex understanding and generation capabilities.

- Accuracy: Choose a model that balances the desired accuracy with your device's limitations.

- Quantization: Experiment with quantization levels to find the best trade-off between speed and accuracy.

Q: What are some alternative devices for running LLMs?

A: While this article focuses on the M1 Max, other devices, like NVIDIA GPUs (RTX 40 series, etc.), offer excellent performance for running LLMs.

Keywords

Apple M1 Max, Llama3 8B, LLM, Large Language Model, Performance, Token Generation, Quantization, F16, Q4_K_M, Processing Speed, Generation Speed, GPU, Device, Use Cases, Workarounds, Cloud, Hardware, Model Selection, Practical Recommendations, FAQ, Device Deep Dive