How Fast Can Apple M1 Max Run Llama3 70B?

Introduction

The world of large language models (LLMs) is rapidly evolving, with new models and capabilities emerging all the time. These models, capable of generating text, translating languages, and answering questions, are changing how we interact with computers. But they are computationally demanding and require significant resources to run. This raises the question: how fast can you run these models on your own machine, and what are the trade-offs involved?

In this article, we'll focus on the performance of the Apple M1 Max chip, a powerful processor known for its high-performance graphics and machine learning capabilities. We'll take a close look at how this chip handles Llama3 70B, a large language model known for its massive size and impressive capabilities.

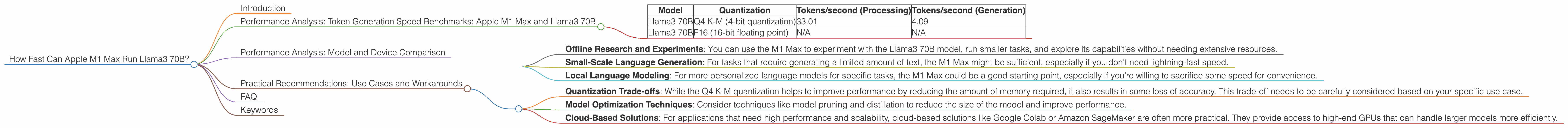

Performance Analysis: Token Generation Speed Benchmarks: Apple M1 Max and Llama3 70B

Let's get down to the nitty-gritty: how fast does the Apple M1 Max perform when generating text using the Llama3 70B model?

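The table below provides key performance metrics, measured in tokens per second. A token is a piece of text, often a word or part of a word, so these figures indicate roughly how much text the model can handle each second. "Processing" is the speed at which the model reads the input prompt, and "Generation" is the speed at which it produces new output tokens.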

| Model | Quantization | Tokens/second (Processing) | Tokens/second (Generation) |
|---|---|---|---|
| Llama3 70B | Q4_K_M (4-bit quantization) | 33.01 | 4.09 |
| Llama3 70B | F16 (16-bit floating point) | N/A | N/A |

Note: No data was available for prompt processing or token generation with Llama3 70B in the F16 format on the Apple M1 Max. At 16 bits per weight, a 70B-parameter model most likely does not fit in the chip's unified memory.
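To see why the F16 row is empty, it helps to estimate how much memory the weights alone need. The sketch below is a back-of-the-envelope calculation, not a measurement: it assumes a nominal 70 billion parameters and roughly 4.8 effective bits per weight for a Q4_K_M-style format (an approximation, since such formats also store per-block scaling data). The largest M1 Max configuration ships with 64 GB of unified memory.

```python
# Rough estimate of weight memory for a 70B-parameter model.
# Assumptions (not taken from the benchmark table): a nominal 70e9
# parameters, and ~4.8 effective bits per weight for a 4-bit format.

def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Return approximate weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9

N_PARAMS = 70e9           # nominal parameter count implied by "70B"
M1_MAX_MAX_MEMORY_GB = 64  # largest unified-memory option on the M1 Max

f16_gb = weight_memory_gb(N_PARAMS, 16)
q4_gb = weight_memory_gb(N_PARAMS, 4.8)

for label, size in [("F16", f16_gb), ("4-bit", q4_gb)]:
    fits = "fits" if size < M1_MAX_MAX_MEMORY_GB else "does not fit"
    print(f"{label}: ~{size:.0f} GB of weights, {fits} in {M1_MAX_MAX_MEMORY_GB} GB")
```

Under these assumptions the F16 weights need about 140 GB, while the 4-bit weights need about 42 GB, which leaves some room for the context cache and the operating system.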

Key Takeaways:

- Respectable Speed for Processing: Despite its massive size, the Llama3 70B model processes prompts at 33.01 tokens per second using Q4_K_M quantization on the M1 Max. This is still significantly slower than the processing speeds seen with smaller models, like Llama2 7B.

- Limited Generation Speed: This is where things get interesting. The Llama3 70B model's token generation speed is 4.09 tokens per second, roughly 8 times slower than its processing speed. Prompt tokens can be processed together in batches, but output tokens are produced one at a time, and each one requires a full pass through the model. As a result, the model reads a prompt fairly quickly but takes considerable time to write a response.

Think of it this way: The processing speed is like speed-reading a book—you can quickly process a lot of words. The generation speed is like writing the same book—it will take you much longer to produce the actual words.
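To make the two numbers concrete, here is a small sketch that estimates how long a single request would take using the speeds from the table. The prompt and output lengths are hypothetical values chosen for illustration, and the estimate assumes both speeds stay constant regardless of context length, which is a simplification.

```python
# Estimate request latency from the benchmark speeds in the table above.
PROMPT_TPS = 33.01     # prompt processing speed (tokens/second)
GENERATION_TPS = 4.09  # token generation speed (tokens/second)

def estimate_seconds(prompt_tokens: int, output_tokens: int) -> tuple[float, float]:
    """Return (time to read the prompt, time to write the output) in seconds."""
    prefill = prompt_tokens / PROMPT_TPS
    decode = output_tokens / GENERATION_TPS
    return prefill, decode

# Hypothetical request: a 500-token prompt and a 200-token answer.
prefill, decode = estimate_seconds(prompt_tokens=500, output_tokens=200)
print(f"Prompt processing: {prefill:.1f} s")
print(f"Generation:        {decode:.1f} s")
print(f"Total:             {prefill + decode:.1f} s")
```

For this example the prompt takes about 15 seconds to process and the answer about 49 seconds to generate, so generation dominates even though the output is shorter than the prompt.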

Performance Analysis: Model and Device Comparison

Let's take a step back and see how the Apple M1 Max compares to other devices and LLMs.

M1 Max vs. Other Devices:

The Apple M1 Max is a powerful chip, but it is not designed for the extreme demands of very large LLMs.

For instance, a high-end data center GPU like the NVIDIA A100 can achieve significantly higher token generation speeds for Llama3 70B. Figures above 100 tokens per second have been cited, though the actual number depends heavily on the quantization level, batch size, and inference software.

However, the M1 Max's low power consumption and cost make it an attractive option for running smaller LLMs and for quick experimentation.

Llama3 70B vs. Smaller LLMs:

As we've discussed, the Llama3 70B model's large size significantly impacts its performance. Smaller LLMs, like Llama2 7B, achieve drastically faster results with similar devices.

For example, on the M1 Max, Llama2 7B can achieve generation speeds exceeding 50 tokens/second using Q4 quantization. That is about 12 times faster than the 4.09 tokens/second of Llama3 70B on the same device at a comparable quantization level.
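One rough way to see why model size dominates generation speed is to treat generation as limited by memory bandwidth: producing each new token requires reading approximately all of the weights once. The sketch below assumes a peak memory bandwidth of 400 GB/s for the M1 Max and approximate 4-bit weight sizes of 42 GB (70B) and 4 GB (7B). These figures are assumptions for illustration, not measurements from this article, and the estimate ignores compute time, the context cache, and software overhead.

```python
# Back-of-the-envelope ceiling on generation speed, assuming each token
# requires one full read of the weights from memory.
M1_MAX_BANDWIDTH_GBPS = 400.0  # assumed peak memory bandwidth (GB/s)

def decode_upper_bound_tps(model_gb: float,
                           bandwidth_gbps: float = M1_MAX_BANDWIDTH_GBPS) -> float:
    """Upper bound on tokens/second if memory bandwidth is the only limit."""
    return bandwidth_gbps / model_gb

# Assumed approximate sizes of the 4-bit weights, in GB.
models = {"Llama3 70B (4-bit)": 42.0, "Llama2 7B (4-bit)": 4.0}

for name, size_gb in models.items():
    bound = decode_upper_bound_tps(size_gb)
    print(f"{name}: at most ~{bound:.1f} tokens/s")

# Ratio of the generation speeds quoted in the text.
measured_ratio = 50 / 4.09
print(f"Measured speed ratio (7B vs 70B): ~{measured_ratio:.1f}x")
```

The measured speeds sit below these ceilings, as expected, and the ratio between the two ceilings (about 10x) is in the same range as the roughly 12x gap quoted above.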

Practical Recommendations: Use Cases and Workarounds

So, when would you use the Apple M1 Max with the Llama3 70B model?

It's not ideal for real-time applications that require fast response times, like chatbots or interactive text generation.

However, the M1 Max can still be useful for:

- Offline Research and Experiments: You can use the M1 Max to experiment with the Llama3 70B model, run smaller tasks, and explore its capabilities without needing extensive resources.

- Small-Scale Language Generation: For tasks that require generating a limited amount of text, the M1 Max might be sufficient, especially if you don't need lightning-fast speed.

- Local Language Modeling: For more personalized language models for specific tasks, the M1 Max could be a good starting point, especially if you're willing to sacrifice some speed for convenience.

Workarounds and Considerations:

- Quantization Trade-offs: Q4_K_M quantization improves performance by reducing the amount of memory the model requires, but it also results in some loss of accuracy. Weigh this trade-off against the needs of your specific use case.

- Model Optimization Techniques: Consider techniques like model pruning and distillation to reduce the size of the model and improve performance.

- Cloud-Based Solutions: For applications that need high performance and scalability, cloud-based solutions like Google Colab or Amazon SageMaker are often more practical. They provide access to high-end GPUs that can handle larger models more efficiently.

FAQ

Q: What is quantization? A: Quantization is a technique used to compress the size of a model by converting its weights (the numbers that represent the model's knowledge) from larger, more precise data types (like 32-bit floating-point) to smaller data types (like 4-bit integers). This significantly reduces the amount of memory required to run the model, but it can also slightly degrade the model's accuracy.

Q: What's the difference between processing and generation speed? A: Processing speed refers to how quickly a model reads the input prompt before it starts responding, while generation speed refers to how quickly it produces new output tokens, one after another.

Q: Is the Apple M1 Max suitable for running LLMs? A: The Apple M1 Max is a powerful chip, but it's not ideal for running the largest LLMs. It shines when handling smaller models or for tasks that don't require super-fast text generation.
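To illustrate the quantization answer above, here is a deliberately simplified sketch of 4-bit quantization applied to one small block of weights. It uses plain symmetric rounding with a single scale per block; this is not the actual Q4_K_M scheme, which is more elaborate, and the weight values are made up for the example.

```python
# Toy symmetric 4-bit quantization of one block of weights.
# Simplified illustration only; real formats such as Q4_K_M differ.

def quantize_block(weights: list[float], bits: int = 4) -> tuple[list[int], float]:
    """Map floats to signed integers in [-8, 7] plus one shared scale."""
    qmax = 2 ** (bits - 1) - 1                       # 7 for 4 bits
    scale = max(abs(w) for w in weights) / qmax
    if scale == 0:                                   # all-zero block
        scale = 1.0
    q = [max(-qmax - 1, min(qmax, round(w / scale))) for w in weights]
    return q, scale

def dequantize_block(q: list[int], scale: float) -> list[float]:
    """Recover approximate floats from the integers and the scale."""
    return [v * scale for v in q]

# Hypothetical weights for demonstration.
weights = [0.12, -0.53, 0.91, -0.07, 0.33, -0.88, 0.02, 0.64]
q, scale = quantize_block(weights)
restored = dequantize_block(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("quantized:", q)
print("max rounding error:", round(max_error, 4))
```

Each weight now needs only 4 bits plus a share of the block's scale, and the rounding error per weight is at most half of the scale. That small error is the source of the accuracy loss mentioned in the answer.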

Keywords

Apple M1 Max, Llama3 70B, Large Language Models, LLM, Token Generation Speed, Performance Analysis, Quantization, Q4_K_M, F16, GPU, NVIDIA A100, Model Size, Processing Speed, Generation Speed, Use Cases, Workarounds, Model Optimization, Cloud-Based Solutions