How Fast Can Apple M1 Max Run Llama2 7B?

Introduction: The Rise of Local LLMs and the Apple M1 Max

The world of large language models (LLMs) is growing fast, and with it the demand for running models locally: directly on your own device, without relying on cloud-based services. Local inference gives you more control and privacy, and it removes network round trips, which helps with real-time interaction. But how fast can a powerful device like the Apple M1 Max handle the demands of a model like Llama2 7B?

This article delves into the performance of Llama2 7B on the Apple M1 Max, exploring the factors that influence speed and revealing insights into how this combination can be used for various applications. We'll unravel the complex relationship between device specifications, model size, and quantization techniques, and ultimately provide developers with practical recommendations.

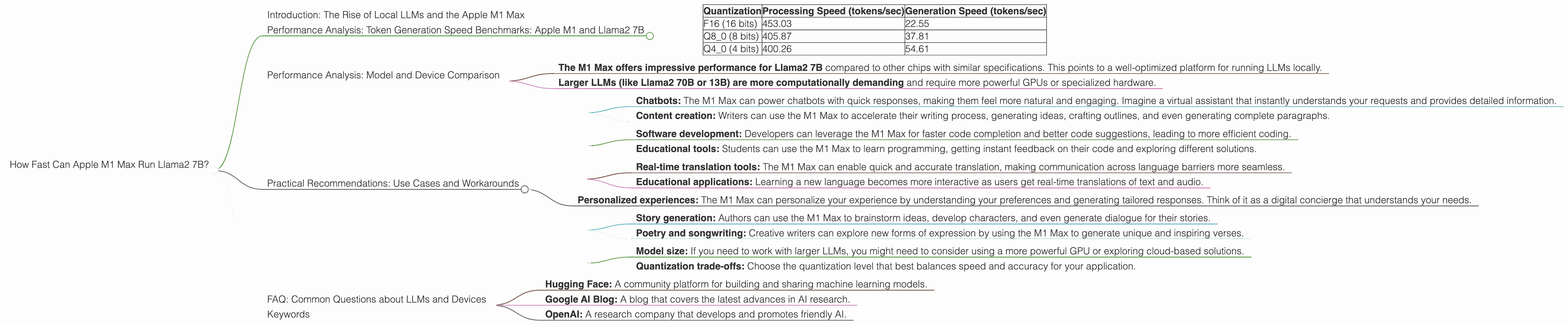

Performance Analysis: Token Generation Speed Benchmarks: Apple M1 Max and Llama2 7B

Let's dive into the heart of the matter - the speed at which the Apple M1 Max can generate tokens using Llama2 7B. We'll analyze the benchmarks based on varying quantization levels.

Quantization is a technique that reduces the size of a model by storing its weights with fewer bits. Smaller weights mean less data to move through memory for every generated token, so this can lead to significant speedups, particularly on devices with limited resources. The cost is some loss of precision. Imagine it like this: you can keep a whole library of full-length books (full precision), or you can swap them for abridged editions that take up far less shelf space but lose some detail (quantization).
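To make the idea concrete, here is a minimal Python sketch of symmetric block quantization. It is a simplified illustration, not the exact storage format used for Q8_0 or Q4_0, and the function names and sample weights are hypothetical.

```python
def quantize_block(values, bits):
    """Quantize a block of floats to signed integers that share one scale."""
    qmax = 2 ** (bits - 1) - 1  # 127 for 8 bits, 7 for 4 bits
    largest = max(abs(v) for v in values)
    scale = largest / qmax if largest else 1.0  # avoid dividing by zero
    quants = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return scale, quants


def dequantize_block(scale, quants):
    """Recover approximate floats from the stored integers and scale."""
    return [q * scale for q in quants]


def max_error(values, bits):
    """Largest absolute difference between original and restored values."""
    scale, quants = quantize_block(values, bits)
    restored = dequantize_block(scale, quants)
    return max(abs(a - b) for a, b in zip(values, restored))


weights = [0.12, -0.50, 0.33, 0.07]  # made-up sample weights
for bits in (8, 4):
    print(f"{bits}-bit max error: {max_error(weights, bits):.4f}")
```

Running it shows the 4-bit version reproducing the weights less faithfully than the 8-bit one, which is the accuracy side of the trade-off.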

Here's a breakdown of the token generation speeds for different quantization levels:

| Quantization | Prompt Processing Speed (tokens/sec) | Generation Speed (tokens/sec) |
|---|---|---|
| F16 (16 bits) | 453.03 | 22.55 |
| Q8_0 (8 bits) | 405.87 | 37.81 |
| Q4_0 (4 bits) | 400.26 | 54.61 |

Key observations:

- F16 (16-bit half precision, effectively the unquantized baseline) offers the fastest prompt processing at 453.03 tokens/sec, but the slowest generation at 22.55 tokens/sec.

- Q8_0 (8 bits) and Q4_0 (4 bits) trade a little processing speed for much faster generation. Q4_0 gives up about 12% of F16's processing speed but generates roughly 2.4 times as fast (54.61 vs. 22.55 tokens/sec). This is like switching to a more fuel-efficient car for longer distances.

- The gap between processing and generation speed is large: about 20x for F16 and about 7x for Q4_0. Prompt tokens are processed together in a batch, while output tokens are generated one at a time, so generation speed usually dominates how fast a response feels in real-world applications.
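The figures in the table can be turned into a rough response-time estimate. The sketch below assumes both speeds stay constant regardless of prompt length and ignores model load time, so treat the results as ballpark numbers. The function name and the 512/256 token counts are illustrative choices, not part of the benchmark.

```python
# (prompt processing, generation) speeds in tokens/sec, from the table above
BENCHMARKS = {
    "F16": (453.03, 22.55),
    "Q8_0": (405.87, 37.81),
    "Q4_0": (400.26, 54.61),
}


def estimate_latency(quant, prompt_tokens, output_tokens):
    """Seconds to read the prompt plus seconds to generate the reply."""
    processing_tps, generation_tps = BENCHMARKS[quant]
    return prompt_tokens / processing_tps + output_tokens / generation_tps


for quant in BENCHMARKS:
    seconds = estimate_latency(quant, prompt_tokens=512, output_tokens=256)
    print(f"{quant}: {seconds:.1f} s")
```

For a 512-token prompt and a 256-token reply, this works out to roughly 12.5 s for F16, 8.0 s for Q8_0, and 6.0 s for Q4_0. Almost all of that time is spent in generation.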

Performance Analysis: Model and Device Comparison

While the M1 Max excels with Llama2 7B, it's essential to understand how it compares to other setups.

Unfortunately, there isn't enough data in the public domain to provide a comprehensive comparison with other models and devices. However, we can still glean some insights.

- The benchmarks above suggest the M1 Max is a capable, well-optimized platform for running Llama2 7B locally, although without comparable data for other chips we can't rank it against them.

- Larger models (like Llama2 13B or 70B) are more computationally demanding and need far more memory, so they may require heavier quantization, more powerful GPUs, or specialized hardware.

Practical Recommendations: Use Cases and Workarounds

Now that we know the performance capabilities, let's explore the best use cases for the M1 Max with Llama2 7B:

1. Real-time Text Generation:

- Chatbots: At roughly 55 tokens/sec with Q4_0, the M1 Max generates text faster than most people read, so chatbot replies feel responsive and natural. Imagine a virtual assistant that starts answering your request almost immediately.

- Content creation: Writers can use the M1 Max to accelerate their writing process, generating ideas, crafting outlines, and even generating complete paragraphs.

2. Code Completion and Generation:

- Software development: Developers can leverage the M1 Max for faster code completion and better code suggestions, leading to more efficient coding.

- Educational tools: Students can use the M1 Max to learn programming, getting instant feedback on their code and exploring different solutions.

3. Language Translation:

- Real-time translation tools: The M1 Max can enable quick and accurate translation, making communication across language barriers more seamless.

- Educational applications: Learning a new language becomes more interactive as users get real-time translations of text. (Audio would need a separate speech-to-text step, since Llama2 works on text only.)

4. Personal AI Assistants:

- Personalized experiences: The M1 Max can personalize your experience by understanding your preferences and generating tailored responses. Think of it as a digital concierge that understands your needs.

5. Creative Writing and Storytelling:

- Story generation: Authors can use the M1 Max to brainstorm ideas, develop characters, and even generate dialogue for their stories.

- Poetry and songwriting: Creative writers can explore new forms of expression by using the M1 Max to generate unique and inspiring verses.

Workarounds for limitations:

- Model size: If you need to work with larger LLMs, you might need to consider using a more powerful GPU or exploring cloud-based solutions.

- Quantization trade-offs: Choose the quantization level that best balances speed and accuracy for your application.
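To judge whether a larger model is within reach, a back-of-the-envelope memory estimate helps. The sketch below counts weights only (no context cache or runtime overhead), uses nominal parameter counts, and assumes about 8.5 and 4.5 bits per weight for Q8_0 and Q4_0 to account for the per-block scale. The `usable_fraction` of 0.7 is an illustrative guess at how much unified memory is available to the model, not a measured value.

```python
# Approximate bits stored per weight, including per-block scales (assumption)
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}


def weights_gb(params_billions, quant):
    """Approximate size of the model weights in gigabytes."""
    return params_billions * BITS_PER_WEIGHT[quant] / 8


def fits(params_billions, quant, memory_gb, usable_fraction=0.7):
    """True if the weights fit in the assumed usable share of memory."""
    return weights_gb(params_billions, quant) <= memory_gb * usable_fraction


for params in (7, 13, 70):
    for quant in BITS_PER_WEIGHT:
        size = weights_gb(params, quant)
        verdict = "fits" if fits(params, quant, memory_gb=32) else "too large"
        print(f"Llama2 {params}B {quant}: ~{size:.1f} GB, {verdict} in 32 GB")
```

Under these assumptions, every 7B variant fits comfortably on a 32 GB machine, while 70B is out of reach there even at Q4_0.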

FAQ: Common Questions about LLMs and Devices

Here are some frequently asked questions:

What are LLMs?

LLMs are neural networks trained on massive datasets of text and code. They can interpret human language, generate coherent text, and perform tasks such as translation, summarization, and creative writing.

Why are LLMs becoming so popular?

LLMs are revolutionizing the way we interact with technology, making it easier to access information, create content, and solve complex problems. They are becoming more efficient, accessible, and powerful.

How can I learn more about LLMs?

There are many resources available online to learn about LLMs. Some excellent starting points include:

- Hugging Face: A community platform for building and sharing machine learning models.

- Google AI Blog: A blog that covers the latest advances in AI research.

- OpenAI: A research company that develops and promotes friendly AI.

What are the ethical considerations of LLMs?

LLMs are powerful tools, but they also raise ethical concerns such as bias, misinformation, and the potential for misuse. It's crucial to understand these issues and develop guidelines for responsible AI development and deployment.

Where can I get a Llama2 7B model?

You can download the Llama2 7B model from Meta AI. It's available under the Llama 2 Community License, which permits both research and commercial use subject to Meta's terms.

Keywords

Apple M1 Max, Llama2 7B, LLM, local LLM, token generation speed, benchmark, quantization, F16, Q8_0, Q4_0, processing speed, generation speed, GPU, performance, use cases, AI, machine learning, chatbots, content creation, code completion, code generation, language translation, personal AI assistants, creative writing, ethical considerations