How Can I Prevent OOM Errors on NVIDIA RTX A6000 48GB When Running Large Models?

Introduction

If you're diving into the world of large language models (LLMs) and you've got your hands on a powerful NVIDIA RTX A6000 with 48GB of dedicated memory, you're ready to unleash some serious AI power. But even with a beast like the RTX A6000, you might still encounter the dreaded "Out of Memory" (OOM) error, especially when working with massive models. In this article, we'll explore common OOM concerns while running LLMs on the RTX A6000, and uncover practical strategies to prevent them, ensuring smooth and efficient model training and inference.

Let's dive in!

Understanding OOM Errors

Imagine your GPU as a warehouse with a fixed number of storage shelves. The shelves are the GPU's memory. Loading an LLM is like stacking boxes on those shelves: the model weights take most of the space, but the activations, the KV cache for the context window and, during training, the gradients and optimizer state all need shelf space too. When the boxes no longer fit, you get the dreaded "Out of Memory" error!

Strategies for Preventing OOM Errors

Here are some techniques to help you avoid OOM errors:

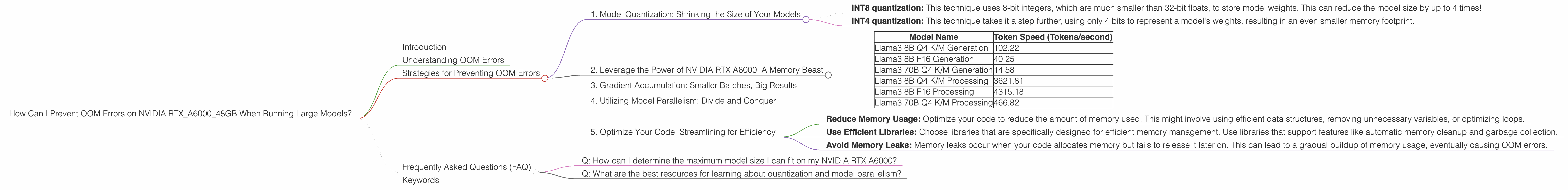

1. Model Quantization: Shrinking the Size of Your Models

Think of quantization as a compression technique for LLMs. Instead of storing every weight as a 32-bit or 16-bit floating-point number (like a high-resolution photo), quantization stores a lower-precision version (like a smaller JPEG). This significantly reduces the memory footprint of your models, usually at the cost of a small loss in output quality that grows as the bit width shrinks.

Popular Quantization Techniques:

- INT8 quantization: This technique stores model weights as 8-bit integers. Compared with 32-bit floats this can reduce the model size by up to 4 times, and by about 2 times compared with the 16-bit weights that most LLMs ship in.

- INT4 quantization: This technique takes it a step further, using only 4 bits to represent a model's weights, resulting in an even smaller memory footprint.
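To make the savings concrete, here is a minimal sketch of the weight-only arithmetic behind these formats. The function name `weight_memory_gb` and the round parameter counts (8 billion and 70 billion) are assumptions for illustration. Real quantized files usually carry a little more than the nominal bits per weight because they also store scaling factors.

```python
# Rough weight-only memory estimate. It ignores activations, the KV cache,
# and framework overhead, so real usage will be higher.

BITS_PER_WEIGHT = {"FP32": 32, "FP16": 16, "INT8": 8, "INT4": 4}


def weight_memory_gb(num_params: float, bits_per_weight: int) -> float:
    """Return the approximate weight size in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


# Illustrative parameter counts (assumed round numbers).
for name, params in [("8B", 8e9), ("70B", 70e9)]:
    for fmt, bits in BITS_PER_WEIGHT.items():
        print(f"{name} {fmt}: {weight_memory_gb(params, bits):.0f} GB")
```

By this estimate a 70B model needs about 140 GB at FP16 but about 35 GB at 4 bits, which is the difference between not fitting and fitting on a 48GB card.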

The FAQ at the end of this article lists resources with more detail on quantization.

2. Leverage the Power of NVIDIA RTX A6000: A Memory Beast

With its 48GB of memory, the RTX A6000 can hold models that simply do not fit on GPUs with less memory. Table 1 shows the token throughput it reaches on Llama3 models at different precisions.

Table 1: Model Performance on NVIDIA RTX A6000 48GB

| Model and Task | Token Speed (tokens/second) |
|---|---|
| Llama3 8B Q4_K_M Generation | 102.22 |
| Llama3 8B F16 Generation | 40.25 |
| Llama3 70B Q4_K_M Generation | 14.58 |
| Llama3 8B Q4_K_M Processing | 3621.81 |
| Llama3 8B F16 Processing | 4315.18 |
| Llama3 70B Q4_K_M Processing | 466.82 |

Important: Data for Llama3 70B F16 Generation and Llama3 70B F16 Processing is missing. This is most likely because a 70B model at 16 bits per weight needs about 140GB for its weights alone, far more than the card's 48GB.

Analysis of Table 1:

- Q4_K_M Quantization: Q4_K_M stores roughly 4 to 5 bits per weight instead of 16, which significantly reduces the memory requirements of both the 8B and 70B Llama3 models. This is what lets the 70B model run on a single RTX A6000 at all.

- F16 Generation: F16 is an unquantized 16-bit format. It is half the size of 32-bit floats but needs more memory than Q4_K_M. Token generation is largely limited by how fast the weights can be read from memory, so the larger F16 weights come with lower generation speed on the 8B model (40.25 vs. 102.22 tokens/second). Prompt processing shows the opposite ordering: F16 is faster there (4315.18 vs. 3621.81 tokens/second).

- Processing vs. Generation: Note the difference in token speeds between "generation" and "processing." "Generation" is the speed at which the model produces new tokens one at a time, whereas "processing" is the speed at which it reads the input prompt. Prompt tokens can be handled in parallel, which is why the processing figures are so much higher.
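The comparisons in this analysis can be reproduced with a few lines of arithmetic. The sketch below copies the 8B and 70B figures from Table 1; the dictionary layout, the short label "Q4" for the 4-bit rows, and the helper name `speed_ratio` are assumptions made for the example.

```python
# Tokens/second copied from Table 1. "Q4" is the 4-bit quantized build.
SPEEDS = {
    ("8B", "Q4", "generation"): 102.22,
    ("8B", "F16", "generation"): 40.25,
    ("70B", "Q4", "generation"): 14.58,
    ("8B", "Q4", "processing"): 3621.81,
    ("8B", "F16", "processing"): 4315.18,
    ("70B", "Q4", "processing"): 466.82,
}


def speed_ratio(numerator: tuple, denominator: tuple) -> float:
    """How many times faster the first configuration is than the second."""
    return SPEEDS[numerator] / SPEEDS[denominator]


gen = speed_ratio(("8B", "Q4", "generation"), ("8B", "F16", "generation"))
proc = speed_ratio(("8B", "Q4", "processing"), ("8B", "F16", "processing"))
print(f"8B generation, Q4 vs F16: {gen:.2f}x")
print(f"8B processing, Q4 vs F16: {proc:.2f}x")
```

On these numbers the 4-bit 8B model generates roughly 2.5 times faster than F16, while processing the prompt somewhat slower.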

3. Gradient Accumulation: Smaller Batches, Big Results

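Even with the RTX A6000, a large training batch might not fit into memory at once. To overcome this, gradient accumulation comes to the rescue. Instead of running one big batch, you split it into smaller micro-batches, run them one after another, accumulate the gradients across them, and update the weights only once at the end. The update matches what the big batch would have produced, while only one micro-batch's activations sit in memory at a time.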

Imagine you have a huge pile of laundry. You can either try to wash it all at once (big batch), risking overflowing the washing machine (OOM error), or you can divide it into smaller loads (smaller batches) and wash them one by one. Gradient accumulation is like washing the laundry in smaller loads, avoiding the overflow!
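The following is a minimal, framework-free sketch of why this works, assuming a toy one-parameter model `y = w * x` with a mean-squared-error loss. The function names and data are made up for illustration. In PyTorch the same idea is usually written by dividing each micro-batch loss by the number of accumulation steps, calling `backward()` on every micro-batch, and calling the optimizer's `step()` once per group.

```python
def grad_mse(w, xs, ys):
    """Gradient of the mean squared error for the 1-D model y = w * x."""
    n = len(xs)
    return sum(2.0 * (w * x - y) * x for x, y in zip(xs, ys)) / n


def accumulated_grad(w, xs, ys, micro_batch_size):
    """Build the same gradient one micro-batch at a time."""
    if len(xs) % micro_batch_size != 0:
        raise ValueError("this sketch assumes equal-sized micro-batches")
    steps = len(xs) // micro_batch_size
    total = 0.0
    for i in range(steps):
        lo, hi = i * micro_batch_size, (i + 1) * micro_batch_size
        # Scale each micro-batch gradient so the sum equals the full-batch mean.
        total += grad_mse(w, xs[lo:hi], ys[lo:hi]) / steps
    return total


xs = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0]
ys = [2.0 * x for x in xs]  # toy data generated with w = 2
w = 0.5

full = grad_mse(w, xs, ys)
accum = accumulated_grad(w, xs, ys, micro_batch_size=2)
print(full, accum)
```

Both values agree, so four micro-batches of two samples give the same weight update as one batch of eight. Note that this technique lowers activation memory only; the weights, gradients, and optimizer state still have to fit.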

4. Utilizing Model Parallelism: Divide and Conquer

Model parallelism is a technique where you distribute different parts of the LLM across multiple GPUs. Think of it as assigning different tasks to multiple workers in a team: each worker holds and runs a specific part of the job, and together they complete a task that would be too big for any one of them.

Example: Distributed Training with Multiple RTX A6000s

Let's say you have two powerful RTX A6000s. You can use model parallelism to split a huge LLM across these two GPUs. One GPU might handle the first half of the model, while the other handles the second half. This allows you to work with much larger models without overloading a single GPU.
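A minimal sketch of the "first half, second half" split is shown below. The helper `partition_layers` is hypothetical, and the figure of 80 transformer layers is an assumption used only as an example size. The code only plans which layers go where; actually moving layers onto devices is left to your framework.

```python
from typing import List


def partition_layers(num_layers: int, num_gpus: int) -> List[range]:
    """Split layer indices into contiguous, near-equal chunks, one per GPU."""
    if num_gpus < 1 or num_layers < num_gpus:
        raise ValueError("need at least one layer per GPU")
    base, extra = divmod(num_layers, num_gpus)
    chunks = []
    start = 0
    for gpu in range(num_gpus):
        # The first `extra` GPUs take one additional layer each.
        size = base + (1 if gpu < extra else 0)
        chunks.append(range(start, start + size))
        start += size
    return chunks


# Assumed example: an 80-layer model split across two GPUs.
plan = partition_layers(80, 2)
for gpu, layers in enumerate(plan):
    print(f"cuda:{gpu} -> layers {layers.start}..{layers.stop - 1}")
```

Contiguous chunks keep communication simple: activations cross from one GPU to the next only once, at the boundary between the two halves. An equal split by layer count is a starting point, since embeddings and output layers can make the real memory use uneven.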

5. Optimize Your Code: Streamlining for Efficiency

Even with the best hardware and techniques, inefficient code can lead to OOM errors. Here are some tips to optimize your code:

Tips for Optimization

- Reduce Memory Usage: Keep only what you need on the GPU. For inference, turn off gradient tracking. For both training and inference, lower the batch size or context length, and drop references to large intermediate results as soon as you are done with them.

- Use Efficient Libraries: Choose libraries that are designed for efficient memory management, and learn how they release memory. Some frameworks cache GPU memory for reuse after your objects are gone, so check what the library actually frees and when.

- Avoid Memory Leaks: Memory leaks occur when your code allocates memory but fails to release it later on. This can lead to a gradual buildup of memory usage, eventually causing OOM errors.
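The leak pattern from the last tip can be shown in plain Python. The sketch below uses the standard-library `tracemalloc` module to compare a loop that keeps every step's result alive with one that keeps only a running summary. The function names and sizes are invented for the example, and it measures CPU memory rather than GPU memory. In PyTorch training loops, a common version of the same mistake is appending loss tensors to a list, which keeps their computation graphs alive; storing `loss.item()` keeps a plain float instead.

```python
import tracemalloc


def peak_bytes(fn) -> int:
    """Run fn() and return the peak Python heap usage it caused, in bytes."""
    tracemalloc.start()
    fn()
    _, peak = tracemalloc.get_traced_memory()
    tracemalloc.stop()
    return peak


def leaky_loop(steps: int = 200) -> int:
    history = []  # keeps every step's buffer alive
    for _ in range(steps):
        batch_output = [0.0] * 10_000  # stand-in for a large per-step result
        history.append(batch_output)
    return len(history)


def lean_loop(steps: int = 200) -> float:
    running_total = 0.0  # keeps only a scalar summary
    for _ in range(steps):
        batch_output = [0.0] * 10_000
        running_total += sum(batch_output)
    return running_total


print("leaky peak bytes:", peak_bytes(leaky_loop))
print("lean peak bytes: ", peak_bytes(lean_loop))
```

The leaky version's peak grows with the number of steps, while the lean version stays flat, which is the signature to look for when memory use creeps up over a long run.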

Frequently Asked Questions (FAQ)

Q: How can I determine the maximum model size I can fit on my NVIDIA RTX A6000?

A: The maximum model size depends on the model's architecture, quantization level, and the presence of other processes running on your system. You can experiment with different models and settings to determine the maximum size you can comfortably fit.
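As a starting point for that experimentation, here is a rough back-of-the-envelope check. It is a sketch under stated assumptions: the model shapes (layer count, number of key/value heads, head size), the 8192-token context, and the 10% headroom reserved for overhead are all illustrative values, and the helper names `kv_cache_gb` and `fits` are made up for this example.

```python
def kv_cache_gb(num_layers, num_kv_heads, head_dim, context_tokens,
                bytes_per_value=2):
    """Approximate KV cache size: one key and one value vector
    per layer, per KV head, per token."""
    total_bytes = (2 * num_layers * num_kv_heads * head_dim
                   * context_tokens * bytes_per_value)
    return total_bytes / 1e9


def fits(weights_gb, kv_gb, budget_gb=48.0, headroom=0.10):
    """True if weights plus KV cache fit after reserving some headroom."""
    return weights_gb + kv_gb <= budget_gb * (1.0 - headroom)


# Assumed shapes for an 8B-class and a 70B-class model.
kv_small = kv_cache_gb(num_layers=32, num_kv_heads=8, head_dim=128,
                       context_tokens=8192)
kv_large = kv_cache_gb(num_layers=80, num_kv_heads=8, head_dim=128,
                       context_tokens=8192)

print("8B at 16-bit: ", fits(weights_gb=16.0, kv_gb=kv_small))
print("70B at 16-bit:", fits(weights_gb=140.0, kv_gb=kv_large))
print("70B at 4-bit: ", fits(weights_gb=35.0, kv_gb=kv_large))
```

Treat a passing check as "worth trying" rather than a guarantee, since real quantized files are somewhat larger than the nominal 4 bits per weight and other processes may be using the GPU.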

Q: What are the best resources for learning about quantization and model parallelism?

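A: For deep dives into quantization, check out the TensorFlow quantization documentation and the PyTorch Quantization documentation.

For exploring model parallelism, we recommend the TensorFlow Model Parallelism documentation. The PyTorch Distributed Data Parallel (DDP) documentation is useful background on multi-GPU training, but note that DDP keeps a full copy of the model on every GPU, so on its own it does not reduce the memory each GPU needs for the weights.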
