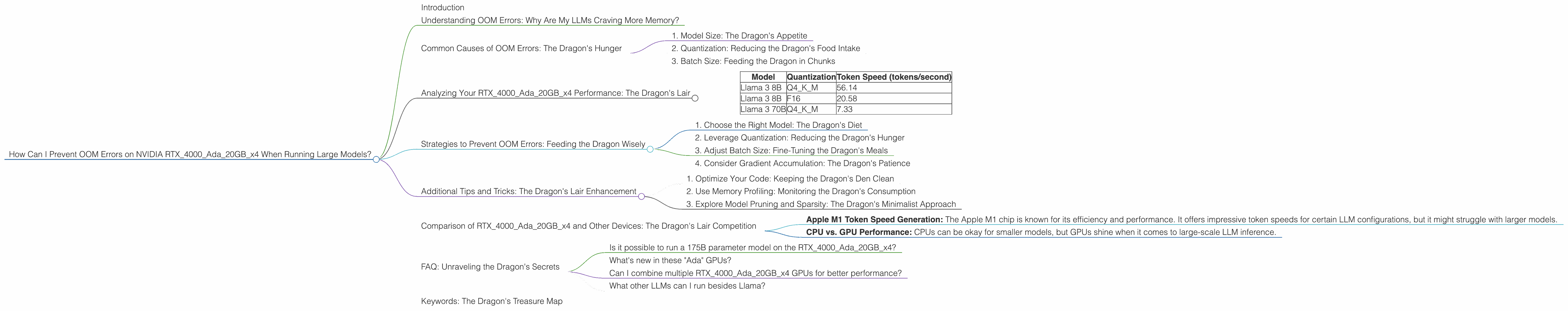

How Can I Prevent OOM Errors on NVIDIA RTX 4000 Ada 20GB x4 When Running Large Models?

Introduction

You've got your hands on a powerful NVIDIA RTX 4000 Ada 20GB x4 setup (four cards with 20 GB of VRAM each), ready to unleash the power of large language models (LLMs). But wait! You're encountering the dreaded "Out of Memory" (OOM) errors. What gives?

Fear not, fellow LLM enthusiast! This article dives into LLM memory and performance optimization. We'll explore the common causes of OOM errors on the RTX 4000 Ada 20GB x4 and equip you with strategies to prevent them.

Understanding OOM Errors: Why Are My LLMs Craving More Memory?

Imagine your LLM as a hungry dragon, craving knowledge and eager to process vast text datasets. Your GPU is its den, a haven of processing power.

But just like dragons need their hoard, your LLM needs memory to store all of its knowledge and perform calculations. The problem arises when the LLM's appetite for memory exceeds the capacity of your GPU.

Think of it like trying to fit a giant dragon in a tiny cave – you're going to have some serious space issues! This is precisely what happens with OOM errors.

Common Causes of OOM Errors: The Dragon's Hunger

There are a few key factors that can contribute to OOM errors when running LLMs:

1. Model Size: The Dragon's Appetite

LLMs come in various sizes, from modest 7B parameter models to gigantic behemoths with 175B parameters. The larger the model, the more memory it requires to store its parameters and perform calculations.
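To put rough numbers on the dragon's appetite, here is a minimal sketch that estimates the memory needed for the weights alone. The function name `weight_memory_gib` is made up for this illustration, and the estimate ignores activations, the KV cache, and framework overhead, so treat it as a lower bound.

```python
def weight_memory_gib(n_params: float, bits_per_weight: float) -> float:
    """Approximate memory needed just to hold the weights, in GiB."""
    total_bytes = n_params * bits_per_weight / 8
    return total_bytes / 1024**3


# Parameter counts are rounded nominal sizes, not exact figures for any model.
for name, n_params in [("8B", 8e9), ("70B", 70e9), ("175B", 175e9)]:
    gib = weight_memory_gib(n_params, 16)
    print(f"{name}: ~{gib:.1f} GiB at 16 bits per weight")
```

Comparing these figures with the 80 GB available across four 20 GB cards shows quickly which models need a lower-precision format before they can be loaded at all.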

2. Quantization: Reducing the Dragon's Food Intake

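Precision determines how many bits each parameter occupies. Quantization is like putting your LLM on a diet: it stores the model's parameters at lower precision (for example, roughly 4 to 5 bits instead of 16), which shrinks the memory footprint at the cost of a small loss in accuracy.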

Think of it like recording each piece of your dragon's treasure with a rougher estimate of its worth. The ledger takes far less space, and the total comes out close to the original, though not exactly the same.
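The idea can be sketched with a toy example. This is a simplified symmetric "absmax" scheme applied to one small block of values, not the actual Q4_K_M algorithm; the helper names and the sample weights are made up for illustration.

```python
def quantize_block(values, bits=4):
    """Symmetric absmax quantization of one block of floats to signed integers."""
    qmax = 2 ** (bits - 1) - 1              # 7 for 4-bit codes
    absmax = max(abs(v) for v in values)
    scale = absmax / qmax if absmax > 0 else 1.0
    codes = [round(v / scale) for v in values]
    return codes, scale


def dequantize_block(codes, scale):
    """Recover approximate floats from the integer codes and the shared scale."""
    return [c * scale for c in codes]


# Made-up weights standing in for one block of a real tensor.
weights = [0.12, -0.50, 0.33, 0.07, -0.21, 0.44, -0.02, 0.29]
codes, scale = quantize_block(weights)
restored = dequantize_block(codes, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print("codes:", codes)
print("largest round-trip error:", max_error)
```

Each weight is now a small integer plus one shared scale per block, which is where the memory saving comes from. The round-trip error is the price paid for it.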

3. Batch Size: Feeding the Dragon in Chunks

Batch size determines how much data you feed the LLM at once. A larger batch size can be more efficient, but it also requires more memory.

Imagine your dragon is learning to breathe fire. You can either teach it one small burst at a time (small batch), or try to teach it a whole firestorm (large batch). The large batch might be more efficient in the long run, but it requires more resources.
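During inference, much of the per-batch memory goes to the key/value (KV) cache, which grows linearly with both batch size and context length. The sketch below estimates it under assumed model dimensions (32 layers, 8 KV heads, head dimension 128, which matches a Llama-3-8B-like shape) and 16-bit cache values; `kv_cache_bytes` is a hypothetical helper, and real runtimes may allocate differently.

```python
def kv_cache_bytes(batch_size, context_len, n_layers, n_kv_heads, head_dim,
                   bytes_per_value=2):
    """Estimate KV cache size: keys and values for every layer, head, and token."""
    per_token = 2 * n_layers * n_kv_heads * head_dim * bytes_per_value
    return per_token * context_len * batch_size


# Assumed shape and context length; adjust to the model you actually run.
for batch in (1, 4, 16):
    gib = kv_cache_bytes(batch, 8192, 32, 8, 128) / 1024**3
    print(f"batch={batch}: ~{gib:.1f} GiB of KV cache")
```

Because the growth is linear, halving the batch size or the context length halves this part of the memory bill.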

Analyzing Your RTX 4000 Ada 20GB x4 Performance: The Dragon's Lair

Now, let's delve into the specifics of the NVIDIA RTX 4000 Ada 20GB x4 and analyze its performance with different LLM configurations.

Note: We'll focus on the models and configurations listed in Table 1. Other devices and model variations are left out for clarity.

Table 1: RTX 4000 Ada 20GB x4 Performance with Llama Models

| Model | Precision / Quantization | Token Speed (tokens/second) |
|---|---|---|
| Llama 3 8B | Q4_K_M | 56.14 |
| Llama 3 8B | F16 | 20.58 |
| Llama 3 70B | Q4_K_M | 7.33 |

Observations:

- The RTX 4000 Ada 20GB x4 delivers its best results with the Llama 3 8B model: 56.14 tokens/second with Q4_K_M quantization and 20.58 tokens/second at unquantized F16 (16-bit floating point).

- Throughput drops to 7.33 tokens/second with the larger Llama 3 70B model. This is likely because far more weight data must be read for every generated token, and because the model is too large for any single 20 GB card and has to be split across all four GPUs, leaving little memory headroom.

Strategies to Prevent OOM Errors: Feeding the Dragon Wisely

We've identified the causes and analyzed the performance. Now, it's time for the real magic – strategies to tame your LLM's appetite and prevent those OOM errors.

1. Choose the Right Model: The Dragon's Diet

Start by selecting a model that suits your computational resources and needs. Smaller models like Llama 3 8B are a good starting point for the RTX 4000 Ada 20GB x4.

2. Leverage Quantization: Reducing the Dragon's Hunger

Quantization is a powerful tool for reducing memory footprint. Q4_K_M is a good option for the RTX 4000 Ada 20GB x4: in Table 1 it runs Llama 3 8B about 2.7 times faster than F16, and it is the format used for the Llama 3 70B result.
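One way to reason about the choice is to pick the highest-precision format whose weights still fit. The sketch below does that under several loud assumptions: the bits-per-weight figures are approximations (the Q4_K_M value in particular), the 20% reserve for KV cache and overhead is an arbitrary placeholder, and the weights are assumed to split cleanly across all four cards. `pick_format` is a hypothetical helper, not part of any library.

```python
# Approximate bits per weight, ordered from highest to lowest precision.
# These values are assumptions for illustration, not exact format specs.
FORMATS = [("F16", 16.0), ("Q8_0", 8.5), ("Q4_K_M", 4.85)]


def pick_format(n_params, vram_gib, reserve_fraction=0.2):
    """Return the highest-precision format whose weights fit the usable budget."""
    usable_bytes = vram_gib * 1024**3 * (1 - reserve_fraction)
    for name, bits in FORMATS:
        if n_params * bits / 8 <= usable_bytes:
            return name
    return None  # nothing in the list fits


total_vram_gib = 4 * 20  # four 20 GB cards
for label, n_params in [("8B", 8e9), ("70B", 70e9), ("175B", 175e9)]:
    print(label, "->", pick_format(n_params, total_vram_gib))
```

Under these assumptions an 8B model has room for F16, a 70B model lands on the 4-bit format, and a 175B model fits none of the listed formats.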

3. Adjust Batch Size: Fine-Tuning the Dragon's Meals

Experiment with different batch sizes to find the sweet spot for your configuration. A smaller batch size may help prevent OOM errors, especially with larger models.
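Finding that sweet spot can be automated by starting large and halving the batch size whenever memory runs out. The sketch below uses Python's built-in `MemoryError` and a fake workload so it runs anywhere; with PyTorch the exception to catch would typically be `torch.cuda.OutOfMemoryError`. Both `run_with_backoff` and `fake_run_batch` are hypothetical names.

```python
def run_with_backoff(run_batch, items, start_batch_size):
    """Process items in batches, halving the batch size whenever memory runs out."""
    batch_size = start_batch_size
    results, i = [], 0
    while i < len(items):
        batch = items[i:i + batch_size]
        try:
            results.extend(run_batch(batch))
            i += len(batch)
        except MemoryError:
            if batch_size == 1:
                raise  # even a single item does not fit
            batch_size //= 2  # retry the same position with a smaller batch
    return results, batch_size


# Stand-in workload: pretend that more than 4 items at once exhausts memory.
def fake_run_batch(batch):
    if len(batch) > 4:
        raise MemoryError("simulated OOM")
    return [x * 2 for x in batch]


outputs, final_size = run_with_backoff(fake_run_batch, list(range(10)),
                                       start_batch_size=16)
print("batch size that worked:", final_size)
```

The batch size that survives is a reasonable starting point, though it is worth leaving some headroom below it for longer inputs.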

4. Consider Gradient Accumulation: The Dragon's Patience

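Gradient accumulation applies when you are training or fine-tuning a model rather than running inference. Instead of processing one large batch at once, you process several smaller micro-batches, sum their gradients, and update the weights once at the end. Peak memory is set by the micro-batch size while the effective batch size stays large, at the cost of more forward and backward passes per update.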
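To see why this works, here is a framework-free sketch on a toy one-parameter model (`y ≈ w * x`) with made-up data. It checks that gradients accumulated over equal-sized micro-batches match the gradient of the full batch. A real training loop would do the same thing with its framework's backward pass and optimizer step.

```python
def grad_mse(w, xs, ys):
    """Gradient of mean squared error for y ≈ w * x, averaged over the batch."""
    return sum(2 * (w * x - y) * x for x, y in zip(xs, ys)) / len(xs)


# Made-up data for illustration only.
xs = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0]
ys = [2.1, 3.9, 6.2, 8.1, 9.8, 12.2, 13.9, 16.1]
w, micro = 0.5, 2

full_grad = grad_mse(w, xs, ys)  # needs the whole batch in memory at once

accumulated = 0.0
n_micro = len(xs) // micro
for k in range(n_micro):
    chunk_x = xs[k * micro:(k + 1) * micro]
    chunk_y = ys[k * micro:(k + 1) * micro]
    # Scale each micro-batch gradient so the sum equals the full-batch mean.
    accumulated += grad_mse(w, chunk_x, chunk_y) / n_micro

print("gradients match:", abs(full_grad - accumulated) < 1e-9)
```

Only one micro-batch has to be resident at a time, which is why the technique eases memory pressure without changing the update.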

Additional Tips and Tricks: The Dragon's Lair Enhancement

1. Optimize Your Code: Keeping the Dragon's Den Clean

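Efficiently written code can significantly reduce memory usage. In PyTorch, for example, that means disabling gradient tracking during inference, releasing tensors you no longer need, and avoiding unnecessary copies of the model or its inputs.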

2. Use Memory Profiling: Monitoring the Dragon's Consumption

Tools like "nvidia-smi" and PyTorch's memory profiler can be helpful in identifying memory leaks and optimizing your code.
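As a starting point, per-GPU memory can be read from `nvidia-smi` in CSV form and parsed in a few lines. The sketch below separates parsing from the actual query so the parser can be tried without a GPU; the function names are hypothetical, and the sample text is illustrative rather than a real measurement.

```python
import subprocess

QUERY = ["nvidia-smi", "--query-gpu=index,memory.used,memory.total",
         "--format=csv,noheader,nounits"]


def parse_gpu_memory(csv_text):
    """Turn 'index, used, total' lines (values in MiB) into a list of dicts."""
    rows = []
    for line in csv_text.strip().splitlines():
        index, used, total = (int(field.strip()) for field in line.split(","))
        rows.append({"gpu": index, "used_mib": used, "total_mib": total,
                     "free_mib": total - used})
    return rows


def read_gpu_memory():
    """Query the local driver; requires nvidia-smi to be on the PATH."""
    out = subprocess.run(QUERY, capture_output=True, text=True, check=True).stdout
    return parse_gpu_memory(out)


# Illustrative sample text, not a real measurement.
sample = "0, 18250, 20475\n1, 17980, 20475\n"
for row in parse_gpu_memory(sample):
    print(row)
```

Calling `read_gpu_memory()` before and after loading a model gives a quick view of how much each card is carrying and how much headroom is left.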

3. Explore Model Pruning and Sparsity: The Dragon's Minimalist Approach

Advanced techniques like model pruning and sparsity can further reduce the memory footprint of your LLM, making it a lighter and more efficient dragon!

Comparison of RTX 4000 Ada 20GB x4 and Other Devices: The Dragon's Lair Competition

While this guide focuses on the RTX 4000 Ada 20GB x4, let's briefly touch upon its performance compared to other popular devices:

- Apple M1: The Apple M1 chip is known for its efficiency and performance. It offers impressive token speeds for certain LLM configurations, but it might struggle with larger models.

- CPU vs. GPU Performance: CPUs can be okay for smaller models, but GPUs shine when it comes to large-scale LLM inference.

FAQ: Unraveling the Dragon's Secrets

Is it possible to run a 175B parameter model on the RTX 4000 Ada 20GB x4?

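While not impossible, running a 175B parameter model on the RTX 4000 Ada 20GB x4 with high performance is unlikely. Even at 4 bits per weight, 175B parameters need about 87.5 GB for the weights alone, which already exceeds the 80 GB available across the four cards. You would need more aggressive quantization or to offload part of the model to system RAM, and both cost speed or accuracy.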

What's new in these "Ada" GPUs?

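The "Ada" (Ada Lovelace) GPUs from NVIDIA bring improvements in efficiency and throughput over earlier generations. Tensor Cores, which accelerate matrix math operations, are not new to Ada, since NVIDIA has shipped them since the Volta generation. Ada includes the fourth generation of Tensor Cores, which adds support for FP8.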

Can I combine multiple RTX 4000 Ada 20GB GPUs for better performance?

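Yes. The x4 configuration discussed in this guide already does this: four 20 GB cards provide 80 GB of VRAM in total. The memory is not one unified pool, though. The model is split across the cards, so each card must hold its own share of the weights and cache.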
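A common way to split a model is to give each GPU a contiguous range of layers. The sketch below shows only the bookkeeping, assuming an 80-layer model (a Llama-3-70B-like depth) and equal-sized layers; `split_layers` is a hypothetical helper, and real runtimes use their own placement options.

```python
def split_layers(n_layers, n_gpus):
    """Assign contiguous layer ranges to GPUs as evenly as possible."""
    base, extra = divmod(n_layers, n_gpus)
    plan, start = [], 0
    for gpu in range(n_gpus):
        count = base + (1 if gpu < extra else 0)  # early GPUs absorb the remainder
        plan.append((gpu, start, start + count))  # half-open range [start, end)
        start += count
    return plan


# Assumed depth of 80 layers split over the four cards.
for gpu, first, end in split_layers(80, 4):
    print(f"GPU {gpu}: layers {first}..{end - 1}")
```

With an even split each card carries a quarter of the layers, so the per-card memory check is the total weight size divided by four, plus that card's share of the cache.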

What other LLMs can I run besides Llama?

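The strategies discussed in this guide apply to a wide range of LLMs, not just Llama. Keep in mind that you can only run a model locally if its weights are available to download, which is not the case for models such as GPT-3 and PaLM. Be sure to consider the specific memory requirements of each model.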

Keywords: The Dragon's Treasure Map

Large Language Model, LLM, NVIDIA, RTX 4000 Ada 20GB x4, OOM, Out of Memory, Memory Optimization, Quantization, Batch Size, Token Speed, Performance, GPU, Model Pruning, Sparsity, Gradient Accumulation, Memory Profiling, Apple M1, CPU, Inference, Llama, GPT-3, PaLM.